
Spot 30 critical red flags hiring teams miss in remote interviews, including AI cheating, proxy candidates, and interview fraud, and learn how to protect interview integrity.

Abhishek Kaushik

Mar 5, 2026

Remote hiring has transformed recruitment, but it has also opened the door to new forms of interview fraud and AI-assisted cheating that traditional evaluation methods struggle to detect.

Surveys of hiring professionals report that 59% suspect candidates misrepresent themselves using AI tools or fraudulent tactics in the hiring process, and about 17% have directly encountered deepfake or proxy-assisted interviews.

Companies are also reporting broader patterns of deception. In some studies, up to 72% of recruiters have encountered AI-related job fraud, including fake credentials, fabricated work samples, and even deepfake interview scenarios.

These trends highlight a stark reality: interviews are being gamed at scale, and human interviewers alone often can’t spot when polished answers hide external assistance or even identity fraud.

This blog explores the 30 most commonly missed red flags in remote interviews and why hiring teams need to rethink how interview integrity is evaluated today.

30 Interview Red Flags Hiring Teams Miss

Remote interviews unlocked global hiring at scale, but they also quietly broke many of the trust assumptions interviews were built on.

Below are 30 critical red flags in remote interviews that signal potential AI assistance, impersonation, or external help, and why hiring teams frequently overlook them.

1. Delayed Responses Followed by Unnaturally Perfect Answers

In remote interviews, response timing matters as much as content.

Why this is a red flag

Suggests off-screen prompting or AI generation

Breaks natural thinking rhythm

Commonly missed because: Interviewers assume nerves or internet lag.

👉 Top Behavioral Signs of Cheating During Remote Interviews

2. Eyes Frequently Shifting Away From the Screen

Subtle but recurring eye movement patterns matter.

Why this is a red flag

Indicates reading from another device or prompt

Often aligns with complex questions

Commonly missed because: Interviewers normalize multitasking in remote calls.

3. Answers That Sound More “Written” Than Spoken

Spoken language has imperfections. Generated responses don’t.

Why this is a red flag

AI-generated responses often have:

Over-structured phrasing

Perfect grammar

Balanced, essay-like flow

Commonly missed because: Polished communication is mistaken for competence.

4. Sudden Spikes in Answer Quality

Not all answers are created equal, but dramatic swings are telling.

Why this is a red flag

Suggests selective external help

Indicates lack of consistent skill ownership

Commonly missed because: Interviewers focus on the best answers, not variance.
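One way to look at variance rather than only the best answers is to score each answer and check the spread. The sketch below is a minimal illustration, assuming a hypothetical 1 to 5 per-question score and an arbitrary threshold (`max_range`); neither comes from any particular tool, and a flagged interview is a prompt for follow-up questions, not proof of cheating.

```python
from statistics import mean, pstdev


def score_spread(scores):
    """Return (mean, population std dev, max-min range) for per-question scores."""
    if not scores:
        raise ValueError("scores must not be empty")
    return mean(scores), pstdev(scores), max(scores) - min(scores)


def has_quality_spike(scores, max_range=2):
    """Flag an interview when the gap between the best and worst answer
    exceeds max_range (a hypothetical threshold on an assumed 1-5 scale)."""
    _, _, spread = score_spread(scores)
    return spread > max_range


steady = [3, 4, 3, 4, 3]   # range of 1: consistent performance
spiky = [2, 5, 2, 5, 1]    # range of 4: dramatic swings

print(has_quality_spike(steady))  # False
print(has_quality_spike(spiky))   # True
```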

5. Inability to Rephrase or Simplify an Answer

True understanding survives paraphrasing.

Why this is a red flag

Scripted or AI answers fail when reframed

Indicates memorization, not comprehension

Commonly missed because: Interviewers move on after “good enough” responses.

👉 How to Detect Cheating in a Gmeet Interview

6. Overuse of Generic Frameworks Without Context

Frameworks are useful until they replace thinking.

Why this is a red flag

AI tools default to popular models

Lack of real-world anchoring

Commonly missed because: Framework familiarity is rewarded in interviews.

7. Strong Theoretical Knowledge, Weak Practical Depth

Theory is easier to fake than experience.

Why this is a red flag

AI excels at explaining concepts

Struggles with messy, real-world edge cases

Commonly missed because: Interviewers don’t probe operational details.

8. Inconsistent Voice, Tone, or Vocabulary

Language consistency is a powerful integrity signal.

Why this is a red flag

Switching between personal speech and generated language

Sudden shifts in sophistication

Commonly missed because: Remote interviews reduce sensitivity to delivery changes.

9. Candidate Avoids Screen Sharing or Live Tasks

Resistance to live problem-solving is revealing.

Why this is a red flag

Live tasks reduce the ability to use AI assistance

Proxy candidates often avoid exposure

Commonly missed because: Interviewers don’t want to appear “distrustful”.

10. Perfect Answers to Ambiguous Questions

Ambiguity usually produces hesitation.

Why this is a red flag

AI tends to resolve ambiguity confidently

Humans ask clarifying questions

Commonly missed because: Confidence is mistaken for decisiveness.

11. No Visible Thinking Process

Remote interviews hide cognitive effort.

Why this is a red flag

AI provides outputs, not reasoning trails

Real problem-solving shows pauses and corrections

Commonly missed because: Interviewers focus on final answers only.

12. Candidate Performs Better Than Their Resume Suggests

Too good can also be suspicious.

Why this is a red flag

Proxy interviews inflate perceived skill

Resume becomes irrelevant

Commonly missed because: Teams celebrate “hidden gems”.

13. Candidate Performs Worse in Later Rounds

Integrity breaks under continuity.

Why this is a red flag

Different person interviewing

Loss of external support

Commonly missed because: Attributed to fatigue or pressure.

14. Inability to Explain Past Work Without Generalities

AI can summarize experience, not relive it.

Why this is a red flag

Lack of emotional or contextual detail

No “war stories”

Commonly missed because: Interviewers don’t dig into specifics.

15. Answers That Don’t Match the Question’s Constraints

Constraint handling reveals real thinking.

Why this is a red flag

AI often ignores subtle constraints

External helpers may not hear the full question

Commonly missed because: Interviewers don’t enforce constraints strictly.

16. Minimal Clarifying Questions

Curiosity is a human signal.

Why this is a red flag

AI assumes and proceeds

Humans clarify uncertainty

Commonly missed because: Quick answers feel efficient.

17. Mismatch Between Test / Assignment and Interview Discussion

This gap is one of the strongest fraud indicators.

Why this is a red flag

Work may not be their own

AI-generated or outsourced assignments

Commonly missed because: Teams treat assessments and interviews separately.

18. Repeating Common Online Examples Verbatim

AI and coaching reuse the same references.

Why this is a red flag

Identical examples across candidates

No personalization

Commonly missed because: Examples sound “industry standard”.

19. Candidate Rarely Admits Uncertainty

Humans don’t know everything.

Why this is a red flag

AI fills gaps confidently

External helpers smooth over doubt

Commonly missed because: Interviewers reward certainty.

20. Behavioral Answers That Don’t Align With Technical Depth

Cross-signal mismatches matter.

Why this is a red flag

Strong storytelling, weak execution

Polished narratives hiding gaps

Commonly missed because: Behavioral rounds feel safer.

21. Unnatural Pauses Only During Technical Questions

Pattern matters more than isolated moments.

Why this is a red flag

External help triggered selectively

Integrity varies by question type

Commonly missed because: Interviewers don’t track response timing.
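Tracking response timing does not need to be elaborate. As a rough sketch, assuming an interviewer (or a note-taking tool) logs a hypothetical `(question_type, seconds_before_answering)` pair for each question, the pause pattern can be compared across question types. Technical questions naturally take longer to answer, so a high ratio is only a cue to probe further.

```python
from collections import defaultdict
from statistics import mean


def mean_latency_by_type(responses):
    """responses: iterable of (question_type, seconds_before_answering) pairs."""
    grouped = defaultdict(list)
    for question_type, seconds in responses:
        grouped[question_type].append(seconds)
    return {qtype: mean(values) for qtype, values in grouped.items()}


def latency_ratio(responses, target="technical", baseline="behavioral"):
    """How many times longer the candidate pauses on target questions
    than on baseline questions. The category names are assumptions."""
    averages = mean_latency_by_type(responses)
    return averages[target] / averages[baseline]


log = [
    ("behavioral", 2.0),
    ("technical", 9.0),
    ("behavioral", 3.0),
    ("technical", 11.0),
]

print(latency_ratio(log))  # 4.0
```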

22. Candidate Avoids Camera-On Policies or Identity Checks

Identity continuity is foundational.

Why this is a red flag

Enables proxy interviewing

Weakens accountability

Commonly missed because: Fear of harming candidate experience.

23. Answers Mirror Job Description Language Too Closely

AI loves job descriptions.

Why this is a red flag

Low originality

Repackaged text, not experience

Commonly missed because: Alignment is rewarded.

24. Inability to Debug or Critique Their Own Answer

Reflection reveals ownership.

Why this is a red flag

AI-generated answers resist critique

Ownership requires judgment

Commonly missed because: Interviewers rarely revisit answers.

25. Overly Structured STAR Responses Across All Questions

Consistency can be suspicious.

Why this is a red flag

Suggests coaching or scripts

Lacks natural variation

Commonly missed because: Interviewers are trained to expect STAR.

26. Candidate Performs Exceptionally Well in First Round Only

First impressions can be engineered.

Why this is a red flag

Initial round heavily assisted

Later rounds reveal baseline ability

Commonly missed because: Early momentum bias.

27. No Personal Opinions or Trade-Offs

Real work involves judgment.

Why this is a red flag

AI stays neutral

Humans take positions

Commonly missed because: Neutrality feels professional.

28. Difficulty Thinking Under Time Pressure

Time pressure reduces external assistance.

Why this is a red flag

Performance drops sharply

Hesitation increases

Commonly missed because: Interviewers avoid stressing candidates.

29. Multiple Small Inconsistencies Across the Interview

Fraud rarely fails once; it leaks.

Why this is a red flag

Integrity issues show in fragments

Patterns matter more than any single signal

Commonly missed because: Each inconsistency is dismissed in isolation.

30. Interview “Feels Too Smooth”

The most dangerous red flag is comfort.

Why this is a red flag

High-risk interviews often feel flawless

Friction exposes truth

Commonly missed because: Teams equate smoothness with quality.

Remote interviews didn’t just change where we hire; they changed what needs to be evaluated.

High-integrity hiring requires:

Tracking consistency across questions and rounds

Evaluating response patterns, not just responses

Designing interviews that reveal independent thinking
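Consistency across rounds can be checked with very little tooling. The sketch below assumes each round produces a hypothetical average score on a shared scale and flags consecutive rounds where that score falls sharply; the round names and the `min_drop` threshold are illustrative assumptions, not a recommended standard.

```python
def round_drops(round_scores, min_drop=1.5):
    """round_scores: list of (round_name, average_score) in interview order.

    Returns (earlier_round, later_round, drop) for each pair of consecutive
    rounds where the score fell by at least min_drop (a hypothetical threshold).
    """
    drops = []
    for (prev_name, prev_score), (next_name, next_score) in zip(
        round_scores, round_scores[1:]
    ):
        drop = prev_score - next_score
        if drop >= min_drop:
            drops.append((prev_name, next_name, drop))
    return drops


rounds = [("screen", 4.5), ("technical", 2.5), ("final", 2.5)]

print(round_drops(rounds))  # [('screen', 'technical', 2.0)]
```

A sharp drop after the first round matches the pattern described in red flags 13 and 26, though fatigue or harder questions can produce the same shape, so the output is best treated as a reason to compare notes across interviewers.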

The future of remote hiring is about designing interviews where fraud has nowhere to hide.

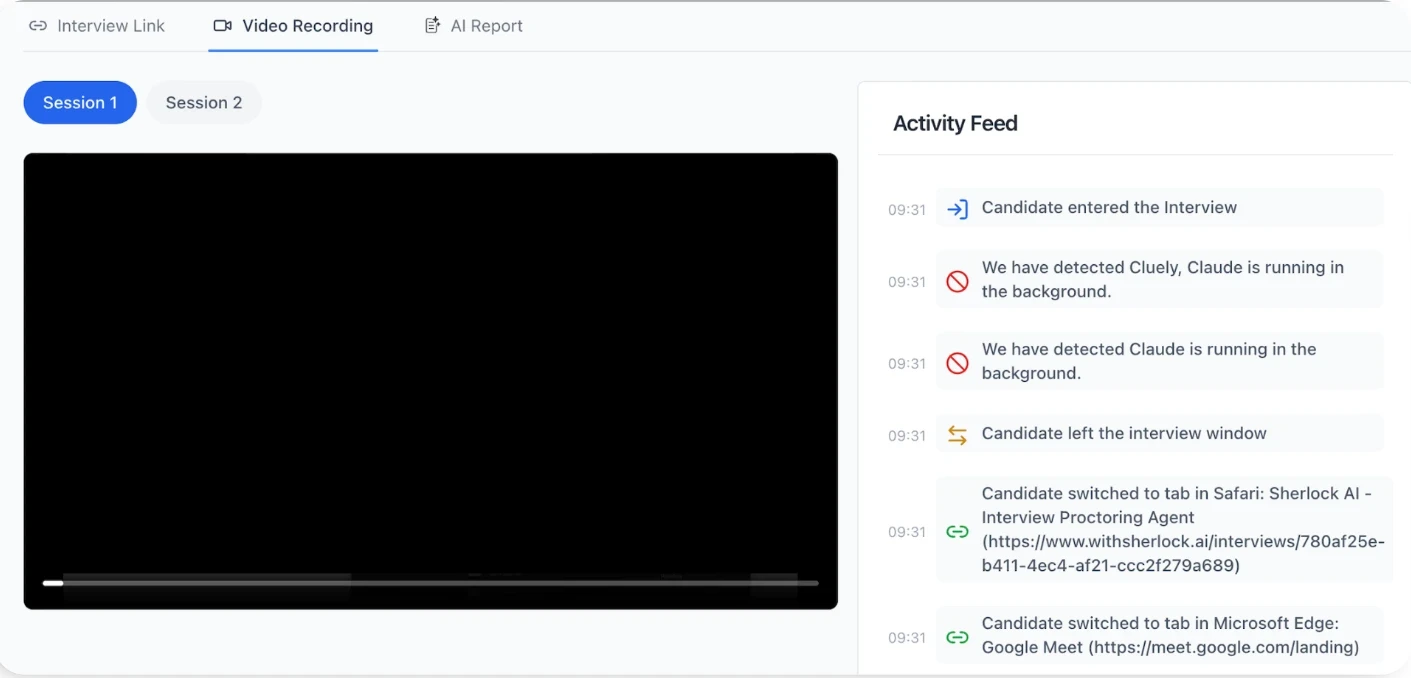

Sherlock AI: How It Secures Remote Interviews

Sherlock AI is purpose-built to help hiring teams detect fraud, AI-assisted cheating, and integrity gaps in remote interviews without disrupting the candidate experience.

Key features:

AI Fraud Detection – Identifies AI-assisted or scripted responses and sophisticated cheating behavior in live or recorded remote interviews.

Multimodal Integrity Monitoring – Uses device activity, audio cues, and behavioral patterns together to flag unusual interaction patterns beyond simple webcam proctoring.

Real-Time Alerts – Provides interviewers with live alerts on suspicious signals so they can probe further without losing focus on core evaluation.

AI Fluency Observation – When AI tools are permitted, Sherlock assesses how effectively candidates use them instead of just blocking usage.

Automatic Notes & Insights – Captures detailed interview notes and performance insights, helping teams maintain consistent evaluation records.

Cross-Round Detection – Flags inconsistencies across multiple interview rounds, helping uncover proxy candidates or fluctuating integrity signals.

Detects Multiple Cheating Tools – Capable of spotting usage of sophisticated external aids (e.g., AI interview assistants) that would otherwise go unnoticed.

Seamless Integration – Works with popular video platforms and calendars, automatically joining interview meetings to protect integrity with minimal setup.

Sherlock AI turns subtle remote-interview anomalies into actionable integrity insights, helping you focus on candidate ability, not presentation polish.

Conclusion

Remote interviews have made hiring faster and cheating easier. Most interview fraud today doesn’t look suspicious; it looks polished, prepared, and confident.

The red flags discussed in this blog show why relying only on behavioral judgment and gut instinct is no longer enough. Hiring teams need to evaluate consistency, authenticity, and independent thinking, not just good answers.

When interview integrity is built into the process and supported by tools like Sherlock AI, remote interviews become harder to game and easier to trust.