
Discover how AI-assisted interview cheating is different from traditional cheating, the risks it poses to hiring, and how Sherlock AI helps recruiters detect fraud, verify candidates, and protect hiring integrity.

Abhishek Kaushik

Feb 9, 2026

Cheating in job interviews is nothing new. For decades, candidates have exaggerated resumes, made up references, or faked skills to get a job. But the methods and scale of cheating are changing. Today, recruiters face not only traditional dishonesty but also new forms of fraud that use technology to deceive hiring teams.

Recent data shows how widespread cheating has become. A large survey found that 7 in 10 job seekers admit to lying or cheating during the hiring process, including assessments and interviews.

Industry analysis and hiring manager reports also highlight growing concern about technology-assisted cheating. In surveys of hiring leaders, 59% suspected candidates of misrepresenting themselves using AI tools, and nearly one in three had actually encountered a candidate using a false identity or proxy in an interview. Fraudulent hires can cost companies thousands of dollars in lost productivity and repeated hiring cycles.

In this blog, we will compare AI-related interview cheating with traditional cheating. We’ll explain what each looks like and how companies can protect their hiring processes going forward.

What Traditional Interview Cheating Looks Like

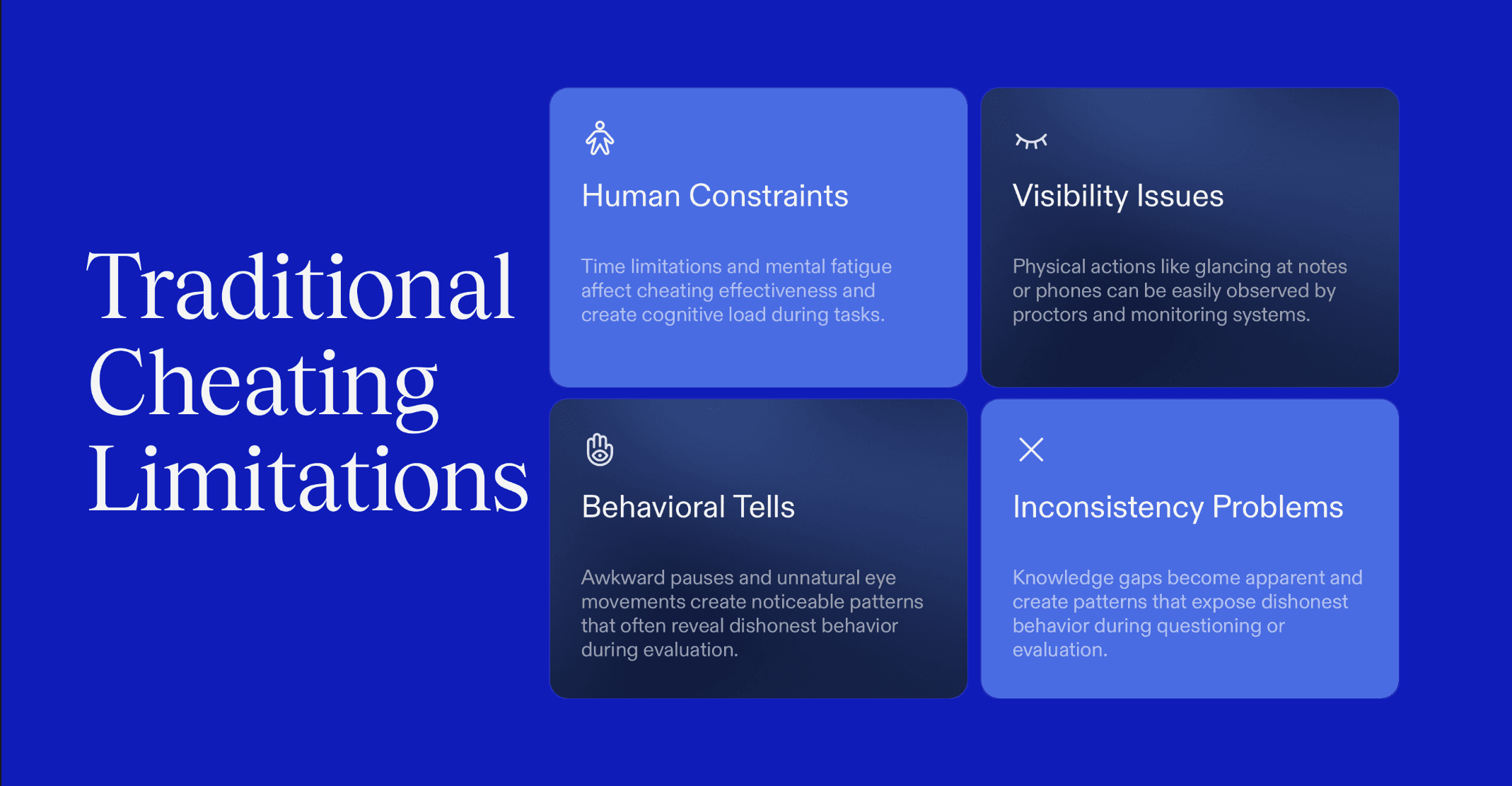

Traditional cheating depends on people, planning, and visible actions. These methods are risky, slow, and often leave clues.

Common examples include:

1. Impersonation and proxy candidates

Some candidates send a more qualified person to take the interview or assessment on their behalf. This has been widely reported in remote hiring, especially in technical roles where identity verification is weak.

2. Leaked interview questions and answer sharing

Candidates memorize questions from past interviews shared on forums or private groups. This gives the illusion of skill without real understanding.

3. Off-screen notes and friend prompting

Candidates place notes near the camera or get help from someone nearby who silently feeds them answers.

4. Pre-recorded or rehearsed answers

This tactic is used mainly in asynchronous video interviews, where candidates repeat scripted responses that do not reflect real problem-solving ability.

These tactics rely on human effort and coordination. They are limited by time, fatigue, and visibility. Most importantly, they leave behavioral traces such as eye movement, awkward pauses, mismatched tone, or inconsistent knowledge.

What AI Interview Cheating Introduces

AI changes the nature of cheating completely. It removes most of the human friction that made traditional cheating detectable.

New forms include:

1. Real-time AI copilots generating answers silently

Candidates run AI tools in the background that listen to questions and generate responses instantly. The candidate only has to read or repeat them.

2. Voice bots using speech-to-text, LLMs, and text-to-speech loops

Here, the voice bot listens, processes the question, creates an answer, and speaks it back with little delay. This can happen fast enough to pass as natural conversation.

3. Second-screen AI setups and hidden browser overlays

Candidates use a separate device or invisible browser layers so interview platforms cannot detect external help.

4. Deepfake-based identity fraud

This is where AI changes the game most. Candidates using deepfakes can now:

Use AI-generated faces in video interviews

Replace their voice with someone else’s

Mask their real identity or appear as another person

Animate static photos or prerecorded videos to look live

In these cases, the recruiter may not even be speaking to the real candidate. The fraud is no longer about getting help. It is about becoming someone else digitally.

Unlike human helpers, these tools do not get tired, distracted, or inconsistent. They operate continuously throughout the interview.

Why AI Cheating Is Fundamentally Different

AI-based cheating is not just another method. It changes the threat model itself.

It operates in real time: Traditional cheating often happens before or around the interview. AI cheating happens during the interview, live, adapting instantly to each question.

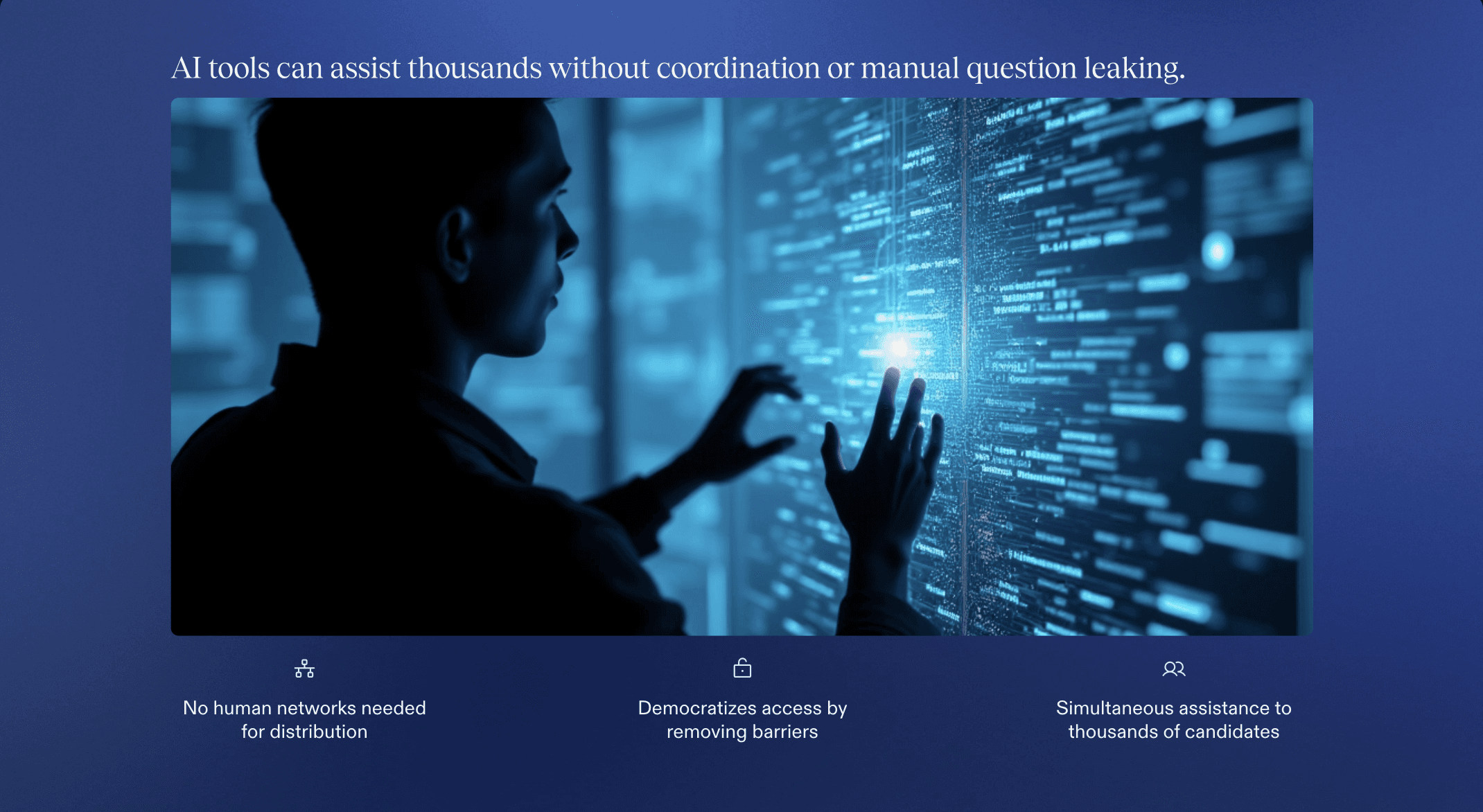

It scales effortlessly: A single AI tool can assist thousands of candidates simultaneously. There is no need to coordinate with other people or leak questions manually.

It leaves little behavioral footprint: Human cheating often shows in body language, hesitation, or mismatched confidence. AI-generated responses are fluent, confident, and consistent, which makes them harder to distinguish from real skill.

This is why cheating is no longer only a question of candidate ethics. It is now a technology problem. The tools used to cheat have become more advanced than the tools used to detect cheating.

Why Old Controls Fail Against AI Cheating

Most interview monitoring systems were built to catch human behavior. They assume that cheating involves visible actions, physical cues, or rule-breaking patterns. That assumption no longer holds.

AI-driven cheating does not behave like human cheating, which makes many current controls ineffective.

| Detection Area | What Worked for Traditional Cheating | Why It Fails Against AI Cheating |
|---|---|---|
| Webcam monitoring | Caught candidates looking off-screen, reading notes, reacting to helpers nearby | AI tools do not require visible movements. Candidates can keep natural eye contact while receiving help through audio or hidden prompts |
| Eye movement and gaze tracking | Flagged frequent glances away from screen or unusual head movement | AI assistance does not force eye shifts or physical cues, so gaze patterns look normal |
| Tab switching and screen focus | Detected candidates searching for answers or opening external sites | AI tools can run in the background, on second devices, or via extensions without switching tabs |
| Identity verification | Prevented basic impersonation and proxy candidates | Deepfakes and voice cloning allow candidates to appear as someone else while passing surface identity checks |
| Audio monitoring | Rarely used beyond noise detection | AI voice bots and virtual audio routing make AI assistance sound like the candidate’s own voice |
| Behavioral anomalies | Caught hesitation, inconsistent answers, nervous behavior | AI generates fluent, confident, and consistent responses that appear like strong performance |
| Proctoring alerts | Triggered on rule violations and visible misconduct | AI cheating does not break visible rules or create obvious violations |
| Response timing | Long pauses or delays were suspicious | AI delivers instant, structured answers that seem efficient rather than suspicious |

Traditional detection looks for human mistakes. AI cheating avoids making them.

That is why AI interview cheating is not just harder to catch. It bypasses the entire logic on which most monitoring systems were built.
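To make the response-timing row concrete, here is a minimal sketch of a check that inverts the old logic: instead of flagging long pauses, it flags answers that are fast on average and unusually uniform. The function name, flag labels, and thresholds are all hypothetical, chosen only for illustration. This is not a validated detector and does not describe how any specific product works.

```python
from statistics import mean, pstdev


def timing_flags(latencies_s, fast_mean_s=1.0, low_spread_s=0.3):
    """Return heuristic flags for a list of answer latencies (seconds).

    Thresholds are illustrative assumptions, not calibrated values.
    """
    if len(latencies_s) < 3:
        return []  # too few answers to say anything useful
    flags = []
    if mean(latencies_s) < fast_mean_s:
        flags.append("fast_on_average")
    if pstdev(latencies_s) < low_spread_s:
        flags.append("low_variation")
    return flags


# Hypothetical latencies between the end of a question and the start of an answer
print(timing_flags([0.8, 0.9, 0.7, 0.85]))
print(timing_flags([2.5, 6.0, 1.2, 9.0]))
```

A check like this can also flag well-prepared candidates, so it would only make sense as one signal for a human reviewer and never as a verdict on its own.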

Risk Impact: Why AI Cheating Is More Dangerous Than Traditional Cheating

AI-assisted interview cheating creates far greater risks for businesses than traditional cheating. Unlike old methods that rely on human effort, AI cheating can operate at scale, hide skill gaps, and bypass conventional detection. This makes the consequences faster, larger, and harder to manage.

One major risk is scale and speed. A single AI setup can be used across multiple interviews, helping many candidates at once. Traditional cheating, like using notes or a proxy candidate, is limited by human coordination and time.

AI also distorts perceived skill. Candidates appear far more capable than they really are, producing polished, confident responses that hide gaps in knowledge. This leads recruiters to overestimate ability and make bad hiring decisions.

The cost implications are significant:

Bad hires that cost at least 30% of the employee’s first-year salary and require retraining or replacement

Longer time-to-productivity as teams spend extra effort onboarding and mentoring underqualified hires

Increased attrition due to misaligned roles, creating repeated recruitment and training costs
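As a rough illustration of the first item, the arithmetic can be sketched in a few lines. The 30% rate is the lower bound quoted above. The salary figure and the function name are made up for the example.

```python
def min_bad_hire_cost(first_year_salary: float, rate: float = 0.30) -> float:
    """Lower-bound cost of a bad hire as a fraction of first-year salary."""
    if first_year_salary < 0:
        raise ValueError("first_year_salary must be non-negative")
    return first_year_salary * rate


# Hypothetical example: a role with an 80,000 first-year salary
print(min_bad_hire_cost(80_000))
```

This captures only the salary-based floor. Retraining, lost productivity, and repeated recruitment would come on top of it.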

AI cheating also erodes trust in remote hiring. Recruiters lose confidence in interview signals because AI-generated responses leave minimal behavioral footprints. When left unchecked, these risks can lead to systemic hiring failures, impacting revenue, team performance, and employer brand.

In short, AI interview cheating is not just an ethics problem. It is a business-critical risk that affects productivity, finances, and long-term hiring outcomes.

Sherlock AI: The Solution to Modern Interview Cheating

Sherlock AI is designed to address the new challenges posed by AI-assisted interview cheating by combining real-time analysis with advanced detection methods to protect hiring integrity.

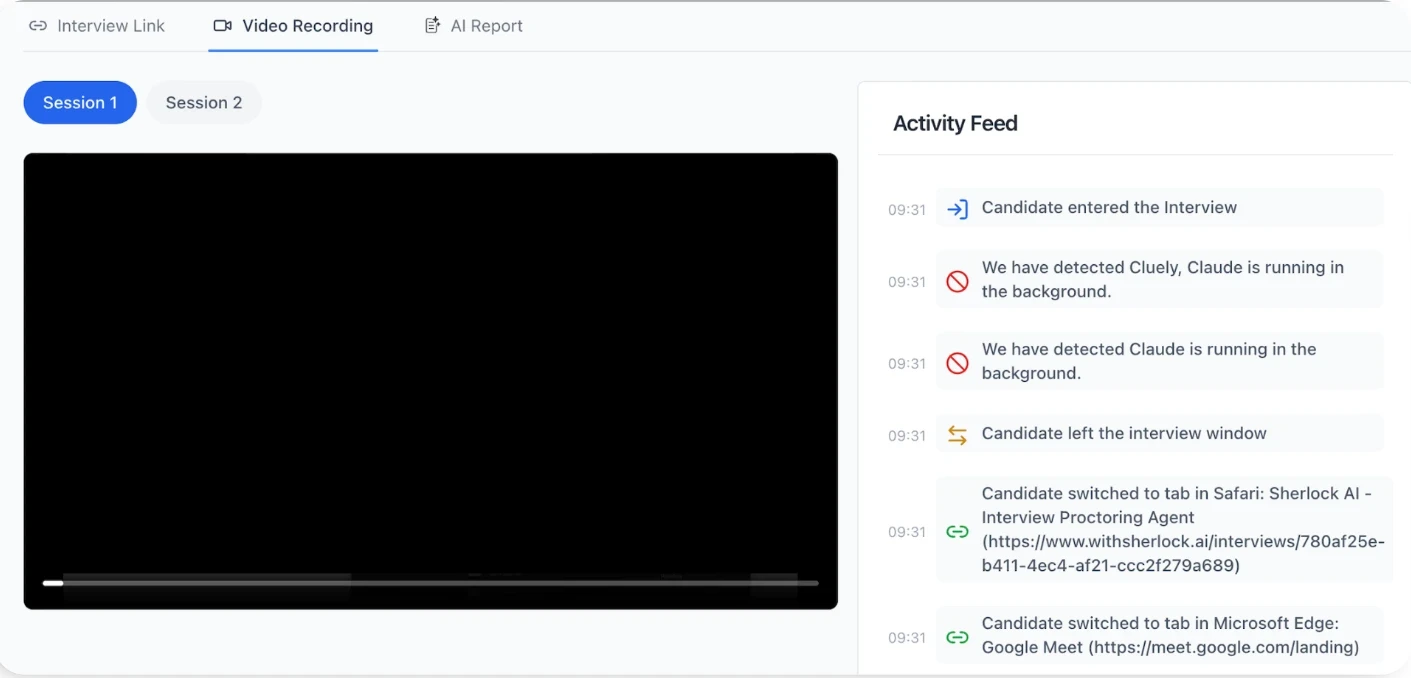

It identifies patterns and anomalies that human proctors and legacy systems cannot see, including hidden AI activity, synthetic voices, and manipulated video feeds.

Key capabilities of Sherlock AI include:

Real-time interview monitoring – Detects AI assistance, deepfake candidates, and second-screen setups during live interviews.

Behavioral anomaly detection – Flags responses that are too perfect, too fast, or inconsistent with the candidate’s background.

Voice and video analysis – Recognizes synthetic voices, altered speech patterns, and manipulated video feeds to ensure identity verification.

Background process detection – Identifies hidden AI tools running silently on candidate devices.

Actionable reporting – Provides recruiters with clear evidence and risk scores, making it easy to make informed hiring decisions.
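To give a sense of what a risk score could look like in principle, the sketch below combines several per-signal scores into one weighted number. It is purely illustrative: the signal names, weights, and formula are assumptions made for this example and are not taken from Sherlock AI.

```python
# Hypothetical signal weights, chosen only for illustration.
WEIGHTS = {
    "synthetic_voice": 0.35,
    "video_manipulation": 0.35,
    "background_process": 0.20,
    "timing_anomaly": 0.10,
}


def risk_score(signals: dict) -> float:
    """Weighted average of per-signal scores in [0, 1].

    Signals missing from the input count as 0 (no evidence).
    """
    total = 0.0
    for name, weight in WEIGHTS.items():
        value = signals.get(name, 0.0)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
        total += weight * value
    return total / sum(WEIGHTS.values())


# Hypothetical interview: strong voice signal, weak timing signal
print(round(risk_score({"synthetic_voice": 0.9, "timing_anomaly": 0.4}), 3))
```

A single number like this is easier to act on when it is shown alongside the evidence behind each signal, which is the role the reporting capability above describes.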

Sherlock AI does not replace recruiters. Instead, it empowers them with the tools to detect deception, verify skills, and ensure fairness, safeguarding both the hiring process and organizational performance.

Conclusion

Interview cheating has evolved. Traditional methods like hidden notes, proxy candidates, and pre-recorded answers are no longer the biggest threat. Today, AI-assisted cheating, including real-time AI copilots, voice bots, deepfakes, and hidden browser tools, operates at scale, leaves minimal traces, and can make candidates appear far more skilled than they actually are.

The risks are significant: bad hires, longer time-to-productivity, increased attrition, and erosion of trust in remote hiring. Legacy monitoring systems were not designed to detect these invisible threats, leaving companies vulnerable to systemic hiring failures.

Sherlock AI provides a modern solution. By detecting AI-assisted cheating, analyzing behavioral anomalies, verifying identity, and monitoring background processes, it equips recruiters with the tools they need to make informed, confident hiring decisions. Organizations that adopt advanced solutions like Sherlock AI can protect productivity, reduce risk, and maintain integrity in their hiring processes.

In a world where technology can disguise skill and identity, proactive detection is no longer optional. It is essential.