Protect your interview pipeline from AI abuse, deepfakes, and real time answer tools. Learn how Sherlock AI secures remote hiring with interview integrity.

Abhishek Kaushik

Feb 17, 2026

Artificial intelligence is reshaping how companies hire. Recruiters now use AI for sourcing, screening, scheduling, and assessments. At the same time, candidates are also using AI in ways that create serious risks for employers.

Some candidates rely on AI generated resumes. Others use real time AI tools to get answers during interviews. In more advanced cases, deepfake technology is being used to impersonate someone else entirely. Nearly 91 percent of U.S. hiring managers have encountered or suspected AI generated interview answers during online meetings, and 72 percent of recruiters report seeing fake resumes or portfolios created with AI.

As AI adoption in hiring continues to grow, protecting the interview pipeline is no longer optional. It is a critical part of hiring risk management. Companies that fail to address AI abuse risk making poor hiring decisions, exposing sensitive systems, and damaging trust in their recruitment process.

This guide explains how organizations can protect interview pipelines from AI abuse while still moving fast and delivering a fair candidate experience.

What Is AI Abuse in the Interview Process

AI abuse in hiring happens when candidates use artificial intelligence to misrepresent their skills, identity, or performance during the recruitment process.

Common forms include:

AI generated resumes and applications

Candidates use AI tools to create polished resumes that exaggerate experience or fabricate achievements.

Real time AI interview assistance

During virtual interviews, candidates use hidden tools that listen to questions and generate suggested answers instantly.

AI assisted coding tests

Some candidates use AI copilots or second devices to solve technical challenges they cannot complete on their own.

Deepfake impersonation

Fraudsters use AI generated video or voice to appear as a completely different person in a remote interview.

Third party support

Another individual provides answers off camera while the candidate repeats them as their own.

These tactics make it harder for recruiters to know whether they are evaluating real ability or AI supported performance.

Key Strategies to Protect Interview Pipelines from AI Abuse

As AI powered interview abuse becomes more advanced, organizations need a structured approach to protect hiring integrity. Preventing fraud now requires a mix of smarter interview design, human oversight, and technology that can detect hidden assistance.

1. Shift toward human centric and proctored assessments

Live interaction remains one of the strongest defenses against AI misuse.

Use live video or in person interviews whenever possible

Replace unsupervised take home tests with live coding or proctored technical tasks

Keep final hiring decisions in the hands of trained interviewers rather than fully automated systems

Human judgment is still essential, but it should be supported by the right safeguards.

2. Redesign interviews to make AI assistance harder

Interview formats can be structured to expose real understanding rather than rehearsed answers.

Ask behavioral questions based on real past experiences

Use situational questions that require candidates to explain their decision making

Include live problem solving or whiteboarding exercises

Ask follow up questions that go deeper into specific projects or tools

AI tools are good at generating generic answers. They are far less helpful when a candidate must give detailed, personal, and context rich explanations on the spot.

3. Use AI detection and interview security technology

The same technology that enables abuse can also help detect it.

Organizations can use:

Tools that flag AI generated resumes or written responses

Secure and monitored environments for remote technical assessments

Interview integrity platforms that analyze behavioral, visual, and interaction signals during live interviews

These tools help recruiters spot patterns that are difficult to catch manually.

4. Strengthen identity verification in remote hiring

Knowing that the right person is in the interview is fundamental.

Conduct short live screening calls before formal rounds

Compare the candidate’s appearance and behavior across multiple stages

Watch for inconsistencies in voice, video quality, or interaction style

Identity verification should be treated as a standard part of remote hiring, not an exception.

5. Set clear policies on acceptable AI use

Not all AI use by candidates is malicious. However, real time AI assisted answering during interviews crosses an ethical line.

Organizations should:

Clearly communicate what types of AI use are not allowed during interviews

Include these expectations in interview guidelines and candidate communications

Train hiring teams to recognize signs of AI generated responses or external assistance

Clarity helps set fair expectations for everyone involved.


Traditional Interview Controls vs AI Era Risks

| Interview Stage | Traditional Assumption | AI Era Risk | Impact on Hiring |
|---|---|---|---|
| Resume screening | Resume reflects real experience | AI generated or heavily embellished resumes | Shortlisting unqualified candidates |
| Technical assessments | Candidate completes work independently | AI copilots or hidden tools provide live solutions | False signal of technical ability |
| Virtual interviews | Person on camera is the real candidate | Deepfakes, proxy candidates, or identity swaps | Hiring the wrong individual entirely |
| Answer quality | Strong answers indicate strong knowledge | Real time AI generated responses | Misjudging actual competence |
| Behavioral responses | Personal stories reflect lived experience | Scripted or AI composed narratives | Inaccurate assessment of soft skills |
| Interview observation | Recruiters can spot suspicious behavior | AI assistance is invisible and off screen | Fraud goes undetected |
| Final hiring decision | Performance in interviews equals job readiness | Interview performance inflated by AI support | Higher risk of poor performance after hiring |

This is where interview integrity technology becomes essential.

How Sherlock AI Helps Protect Interview Pipelines

Interview redesign, recruiter training, and stronger policies all play an important role in reducing AI abuse. But in an era of invisible AI assistance, those steps alone are not enough. Interview integrity now requires a dedicated technology layer that works quietly in the background while interviews happen.

Sherlock AI provides that layer. It protects remote interview pipelines in real time, helping organizations ensure that the person they hire is the same person they interviewed, with the skills they genuinely demonstrated.

1. Real time interview monitoring without disruption

Sherlock AI operates silently during live interviews on platforms like Zoom, Microsoft Teams, and Google Meet. It does not interrupt the conversation or create friction for candidates or interviewers. Instead, it continuously analyzes integrity signals in the background while the interview proceeds naturally.

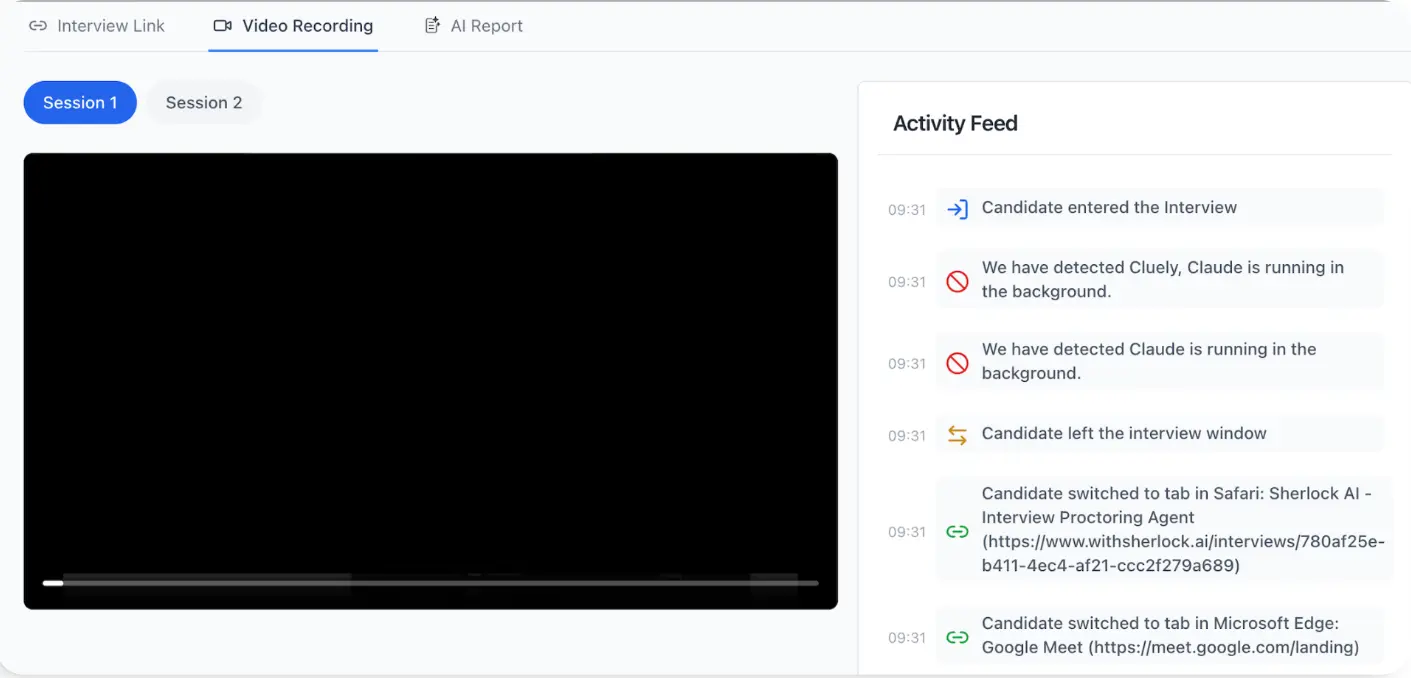

2. Detection of AI copilot and external assistance

Modern candidates can use hidden AI tools, real time answer generators, or off screen prompting systems. Sherlock AI identifies patterns associated with these behaviors, even when tools are minimized or kept out of direct view. This helps surface cases where responses may not be coming solely from the candidate’s own knowledge.

3. Response timing and fluency analysis

AI assisted answers often have unnatural delivery patterns. Sherlock AI analyzes response latency, speech flow, and sudden shifts in articulation. Overly structured or unusually fast and polished answers can be flagged for further review when they do not match normal human thinking patterns.
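
To make the idea of response latency analysis concrete, here is a minimal Python sketch. It is an illustration only and not a description of how Sherlock AI works internally. It assumes the latencies (seconds between the end of a question and the start of the answer) have already been measured, and the function name `flag_unusual_latencies` and the 3.5 cutoff are hypothetical choices. The sketch uses a modified z-score based on the median, so a single extreme answer does not distort the baseline.

```python
from statistics import median


def flag_unusual_latencies(latencies, threshold=3.5):
    """Return indices of answers whose response latency is a robust outlier.

    latencies: seconds between the end of each question and the start of
    the candidate's answer (hypothetical input, measured upstream).
    threshold: cutoff on the modified z-score (an assumed, tunable value).
    """
    if len(latencies) < 3:
        return []  # too few answers to establish a baseline

    center = median(latencies)
    # Median absolute deviation: a spread estimate that outliers barely move.
    mad = median([abs(x - center) for x in latencies])
    if mad == 0:
        return []  # spread estimate is zero, so the score is undefined

    flagged = []
    for index, value in enumerate(latencies):
        modified_z = 0.6745 * (value - center) / mad
        if abs(modified_z) > threshold:
            flagged.append(index)
    return flagged
```

A flag from a check like this is only a prompt for human review. A long pause can just as easily mean a candidate is thinking carefully about a hard question.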

4. Eye line and attention drift detection

When candidates rely on external tools or prompts, their visual focus often shifts away from natural conversation. Sherlock AI monitors repeated off screen gaze patterns and attention drift that may indicate reading, prompting, or hidden assistance.
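
As a rough illustration of what "repeated off screen gaze patterns" could mean in practice, the Python sketch below summarizes a sequence of gaze labels. It is not Sherlock AI's implementation. It assumes some upstream gaze estimator (hypothetical here) has already labeled each video sample as on screen or off screen, and the name `summarize_gaze` and the `min_run` parameter are made up for this example.

```python
from itertools import groupby


def summarize_gaze(on_screen, min_run=3):
    """Summarize per-sample gaze labels for one interview segment.

    on_screen: list of booleans, True when the (hypothetical) upstream gaze
    estimator judged the candidate to be looking at the screen.
    min_run: consecutive off-screen samples that count as one sustained episode.
    """
    if not on_screen:
        return {"off_ratio": 0.0, "sustained_episodes": 0}

    off_ratio = on_screen.count(False) / len(on_screen)

    sustained_episodes = 0
    # groupby collapses consecutive identical labels into runs.
    for looking_at_screen, run in groupby(on_screen):
        if not looking_at_screen and len(list(run)) >= min_run:
            sustained_episodes += 1

    return {"off_ratio": off_ratio, "sustained_episodes": sustained_episodes}
```

Counting sustained episodes separately from the overall ratio helps distinguish brief, natural glances away from repeated stretches that look more like reading.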

5. Impersonation and proxy interview detection

One of the most serious risks in remote hiring is identity fraud. Sherlock AI analyzes facial, voice, and behavioral consistency to detect signs of stand ins, identity swaps, or deepfake assisted interviews. This helps ensure the person on camera is the real candidate.

6. Continuous identity consistency checks

Identity verification should not happen only at login. Sherlock AI checks that the same individual remains present and engaged throughout the entire interview session, reducing the risk of mid interview swaps or proxy participation.
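
One common way to think about a continuous consistency check is to compare feature vectors sampled during the session against a reference captured at the start. The Python sketch below shows that general pattern under stated assumptions and does not describe Sherlock AI's actual method. The vectors stand in for embeddings from some face or voice model (not specified here), and `find_identity_breaks` and the 0.8 similarity floor are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length numeric vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("zero vector has no direction")
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (norm_a * norm_b)


def find_identity_breaks(reference, samples, min_similarity=0.8):
    """Return indices of samples that fall below the similarity floor.

    reference: vector captured at the start of the session (assumed input).
    samples: vectors captured periodically during the interview.
    min_similarity: assumed threshold; a real system would need to tune it.
    """
    return [
        index
        for index, sample in enumerate(samples)
        if cosine_similarity(reference, sample) < min_similarity
    ]
```

For example, `find_identity_breaks([1.0, 0.0, 0.0], [[0.9, 0.1, 0.0], [0.0, 1.0, 0.0]])` reports only the second sample, since it points in a completely different direction from the reference.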

7. Behavioral inconsistency tracking during follow ups

Candidates who rely heavily on scripted or AI generated responses often struggle when interviewers dig deeper. Sherlock AI highlights behavioral inconsistencies, shallow reasoning, and loss of depth across follow up questions, giving recruiters additional context about authenticity.

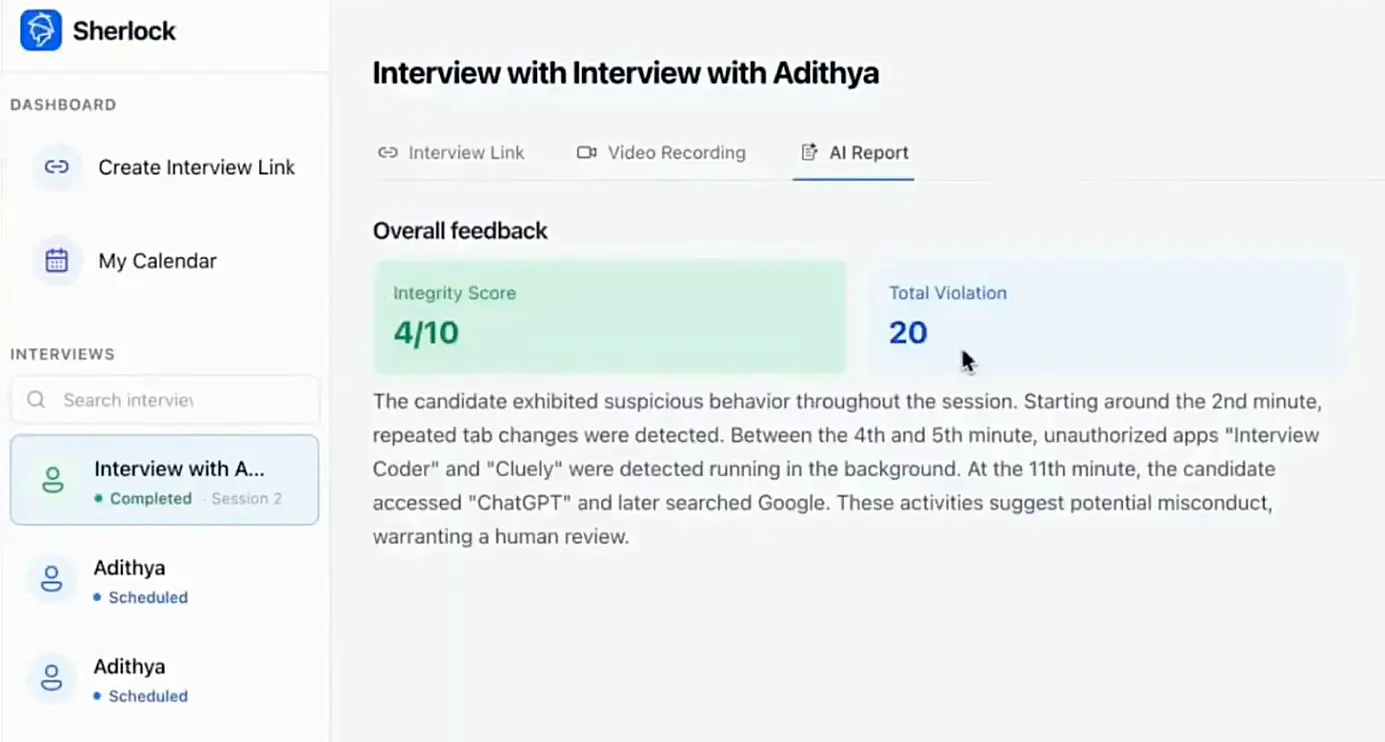

8. Early risk visibility before hiring decisions

Perhaps most importantly, Sherlock AI provides integrity signals during the interview stage, before offers are made or system access is granted. This allows hiring teams to make informed decisions early, reducing the cost and risk of fraudulent hires.

In a hiring landscape shaped by AI on both sides of the screen, interview integrity can no longer rely on observation alone. Sherlock AI gives organizations the visibility they need to protect their interview pipelines, hire with confidence, and maintain trust in remote hiring.

Conclusion

AI is now part of every stage of hiring, and that includes how candidates present themselves in interviews. While artificial intelligence brings speed and efficiency to recruitment, it also introduces new forms of risk that traditional hiring methods were never designed to handle.

AI generated resumes, real time answer assistance, impersonation, and deepfake driven fraud are changing what interview integrity means. Protecting interview pipelines is no longer just about asking the right questions. It is about making sure the person answering is genuine, unaided, and accurately representing their skills.

Organizations that rely only on interviewer intuition or outdated processes leave themselves exposed to bad hires, security threats, and long term business consequences. The future of hiring requires a dedicated integrity layer that works alongside recruiters, not instead of them.

Sherlock AI provides that layer. By monitoring interviews in real time, detecting AI assisted behavior, and verifying identity consistency, Sherlock AI helps companies hire with confidence in an era where AI can sit invisibly on the candidate’s side of the screen. Protecting interview pipelines from AI abuse is now a core part of responsible hiring, and Sherlock AI is built to make that protection practical, scalable, and reliable.