
Deepfakes are reshaping job interview fraud. Learn how impersonation works, the risks to employers, and how Sherlock AI detects and prevents interview fraud in real time.

Abhishek Kaushik

Mar 5, 2026

Remote hiring unlocked global talent. It also unlocked a new category of fraud that most hiring teams are not prepared for.

Deepfake technology is no longer experimental or rare. It is being actively used in job interviews to impersonate candidates and gain unauthorized access to companies. What once required advanced technical skill can now be done with low-cost consumer tools. A recent enterprise security survey found that 41% of organizations have hired a fraudulent candidate, which means impersonation and identity fraud are no longer theoretical threats but real events in many companies.

For employers, this is not just a recruiting challenge. It is a security, compliance, and financial risk.

This article explores how deepfakes are being used in interviews, why the threat is accelerating, and how organizations can respond using modern identity verification and fraud prevention systems like Sherlock AI.

How Widespread Are Deepfakes in Job Interviews?

Deepfake-related interview fraud has shifted from isolated incidents to a growing pattern across remote hiring environments.

Recruiters and hiring managers report cases where:

The face on camera does not match the person who shows up for later onboarding steps

Candidates perform well live but cannot repeat the same skills in follow-up assessments

Voice tone, lip movement, or lighting behaves unnaturally

Technical answers appear to be relayed in real time from hidden tools

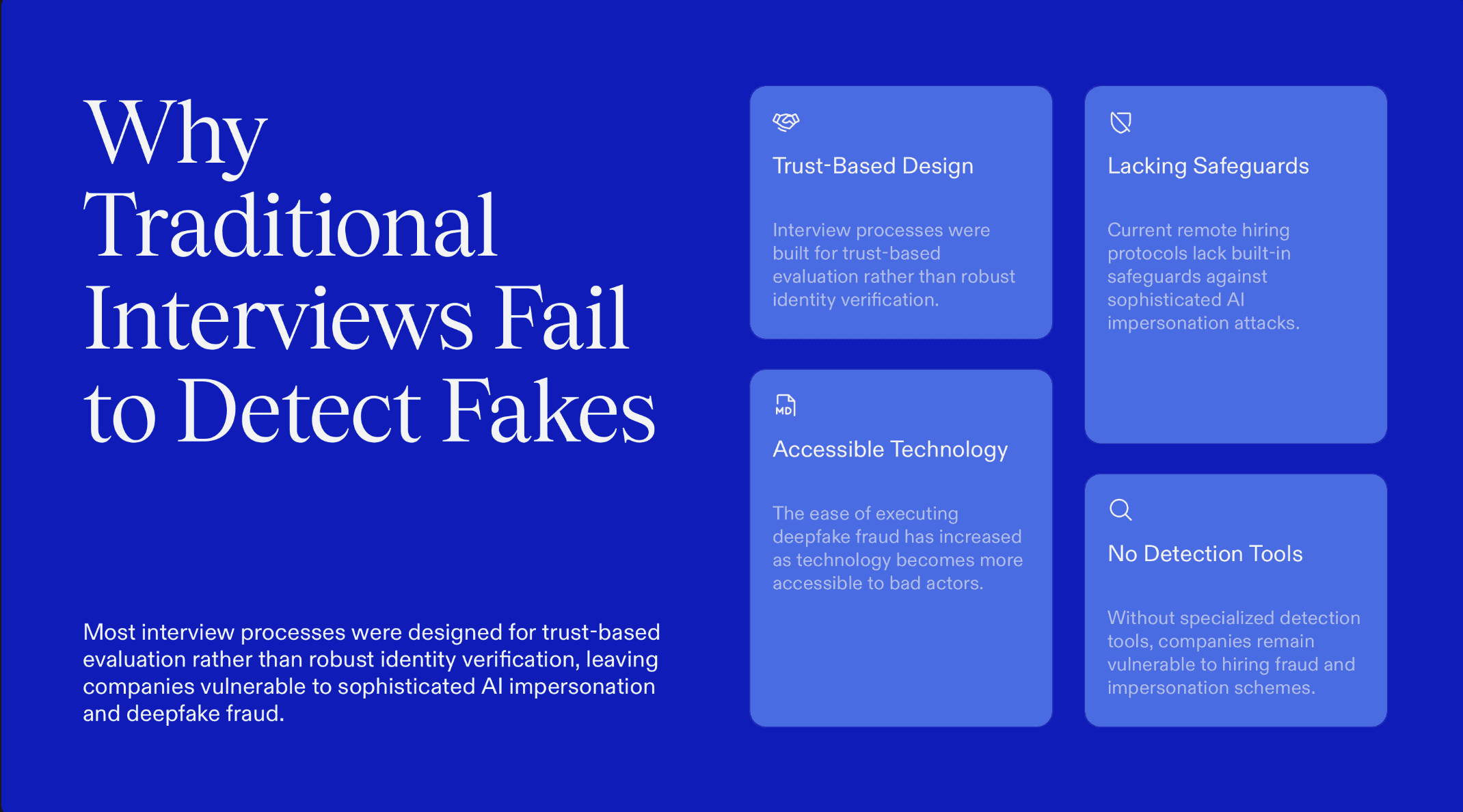

Because most interview processes were designed for trust-based evaluation rather than identity verification, these incidents often go undetected.

As remote hiring continues to scale, deepfake misuse is becoming easier to execute and harder to spot without specialized tools.

How Deepfakes Are Used in Interviews

Fraudsters combine multiple AI tools and coordination tactics to create convincing real-time impersonation during interviews. These methods are rarely used in isolation. Instead, attackers layer visual manipulation, voice synthesis, hidden assistance, and identity deception to bypass traditional hiring checks.

Below are the most common techniques used today.

1. Face Swap Applications

These tools replace a live candidate’s face with someone else’s in real time. The person visible on camera may not be the person actually speaking.

Indicators include:

Unnatural facial edges or blurring around the jaw or hairline

Lighting on the face that does not match the surrounding environment

Facial expressions that appear slightly delayed compared to speech

Face swapping allows a skilled operator to impersonate a different identity during the interview.

2. AI Video Generators and Virtual Cameras

Synthetic or AI-enhanced video can be streamed into meeting platforms through virtual camera software, making prerecorded or manipulated footage appear live.

Indicators include:

Very smooth or static backgrounds with little environmental variation

Limited head movement or repeated gesture patterns

Video quality that looks artificially clean compared to typical webcams

This method is often used to present prepared video segments as real-time participation.

3. Voice Cloning Engines

Fraudsters can replicate a candidate’s voice from short audio samples. A different person may speak while sounding like the expected applicant.

Indicators include:

Speech that sounds slightly flat or digitally processed

Tone and emotion that do not change naturally with the conversation

Subtle distortion during faster speech or interruptions

Voice cloning is especially effective when combined with visual deepfakes.

4. Scripted Persona Switching

In some interviews, the person who appears at the start is not the one who completes technical tasks.

Indicators include:

Sudden changes in voice tone, confidence level, or communication style

Camera briefly turning off before technical discussions

Noticeable differences in problem-solving speed between segments

This tactic allows fraud groups to combine a personable front with a hidden specialist.

5. Pre-Recorded “Live” Responses

Attackers sometimes prepare high-quality video answers for common questions and play them through virtual camera tools during the interview.

Indicators include:

Answers that begin instantly with no natural reaction time

Identical lighting and posture across different responses

Lack of interruption handling when the interviewer interjects

These clips are often used for behavioral or introductory questions.
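The first indicator above, answers that begin with no natural reaction time, can be checked from timestamps alone. The following is a minimal Python sketch, assuming a hypothetical transcript that records when each question ended and when each answer began. The `Turn` type, the `instant_answers` function, and the 0.3-second threshold are illustrative assumptions, not a validated cutoff or any vendor's method.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Turn:
    question_end_s: float  # when the interviewer stopped speaking (seconds)
    answer_start_s: float  # when the candidate started speaking (seconds)


def instant_answers(turns: List[Turn], min_reaction_s: float = 0.3) -> List[int]:
    """Return indexes of turns whose reaction time is below min_reaction_s.

    The default threshold is an assumed value for illustration only.
    """
    return [
        i for i, t in enumerate(turns)
        if t.answer_start_s - t.question_end_s < min_reaction_s
    ]


# Hypothetical timestamps: the second answer starts 50 ms after the question ends.
turns = [Turn(12.0, 13.1), Turn(40.0, 40.05), Turn(75.5, 76.4)]
print(instant_answers(turns))  # [1]
```

A flagged turn is only a prompt for a follow-up question or an interruption, since some candidates genuinely answer quickly.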

6. Latency Masking and Lip Sync Manipulation

Deepfake systems may introduce small delays between voice and facial movement. Fraudsters may disguise this as connection issues.

Indicators include:

Lip movement slightly out of sync with speech

Frequent claims of audio lag or network instability

Facial expressions that look smoothed or less detailed than normal

Artificial lag can be used to hide technical artifacts.
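Lip movement that trails speech can be estimated rather than eyeballed. The sketch below is one simple way to do it, assuming two per-frame signals have already been extracted by some upstream tool: an audio loudness value and a mouth-opening measurement. Both signals and the function name `estimate_lag_frames` are hypothetical. It uses plain cross-correlation, which is a generic technique and not a description of how any particular detection product works.

```python
from typing import Sequence


def estimate_lag_frames(
    audio_energy: Sequence[float],
    mouth_opening: Sequence[float],
    max_lag: int = 10,
) -> int:
    """Return the frame lag at which mouth movement best matches the audio.

    A positive result means the mouth movement trails the audio.
    """
    n = min(len(audio_energy), len(mouth_opening))
    if n == 0:
        raise ValueError("both signals must contain at least one frame")

    # Center both signals so the score reflects shape, not baseline level.
    mean_a = sum(audio_energy[:n]) / n
    mean_m = sum(mouth_opening[:n]) / n
    a = [x - mean_a for x in audio_energy[:n]]
    m = [x - mean_m for x in mouth_opening[:n]]

    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        # Correlate audio frame i with mouth frame i + lag.
        score = sum(a[i] * m[i + lag] for i in range(n) if 0 <= i + lag < n)
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


# Synthetic example: three speech bursts, with the mouth signal delayed by 3 frames.
audio = [0.0] * 50
for burst in (10, 25, 40):
    audio[burst] = 1.0
mouth = [0.0] * 3 + audio[:-3]

print(estimate_lag_frames(audio, mouth))  # 3
```

At 30 frames per second, a lag of 3 frames is about 100 ms. A persistent offset across the session is a more meaningful signal than a brief one, since ordinary network jitter also causes short desynchronization.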

7. Multi-Person Support Behind the Scenes

In high-value roles, the candidate on camera may be supported by an off-screen team.

Indicators include:

Rapid improvement in answer quality after difficult questions

Inconsistent speaking pace between different topics

Background noises or whispered cues during pauses

The interview becomes a coordinated effort rather than an individual assessment.

8. Accent and Language Manipulation

Real-time voice conversion can modify accent or fluency to match the resume.

Indicators include:

Pronunciation that shifts during the interview

Fluency in prepared answers but difficulty in spontaneous discussion

Voice tone that sounds processed during longer sentences

This tactic is often used when language skills are a key job requirement.

Because these techniques are frequently combined and constantly evolving, visual judgment alone is no longer a reliable defense. Interview fraud has become a technical problem that requires technical detection.

How to Respond to Deepfakes in Interviews

Organizations need a structured approach that combines technology, process, and awareness. Deepfake interview fraud cannot be handled through intuition alone.

1. Start Identity Verification Before the Interview

Do not wait until the offer stage to verify who the candidate is.

Tie interview access to verified email, phone, and device signals. Early identity checks reduce the chance of anonymous or synthetic participants entering the process.
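As an illustration of gating interview access on those signals, here is a minimal Python sketch. The `CandidateSignals` fields and the `missing_checks` helper are hypothetical names, and how each signal is actually verified (email link, SMS code, device fingerprint) is assumed to happen elsewhere.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CandidateSignals:
    email_verified: bool
    phone_verified: bool
    device_recognized: bool  # assumed: same device seen at an earlier stage


def missing_checks(signals: CandidateSignals) -> List[str]:
    """Return the names of checks that have not passed; empty means access is allowed."""
    checks = {
        "email": signals.email_verified,
        "phone": signals.phone_verified,
        "device": signals.device_recognized,
    }
    return [name for name, passed in checks.items() if not passed]


candidate = CandidateSignals(email_verified=True, phone_verified=False, device_recognized=True)
print(missing_checks(candidate))  # ['phone']
```

Returning the list of failed checks, rather than a single yes or no, lets the recruiter tell a genuine candidate exactly what to complete before the interview link is issued.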

2. Use Real-Time Identity and Deepfake Detection

Human observation is no longer enough.

Use systems that analyze live video, audio, and behavioral signals for signs of impersonation, synthetic media, or hidden assistance. Automated detection helps identify risks that recruiters cannot see.

3. Check Identity Consistency Across Hiring Stages

Fraud often appears as small inconsistencies across touchpoints.

Compare signals from application, interview, assessment, and onboarding stages. Differences in appearance, voice, device patterns, or behavior may indicate identity switching.
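One way to make that comparison concrete is sketched below, assuming each stage yields a numeric face or voice embedding from some upstream model. The embeddings, the stage names, and the 0.8 similarity threshold are all hypothetical and would need tuning against real data.

```python
import math
from itertools import combinations
from typing import Dict, List, Sequence, Tuple


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either has zero length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def inconsistent_stages(
    embeddings: Dict[str, Sequence[float]],
    min_similarity: float = 0.8,  # assumed threshold for illustration
) -> List[Tuple[str, str]]:
    """Return pairs of stages whose embeddings are less similar than the threshold."""
    return [
        (a, b)
        for a, b in combinations(embeddings, 2)
        if cosine(embeddings[a], embeddings[b]) < min_similarity
    ]


# Toy 3-dimensional embeddings; real ones would have hundreds of dimensions.
stages = {
    "application": [0.90, 0.10, 0.20],
    "interview": [0.88, 0.12, 0.21],
    "onboarding": [0.10, 0.90, 0.30],
}
print(inconsistent_stages(stages))
# [('application', 'onboarding'), ('interview', 'onboarding')]
```

Here the onboarding stage disagrees with both earlier stages, which is the pattern expected when a different person appears after the interview.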

4. Train Recruiters to Recognize Risk Signals

Provide practical training on common signs of AI assistance and impersonation.

This includes unnatural eye movement, delayed responses, mismatched communication ability, and inconsistent identity details. Awareness helps teams flag issues early.

5. Verify Identity Through a Second Channel

When risk is detected, confirm identity using an independent method.

This may include secure document verification, a follow-up call to a verified phone number, or identity checks that are separate from the interview session.

Responding to deepfake interview fraud requires moving from trust-based evaluation to trust plus verification. Organizations that combine recruiter awareness with real-time identity intelligence are far better equipped to protect their hiring process.

Read More: How To Spot AI Deepfakes Posing As Job Candidates in Interviews

Impact on Job Interviews

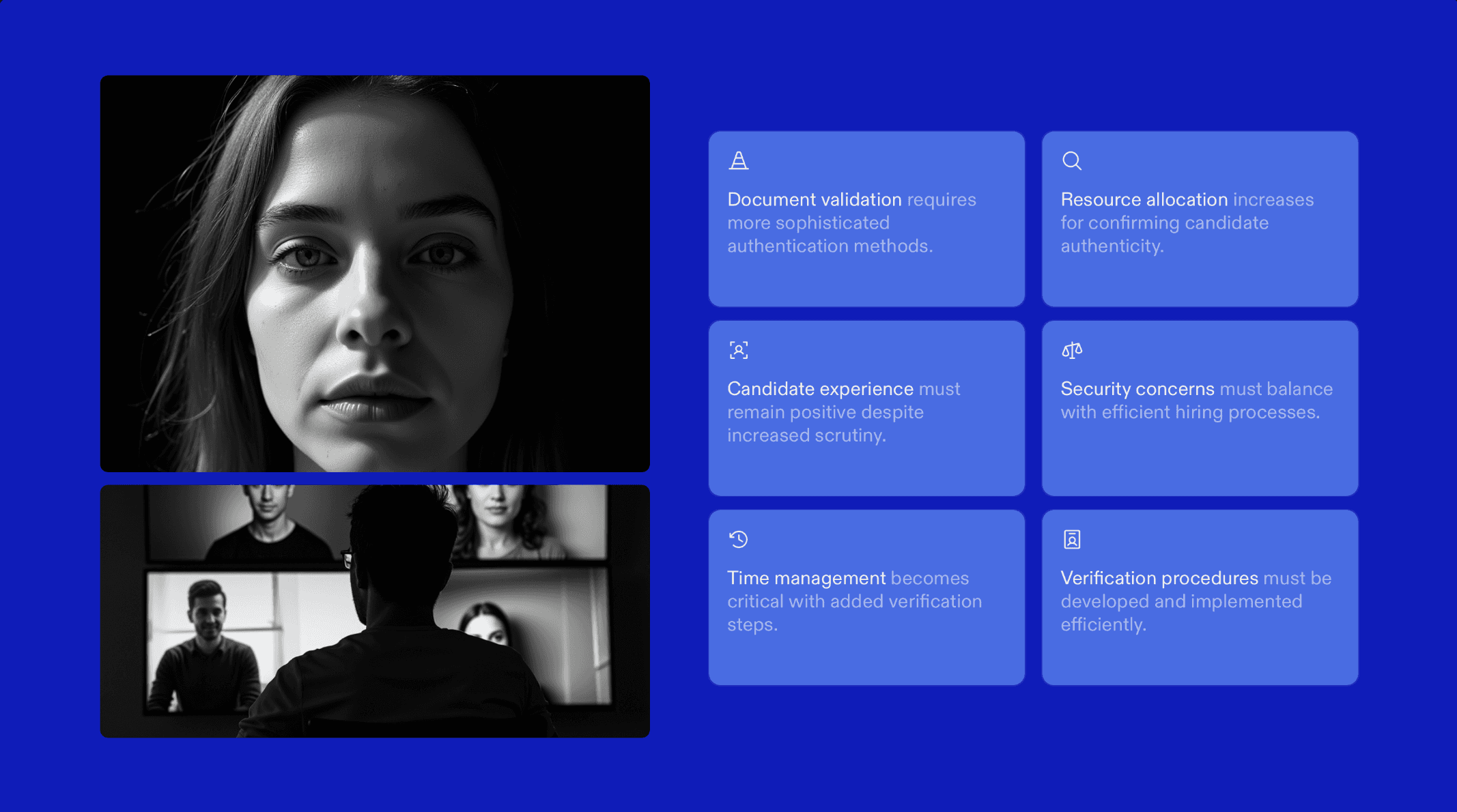

The implications of AI-powered deepfakes in job interviews are significant and far-reaching for employers:

Undermines Interview Integrity

Deepfakes make it harder to trust the authenticity of the person on the other end, weakening the core purpose of interviews to assess skills and personality.

Fraudulent Hires

Malicious actors can secure positions under false pretenses, potentially bypassing experience checks and evaluations.

Unfair Advantage for Bad Actors

Candidates using deepfake technology can gain an advantage over genuine applicants by masking identity or experience.

Use of Stolen Identity Data

Deepfakes often involve stolen personally identifiable information, allowing impostors to pose as real people during remote interviews.

Difficulty in Detecting Manipulation

Deepfakes can be hard to spot with the naked eye, and mismatches between video and audio are often subtle.

Increased Burden on Recruiters

Recruiters may need to invest more time and resources in verifying candidate authenticity rather than focusing on evaluating fit and skill.

How Sherlock AI Helps Detect and Prevent Interview Fraud

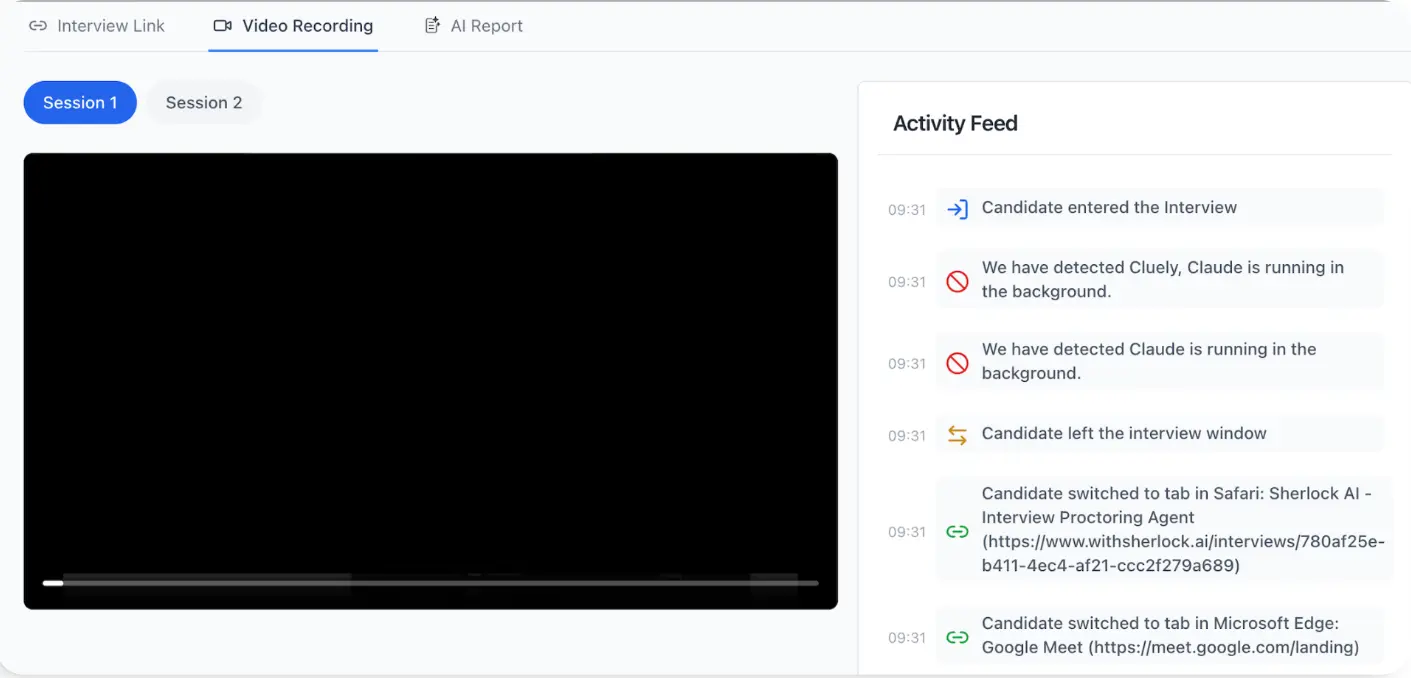

Modern interview fraud is subtle, layered, and difficult to detect through observation alone. Sherlock AI is designed to surface hidden risks in real time, giving hiring teams deeper visibility without disrupting genuine candidates.

1. Real-Time AI Fraud Detection

Sherlock AI continuously monitors interview sessions using advanced models that analyze audio, video, behavioral, and system-level signals together.

Detects AI-assisted answering patterns and potential deepfake behavior during the live session

Flags anomalies as they happen instead of relying only on post-interview review

Allows interviewers to stay focused on conversation while Sherlock works silently in the background

2. Multimodal Behavioral and Context Monitoring

Rather than depending on a single cue like eye movement or voice tone, Sherlock AI combines multiple data streams to identify complex cheating patterns more reliably.

Device-level signals that may indicate hidden screens or unusual hardware setups

Audio irregularities such as background prompts, overlapping voices, or synthetic speech artifacts

Behavioral patterns including response timing shifts, unnatural pauses, and sudden clarity changes

This multimodal approach reduces false assumptions and improves detection accuracy.
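The article does not describe how Sherlock AI combines these signals internally. As a generic illustration of why fusing several streams is less prone to false alarms than reacting to one cue, the Python sketch below uses a simple weighted average. The modality names, weights, scores, and the 0.5 threshold are all assumptions made for the example.

```python
from typing import Dict


def combined_risk(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of per-modality risk scores, each expected in [0, 1]."""
    total_weight = sum(weights[name] for name in scores)
    if total_weight == 0:
        return 0.0
    return sum(scores[name] * weights[name] for name in scores) / total_weight


# Equal weights are an assumption; a real system would calibrate them.
weights = {"device": 1.0, "audio": 1.0, "behavior": 1.0}

single_cue = {"device": 0.0, "audio": 0.0, "behavior": 0.9}     # one odd signal
multiple_cues = {"device": 0.8, "audio": 0.7, "behavior": 0.9}  # several agree

print(combined_risk(single_cue, weights) > 0.5)     # False
print(combined_risk(multiple_cues, weights) > 0.5)  # True
```

A single unusual behavior, such as a nervous pause, does not cross the threshold on its own, while agreement across device, audio, and behavioral signals does.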

3. Seamless Calendar and Meeting Integration

Security should not add friction to scheduling. Sherlock AI integrates directly with major calendar platforms so protection starts automatically.

Works with Google Calendar, Apple Calendar, and Outlook

Automatically secures scheduled interview sessions

Generates protected meeting environments without extra steps for recruiters

4. Real-Time Alerts and Interview Commentary

Sherlock AI supports interviewers during the session with contextual intelligence.

Sends live alerts when suspicious patterns are detected

Provides moment-specific notes that help interviewers probe deeper with follow-up questions

Surfaces signals in a way that supports decision-making rather than interrupting flow

5. Automated Notes and Interview Insights

Beyond fraud detection, Sherlock AI helps improve interview quality and consistency.

Captures structured notes automatically throughout the session

Helps teams review how candidates approached problems, not just final answers

Enables consistent comparisons across candidates without relying on manual note-taking

This creates a stronger audit trail and more objective hiring discussions.

In a world where polished answers can be AI-assisted, rehearsed, or delivered through impersonation, Sherlock AI provides real-time, context-aware protection. It strengthens hiring decisions while preserving a smooth and respectful interview experience.

Conclusion

Deepfakes in job interviews are no longer a distant or theoretical threat. They are actively reshaping how interview fraud happens, making impersonation more convincing and detection more difficult for hiring teams.

What used to be a rare edge case has evolved into a scalable risk. Fraudsters can now combine face manipulation, voice synthesis, and real-time AI assistance to bypass traditional interview processes. The result is not just a bad hire, but potential exposure to security breaches, financial fraud, compliance violations, and long-term reputational damage.

By combining recruiter awareness with purpose-built technology like Sherlock AI, companies can protect their hiring process without slowing down recruitment. Proactive verification helps ensure that the person being evaluated is genuine, qualified, and truly present.

As deepfake technology continues to advance, the organizations that adapt early will be the ones that maintain trust, protect their systems, and hire with confidence.