
Discover how to spot AI deepfakes posing as job candidates in interviews and prevent impersonation, hiring fraud, and identity manipulation.

Abhishek Kaushik

Feb 3, 2026

AI has transformed recruitment, but it has also introduced a dangerous new threat: deepfake job candidates.

Recruiters today are no longer just assessing skills and culture fit. They are increasingly tasked with answering a more fundamental question: is the person on the screen even real?

With AI-generated faces, voice cloning, and real-time face-swapping tools becoming more accessible, deepfakes are actively infiltrating remote hiring pipelines. Fraudsters are using synthetic identities to secure jobs, access sensitive systems, and bypass background checks, creating serious financial and security risks for organizations.

A Resume Genius survey of hiring managers found that 17% have encountered candidates using deepfake technology to alter their appearance or video feeds during interviews, and research from Gartner predicts that by 2028, as many as 1 in 4 job candidates globally could be fake.

This guide explains how to spot AI deepfakes posing as job candidates, why traditional interview methods fall short, and how modern hiring teams can protect themselves using behavioral intelligence and AI-powered detection.

Why Deepfake Candidates Are Harder to Detect Than Ever

Deepfake technology has advanced rapidly. Modern systems can now:

Maintain eye contact and natural facial expressions

Sync lips accurately with cloned voices

Respond instantly during live interviews

Minimize obvious visual artifacts and glitches

Because of this, recruiters can no longer rely on intuition, webcams, or surface-level identity checks. The telltale signs of fraud increasingly lie in behavioral patterns rather than visual cues.


How To Spot AI Deepfakes Posing As Job Candidates

AI-driven deepfakes are no longer limited to social media or misinformation campaigns. They are actively being used to manipulate remote hiring processes, and recruiters now have to verify not just a candidate's skills but the authenticity of the person behind the screen.

Spotting AI deepfakes posing as job candidates requires close attention to behavioral signals, reasoning patterns, and consistency across interactions. Below are key indicators hiring teams can use to identify synthetic or assisted candidates during interviews.

1. Request Actions That Challenge AI Limitations

Deepfake systems are designed to appear realistic in predictable interview settings. They struggle when asked to perform spontaneous physical actions.

Recruiters can apply techniques like:

Ask candidates to touch their face or place a hand in front of the camera

Request head turns or profile views during live conversation

Ask candidates to adjust lighting, reposition the camera, or hold up a physical object

These actions disrupt facial overlays and real-time filters. Even advanced deepfakes tend to respond with visual distortion, a noticeable hesitation, or an outright refusal.

2. Watch for Technical and Visual Inconsistencies

Despite recent advances, deepfake video still produces subtle visual artifacts that reveal manipulation.

Key indicators include:

Unnatural blending where the face meets the background

Lighting inconsistencies that affect the face differently from the room

Lip movement that does not fully align with speech

Robotic eye movement, irregular blinking, or overly smooth facial expressions

These signs often intensify during longer interviews or when lighting and camera angles change. Human interviewers may miss them, but sustained, deliberate observation improves the chances of catching them.

3. Implement Identity Verification Protocols

Deepfake candidates often succeed because identity checks are treated as one-time formalities.

For remote roles, especially those involving system access, identity verification should be layered and continuous:

Request government-issued identification through secure channels before interviews

Visually compare documents with the live video feed

Conduct live skill assessments that require real-time thinking

Apply the same verification standards across all candidates

Impersonators may pass document checks, but they struggle to maintain identity consistency across multiple verification steps.
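To make cross-step consistency checks concrete, here is a minimal Python sketch. It assumes each verification step (ID document, screening call, technical interview) has already produced a face or voice embedding from some recognition model. The model, the step names, and the 0.8 threshold are hypothetical placeholders; a real threshold depends entirely on the model used. The sketch compares every pair of steps and flags pairs that look like different people.

```python
import math
from itertools import combinations

# Hypothetical cutoff: tune it for whatever embedding model actually produces the vectors.
SIMILARITY_THRESHOLD = 0.8


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length, non-zero vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


def inconsistent_steps(
    embeddings: dict[str, list[float]],
    threshold: float = SIMILARITY_THRESHOLD,
) -> list[tuple[str, str, float]]:
    """Return every pair of verification steps whose embeddings disagree."""
    flagged = []
    for (step_a, emb_a), (step_b, emb_b) in combinations(embeddings.items(), 2):
        score = cosine_similarity(emb_a, emb_b)
        if score < threshold:
            flagged.append((step_a, step_b, round(score, 3)))
    return flagged


# Toy vectors for illustration only; real embeddings have hundreds of dimensions.
steps = {
    "id_document": [0.9, 0.1, 0.4],
    "screening_call": [0.88, 0.12, 0.41],
    "technical_interview": [0.1, 0.95, 0.2],
}
print(inconsistent_steps(steps))
```

With these toy vectors, the two pairs involving `technical_interview` are flagged, which is the pattern a mid-process proxy swap would produce. A flag is a reason to re-verify, not proof of fraud.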

4. Ask Contextual and Cultural Questions

Deepfake operators rely heavily on scripted knowledge. They often fail when questions require lived experience, cultural awareness, or personal judgment.

Effective approaches include:

Asking location-specific questions based on where the candidate claims to have worked or lived

Probing work history using scenario-based follow-ups rather than factual recall

Asking about interpersonal conflicts, failures, or decision-making trade-offs

For example, instead of asking what tools someone used at a previous company, ask how they handled a difficult team disagreement. These questions expose shallow or fabricated experience quickly.

5. Analyze Technical Indicators

Some of the strongest deepfake signals exist beyond the interview screen.

Hiring teams should watch for:

IP address locations that do not align with the candidate’s stated location

Candidates who insist on uncommon video platforms that may better support deepfake tools

Repeated targeting of remote technical roles with privileged system access
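The first two indicators lend themselves to simple rule-based checks. The sketch below assumes the session IP has already been resolved to a country code by a geolocation service (not shown) and uses a hypothetical allow-list of video platforms. `SessionRecord` and `technical_flags` are illustrative names, not part of any product.

```python
from dataclasses import dataclass

# Hypothetical allow-list: substitute the platforms your team actually supports.
APPROVED_PLATFORMS = {"zoom", "teams", "google meet"}


@dataclass
class SessionRecord:
    stated_country: str               # country the candidate says they live or work in
    ip_country: str                   # country resolved from the session IP (lookup done elsewhere)
    platform: str                     # video platform the session ran on
    role_has_privileged_access: bool  # whether the role grants sensitive system access


def technical_flags(session: SessionRecord) -> list[str]:
    """Return human-readable reasons this session deserves a closer look."""
    flags = []
    if session.ip_country.upper() != session.stated_country.upper():
        flags.append(
            f"IP resolves to {session.ip_country}, candidate states {session.stated_country}"
        )
    if session.platform.lower() not in APPROVED_PLATFORMS:
        flags.append(f"uncommon video platform requested: {session.platform}")
    # Only escalate the role risk when another signal has already fired.
    if flags and session.role_has_privileged_access:
        flags.append("role grants privileged system access; escalate review")
    return flags


session = SessionRecord(
    stated_country="US",
    ip_country="VN",
    platform="UnlistedVideoApp",  # hypothetical platform name
    role_has_privileged_access=True,
)
for flag in technical_flags(session):
    print("-", flag)
```

VPNs, travel, and corporate proxies all produce legitimate location mismatches, so each flag should prompt a follow-up question rather than an automatic rejection.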

Technology companies are frequent targets because they hire remotely, manage valuable intellectual property, and often move quickly during hiring cycles.

Why These Five Signals Matter for Modern Hiring

AI deepfakes are not a future concern. They are already impacting real hiring decisions. Traditional interview methods were not designed to detect synthetic identities, which is why structured detection signals are now essential.

By combining physical verification, behavioral analysis, contextual questioning, and technical monitoring, organizations significantly reduce the risk of hiring a deepfake candidate.

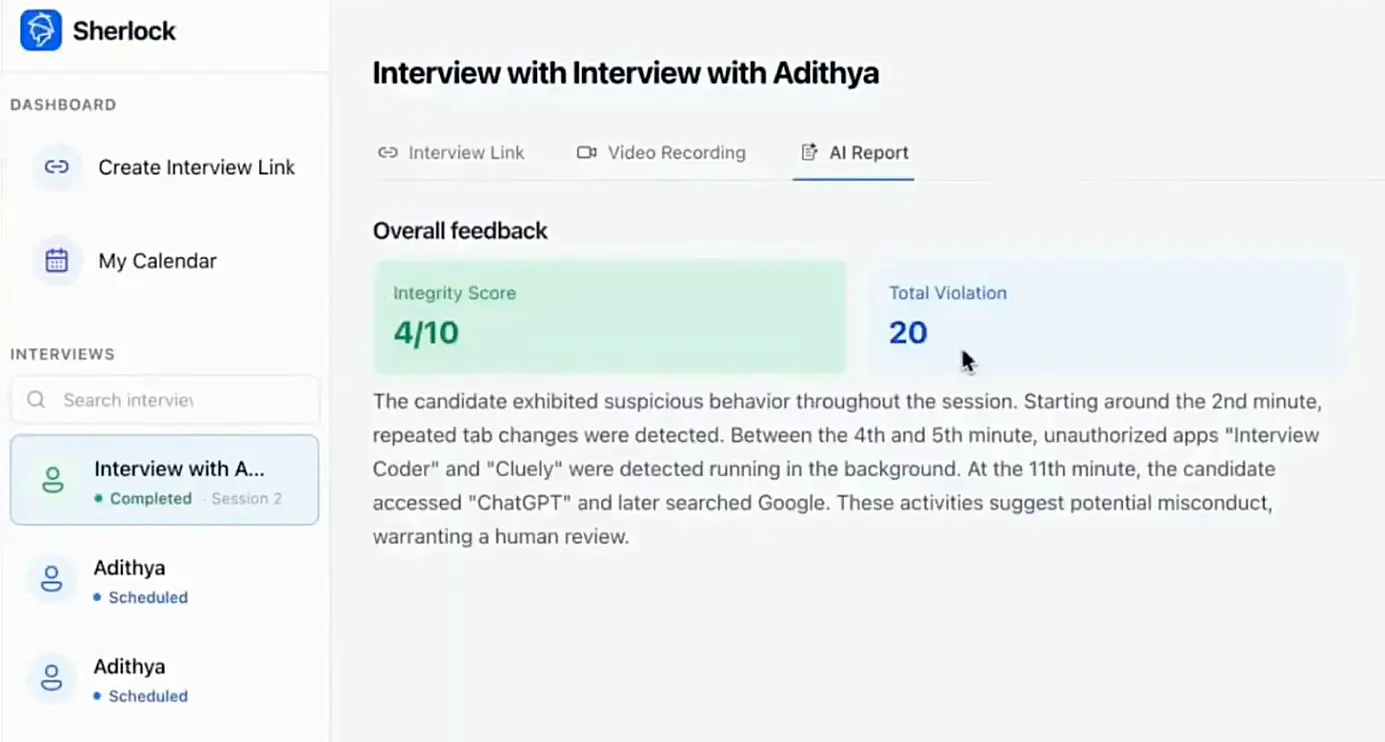

Platforms like Sherlock AI strengthen this process by analyzing behavioral consistency, response structure, timing patterns, and identity signals across interview stages. Instead of relying on visual trust alone, Sherlock AI helps recruiters assess authenticity at scale.

Key Sherlock AI Features That Support These Signals

Behavioral consistency analysis to identify scripted or AI-assisted responses across the interview.

Response structure and reasoning flow detection to surface unnatural explanation patterns.

Timing and interaction monitoring to flag latency and pacing anomalies linked to AI assistance.

Cross-interview identity comparison to detect proxy interviews and impersonation risks.

Contextual risk insights instead of binary decisions to support informed recruiter judgment.

Scalable interview integrity for remote hiring without disrupting candidate experience.
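Sherlock AI's internal methods are not described here, but one generic way to approach timing monitoring is to compare each answer's start delay against the candidate's own baseline. The sketch below assumes latencies (in seconds, from the end of a question to the start of the answer) are measured elsewhere. It uses the modified z-score based on the median absolute deviation (MAD), a robust outlier test, and the 3.5 cutoff is a commonly cited default rather than a tuned value.

```python
from statistics import median


def latency_outliers(latencies: list[float], threshold: float = 3.5) -> list[int]:
    """Return indices of answers whose start delay is unusual for this candidate.

    Modified z-score: 0.6745 * |x - median| / MAD. The median-based statistics
    keep one extreme pause from distorting the baseline it is judged against.
    """
    if len(latencies) < 3:
        return []  # too few answers to establish a baseline
    med = median(latencies)
    mad = median([abs(x - med) for x in latencies])
    if mad == 0:
        return []  # identical delays: no spread to measure against
    return [
        i for i, x in enumerate(latencies)
        if 0.6745 * abs(x - med) / mad > threshold
    ]


latencies = [1.2, 0.9, 1.5, 1.1, 8.4, 1.3, 1.0]
print(latency_outliers(latencies))  # → [4]
```

Here only the 8.4-second pause stands out. Long pauses also happen for innocent reasons, such as a genuinely hard question, so the output is best read as a list of moments worth revisiting, in keeping with the contextual rather than binary approach described above.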

Final Thoughts

The impact of hiring a deepfake candidate goes far beyond a failed interview or bad hire. Once inside an organization, fraudulent hires can gain access to internal systems, source code repositories, customer data, and financial workflows. Cybersecurity investigations show that malicious actors often use fake employees to steal intellectual property, reroute payments, install ransomware, or maintain long-term access for future attacks. In technical and remote-first organizations, this type of breach can result in severe financial losses and long-lasting reputational damage.

Traditional hiring practices were not designed to detect synthetic identities. Resume screening, visual observation, and one-time identity verification are insufficient when attackers can manipulate video, audio, and responses in real time. This is why modern hiring teams must shift toward behavioral verification, reasoning-based interviews, and consistency checks across interview stages. Deepfake candidates struggle most with adaptability, experiential depth, and sustained authenticity over time.

By applying the five detection methods outlined above and using behavioral analysis tools like Sherlock AI, hiring teams can move from surface-level trust to evidence-based interview integrity and protect their organizations from modern hiring threats.