Deepfakes are entering hiring interviews through AI-generated video, voice cloning, and proxy candidates. Learn how to detect and prevent deepfake interviews at scale.

Abhishek Kaushik

Feb 19, 2026

As remote hiring becomes the norm, the rise of deepfake technology is creating a new class of risk that goes beyond simple resume embellishment.

Research shows that 17% of U.S. hiring managers have seen candidates using deepfakes in video interviews, while advisory firms like Gartner project that by 2028, up to 25% of job applicants worldwide could be fake, using AI to impersonate real candidates or fabricate identities.

Deepfakes are no longer science fiction; they’re evolving rapidly and becoming easier to create and harder to detect, undermining the trust that traditional video interviews once provided. In this environment, hiring teams must understand how deepfakes work, why conventional safeguards fall short, and how to protect the interview process from sophisticated AI-driven impersonation.

What Deepfakes Look Like in Modern Interviews

In the context of hiring, deepfakes are AI-generated visual or auditory media that mimic a real person’s identity in an interview setting. This can include:

AI-generated or altered video of a candidate or interviewer

Cloned or synthesized voices that match the visual feed

Hybrid deepfakes where video and audio are both manipulated for added realism

These aren’t cartoonish filters; modern deepfakes can be convincing enough to fool human interviewers in real time.

How Deepfakes Differ from Traditional Impersonation

Traditional impersonation might involve someone pretending to be someone else without technological enhancement. Deepfakes, on the other hand:

Leverage AI to synthesize faces and voices that are hard to distinguish from the real thing

Can be deployed in real time, not just pre-recorded

Blur the line between human and machine participation

That makes them far harder to detect than a simple résumé lie or proxy interview.

Why Remote and Video-First Interviews Are Prime Targets

Remote hiring removes in-person identity cues, such as body language and face-to-face document checks, which gives deepfake attackers an ideal environment.

Deepfakes in interviews shift the risk from accidental misrepresentation to intentional identity fraud. When AI can convincingly simulate a candidate’s face, voice, or behavior, remote hiring processes can no longer rely on surface-level cues to assess authenticity.

Why Traditional Interview Safeguards Fail Against Deepfakes

Seeing a face on camera and hearing a voice are no longer guarantees of authenticity, and this has profound implications for remote hiring:

1. “Camera On” and Visual Familiarity Aren’t Reliable Proof

In the early days of remote interviews, requiring candidates to turn on their webcams felt like a strong safeguard.

Unfortunately, deepfakes can mimic facial expressions, speech, and natural mannerisms convincingly enough to fool human interviewers, especially in video calls that tend to be short and high-context.

2. Limits of Video Calls and Identity Checks

Traditional video-based identity verification assumes what’s on screen is real. But attackers can:

Replace faces with synthetic ones trained on publicly available media

Clone voices that match lip movements with high precision

Use stolen or fabricated identities layered with AI generation

Deepfakes can look and sound real even when generated from minimal source material, and remote platforms don’t inherently verify who is behind the camera.

3. Human Intuition Isn’t Enough

Deepfakes can maintain eye contact, produce appropriate micro-expressions, and even respond to dynamic cues, all without revealing obvious signs of fakery unless actively analyzed.

Experts note deepfakes don’t need to be perfect; they just need to be convincing enough to pass initial trust checks.

4. One-Time Authentication Doesn’t Provide Ongoing Assurance

Traditional authentication, like checking a government ID or running a background screen before interviews, establishes identity once and assumes continuity thereafter.

But deepfakes can be introduced after those checks, or interview streams can be hijacked mid-conversation with synthetic media.

Modern fraud schemes show that the person or persona seen during the interview may not be the same one verified earlier in the process.

5. Deepfakes + AI Answer Assistance Amplify Each Other

Deepfakes alone can fake identity. Combined with AI tools that generate or assist responses in real time, they can also fake competence. This creates two layers of deception:

Identity deception (fake face/voice)

Performance deception (AI-assisted responses)

When both are present, traditional visual cues and reliance on interview content become even less trustworthy.

Visual familiarity, once a cornerstone of remote interviews, no longer guarantees the candidate you think you’re assessing is actually real or present.

Detecting and Preventing Deepfake Interviews

Deepfakes often appear convincing at first glance, but they struggle under sustained, dynamic interaction. Common signals include:

Lip-sync mismatches and unnatural facial motion: Subtle delays between speech and mouth movement, rigid facial expressions, limited blinking, or oddly smooth skin textures can indicate synthetic face overlays.

Audio-video desynchronization: Even advanced deepfakes may show timing drift between voice and visuals, inconsistent head movement during speech, or tonal changes that don’t match facial emotion.

Behavioral inconsistencies under follow-up: When interviewers probe deeper, deepfake setups often falter; responses become generic, emotionally flat, delayed, or inconsistent with earlier answers, especially when asked to reason aloud or reflect on lived experiences.

These signals are rarely obvious in isolation, but patterns emerge when interviews are observed holistically.
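
One way to make the lip-sync and desynchronization signals above concrete is to estimate the time offset between mouth movement and speech energy. The Python sketch below is illustrative only. It assumes that a per-frame mouth-opening measurement and a per-frame audio energy value have already been extracted by some upstream tool, and the function name `estimate_av_lag` and its parameters are hypothetical rather than part of any real product or library.

```python
from statistics import mean


def estimate_av_lag(mouth_open, audio_energy, max_lag=10):
    """Estimate how many frames the mouth motion trails the audio.

    mouth_open and audio_energy are assumed inputs: one number per video
    frame, produced by an upstream face tracker and audio analyzer.
    A positive result means the mouth moves later than the sound.
    """
    n = min(len(mouth_open), len(audio_energy))
    if n <= max_lag:
        raise ValueError("need more frames than max_lag")

    # Center both series so the comparison is about shape, not level.
    mouth_mean = mean(mouth_open[:n])
    audio_mean = mean(audio_energy[:n])
    mouth = [v - mouth_mean for v in mouth_open[:n]]
    audio = [v - audio_mean for v in audio_energy[:n]]

    def score(lag):
        # Pair audio frame i with mouth frame i + lag and average the products.
        pairs = [(audio[i], mouth[i + lag]) for i in range(n) if 0 <= i + lag < n]
        return sum(x * y for x, y in pairs) / len(pairs)

    # The lag with the highest average product is the best alignment.
    return max(range(-max_lag, max_lag + 1), key=score)
```

A lag that stays near zero is what a live speaker would normally produce. A large or drifting lag is only a prompt to look closer, since network jitter and wireless headsets can cause similar offsets.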

Best Practices for Prevention

To defend against deepfake interviews, organizations need to rethink how interviews are structured and evaluated:

1. Continuous monitoring during interviews

Deepfakes may appear only during certain segments of an interview.

Ongoing observation rather than a quick identity check at the start helps surface anomalies that emerge under pressure or extended interaction.
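
As a rough illustration of ongoing observation, the sketch below keeps a rolling average of per-segment anomaly scores and raises a flag only when the recent average crosses a threshold. The class name `RollingRiskMonitor`, the window size, and the threshold are all assumptions chosen for the example, and the segment scores are presumed to come from some separate detector.

```python
from collections import deque


class RollingRiskMonitor:
    """Track recent segment scores (0.0 to 1.0) and flag sustained risk."""

    def __init__(self, window=5, threshold=0.6):
        # deque with maxlen silently drops the oldest score when full.
        self.scores = deque(maxlen=window)
        self.threshold = threshold

    def update(self, segment_score):
        """Record one segment score and return True if the window looks risky."""
        self.scores.append(segment_score)
        average = sum(self.scores) / len(self.scores)
        return average >= self.threshold


monitor = RollingRiskMonitor(window=3, threshold=0.6)
flags = [monitor.update(s) for s in [0.1, 0.2, 0.1, 0.9, 0.9, 0.8]]
```

Averaging over a window means a single noisy segment does not trigger a flag, while an anomaly that persists across several segments does.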

2. Behavioral and contextual analysis, not just visuals

Relying solely on what the camera shows is no longer enough. Interviewers should pay attention to:

Response timing and reasoning depth

Emotional congruence between answers and expressions

Consistency across technical, behavioral, and situational questions

Authentic candidates tend to show natural variation; synthetic behavior often looks polished but shallow.
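
Response timing is one of the easier items above to quantify. The following sketch summarizes how long a candidate takes to start answering each question and checks whether the timing is unusually uniform. The function names and the `min_cv` cutoff are hypothetical, and the cutoff is an arbitrary placeholder, not a validated threshold.

```python
from statistics import mean, pstdev


def latency_profile(latencies_s):
    """Summarize response latencies (seconds) for one interview."""
    average = mean(latencies_s)
    spread = pstdev(latencies_s)
    # Coefficient of variation: spread relative to the average latency.
    cv = spread / average if average else 0.0
    return {"mean_s": average, "stdev_s": spread, "cv": cv}


def looks_uniform(latencies_s, min_cv=0.15):
    """Return True when timing varies less than the assumed cutoff.

    Requires at least five answers so a short interview is never flagged.
    """
    if len(latencies_s) < 5:
        return False
    return latency_profile(latencies_s)["cv"] < min_cv
```

Uniform timing on its own proves nothing, since some people are simply consistent. It is best treated as one weak input alongside reasoning depth and emotional congruence.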

3. Designing interviews that expose synthetic behavior

Deepfakes perform best in predictable, scripted environments. Interviews that include:

Follow-up questions based on prior answers

Real-time problem solving

Requests for personal reflection or trade-off reasoning

make it harder for synthetic systems to keep up convincingly.

While interview design and interviewer vigilance help, manual detection doesn’t scale.

Deepfake technology is improving faster than human perception, and high-volume hiring leaves little room for slow, subjective judgment.

This is driving the need for interview integrity systems that continuously analyze behavioral, audio, and visual signals in real time, surfacing risks humans might miss and restoring confidence in remote hiring.

Sherlock AI: A Purpose-Built Solution to Detect and Prevent Deepfake Interviews

Sherlock AI is a purpose-built interview integrity system designed to detect deepfakes, proxy participation, and AI-generated behavior as interviews unfold, giving hiring teams the confidence to evaluate real people rather than synthetic interactions.

Multimodal Detection Built for Interview Fraud

Sherlock AI analyzes multiple signals simultaneously, instead of relying on a single indicator like video or audio alone.

It evaluates:

Video signals

Unnatural facial motion

Lip-sync inconsistencies

Rigid or overly smooth expressions often seen in deepfakes

Audio signals

Synthetic or transformed speech patterns

Tonal inconsistency across questions

Voice characteristics that shift mid-interview

Behavioral signals

Delayed responses under follow-ups

Script-like answers typical of AI copilots

Inconsistencies between reasoning and prior statements

This multimodal approach makes it harder for deepfakes, voice clones, or AI-assisted proxies to pass unnoticed.
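
To show why combining modalities helps, here is a minimal sketch of one common fusion technique, a noisy-OR combination of per-modality scores. It is not a description of how Sherlock AI works internally. The function name `fuse_signals`, the modality names, and the weights are assumptions made for illustration.

```python
def fuse_signals(scores, weights=None):
    """Combine per-modality risk scores (0.0 to 1.0) with a noisy-OR.

    Each modality is treated as an independent chance of catching fraud,
    which is a simplifying assumption. Weights (0.0 to 1.0) scale how much
    a modality is trusted; missing weights default to 1.0.
    """
    weights = weights or {}
    no_risk = 1.0
    for name, score in scores.items():
        score = min(max(score, 0.0), 1.0)
        weight = min(max(weights.get(name, 1.0), 0.0), 1.0)
        # Probability that this modality does NOT indicate fraud.
        no_risk *= 1.0 - weight * score
    return 1.0 - no_risk


combined = fuse_signals({"video": 0.5, "audio": 0.5})
```

With this rule, several moderate signals add up to a higher combined score than any one of them alone, which mirrors the point that a fake which passes one check is less likely to pass all of them.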

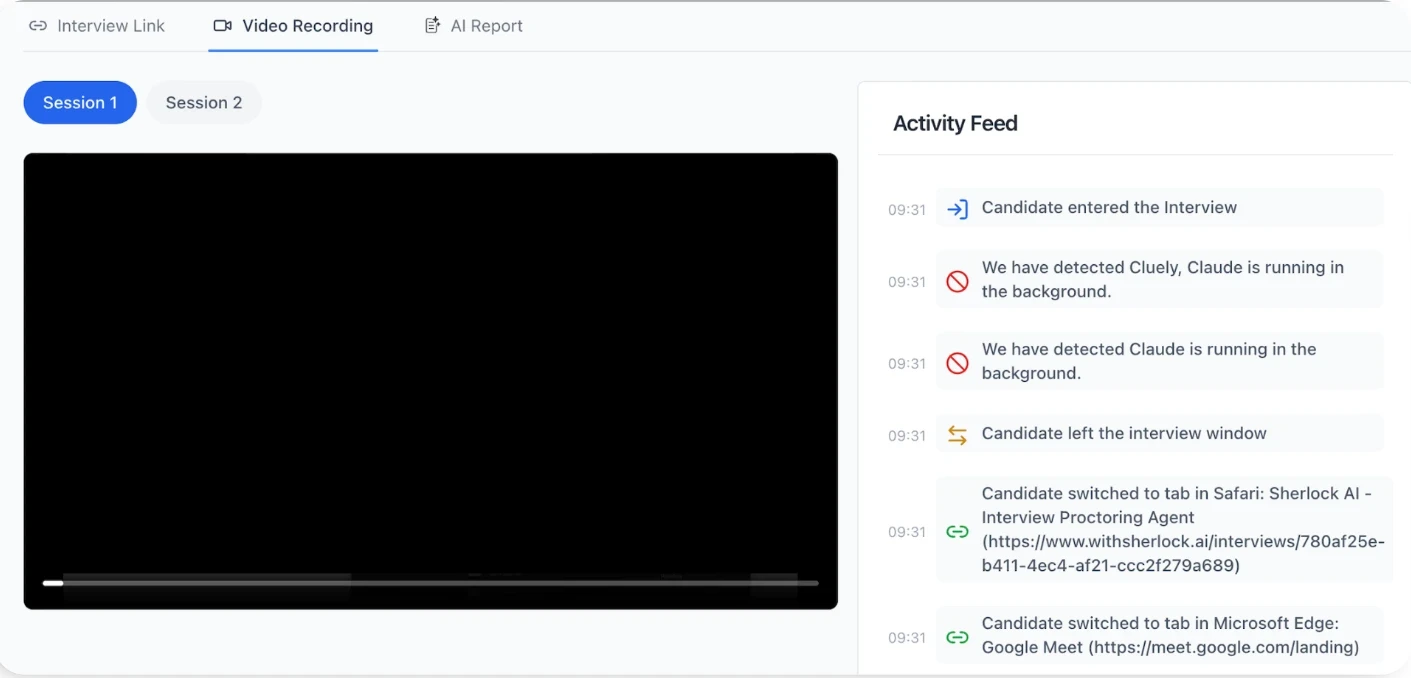

Real-Time Risk Signals During Live Interviews

Instead of flagging issues after the interview ends, Sherlock AI works as the interview happens.

It can surface:

Indicators of deepfake video or voice manipulation

Signs of real-time AI answer copilots feeding responses

Patterns suggesting proxy candidates assisted by AI

Behavioral shifts when candidates move from memorized to dynamic questions

This allows interviewers to:

Adapt questioning on the fly

Probe deeper where risk signals appear

Maintain interview flow without disrupting the candidate experience

Behavioral + Contextual Intelligence (Not Black-Box Scores)

Sherlock AI doesn’t reduce candidates to opaque pass/fail labels.

Instead, it provides:

Explainable risk insights

Context around why a response or behavior appears synthetic

Visibility into sudden changes in response quality, tone, or timing

This keeps decision-making transparent and firmly in human hands while giving interviewers better signals than intuition alone.

Continuous Monitoring Across Interview Stages

Deepfake and AI-assisted fraud doesn’t always appear at the start.

Sherlock AI:

Monitors continuously, not just during identity checks

Cross-checks behavioral and voice consistency across screening calls, technical rounds, and behavioral interviews

Detects when the “same candidate” behaves differently across stages

This closes the gap left by one-time verification methods.
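
A cross-stage consistency check can be sketched as a comparison of voice embeddings captured at each stage. The example below is a generic illustration, not Sherlock AI’s method. It assumes some speaker-embedding model has already turned each stage’s audio into a numeric vector, and the function names and the `min_similarity` cutoff are hypothetical.

```python
from itertools import combinations
from math import sqrt


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = sqrt(sum(a * a for a in u))
    norm_v = sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def inconsistent_stages(stage_embeddings, min_similarity=0.75):
    """Return stage pairs whose voice embeddings look too different.

    stage_embeddings maps a stage name to an embedding vector that is
    assumed to come from an upstream speaker-embedding model.
    """
    flagged = []
    for (s1, e1), (s2, e2) in combinations(stage_embeddings.items(), 2):
        similarity = cosine(e1, e2)
        if similarity < min_similarity:
            flagged.append((s1, s2, round(similarity, 3)))
    return flagged


stages = {
    "screening": [1.0, 0.0, 0.0],
    "technical": [0.9, 0.1, 0.0],
    "behavioral": [0.0, 1.0, 0.0],
}
mismatches = inconsistent_stages(stages)
```

In this toy data the behavioral round stands apart from the other two, which is the kind of pattern that would justify a manual identity re-check.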

Built for Scale, Compliance, and Auditability

Beyond detection, Sherlock AI supports hiring teams operationally by:

Capturing structured interview insights automatically

Creating audit trails for high-risk interviews

Supporting compliance and post-interview reviews

Scaling across high-volume remote hiring without added interviewer burden
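
For readers wondering what a tamper-evident audit trail might look like in practice, the sketch below chains each logged event to the previous one with a SHA-256 hash. This is a generic technique shown under assumptions, not a description of Sherlock AI’s storage format. The event fields and the function names `append_event` and `verify_log` are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(prev_hash, event):
    # sort_keys makes the serialized form stable for the same event.
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_event(log, event):
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(prev_hash, event)})
    return log


def verify_log(log):
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_event(audit_log, {"interview_id": "demo-1", "signal": "lip_sync", "score": 0.8})
append_event(audit_log, {"interview_id": "demo-1", "signal": "voice_shift", "score": 0.6})
```

Because every hash depends on the entry before it, editing an earlier record invalidates everything after it, which is what makes such a log useful for later review.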

Deepfakes, AI copilots, and proxy candidates have become systemic risks in remote hiring.

Sherlock AI is purpose-built to defend interviews against these threats by combining behavioral intelligence, multimodal detection, and real-time monitoring, restoring trust in who you’re actually evaluating during an interview.

Conclusion

Deepfakes, AI answer copilots, and proxy candidates are fundamentally changing what interview fraud looks like.

Traditional safeguards, like camera-on policies, human intuition, and one-time identity checks, were never designed for this level of sophistication. As deepfake technology improves, relying on visual familiarity or gut feel creates false confidence and exposes organizations to bad hires, security risks, and long-term trust issues.

To protect hiring integrity at scale, interview processes must evolve. That means moving beyond static verification toward continuous, AI-native monitoring that understands behavioral, audio, and visual signals together.