
Campus interviews are increasingly vulnerable to AI-assisted cheating. Learn why traditional hiring signals fail and how teams can detect real skill early.

Abhishek Kaushik

Feb 10, 2026

In the age of generative AI, campus recruitment is facing an unexpected crisis. Surveys from professional communities reveal that around 20% of jobseekers admit to secretly using AI during interviews, and more than half of respondents say doing so has become “the new norm.”

On college campuses, the trend isn’t confined to interviews alone. Educators report sharp rises in AI misuse during academic work: 78% of faculty say they have seen more cheating since AI tools became widely available, alongside concerns that reliance on AI is undermining both critical thinking and ethical standards.

These developments paint a worrying picture: as generative AI becomes more accessible and sophisticated, traditional campus interview processes are increasingly vulnerable to misuse. This raises serious questions about fairness, skills validation, and the future of early-career hiring.

How AI Is Quietly Entering Campus Interviews

Campus interviews haven’t been “hacked” overnight; they’ve been quietly adapted. While interview formats stayed largely the same, candidates started layering AI into the process in ways that are hard to notice and even harder to prove.

AI assistance now shows up at multiple points in the campus interview journey:

Hidden AI copilots during virtual rounds

Students run LLMs in the background during coding tests, case interviews, and even HR conversations, using them for real-time hints, structured answers, or cleaner explanations while appearing fully engaged on screen.

Secondary devices and discreet audio assistance

Phones, tablets, or laptops placed just outside the camera frame, along with subtle earpieces, let candidates receive AI-generated guidance without breaking eye contact or interview flow.

Shared, real-time prep channels

Group chats on WhatsApp, Discord, or Telegram circulate AI-generated answers, frameworks, and follow-ups as campus drives unfold. Once one candidate encounters a question, a refined response quickly reaches dozens of others.

AI-polished responses mistaken for “high potential”

Clear structure, confident delivery, and textbook-perfect answers match exactly what interviewers expect from strong fresh graduates, making AI-assisted performance difficult to distinguish from genuine capability.

The challenge is misalignment. Campus interviews were designed to spot enthusiasm and fundamentals, not to detect external assistance. And that design gap is where AI is quietly reshaping outcomes.


Why Traditional Campus Hiring Signals No Longer Work

Campus hiring was built for scale. Thousands of candidates, limited time, and a need to make quick decisions pushed companies toward fast, repeatable signals. That model worked until AI became better at producing those signals than the candidates themselves.

Several structural factors make colleges especially exposed:

Short, high-volume interviews with predictable questions

Campus rounds prioritize consistency and speed, which leads to reused questions and familiar formats. These are exactly the conditions where AI thrives, recognizing patterns and producing “ideal” answers on demand.

Webcam-only assessments and timed tests

Online aptitude tests, coding screens, and video interviews assume the candidate is working alone and thinking independently. In reality, AI can generate solutions, explanations, and even step-by-step reasoning faster than most fresh graduates can under time pressure.

Interviewers optimizing for throughput, not verification

When interviewers are expected to evaluate dozens of candidates in a day, the focus naturally shifts to surface-level clarity, confidence, and correctness rather than probing how answers are formed.

A widening gap between interview performance and job readiness

Fresh graduates who perform exceptionally well in AI-assisted interviews often struggle once there’s no script and no instant guidance, and sustained problem-solving is required.

The result is a signal collapse. When everyone sounds articulate, structured, and confident, those traits stop differentiating real ability. In campus hiring today, a polished answer on its own no longer tells you much.

The Downstream Risk: Bad Early-Career Hires at Scale

The real danger of AI cheating in campus interviews isn’t the interview itself; it’s what happens after. When assisted performance drives hiring decisions, the cost shows up slowly, across teams, and at scale.

AI-assisted candidates struggle in real work environments

Once hired, these candidates face open-ended problems, ambiguous requirements, and long execution cycles: conditions where real understanding matters and AI can’t quietly fill the gaps. The result is slower ramp-up, frequent blockers, and inconsistent output.

Rising training costs and manager overhead

Teams compensate by extending onboarding, increasing shadowing, and providing constant guidance. Managers and senior engineers spend disproportionate time correcting basics instead of building or mentoring high-potential talent.

Early attrition and silent performance churn

Some hires drop out quickly when expectations don’t match capability. Others stay but underperform, creating a long tail of “present but unproductive” employees that quietly drain time and morale.

Low-capability hires embedded across teams

Campus hiring happens in batches. When AI-inflated performance slips through, the issue isn’t one bad hire; it’s dozens placed across critical teams, multiplying the impact and making remediation complex.

Early-career roles are the hardest to course-correct

Foundational habits, problem-solving approaches, and ownership mindset form in the first few years. When those foundations are weak, later interventions become expensive and often ineffective.

Campus hiring mistakes don’t trigger immediate alarms. They accumulate in missed deadlines, stretched managers, and teams carrying weight they can’t quite explain. That’s what makes them dangerous.

Sherlock AI: Restoring Trust in Campus Interviews

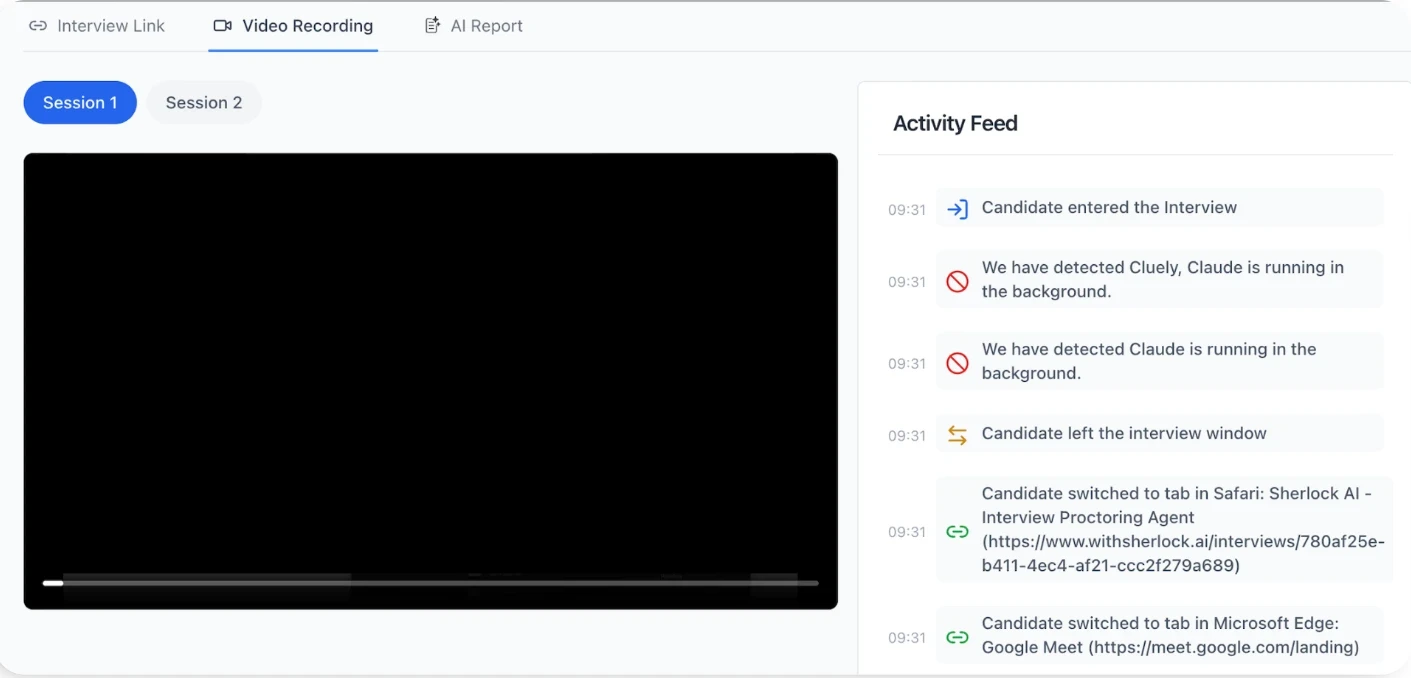

Sherlock AI works as a silent interview integrity layer during live Zoom, Teams, or Google Meet interviews.

It doesn’t interrupt candidates or change the interview format. Instead, it focuses on what traditional campus hiring misses:

Detecting real-time external assistance

Sherlock AI identifies signals of hidden AI copilots, second-device usage, scripted responses, and external prompting: patterns that are very hard to catch through webcam monitoring alone.

Spotting impersonation and proxy behavior

In high-volume campus drives, identity substitution and proxy interviews are growing risks. Sherlock AI continuously verifies that the person answering is the same person being evaluated.

Separating polished answers from genuine skill

By analyzing response timing, behavioral cues, and consistency across questions, Sherlock AI helps recruiters see whether answers are being generated, coached, or truly understood.

Designed for scale, not suspicion

Campus interviews move fast. Sherlock AI is built to run silently in the background, giving hiring teams post-interview signals without slowing throughput or burdening interviewers.

Most importantly, Sherlock AI doesn’t assume candidates are cheating; it assumes signals need validation. As AI continues to flatten interview performance, campus hiring needs systems that can tell the difference between potential and assistance.

When everyone looks perfect, trust has to be engineered, not guessed.

Conclusion

Campus interviews can no longer rely on confidence and clarity alone. As AI makes assisted performance increasingly hard to distinguish from real skill, early-career hiring decisions are more and more often based on distorted signals.

When interview performance can be manufactured, capability gaps only surface after teams are built and work begins.

That’s why interviews now need verification, not just evaluation. Sherlock AI runs silently inside live interview calls to detect external assistance and impersonation, helping hiring teams identify genuine potential before mistakes compound.