
Behavioral interview answers are getting harder to trust. Explore the signs of AI-generated responses and how technology like Sherlock AI helps protect hiring decisions.

Abhishek Kaushik

Feb 17, 2026

Behavioral interviews are built on a simple idea. Past behavior predicts future performance. When candidates describe real experiences, hiring teams learn how they think, act under pressure, and work with others.

But that foundation is starting to crack.

AI tools can now generate polished, emotionally intelligent, and perfectly structured answers to almost any behavioral question in seconds. Candidates are no longer just preparing stories. Some are delivering responses that are partially or fully AI-generated in real time. In broader recruitment surveys, 72% of candidates admit to lying on their resumes, and 38% admit to lying during interviews.

The challenge for hiring teams is no longer just evaluating answers. It is figuring out whether the answer reflects real experience or artificial assistance.

Why AI-Generated Behavioral Answers Are Hard to Spot

Unlike technical cheating, behavioral interview fraud does not look suspicious on the surface.

AI-generated responses are often:

Well structured using popular frameworks like STAR

Emotionally aware and professionally worded

Free of hesitation, filler, or uncertainty

Tailored exactly to the question being asked

In short, they sound like ideal interview answers. That is exactly the problem.

Traditional red flags, such as looking off-screen or taking long pauses, no longer reliably apply. Candidates can receive subtle AI assistance that helps them refine, rephrase, or even generate stories while maintaining natural eye contact and conversation flow.

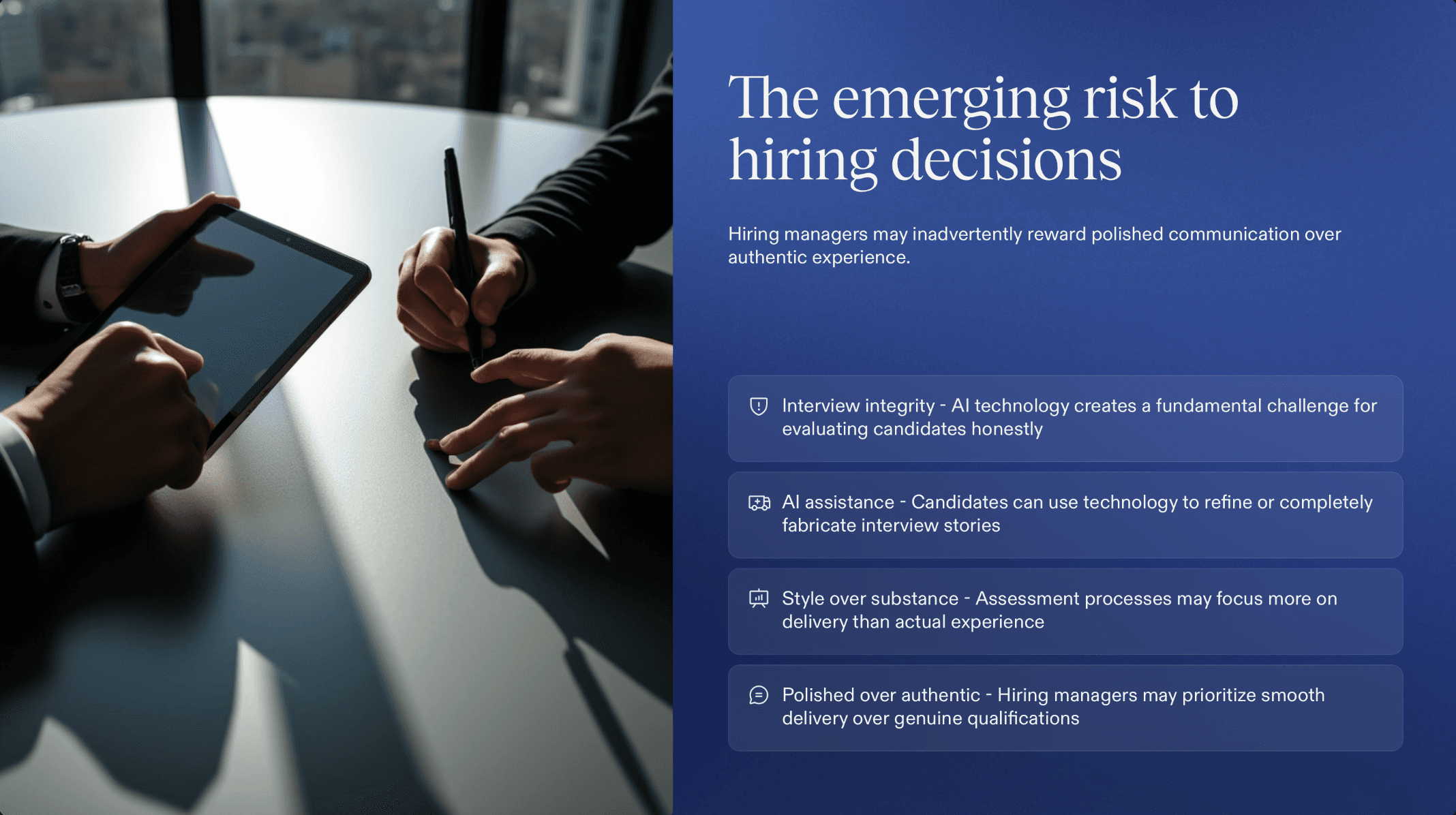

This creates a new kind of risk. Hiring decisions may be based on communication quality rather than authentic experience.

Key Signs a Behavioral Answer May Be AI-Generated

No single signal proves AI involvement. However, certain patterns appear more often when answers are generated or heavily assisted by AI.

1. Answers Sound Too Polished and Generic

Real experiences tend to be messy. They include small details, imperfect decisions, and emotional nuance.

AI-generated answers often sound:

Overly structured and textbook perfect

Lacking specific names, dates, tools, or metrics

Universally applicable to almost any role or company

If a story could easily fit into a leadership seminar slide deck, it may not come from lived experience.

2. Lack of Sensory or Contextual Detail

People who went through an experience usually remember small, human details. These details are difficult for AI to invent convincingly without prompts.

Watch for answers that skip over:

Team dynamics or personalities

Environmental context such as deadlines, constraints, or pressure

What the candidate was thinking or feeling in the moment

When asked follow-up questions, candidates using AI assistance may struggle to go beyond the initial polished version.

3. Inconsistent Depth Across Questions

A strong candidate typically shows a consistent level of depth and reflection across different questions.

AI-assisted candidates may show a different pattern:

Extremely strong, structured answers to common questions

Vague or shallow responses to unexpected follow-ups

Difficulty expanding when the interviewer changes direction

This happens because AI works best with predictable prompts, not spontaneous conversational turns.

4. Delayed but Perfectly Formed Responses

A short pause to think is normal. But repeated pauses followed by highly refined, multi-part answers can be a signal of real-time AI assistance.

Look for patterns such as:

Consistent delays before complex answers

Responses that sound edited rather than spoken

Sentences that feel written rather than conversational

Human speech usually includes minor corrections, informal phrasing, and thinking out loud. AI-assisted answers often sound like final drafts.
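For teams that keep timestamped interview transcripts, the pattern above can be roughly approximated in code. The following is a minimal Python sketch, not a description of how any particular product works. The filler-word list, the function names, and every threshold are illustrative assumptions, and a flagged answer is only a prompt for a follow-up question, never proof of AI use.

```python
import re

# Hypothetical filler-word list; a real one would need tuning per language and speaker.
FILLERS = {"um", "uh", "er", "erm", "hmm"}


def disfluency_rate(answer: str) -> float:
    """Return the share of words in an answer that are filler words."""
    words = re.findall(r"[a-z']+", answer.lower())
    if not words:
        return 0.0
    return sum(w in FILLERS for w in words) / len(words)


def flag_delayed_polished(turns, min_delay=4.0, min_words=60, max_disfluency=0.01):
    """Return indices of answers that start late, run long, and contain almost no fillers.

    `turns` is a list of (delay_seconds, answer_text) pairs, where the delay is
    the gap between the end of the question and the start of the answer.
    All thresholds are assumptions for illustration, not validated cut-offs.
    """
    flagged = []
    for i, (delay, text) in enumerate(turns):
        long_enough = len(text.split()) >= min_words
        too_clean = disfluency_rate(text) <= max_disfluency
        if delay >= min_delay and long_enough and too_clean:
            flagged.append(i)
    return flagged
```

A single flagged answer means little on its own, since many well-prepared candidates speak cleanly. The sketch is only useful for spotting a repeated pattern across an interview, which the interviewer can then probe with the follow-up techniques described later in this article.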

5. Overuse of Framework Language

Frameworks like STAR are useful. However, real candidates rarely label their thinking so cleanly in natural conversation.

AI-generated answers may feel like they are ticking boxes:

Clear Situation, Task, Action, Result sequence every time

Repeated leadership buzzwords

Balanced but formulaic reflections

When every answer follows the same polished template, authenticity may be missing.


How Interviewers Can Test for Authenticity

Instead of trying to catch candidates off guard, the goal should be to gently pressure test whether the story is real.

Here are techniques that make AI assistance harder to rely on.

1. Ask for Unexpected Detail

Follow up with questions that require memory, not structure.

Examples:

What was the mood in the room when that happened?

Who pushed back the most, and why?

What did you do in the first 10 minutes after you realized there was a problem?

These details are easier to recall from real experience than to generate on the fly.

2. Change the Timeline

Ask candidates to retell a part of the story in a different order.

For example:

You mentioned the result. Now walk me through only the actions you personally took, step by step.

What happened right before that decision?

AI-generated narratives can break down when forced out of their original structure.

3. Focus on Emotions and Tradeoffs

AI can describe emotions, but it often does so in a general way.

Try questions like:

What part of that situation frustrated you the most?

What option did you consider but decide against?

Authentic answers usually include hesitation, reflection, and imperfect reasoning.

Real Experience vs AI-Generated Behavioral Answers

This table helps recruiters quickly understand the difference in patterns.

| Dimension | Real Candidate Experience | AI-Generated or AI-Assisted Answer |
|---|---|---|
| Story detail | Includes specific people, tools, constraints, and context | Often high level, broadly applicable, and less grounded in specifics |
| Emotional reflection | Shows mixed feelings, uncertainty, or imperfect decisions | Emotionally aware but often polished and generic |
| Delivery style | Natural pauses, small corrections, conversational tone | Smooth, structured, and sounds pre-edited |
| Follow-up depth | Can expand with more detail when asked | Struggles when pushed beyond the original version |
| Structure | May be loosely structured or slightly messy | Consistently follows ideal frameworks like STAR |

AI-generated behavioral answers do not just make candidates sound better. They distort hiring signals.

Recruiters may unintentionally reward communication polish over actual competence. Over time, this increases the risk of mis-hires, especially in roles where judgment, ownership, and real-world problem solving matter most.

How Sherlock AI Detects AI-Assisted Behavioral Answers

As behavioral interviews become a target for real-time AI assistance, hiring teams need more than intuition. Sherlock AI is built specifically to bring visibility into what is happening behind the screen during remote interviews.

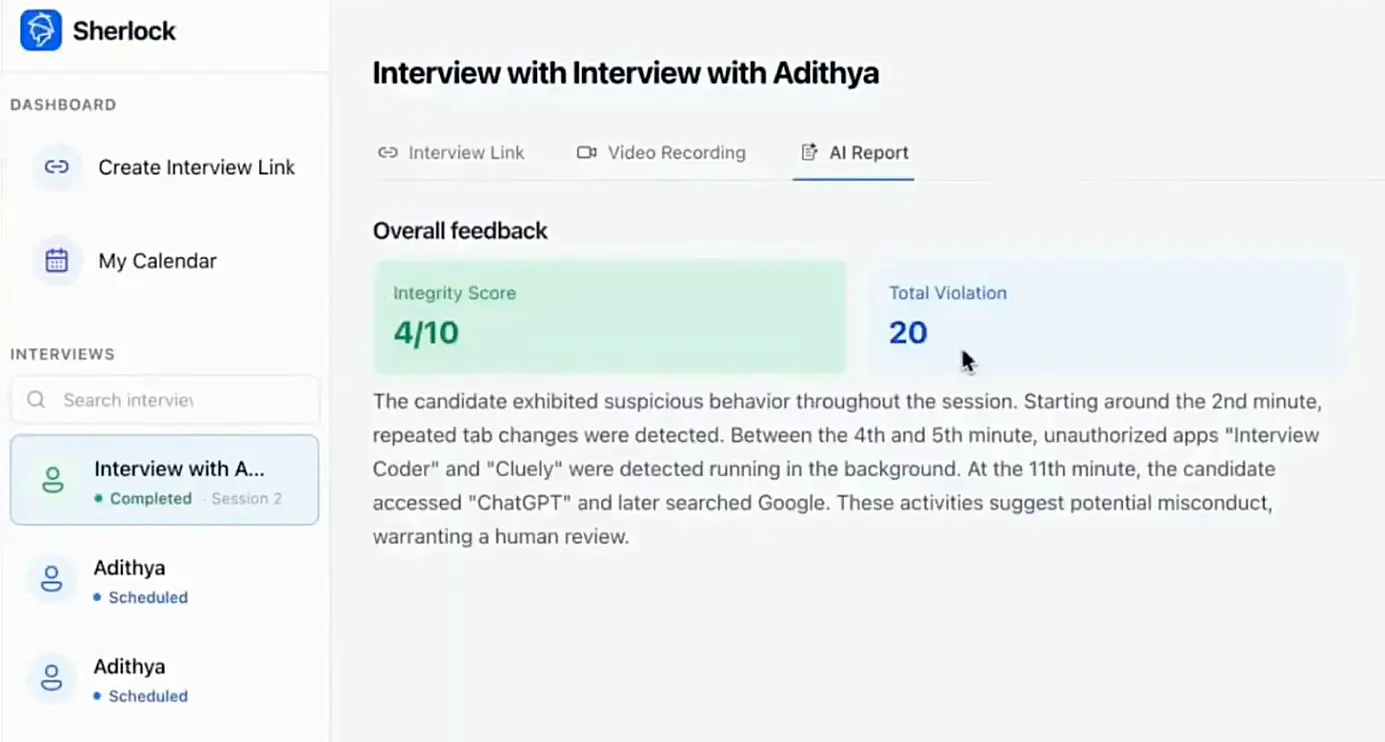

Sherlock AI focuses on identifying patterns that are difficult for humans to consistently track while conducting an interview.

1. Detection of Real-Time AI Assistance Patterns

AI-assisted answers often have subtle delivery patterns that differ from natural recall. Sherlock AI analyzes behavioral signals such as response timing, interaction flow, and answer consistency across the interview.

This helps highlight moments where answers may be influenced by external tools rather than spontaneous memory.

2. Answer Consistency Analysis

When candidates rely on AI, their strongest answers tend to cluster around predictable, commonly asked questions. Depth and realism may drop when the conversation becomes less structured.

Sherlock AI helps surface inconsistencies in communication patterns, helping recruiters spot when performance varies in a way that suggests external support.

3. Behavioral Response Authenticity Signals

Sherlock AI is designed to support, not replace, interviewer judgment. It provides signals that help teams evaluate whether responses show natural recall, emotional realism, and contextual grounding.

This gives recruiters more confidence when deciding whether an answer reflects lived experience or generated content.

4. Scalable Protection for Remote Hiring

Manual detection does not scale. As interview volumes grow, it becomes harder for individual interviewers to notice subtle behavioral shifts.

Sherlock AI works quietly in the background, giving organizations a consistent layer of protection across all remote interviews, not just high-risk roles.

The result is a fairer process for genuine candidates and reduced hiring risk for employers.

The Future of Behavioral Interviews

In a world where AI can help candidates sound better than they really are, authenticity becomes the most valuable signal of all. Hiring teams that adapt their interview techniques and use the right detection tools will be better equipped to identify real capability, not just well-generated answers.

Tools like Sherlock AI help restore that visibility, giving teams the confidence to trust what they are hearing in remote interviews.

The goal is not to punish preparation. It is to ensure that the person you hire is the person who actually showed up in the interview.