Discover why traditional interview monitoring methods fall short, how candidates exploit AI tools, where manual observation leaves gaps, and how solutions like Sherlock AI help protect hiring integrity.

Abhishek Kaushik

Feb 5, 2026

Traditional interview monitoring faces a major challenge from the growing prevalence of AI‑assisted candidate behavior. Survey data shows that 51 percent of hiring managers say AI has made it harder to trust what they see and hear during virtual interviews, and nearly 60 percent have suspected candidates of using AI or other deceptive tactics at some stage of the hiring process.

Meanwhile, the financial consequences of flawed hiring decisions are significant. Industry benchmarks show that a bad hire can cost a company up to 30 percent of that employee’s first‑year salary, with an average loss of $17,000 per bad hire.

With generative AI enabling subtle, hard‑to‑detect assistance, traditional monitoring methods like basic webcam proctoring and manual observation are falling short.

The Rise of AI in Interviews: How Candidates Exploit Technology

Artificial intelligence is now built into everyday tools, and it has made its way deep into hiring processes. AI is no longer something candidates use only outside the interview room (e.g., to prepare between rounds); it’s increasingly deployed during live interviews to generate answers, write code, or even disguise a candidate’s identity.

1. Real‑time Answer Generation

Tools like ChatGPT and specialized interview assistants can parse questions from the interviewer, generate polished responses instantly, and feed them back to the candidate with minimal delay.

Some platforms and AI assistants run invisibly in the background or deliver answers through audio prompts, making them extremely hard to spot. In a widely shared TikTok clip, a young woman propped her smartphone beside her laptop during a live video interview so she could read AI‑generated responses to the recruiter’s questions, effectively outsourcing her answers in real time.

Amazon has updated internal hiring guidelines to explicitly ban the use of generative AI during interviews, signaling that this trend has become widespread enough to warrant corporate policy changes.

2. AI Coding Assistants in Technical Interviews

Candidates exploit AI copilots and interview‑specific assistants to produce correct solutions for technical problems in real time.

A student reportedly used an AI interview assistant to secure multiple internship offers from top tech firms before being expelled from his university for ethical violations.

Recruiters report instances where code appears flawless on screen, but the candidate cannot explain how it was produced, a telltale sign of AI‑generated output.

3. Deepfake and Identity Manipulation

Deepfake technologies can create convincing faces or voice tracks to impersonate someone in a remote interview.

Surveys indicate about 17 percent of hiring managers have encountered deepfake attempts in interviews, where voice or video was manipulated to misrepresent a candidate’s identity.

Traditional methods like webcam proctoring and live questioning simply weren’t built to spot these invisible, adaptive AI signals, making them increasingly ineffective at guaranteeing interview integrity.

Why Traditional Monitoring Falls Short

As AI tools become more sophisticated, conventional interview monitoring methods are struggling to keep up. Webcam proctoring, live questioning, and manual observation were designed to detect simple cheating behaviors, but modern AI-assisted techniques often bypass these safeguards completely.

1. Manual Observation Is Insufficient

Interviewers typically look for cues like hesitation, inconsistent answers, or unusual eye movement.

AI-generated responses are instant, polished, and contextually correct, often leaving no obvious behavioral clues.

Example: In coding interviews, candidates may produce flawless AI-assisted solutions but fail to explain their reasoning, a discrepancy too subtle for traditional evaluation to catch.

2. Webcam & Screen Monitoring Miss Hidden AI

Proctoring software generally monitors the candidate’s main screen and webcam.

AI tools can run on secondary devices, hidden browser tabs, or even phones placed just out of view.

3. AI Voice Synthesis Evades Detection

Advanced AI can convert text responses into natural-sounding speech in real time.

Candidates can read AI-generated answers aloud or play synthesized audio, and the result can be virtually indistinguishable from their own natural speech.

Traditional proctoring and live questioning cannot reliably detect such audio-based cheating.

4. Proxy Participation & Deepfake Impersonation

AI combined with deepfake technology can simulate a candidate’s face and voice.

Proxy interviews or impersonation through deepfakes are nearly impossible to detect with standard monitoring, which typically relies on superficial ID checks.

5. AI Can Exploit Interview Timing Loopholes

Some AI assistants analyze patterns in interview question sequences to predict follow-up questions.

Candidates can pre-generate multiple answers or prepare “smart scripts” that adapt dynamically, which human interviewers cannot track in real time.

6. Multi-tasking AI Goes Undetected

Candidates can run AI-driven notes, calculators, or coding assistants simultaneously on multiple devices.

This creates a scenario where a candidate appears engaged but is actually relying heavily on unseen AI support.

7. Behavioral Analytics Gaps

Traditional interviews rarely use keystroke dynamics, mouse movement patterns, or AI-detection algorithms.

Subtle patterns that indicate AI use, such as unusually fast response times combined with high accuracy, go unnoticed without technology designed to flag anomalies.
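To make the "fast and accurate" signal concrete, here is a minimal Python sketch of how such a check might look. Everything in it is an assumption made for illustration: the `Response` fields, the `flag_fast_accurate` helper, and the two thresholds are hypothetical and uncalibrated, and this is not a description of how any particular product works. It compares each answer against the candidate's own median response time and flags answers that are both much faster than that baseline and highly scored.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Response:
    question_id: str
    seconds_to_answer: float  # delay between end of question and start of answer
    accuracy: float           # interviewer-assigned score in the range [0, 1]


def flag_fast_accurate(responses, speed_ratio=0.5, min_accuracy=0.9):
    """Return IDs of answers that are both much faster than the candidate's
    own median response time and scored as highly accurate.

    speed_ratio and min_accuracy are illustrative thresholds, not calibrated values.
    """
    if not responses:
        return []
    typical = median(r.seconds_to_answer for r in responses)
    return [
        r.question_id
        for r in responses
        if r.seconds_to_answer <= speed_ratio * typical
        and r.accuracy >= min_accuracy
    ]


# Hypothetical session data, invented for this example.
session = [
    Response("q1", 12.0, 0.70),
    Response("q2", 10.0, 0.80),
    Response("q3", 2.0, 0.95),
    Response("q4", 11.0, 0.60),
    Response("q5", 3.0, 1.00),
]
flagged = flag_fast_accurate(session)
print(flagged)
```

A flag like this is only a prompt for a follow-up question, not proof of misconduct: a well-prepared candidate can also answer quickly and correctly.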

8. Unstructured Interviews Increase Risk

Open-ended, unstructured interviews give AI an advantage: it can generate articulate, context-aware responses for questions that lack strict scoring criteria.

Without structured evaluation rubrics, interviewers often cannot tell if the insight is genuine or AI-assisted.
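A structured rubric can be as simple as a fixed set of weighted criteria that every interviewer must rate. The Python sketch below is one hypothetical way to express that; the criteria names, the weights, and the 1 to 5 rating scale are assumptions chosen for illustration, not a recommended standard.

```python
# Hypothetical criteria and weights; a real rubric would be defined per role.
RUBRIC = {
    "correctness": 4,
    "explains_reasoning": 4,
    "handles_follow_up": 2,
}


def rubric_score(ratings, rubric=RUBRIC):
    """Weighted average of 1-5 ratings; every criterion must be rated."""
    missing = set(rubric) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(rubric.values())
    return sum(rubric[c] * ratings[c] for c in rubric) / total_weight


# A polished final answer with weak reasoning and weak follow-up handling.
polished_but_shallow = {
    "correctness": 5,
    "explains_reasoning": 2,
    "handles_follow_up": 2,
}
score = rubric_score(polished_but_shallow)
print(score)
```

Because reasoning and follow-up handling carry most of the weight here, an answer that looks perfect on screen but cannot be explained scores well below the maximum, which is the gap an unstructured conversation tends to hide.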

Key Takeaways

Traditional monitoring focuses on visible, measurable behaviors, while AI-assisted cheating is invisible, subtle, and adaptive.

Techniques like AI-generated answers, voice synthesis, multi-device support, and deepfake impersonation exploit gaps in manual observation and basic proctoring.

Companies relying solely on conventional monitoring risk hiring candidates whose skills may be misrepresented, potentially leading to financial loss, reduced team productivity, and reputational damage.

Sherlock AI: Next‑Gen Solution for Interview Integrity

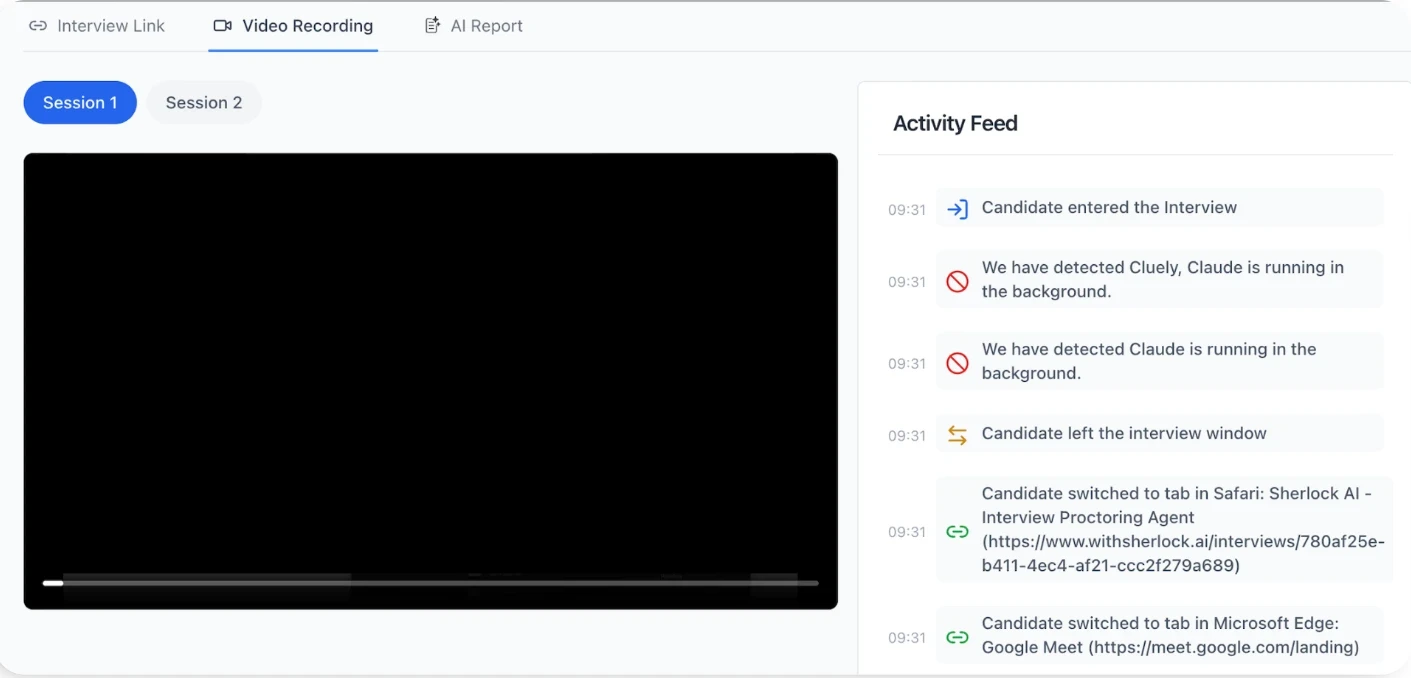

Sherlock AI offers an advanced, behavior-driven solution that helps recruiters detect subtle and invisible AI use during interviews.

Key Features:

Uses AI-driven behavioral analysis to detect anomalies like unusual response timing, inconsistencies between answers and reasoning, and rapid improvements in performance.

Monitors multiple signals simultaneously, including webcam, screen activity, device interactions, and audio cues, to identify potential AI-assisted behavior.

Provides real-time alerts to interviewers, enabling live follow-ups when suspicious patterns are detected.

Generates detailed post-interview reports with timestamps and confidence scores, giving objective evidence of potential misconduct.

Detects AI-generated answers, voice synthesis, and proxy participation, which traditional monitoring often misses.

Integrates seamlessly with platforms like Zoom, Microsoft Teams, and Google Meet, without disrupting existing workflows.

Focuses on high-value data for interview integrity, minimizing intrusive surveillance and respecting candidate privacy.

Augments human judgment, allowing interviewers to focus on evaluation while AI handles integrity checks.

By combining real-time insights with deep behavioral analytics, Sherlock AI closes the gaps left by traditional monitoring tools and empowers hiring teams to make confident, data-backed decisions in AI-rich interview environments.

Conclusion

The rise of AI in interviews has exposed the limitations of traditional monitoring methods. Faced with AI-generated answers, real-time voice synthesis, deepfake impersonation, and proxy participation, conventional proctoring, manual observation, and unstructured interviews are increasingly unable to ensure candidate authenticity.

Solutions like Sherlock AI demonstrate that technology can fight back. By using behavioral analytics, real-time alerts, and detailed post-interview reporting, it helps recruiters detect AI-assisted cheating invisible to traditional methods, while maintaining candidate privacy and supporting human judgment.

For organizations seeking reliable, fair, and future-proof hiring, investing in AI-aware monitoring tools is essential. Modern recruitment demands systems that adapt to AI, safeguard integrity, and provide actionable insights, ensuring that the interview process reflects candidates’ true skills and abilities.