
Cheating in remote interviews is rising due to AI tools, proxy candidates, and deepfakes. Learn why it’s happening and how recruiters can stop fraud with modern interview security and verification.

Abhishek Kaushik

Feb 9, 2026

Remote hiring has brought speed and scale to talent acquisition, but it has also exposed a growing challenge: cheating and misrepresentation in the hiring process.

According to a recent survey of nearly 1,800 job seekers, about 7 in 10 applicants admit to having lied or cheated at some point during the hiring process, including on online assessments and interviews.

Hiring teams are feeling the impact firsthand. According to a survey of 3,000 American managers, 59% of hiring leaders suspect candidates are using AI or other deceptive tactics to misrepresent themselves in applications or interviews. Nearly 62% believe candidates are better at faking identities with AI than employers are at spotting it.

As remote hiring becomes more embedded in workforce strategy, traditional verification touchpoints like in-person interviews and paper credentials are no longer sufficient to ensure that the person hired is truly the person who applied.

Why Cheating Is Increasing in Remote Hiring

The shift to remote hiring fundamentally altered how candidates are screened and verified. Traditional safeguards like in‑person verification, supervised testing, and on‑site behavioral cues have been replaced with digital touchpoints that are easier to manipulate. Below are the key forces driving the rise in candidate cheating and fraud today.

1. Fully Remote Interfaces + Unsuspected Weak Controls

Remote interviews and assessments eliminate physical oversight, creating fertile ground for deception.

Identity impersonation: Surveys in the U.S. show that 31% of hiring managers interviewed someone later revealed to be using a fake identity, and 35% said someone other than the applicant participated in a virtual interview.

Detection gaps: Only 19% of employers feel confident their hiring process can reliably detect fraudulent applicants.

Without robust verification at every stage, traditional remote tools aren’t enough to confirm authenticity.

2. AI Tools & Answer Generators Fuel Misrepresentation

AI that can code, write responses, or paraphrase on demand has lowered the barrier for candidates to present polished but insincere submissions.

AI deception suspected: 59% of hiring managers reported suspecting candidates of using AI to misrepresent skills or generate interview content.

Misrepresentation outpacing detection: A majority of managers agree candidates are now better at faking identities with AI than employers are at spotting it.

Common behaviors include:

Using AI to generate or embellish resumes.

Reading off AI‑generated scripts during interviews.

Outsourcing coding and technical problems to AI or ghost assistants.

3. Deepfakes, Proxy Interviewing & Identity Spoofing

Generative AI isn’t just for writing; it can also imitate faces and voices.

Deepfake encounters: About 17% of U.S. hiring managers have encountered candidates using deepfake tech in interviews, highlighting how easily avatars can be deployed to impersonate real people.

Rapid growth: Research suggests deepfake activity in recruitment contexts has spiked significantly, making impersonation a growing vector of fraud.

Future risk: Advisory firms project that by 2028, up to 1 in 4 candidates worldwide could be fake, meaning either synthetic identities or fraudulently crafted personas.

These trends show that identity spoofing and synthetic candidate creation are no longer science fiction but business risks.

4. Global Hiring + Inadequate Identity Verification Standards

Remote hiring opened global talent pools, but not all hiring processes kept pace with the need for robust identity checks.

Many organizations rely on basic video calls and self‑reported credentials, which sophisticated fraudsters can easily forge or manipulate.

Without identity‑binding steps like biometric verification, federated identity proofs, or credential cross‑checks, it’s simpler for bad actors to slip through.

The result? Genuine talent competes with fraudulent profiles, diluting recruiter confidence and slowing processes.

5. Competitive Job Markets Intensify the Pressure to “Get Through”

In sectors with intense competition like tech, finance, or cybersecurity, passing screens can feel like the biggest hurdle.

With interviews becoming gateways to limited opportunities, some candidates turn to unauthorized external help, AI, or off‑camera coaching to make it past screens.

Technical assessments, often unsupervised, are especially vulnerable to real‑time collaboration, secondary devices, or hidden assistance.

The result is a distorted labor market: without detection tools, honest candidates are judged by the same standards as those who cheat, so desperate tactics pay off.

Why Honest Candidates Are Losing Out

Together, these forces mean that:

Dishonest candidates often look as polished as honest ones until it’s too late.

Traditional safeguards (like video interviews or unmonitored coding tests) can’t reliably confirm authenticity anymore.

Recruiters without layered verification tools end up spending more time filtering noise than identifying quality talent.

As remote hiring evolves, organizations must evolve too, adopting identity verification, fraud detection, monitored assessments, and clear AI usage policies, to ensure that capability equals credibility in the hiring pipeline.

The Most Common Remote Hiring Fraud Tactics

What cheating actually looks like in practice:

1. Using ChatGPT or AI copilots during live interviews

Candidates run ChatGPT or similar tools on a second screen or hidden window during interviews.

Real-time prompts are used to generate answers to behavioral, system-design, or coding questions.

Tell-tale signs recruiters report:

Long unnatural pauses before answers.

Overly polished or generic responses inconsistent with earlier communication.

Sudden jumps in technical depth mid-interview.

2. Receiving answers via hidden devices or secondary screens

Candidates use:

Smartphones placed below the camera line.

Smartwatches or Bluetooth earpieces.

Tablets positioned behind the laptop screen.

Off-camera collaborators feed answers through:

Messaging apps (Slack, WhatsApp, Telegram).

Live audio via earbuds.

Red flags:

Eyes constantly shifting away from the webcam.

Repeating or paraphrasing content that sounds read aloud.

Typing sounds when no coding is required.

3. Proxy candidates interviewing on behalf of someone else

A more skilled stand-in attends the interview instead of the real applicant.

Common patterns:

Strong interview performance followed by weak on-the-job capability.

Mismatched voice, accent, or appearance across interview rounds.

Different person joining technical and HR interviews.

Often paired with:

Shared login credentials for assessments.

Remote desktop tools to switch control mid-interview.

4. Deepfake video filters and voice cloning

Candidates use AI tools to:

Overlay a different face onto live video.

Modify facial features or lip movement in real time.

Clone or enhance voices to match another identity.

Known red flags:

Unnatural blinking or facial lag.

Audio slightly out of sync with lip movement.

Blurred facial edges or lighting inconsistencies.

5. Screen-sharing manipulation or virtual machine tricks

Candidates run interviews inside:

Virtual machines (VMs).

Remote desktop sessions.

This allows:

Hidden access to notes, code, or AI tools while screen sharing appears “clean.”

Switching windows invisibly between interviewer view and helper tools.

Additional tactics:

Pre-written answers pinned behind the shared window.

Code copied from GitHub or Stack Overflow during “live” tasks.

How to Stop Cheating in Remote Interviews

Stopping cheating in remote interviews is about designing a hiring process where fraud is hard to execute, easy to detect, and risky to attempt.

Below is a practical action plan recruiters can deploy immediately.

1. Start with real identity verification and keep verifying

At a minimum, organizations should verify identity before the interview using:

Government ID capture

Live selfie or video verification

Liveness detection to prevent photo or replay attacks

But one-time checks aren’t enough. The real risk emerges mid-interview, when a proxy candidate swaps in or a different person takes over after the initial greeting.

That’s why modern teams use continuous face matching, which:

Compares the live video feed to the verified ID image

Flags if a different person appears

Detects camera tampering or sudden feed replacement

Alerts when the candidate leaves the frame for extended periods

This single control can shut down entire classes of fraud, including proxy interviewing and identity swapping across rounds.
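To make the idea concrete, here is a minimal Python sketch of a continuous matching loop. It assumes a face-recognition model (not shown) has already converted the verified ID photo and each sampled video frame into numeric embedding vectors. The function names, the 0.8 similarity threshold, and the three-frame streak are illustrative assumptions, not values taken from any specific product.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def flag_identity_mismatch(reference, frames, threshold=0.8, max_consecutive=3):
    """Return the index of the frame at which an alert fires, or None.

    `reference` is the embedding of the verified ID image (assumed input).
    `frames` is a sequence of per-frame embeddings; None means no face
    was detected in that frame (for example, the candidate left the frame).
    An alert fires after `max_consecutive` mismatching or empty frames in a row.
    """
    streak = 0
    for index, embedding in enumerate(frames):
        mismatch = (
            embedding is None
            or cosine_similarity(reference, embedding) < threshold
        )
        if mismatch:
            streak += 1
            if streak >= max_consecutive:
                return index
        else:
            streak = 0  # a good match resets the streak
    return None
```

Requiring several consecutive misses before alerting is one simple way to avoid flagging a candidate over a single blurry or badly lit frame.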

2. Use behavioral and response-pattern analysis to spot hidden help

Instead of relying on gut feel, recruiters can use behavioral baselining to detect anomalies in how candidates respond, such as:

Long unnatural pauses followed by perfect answers

Sudden jumps in technical sophistication

Overly structured or generic responses that don’t match earlier communication

Inconsistent depth of understanding across similar questions

These patterns often indicate:

Real-time AI assistance

Off-camera coaching

Scripted or pre-generated answers

Behavioral analysis doesn’t accuse candidates; it flags when a response pattern is statistically inconsistent with natural human problem-solving under live conditions.
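One simple way to picture "statistically inconsistent" is a z-score against the candidate's own baseline. The Python sketch below assumes response latencies (in seconds) were recorded during a few warm-up questions and compares later answers against them. The function name and the threshold of 3 standard deviations are hypothetical choices for illustration; a real system would likely combine many more signals.

```python
from statistics import mean, stdev


def latency_outliers(baseline, observed, z_threshold=3.0):
    """Return (index, z_score) pairs for observed latencies that deviate
    strongly from the candidate's own baseline latencies.

    `baseline` and `observed` are lists of response delays in seconds.
    """
    if len(baseline) < 2:
        return []  # not enough data to estimate spread
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        return []  # identical baseline values: z-score is undefined
    outliers = []
    for index, value in enumerate(observed):
        z = (value - mu) / sigma
        if abs(z) > z_threshold:
            outliers.append((index, round(z, 2)))
    return outliers
```

An outlier here is a prompt for a follow-up question, not a verdict: a long pause can just as easily mean the candidate is thinking carefully.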

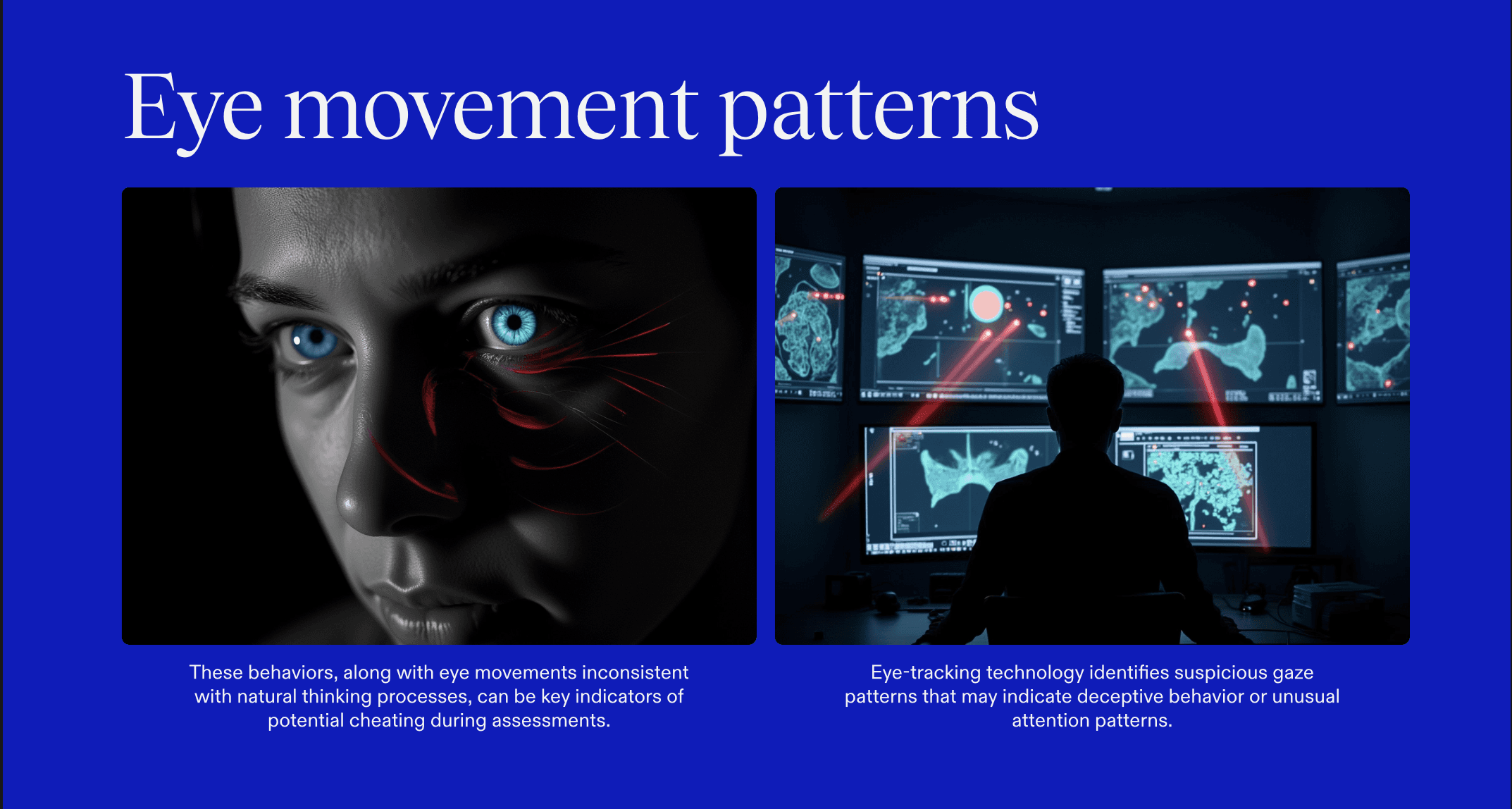

3. Monitor eye-gaze, voice signals, and latency anomalies

Most real-time cheating leaves physical and technical traces.

Modern interview monitoring tools can track subtle but telling signals, including:

Eye-gaze behavior

Frequent glances away from the screen

Repeated downward looks toward a phone or hidden notes

Eye movement that doesn’t align with natural thinking patterns

Voice and audio signals

Sudden changes in tone or cadence

Audio artifacts that suggest injection or relaying

Voice mismatches across different interview rounds

Latency and timing patterns

Delays between the end of a question and the start of a response

Inconsistent network behavior during key questions

Timing that matches known AI-generation response profiles
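As a rough illustration of the eye-gaze signal, the Python sketch below assumes an upstream gaze tracker (not shown) emits one boolean per sample, where True means the candidate's gaze is off screen. It raises an alert whenever more than half of the most recent samples are off screen. The window size, the ratio, and the function name are assumptions made for this example.

```python
from collections import deque


def offscreen_alerts(samples, window=10, max_ratio=0.5):
    """Return the sample indices at which the share of off-screen gaze
    samples within the last `window` samples exceeds `max_ratio`.

    `samples` is an iterable of booleans (True = gaze off screen).
    """
    recent = deque(maxlen=window)  # sliding window of the latest samples
    alerts = []
    for index, off_screen in enumerate(samples):
        recent.append(bool(off_screen))
        if len(recent) == window and sum(recent) / window > max_ratio:
            alerts.append(index)
    return alerts
```

Using a sliding window rather than single glances keeps normal behavior, such as briefly looking away to think, from triggering an alert.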

4. Lock down the interview environment itself

Recruiters can dramatically reduce cheating simply by controlling what candidates are allowed to run during interviews. Effective environment controls include:

Secure browsers that block: Tab switching, copy-paste, screen recording

Restrictions on: Clipboard access, external app usage, background process execution
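On the reporting side, these controls can be thought of as a policy applied to a stream of session events. The Python sketch below assumes the secure browser logs events as dictionaries with a "type" field; the event names and the blocked set are hypothetical and would depend on the tool actually in use.

```python
from collections import Counter

# Hypothetical event types a locked-down interview client might report.
BLOCKED_EVENTS = {
    "tab_switch",
    "copy",
    "paste",
    "screen_record_start",
    "external_app_launch",
}


def summarize_violations(events, blocked=BLOCKED_EVENTS):
    """Count how often each blocked event type occurred in a session.

    `events` is an iterable of dicts, each with at least a "type" key.
    Events whose type is not in `blocked` are ignored.
    """
    return Counter(
        event["type"] for event in events if event.get("type") in blocked
    )
```

A per-type count like this gives the recruiter context: one accidental tab switch reads very differently from a dozen paste events during a coding task.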

5. Redesign interviews to make cheating harder and less useful

Technology alone isn’t enough. Interview design itself plays a huge role in either enabling or discouraging cheating.

High-integrity interview formats:

Ask candidates to explain reasoning step by step

Use follow-up “why” and “how” questions

Change problem parameters mid-solution

Require candidates to critique their own answers

Best practices that reduce cheating incentives:

Prefer short live exercises over long take-home tests

Use whiteboarding and real-time collaboration

Rotate question banks frequently

Tie questions directly to real on-the-job scenarios
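Rotating a question bank can be as simple as drawing a different subset for each candidate and round. The Python sketch below derives a repeatable seed from a candidate ID and a round label, so the same interview can be reproduced for an audit while different candidates see different questions. The identifiers and function name are illustrative assumptions.

```python
import hashlib
import random


def pick_questions(bank, candidate_id, round_label, k=3):
    """Pick `k` distinct questions from `bank` for one candidate and round.

    The selection is deterministic for a given (candidate_id, round_label)
    pair, which makes it reproducible later, but varies across candidates.
    """
    seed_material = f"{candidate_id}:{round_label}".encode("utf-8")
    # Use the first 8 bytes of a SHA-256 digest as an integer seed.
    seed = int.from_bytes(hashlib.sha256(seed_material).digest()[:8], "big")
    rng = random.Random(seed)
    return rng.sample(list(bank), k)
```

Seeding from the candidate and round, rather than using a global random state, means a reviewer can later confirm exactly which questions a given candidate was asked.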

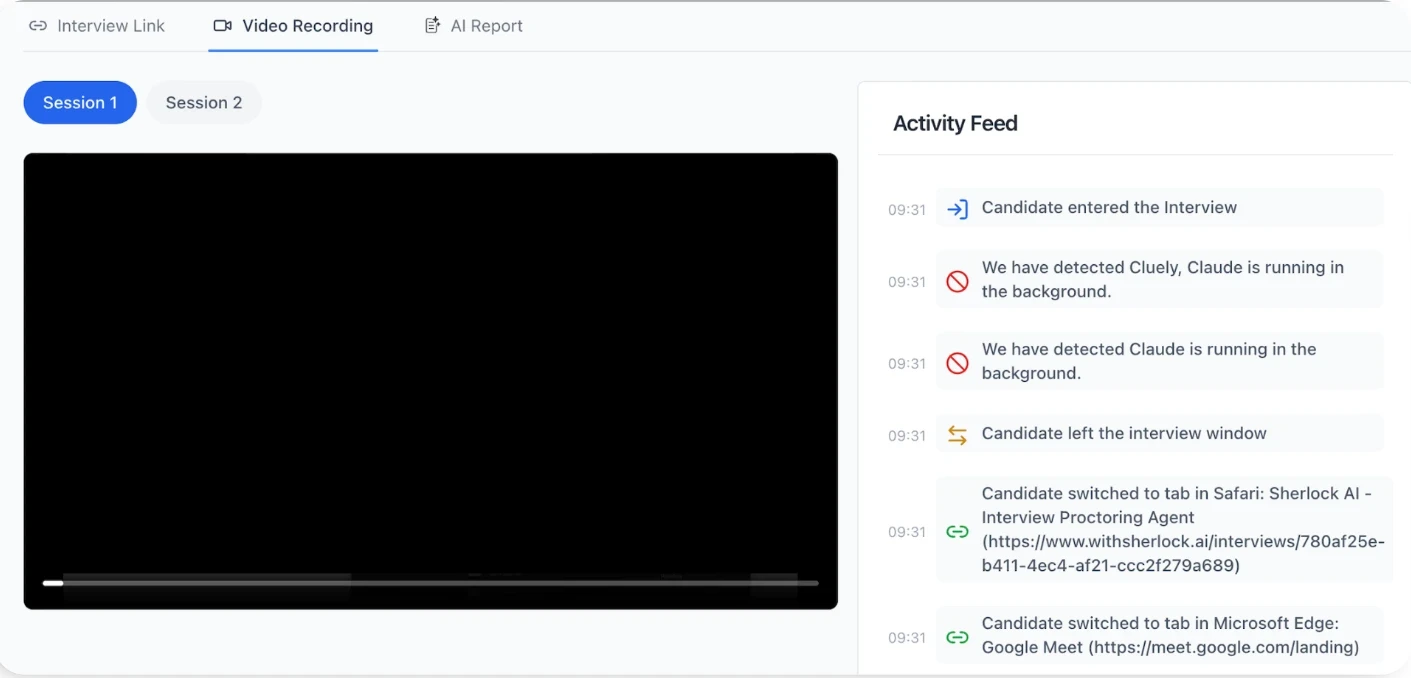

Sherlock AI: The Ultimate Defense Against Cheating in Remote Hiring

Sherlock AI adds a real-time fraud detection layer to live interviews, giving recruiters continuous visibility into candidate authenticity instead of blind trust.

Key Features That Stop Remote Interview Cheating

AI copilot and real-time AI assistance detection – Flags behavioral, gaze, and timing patterns that indicate candidates are using ChatGPT or AI copilots during live interviews.

Proxy candidate and identity swap detection – Continuously matches the live face on camera to the verified candidate identity to detect stand-ins and mid-interview swaps.

Deepfake video and synthetic face detection – Identifies facial warping, lip-sync mismatches, and video overlay artifacts used in deepfake or avatar-based interviews.

Voice cloning and audio relay detection – Detects voice mismatches and cadence shifts linked to voice cloning, hidden earpieces, or live answer relays.

Second-screen and hidden device detection – Uses gaze and head-movement analysis to surface off-screen phones, secondary monitors, and external answer feeds.

Scripted and coached answer detection – Flags unnatural pauses and sudden jumps in sophistication that signal off-camera coaching or pre-generated answers.

Virtual machine and remote desktop detection – Identifies interview environments used to hide AI tools, notes, and external resources.

Real-time fraud alerts, risk scores, and audit trails – Provides live alerts and evidence-backed risk scores to support defensible hiring decisions.

Conclusion

Cheating in remote hiring is a structural risk built into modern interview processes. AI copilots, proxy candidates, deepfakes, and second-screen assistance have made it easier than ever for dishonest applicants to outperform honest ones.

By combining identity verification, behavioral analysis, secure interview environments, and AI-based monitoring like Sherlock AI, recruiters can finally restore trust, protect hiring accuracy, and ensure that capability, not deception, decides who gets hired.

Remote hiring is here to stay.

Cheating doesn’t have to be.