
AI interview fraud is a growing security risk. Learn why traditional controls fail and how Sherlock AI secures the hiring process before access is granted.

Abhishek Kaushik

Feb 10, 2026

Security teams are now facing a rising tide of AI-enabled interview fraud, in which applicants use generative tools, synthetic identities, deepfakes, or proxy actors to misrepresent themselves in interviews and screening processes.

The scale of the problem is already striking. Analysts warn that by 2028, one in four candidate profiles worldwide could be entirely fake, a level of deception with clear implications for insider risk and initial access.

Nearly 60% of managers suspect candidates have used AI to deceive during interviews, and almost a third have confirmed fake identities or proxy interviewees in real hiring cycles.

These figures point to a new attack surface that can directly enable unauthorized access, lateral movement, intellectual property theft, and long-term insider risk. Understanding how AI interview fraud works, why traditional security controls fail, and which defenses are effective is now essential for protecting the enterprise in the age of AI-driven deception.

AI Interview Fraud as a New Attack Vector

As remote hiring becomes more common, security teams face a new and rapidly evolving attack vector, in which threat actors use AI and deception to masquerade as legitimate job candidates and slip past traditional controls. Below are the key ways this manifests and why it matters for enterprise defenders.

1. AI-Assisted Candidates Exploit Remote Hiring Workflows

Remote hiring workflows are designed for efficiency, not robust identity proofing. Attackers have learned to exploit this.

Synthetic identities: With generative AI, fraudsters can fabricate resumes, social profiles, voices, and even video personas that look highly convincing to human interviewers.

Deepfake interviews: Approximately 17% of hiring managers report encountering deepfake technology used in video interviews, including AI-generated faces and voices.

Proxy actors & deception: Some fraudsters even use proxy interviewees (real people answering for the candidate) or rapidly generated AI responses during live calls to appear competent and pass technical screens.

These techniques allow bad actors to bypass surface-level vetting without ever being physically present or identity-verified.

2. Interviews Are Now Part of the Enterprise Attack Surface

Insider risk is usually assumed to begin after onboarding. With AI interview fraud, the threat can originate much earlier, at the point of hiring itself:

Legitimate credentials without legitimacy: A fake candidate who passes interviews and background screens gets real employee credentials, giving them access to internal systems once onboarding completes.

Remote work amplifies risk: The lack of in-person verification makes it easier for attackers to assume identities and avoid physical authentication steps that might expose them.

Attack surface expansion: Recruiting systems (ATS, video platforms, identity checks) now need to be considered part of the threat surface, meaning security must integrate hiring workflows into risk models and defenses where they previously did not.

In effect, the interview process now serves as an early access point rather than merely an HR procedure.

3. Fraud at Hiring Becomes a Precursor to Insider Threats and Breaches

The consequences of a fraudulent hire can be severe, extending far beyond HR headaches:

Insider access with malicious intent: Once onboarded, impostors can:

Access sensitive systems, databases, and intellectual property

Install malware, create backdoors, or execute ransomware

Harvest credentials or exfiltrate data undetected for months

State-linked threat actors: Nation-state groups such as North Korean IT operatives have reportedly used AI and falsified identities to secure remote tech jobs, directing salaries back to their state and potentially positioning themselves to disrupt or steal critical information.

Operational damage: Fake hires aren’t simply an expense; they can degrade productivity, erode team trust, and lead to reputational damage and regulatory exposure if breaches occur.

In short, when fraudsters use AI to infiltrate through hiring workflows, they gain trusted access, which makes them much harder to detect with traditional security tooling that only activates post-onboarding.

Why Traditional Security Controls Fail to Detect It

AI-enabled interview fraud exposes fundamental gaps in conventional security and hiring safeguards. Legacy identity tools, background checks, and monitoring systems simply weren’t designed to spot modern deception or synthetic identities, and the resulting delay in detection gives attackers a huge head start.

Why IAM, Background Checks & Access Controls Activate Too Late

Traditional controls such as IAM and onboarding checks come into play only as a user is onboarded, so a fraudster may already hold valid corporate credentials before any security tooling engages.

Background checks lag reality: Standard background screening may confirm documents and records weeks or even months after hire, by which time fraudsters may already have privileged access and ample time to embed themselves.

Static verification fails against dynamic deception: Identity verification based on static photos or scanned IDs can easily be bypassed by AI-generated documents or deepfake presentations when there is no live validation.

IAM trusts the onboarding trigger: Once someone holds valid credentials, IAM treats them as “trusted,” so protections against lateral movement, data access, and privilege escalation don’t kick in until the fraud has already entered the system. This trust-on-onboarding model leaves threats hidden until much later.

Put simply: you don’t detect the fraud until it’s already inside your enterprise trust boundary.

Why Traditional Tools Can’t See Fraud at the Interview Stage

Existing monitoring and EDR tooling assume that threats begin at “first login”, not earlier in the hiring lifecycle.

Security tools don’t watch hiring workflows: SIEM, UEBA, and endpoint monitoring only activate once access credentials are in use. They don’t score risk during ATS submission, video interviews, or live assessments, where most AI fraud occurs.

HR systems lack deep verification signals: Applicant tracking systems and video platforms may log activity but have no inherent ability to detect whether a live interview feed is synthetic or if someone off-screen is controlling the responses. Tools that flag deepfake cues (lip-sync anomalies, biometric inconsistencies, liveness) are rarely integrated.

Blind spots in hiring data: Most hiring logs don’t feed into threat detection platforms, so suspicious patterns (such as clustered submissions that reuse the same identifiers, or odd geolocation footprints during interviews) never surface in security analytics.

As a result, fraud remains invisible until it morphs into a real security incident. By then, the attacker has already gained trusted credentials and likely moved laterally.
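Part of the hiring-data blind spot is simply a correlation problem. As a rough illustration, assume applications can be exported with a contact email, a phone number, a device fingerprint, a claimed country, and a country observed during the interview (all hypothetical fields, not any ATS vendor’s schema). A minimal Python sketch could then flag identifiers reused across differently named applicants, plus interviews held from a country other than the one claimed:

```python
from collections import defaultdict
from dataclasses import dataclass


# Hypothetical application record; field names are illustrative only.
@dataclass(frozen=True)
class Application:
    app_id: str
    candidate_name: str
    email: str
    phone: str
    device_fingerprint: str
    claimed_country: str
    interview_country: str  # assumed to come from interview-session geolocation


SHARED_FIELDS = ("email", "phone", "device_fingerprint")


def shared_identifier_clusters(apps: list[Application]) -> dict[tuple[str, str], list[str]]:
    """Return identifier values reused by applications submitted under different names."""
    index: dict[tuple[str, str], set[str]] = defaultdict(set)
    for app in apps:
        for field_name in SHARED_FIELDS:
            value = getattr(app, field_name).strip().lower()
            if value:  # skip blanks so empty fields don't form a fake cluster
                index[(field_name, value)].add(app.app_id)

    names = {app.app_id: app.candidate_name.strip().lower() for app in apps}
    clusters = {}
    for key, app_ids in index.items():
        # The same person re-applying is normal; one identifier behind
        # several different names is the pattern worth surfacing.
        if len({names[i] for i in app_ids}) > 1:
            clusters[key] = sorted(app_ids)
    return clusters


def geo_mismatches(apps: list[Application]) -> list[str]:
    """Return IDs of applications interviewed from a country other than the claimed one."""
    return [a.app_id for a in apps if a.claimed_country != a.interview_country]
```

This is deliberately simple: before treating either signal as evidence of fraud, a real pipeline would likely need fuzzy matching, allowances for legitimately shared devices, and awareness of VPNs.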

How AI Fraud Bypasses Technical and Procedural Safeguards

AI fraud exploits underlying assumptions and procedural weaknesses in verification practices:

Assumption of “good faith”: Hiring systems assume applicants are honest, and HR teams are optimized for candidate experience and culture alignment, not adversarial detection. This human-centered mindset makes AI deception harder to spot.

Deepfake and synthetic identity sophistication: AI tools can generate believable video, voice, and document forgeries that outpace legacy verification checks. When stolen or forged IDs are used alongside AI personas, some of these fakes can even pass background checks.

Remote workflows increase exposure: With remote hiring now standard, there’s no physical handshake, no in-person ID match, and few real-time identity validations. Screens become the primary source of truth, and AI can manipulate what you see and hear.

Credential reuse and recycled IDs: Fraudsters combine breached identities with AI to create consistent yet fake personas that slip past document validation and reference checks, leaving traditional safeguards outmatched by adaptive deception.

In short, traditional safeguards aren’t adversarial by design. They assume authenticity and favor speed over verification and risk scoring, and AI fraud exploits that at every step.

How Security Teams Should Respond and Take Ownership

As AI interview fraud evolves into a genuine enterprise risk, it can no longer be treated as a purely HR issue. The moment a fraudulent hire gains access, it becomes a security failure, not a recruiting one, which means ownership must shift accordingly.

1. Move From “HR Problem” to “Security Responsibility”

AI-enabled hiring fraud directly enables:

Unauthorized access to internal systems

Creation of privileged insider threats

Regulatory, IP, and brand exposure

CISOs are increasingly recognizing hiring as part of the identity attack surface, just like cloud access or endpoint security. When fraud enters at hiring, it bypasses nearly every downstream control, which makes early ownership critical.

2. Controls Security Can Introduce Pre-Onboarding

Security teams can extend their influence before credentials are ever issued by adding controls such as:

Live identity verification & liveness detection during interviews

Deepfake and external assistance detection for video calls

Risk scoring for candidates, similar to how devices and users are scored in Zero Trust

Anomaly detection across hiring data (reused identities, geo-mismatch, device fingerprints)

These bring security principles upstream where they can actually prevent infiltration, not just respond to it.
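To make candidate risk scoring concrete, here is a minimal Python sketch, assuming upstream checks (liveness, deepfake, assistance, and anomaly detection) each emit a boolean flag. The signal names, weights, and thresholds are hypothetical placeholders, not calibrated values or any product’s model. The point is that, as in Zero Trust, the outcome is a graded decision rather than a binary pass/fail:

```python
from dataclasses import dataclass, field

# Hypothetical signals and weights; illustrative only, not calibrated.
SIGNAL_WEIGHTS = {
    "liveness_check_failed": 0.35,
    "deepfake_suspected": 0.30,
    "external_assistance_suspected": 0.20,
    "identity_reused_across_applications": 0.25,
    "geo_mismatch": 0.10,
    "unrecognized_device": 0.05,
}

REVIEW_THRESHOLD = 0.3  # at or above: route to security review
HOLD_THRESHOLD = 0.6    # at or above: hold onboarding pending investigation


@dataclass
class CandidateSignals:
    candidate_id: str
    flags: set[str] = field(default_factory=set)


def risk_score(signals: CandidateSignals) -> float:
    """Sum the weights of raised flags, capped at 1.0; reject unknown flag names."""
    unknown = signals.flags - set(SIGNAL_WEIGHTS)
    if unknown:
        raise ValueError(f"unknown signals: {sorted(unknown)}")
    return min(1.0, sum(SIGNAL_WEIGHTS[f] for f in signals.flags))


def decide(signals: CandidateSignals) -> str:
    """Map a candidate's score to a pre-onboarding action."""
    score = risk_score(signals)
    if score >= HOLD_THRESHOLD:
        return "hold_onboarding"
    if score >= REVIEW_THRESHOLD:
        return "security_review"
    return "proceed"
```

A weighted sum is about the simplest possible model. In practice, teams might tune weights against confirmed cases and keep a human reviewer in the loop for anything above the review threshold.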

3. Partner With HR to Stop AI-Enabled Infiltration

Effective defense requires collaboration, not control battles:

Security defines threat models and controls

HR preserves candidate experience and process flow

Together, they create a fraud-aware hiring pipeline, not just a fast one

This partnership ensures organizations don’t have to choose between hiring speed and security; they can design for both.

However, most security teams still lack a practical way to enforce these controls inside live interviews without disrupting hiring workflows.

This is where purpose-built, interview-layer security solutions become essential.

Sherlock AI: Security Layer Against AI Interview Fraud

Sherlock AI operates as a dedicated security control at the interview itself. Rather than reacting after a fraudulent hire is onboarded, Sherlock AI prevents the threat from ever entering the organization’s trust boundary.

Unlike traditional tools that operate after identity is assumed, Sherlock AI brings security-grade detection into live hiring workflows, without disrupting the interview experience.

Purpose-Built for Interview-Stage Threats

Sherlock AI is designed specifically for the fraud patterns that modern security tools cannot see:

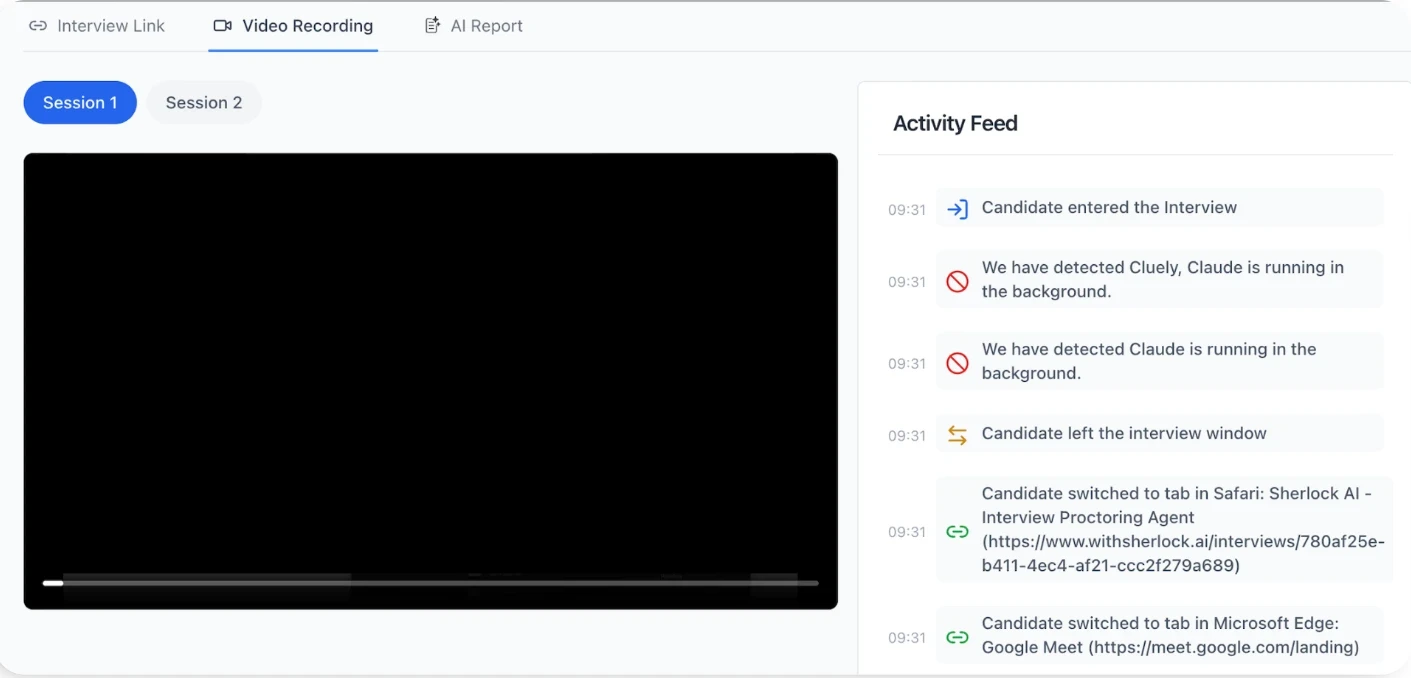

Impersonation detection: Identifies when the person on the call does not match the expected candidate identity across sessions and stages

External assistance & AI copilot detection: Flags real-time off-screen help, hidden LLM usage, and unnatural response patterns

Deepfake candidates: Detects synthetic or manipulated video and audio used to impersonate real people during live interviews

Proxy interviewees: Identifies when the person answering questions is not the actual candidate, including cases where a more skilled stand-in appears for technical rounds

Scripted and synthetic behavior analysis: Detects pre-generated answers, timing anomalies, and over-optimized response structures that signal AI support

Skill misrepresentation detection: Surfaces mismatches between claimed expertise and demonstrated real-time capability

These are the exact gaps where IAM, background checks, and EDR have no visibility.

Designed for Security Without Breaking Hiring

One of the key challenges for security teams is introducing controls without slowing down hiring or degrading candidate experience. Sherlock AI is architected to avoid that tradeoff:

Sits silently inside Zoom, Teams, and Google Meet

Requires no action from interviewers or candidates

Generates security-grade risk signals, not subjective opinions

Integrates cleanly into existing hiring workflows

From Hiring Signal to Security Signal

What makes Sherlock AI fundamentally different is that it converts interview behavior into actionable security intelligence:

Flags high-risk candidates before access is granted

Enables security review before onboarding, not after incidents

Creates an auditable trail for compliance and investigations

Allows consistent enforcement across geographies and roles

In effect, Sherlock AI transforms hiring from a blind trust process into a measurable, defensible security control point.

Why This Matters for Security Leaders

For CISOs and security architects, Sherlock AI represents a structural shift:

Closes a critical pre-onboarding blind spot

Reduces insider risk before it materializes

Aligns hiring with Zero Trust principles

Prevents the costliest class of breaches: those caused by trusted insiders who were never legitimate to begin with

As AI continues to reshape both hiring and cybercrime, organizations can no longer afford to leave interviews unsecured.

Sherlock AI shifts protection to where it matters most: before trust is granted.

It helps organizations move from hoping candidates are legitimate to knowing they are. In an age where AI can fake skills, identities, and confidence, securing the interview is no longer optional.

Conclusion

AI interview fraud has moved the front line of security further upstream, to the moment when trust is first created, not when access is granted.

As AI makes it easier to fake skills, identities, and even presence itself, traditional controls alone are no longer enough. If hiring remains unsecured, every security layer that follows is built on weak foundations.

This is why solutions like Sherlock AI matter. By securing interviews themselves, Sherlock AI helps organizations prevent fraudulent access before it ever becomes a security incident.

In the age of AI-driven deception, protecting your systems is not enough.

You must protect how people enter them.