
Prevent AI cheating in remote interviews with 10 practical strategies and Sherlock AI for detecting suspicious patterns while keeping hiring decisions fair and accurate.

Abhishek Kaushik

Feb 9, 2026

Remote hiring made interviewing flexible and scalable, but it also opened the door to sophisticated cheating tools: real-time answer generators, hidden overlays, voice assistants, and even purpose-built cheating platforms.

A recent industry survey found that more than half of hiring managers believe AI has made it harder to trust candidate responses in remote interviews. In that survey, 59% of managers reported suspecting that candidates used AI to misrepresent their abilities, and 31% had encountered a candidate who turned out to be a different person than claimed.

The same survey found that fraudulent hires carry significant costs: 23% of employers reported losses of more than $50,000 due to hiring or identity fraud, and 10% reported losses of more than $100,000.

In this blog, we’ll walk through 10 practical, recruiter-focused ways to prevent AI cheating in remote interviews to help you assess true candidate ability without letting AI distort the outcome.

Understanding How AI Is Used to Cheat in Remote Interviews

Before we move to prevention strategies, it’s important to understand how candidates are using AI to gain an unfair advantage in remote interviews. Modern AI tools are powerful, and when misused, they can distort assessments of a candidate’s true skills. Here are the most common methods recruiters are seeing today:

1. Real-Time Answer Generators

Some candidates use AI tools that generate answers on the fly as questions are being asked.

These tools can parse text or voice input and instantly produce polished responses, whether for technical coding problems, case questions, or behavioral responses.

Answers sound good but may not reflect the candidate’s own knowledge or thought process.

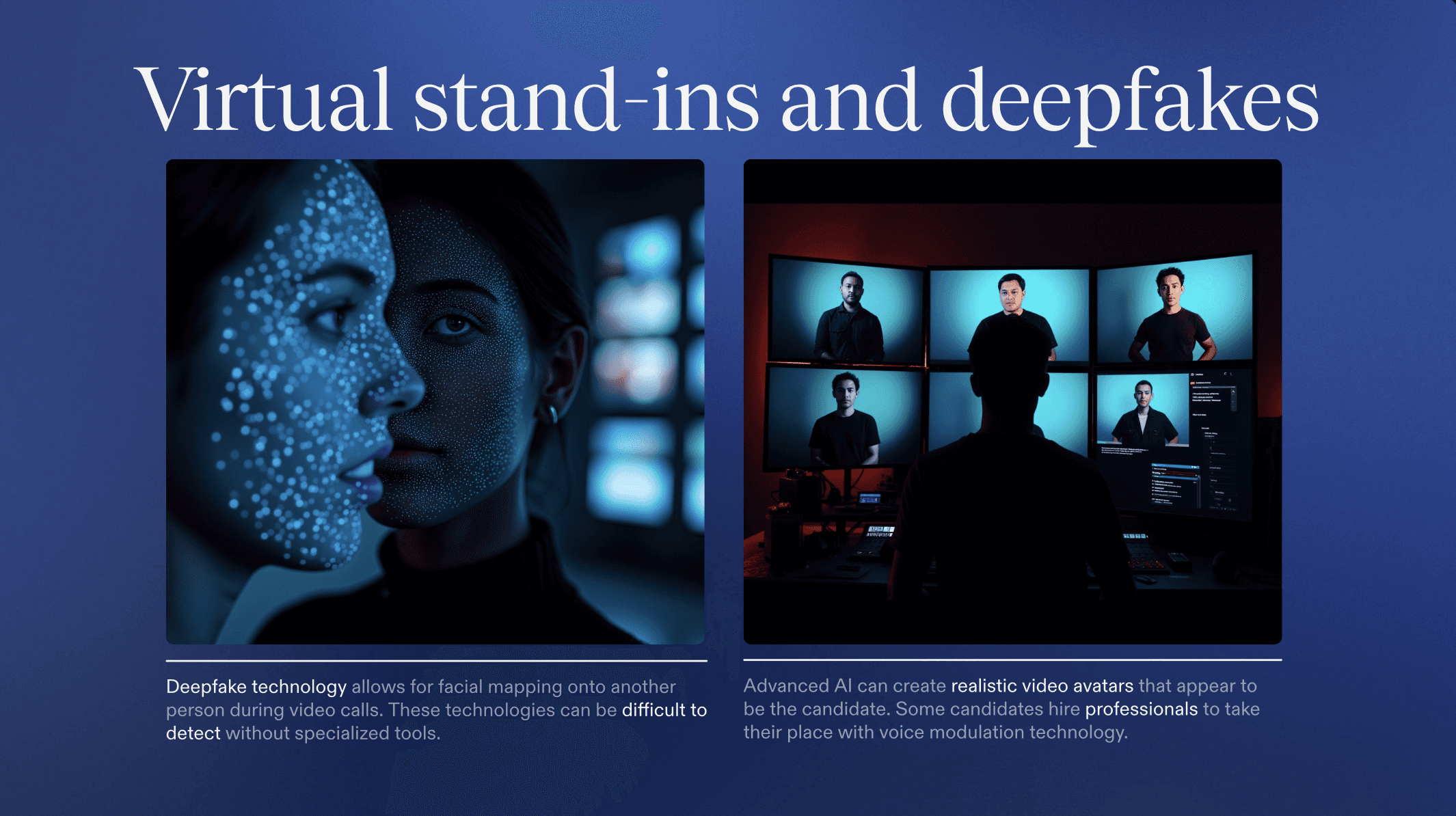

2. AI Voice Bots

With advancements in speech-to-text and real-time translation, AI voice bots can attend interviews on behalf of a candidate or assist them by listening to the interviewer’s questions and producing spoken responses.

These bots can hide behind a masked voice or substitute a human voice entirely, making it difficult to detect AI assistance without careful observation.

3. Second-Screen Copilots

Remote settings make it easy for candidates to consult a second screen or device during interviews.

On that device, an AI copilot can run large language models that offer instant solutions, code snippets, or structured interview answers, all unknown to the interviewer.

Unlike in-person settings where eye contact and engagement are obvious, a second screen can be nearly invisible on a video call.

4. Browser Overlays and Background LLMs

Some advanced AI tools work as browser overlays or background services that can detect on-screen text (like your interview question) and silently generate prompts, suggestions, or even full responses.

These tools may run in a hidden tab, background process, or overlay, making them particularly tricky to spot without dedicated monitoring.

Understanding these techniques helps frame what you’re defending against. With that context, let’s look at practical ways to safeguard your remote interviews in the next sections.

10 Ways to Prevent AI Cheating in Remote Interviews

AI is changing the way candidates approach remote interviews, and not always ethically. These 10 strategies show how to detect cheating and evaluate true candidate skills.

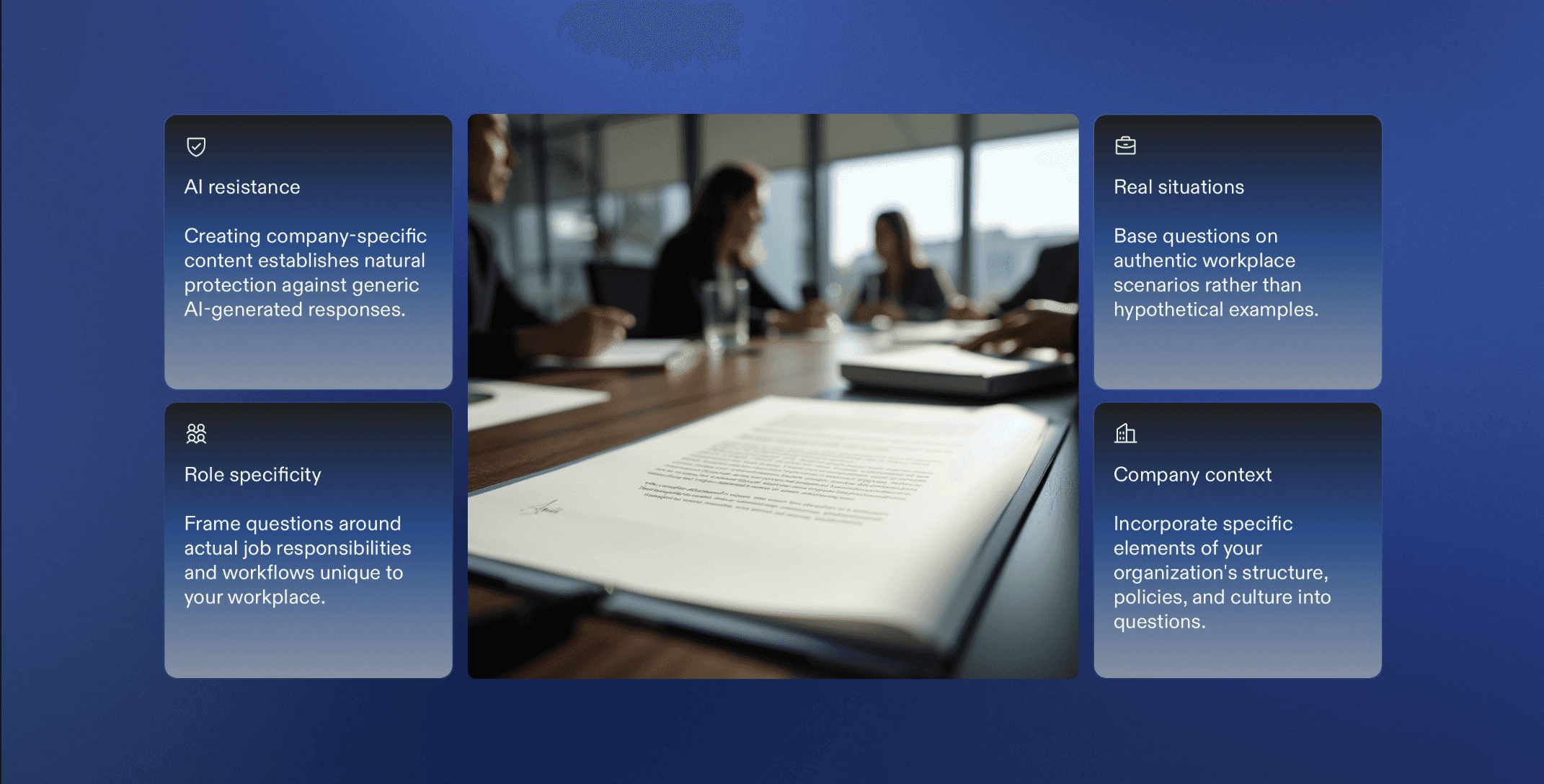

1. Design Questions AI Cannot Reliably Answer

Preventing AI cheating starts with what you ask. Generic or predictable questions are easy for AI to handle. Instead, focus on questions that require personal judgment and real experience.

Use experience-based prompts like:

“What’s a decision you regret and why?”

“What would you change about a past project?”

Make questions role- and company-specific, tied to your real environment rather than abstract scenarios.

Push candidates to explain tradeoffs and reasoning, not just outcomes.

Follow up with details that require consistency, such as metrics, constraints, or disagreements.

2. Make Interviews Adaptive, Not Scripted

Fixed interview scripts make it easy for AI tools to stay one step ahead. Adaptive interviews force candidates to think in real time instead of relying on pre-generated help.

For example, if a candidate says they “led a system redesign,” pause your planned flow and ask:

“What part of that system caused the most production incidents?”

“What did you remove, not just add?”

If they solve a problem quickly, change it mid-way:

“Now imagine latency doubles. What breaks first?”

“How would this change with half the budget?”

You can also ask them to revise their own answer:

“Given this new constraint, what would you change in your approach?”

These shifts make it hard for AI to keep up while revealing how the candidate actually reasons under pressure.

3. Enforce a Controlled Interview Environment

Even the best questions fail if candidates can freely use AI tools in the background. A controlled interview environment reduces the opportunity to access AI copilots without turning interviews into interrogations.

For example, require candidates to:

Share their screen throughout technical or case interviews

Keep only the interview platform and coding workspace open

Close messaging apps and browser extensions before starting

Watch for practical signals such as:

Frequent tab switching during questions

Audio glitches caused by virtual microphones or voice routing tools

Delays that align suspiciously with complex questions

Where possible, use interview platforms that detect tab changes, multiple displays, or virtual audio devices.
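To make the tab-switching signal concrete, here is a minimal sketch of how focus-loss events could be counted per question. Everything in it is an assumption for illustration: the `FocusEvent` record, the `blur_counts_per_question` helper, and the sample timings are hypothetical and do not describe how any particular interview platform works.

```python
from dataclasses import dataclass


@dataclass
class FocusEvent:
    """Hypothetical log record: when the interview window lost or regained focus."""
    t: float    # seconds since the interview started
    kind: str   # "blur" (window lost focus) or "focus" (window regained focus)


def blur_counts_per_question(events, question_windows):
    """Count focus-loss events inside each (start, end) question window."""
    counts = []
    for start, end in question_windows:
        n = sum(1 for e in events if e.kind == "blur" and start <= e.t < end)
        counts.append(n)
    return counts


# Illustrative data only: three one-minute questions.
events = [
    FocusEvent(12.0, "blur"), FocusEvent(15.5, "focus"),
    FocusEvent(70.2, "blur"), FocusEvent(74.0, "focus"),
    FocusEvent(81.9, "blur"), FocusEvent(90.0, "focus"),
]
windows = [(0, 60), (60, 120), (120, 180)]

print(blur_counts_per_question(events, windows))  # [1, 2, 0]
```

A count like this is only a prompt for a follow-up question. A candidate may lose window focus for innocent reasons, such as a notification or an accidental click.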

4. Prioritize Live Problem Solving Over Theoretical Q&A

Instead of asking, “What is the best approach for X?”, give candidates a live problem and ask them to work through it as you watch:

“Here’s a dataset. What’s the first thing you’d explore and why?”

“Design this API with me. Talk through your choices as you go.”

Interrupt with prompts like:

“Why did you choose that structure?”

“What would you do differently if scale doubled?”

Live problem solving exposes how candidates reason and makes AI assistance far harder to rely on without being obvious.

5. Require Candidates to Explain Their Reasoning

Don’t let candidates stop at conclusions. Interrupt and probe:

“Why did you choose this approach over the alternative?”

“What assumptions are you making here?”

“What would you change if this failed in production?”

For example, if a candidate suggests an architecture or solution, ask them to:

Walk through the decision path step by step

Identify risks they knowingly accepted

Explain what they would do differently with more time or fewer resources

Consistent, logical reasoning across follow-ups is difficult to fake with AI and quickly reveals whether the thinking is truly theirs.

6. Watch for Behavioral and Response Anomalies

Watch for signals such as:

Long, unnatural pauses before simple questions but instant answers for complex ones

Responses that are overly polished but vague when probed for specifics

Voice tone or pace changing mid-answer, especially after silence

A mismatch between casual conversation and suddenly formal, structured replies

For example, if a candidate gives a perfect explanation of a system but can’t recall basic implementation details, that gap is worth exploring further.

These anomalies don’t prove cheating on their own, but they tell interviewers where to dig deeper.
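The first signal in the list, slow starts on simple questions but instant answers on complex ones, can be roughly sketched as a comparison of average response delays. The function name `latency_inversion`, the difficulty labels, and the two-second threshold are all assumptions chosen for illustration, not calibrated values.

```python
from statistics import mean


def latency_inversion(answers, min_gap=2.0):
    """Return True when simple questions take noticeably longer to start than complex ones.

    answers: list of (difficulty, seconds_before_speaking) pairs, where
    difficulty is "simple" or "complex". min_gap is an assumed threshold in seconds.
    """
    simple = [s for d, s in answers if d == "simple"]
    hard = [s for d, s in answers if d == "complex"]
    if not simple or not hard:
        return False  # not enough data to compare
    return mean(simple) - mean(hard) >= min_gap


# Illustrative timings only.
suspicious = [("simple", 6.0), ("simple", 5.0), ("complex", 1.5), ("complex", 2.0)]
typical = [("simple", 1.0), ("complex", 4.0)]

print(latency_inversion(suspicious))  # True
print(latency_inversion(typical))     # False
```

As with the signals themselves, a `True` result here is a cue to probe further, not evidence of cheating.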

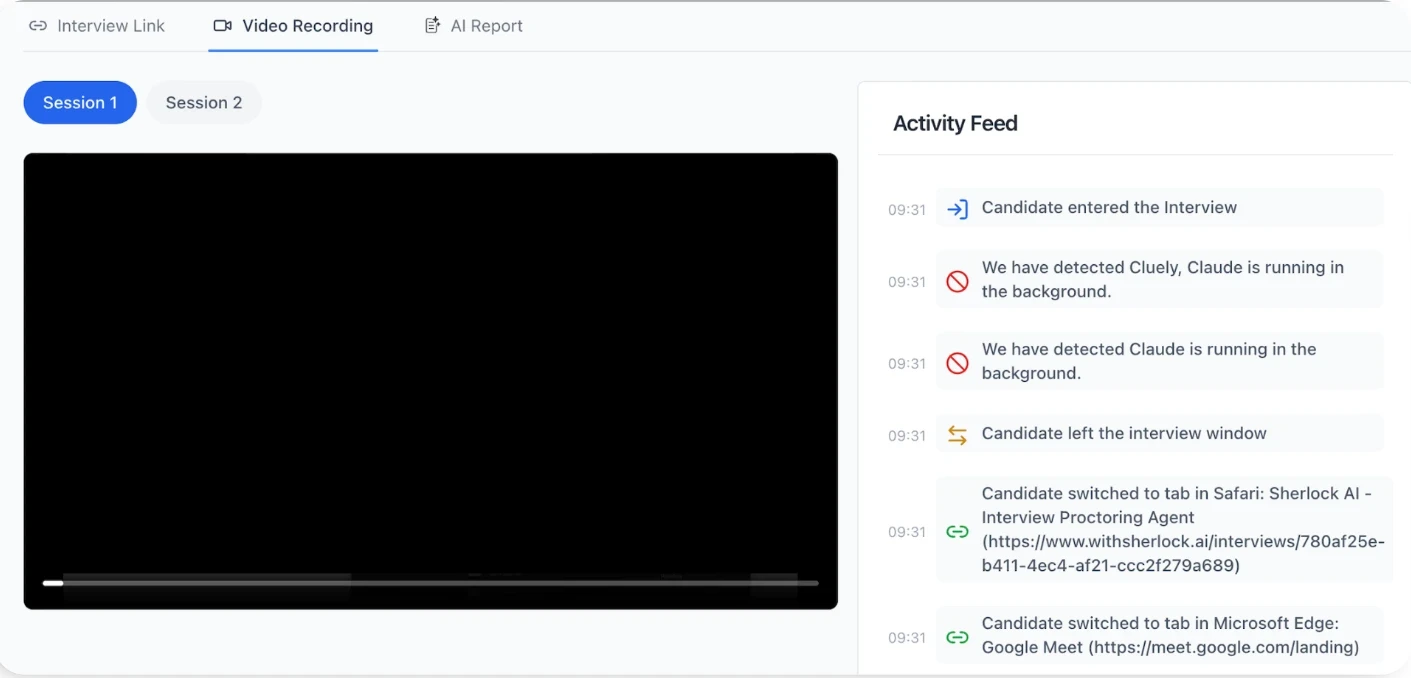

7. Use an AI‑Based Detection Tool Alongside Human Judgment (Sherlock AI)

While smart interview design is your first line of defense, AI‑based detection tools can help surface subtle signals you might miss in real time.

Sherlock AI combines machine learning with multimodal behavioral analysis to highlight patterns that may indicate external assistance, while always keeping the final judgment with human interviewers.

Key features of Sherlock AI you can rely on:

AI Copilot Signals: Spots patterns of delayed, overly polished answers indicative of external AI help.

Attention & Gaze Tracking: Detects repeated off-screen glances or unnatural eye movement.

Deepfake & Proxy Detection: Flags facial inconsistencies, mismatched lip-sync, or unauthorized remote participants.

Speech Consistency Analysis: Identifies sudden shifts in tone, vocabulary, or response speed.

Device & Screen Monitoring: Flags background apps, tab switching, or virtual audio devices.

Real-Time Alerts & Integrity Reports: Highlights suspicious behavior during the interview and summarizes findings afterward.

Platform Integration: Works with Zoom, Teams, and other video systems without disrupting the session.

Sherlock AI gives recruiters actionable insight into potential AI-assisted cheating without making automatic judgments, helping ensure authenticity while maintaining candidate experience.

8. Train Interviewers to Handle AI-Driven Cheating

Even with tools and secure environments, the human interviewer is the ultimate line of defense. Recruiters need specific techniques to regain control if AI assistance is suspected.

Rapid Follow-Ups: If a candidate gives a perfectly polished answer, immediately ask for clarifications or examples:

“Can you show me the exact steps you took?”

“What was the biggest challenge you faced and how did you resolve it?”

Reframe Questions Mid-Answer: Change the context slightly to see if the candidate can adjust their response:

“If the deadline were halved, how would your approach differ?”

“What would you do differently if your resources were limited?”

Compare Across Rounds: Ask similar questions in multiple interviews to check for consistency in reasoning and depth.

Observe Engagement Patterns: Watch for unnatural pauses, sudden perfection, or overuse of jargon, which are often signs of AI prompting.

9. Set Clear Integrity Expectations With Candidates

Clear communication about what’s allowed, what’s monitored, and why integrity matters reduces the incentive to cheat, including using AI tools.

Explain Rules Upfront: Clearly tell candidates which tools or devices are prohibited and why.

Share the Monitoring Process: Briefly describe how the interview is observed or recorded, emphasizing fairness.

Highlight Consequences: Candidates are more likely to comply when consequences are transparent.

Promote a Culture of Authenticity: Reinforce that the goal is to evaluate real skills, not perfection.

By setting expectations clearly and early, you create an environment where candidates are less likely to rely on AI or other shortcuts, while also maintaining trust and fairness in the hiring process.

10. Combine Multiple Measures for Maximum Security

No single strategy can completely prevent AI-assisted cheating. The most effective approach is to layer multiple safeguards.

Mix Question Types: Use experience-based, live problem-solving, and follow-up reasoning questions together.

Secure the Environment: Limit tabs, background apps, and virtual audio devices while monitoring screen activity.

Train Interviewers: Equip them to spot anomalies, probe deeper, and adapt questions in real time.

Leverage AI Detection Tools: Use platforms like Sherlock AI to highlight suspicious patterns without replacing human judgment.

Set Clear Expectations: Communicate rules, monitoring processes, and integrity standards to candidates upfront.

For example, during a technical interview:

Ask the candidate to solve a coding problem live.

Observe reasoning and engagement for anomalies.

Check their screen and device activity for unusual patterns.

Use AI detection insights as a reference, then follow up with dynamic questions if needed.

Ensure the candidate knows the rules and the purpose of the process.
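One way to picture the layering idea is a small helper that only suggests follow-up when more than one independent layer has raised something. This is a hedged sketch: the layer names, the `follow_up_areas` function, and the two-layer threshold are hypothetical, and the output is a list of areas to probe, never a verdict.

```python
def follow_up_areas(signals, min_layers=2):
    """Return the layers that raised observations, but only if enough layers agree.

    signals: dict mapping a layer name to a list of interviewer or tool observations.
    min_layers: assumed minimum number of layers with observations before
    any follow-up is suggested.
    """
    flagged = sorted(name for name, observations in signals.items() if observations)
    return flagged if len(flagged) >= min_layers else []


# Illustrative notes from one technical interview.
signals = {
    "reasoning": ["could not explain a tradeoff in their own solution"],
    "environment": [],
    "detection_tool": ["repeated off-screen glances"],
}

print(follow_up_areas(signals))  # ['detection_tool', 'reasoning']
print(follow_up_areas({"reasoning": ["vague on specifics"], "environment": []}))  # []
```

Requiring agreement between layers reflects the point above that no single signal is reliable on its own, and the decision itself stays with the interviewer.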

Conclusion

AI-assisted cheating in remote interviews is a growing challenge, but it can be managed with the right strategies. By combining smart question design, adaptive interviews, controlled environments, behavioral monitoring, and tools like Sherlock AI, recruiters can detect suspicious patterns while keeping final decisions human. Layering these measures ensures candidates are evaluated fairly, reduces the risk of cheating, and strengthens the overall trustworthiness of your hiring process.