
A practical guide for recruiters to spot synthetic voices in live interviews, understand AI voice fraud risks, and use Sherlock AI for verification.

Abhishek Kaushik

Feb 5, 2026

Artificial intelligence has moved beyond written answers and now enters interviews through synthetic voices. Voice cloning and real-time text-to-speech tools let candidates speak through AI-generated audio that sounds polished, confident, and highly articulate. In remote interviews, this creates a new layer of hiring risk that is far harder to detect than traditional cheating. Security researchers demonstrated this by creating a fake candidate named “Gary” with synthetic voice and video for a Zoom interview scenario. The candidate appeared human to the naked eye, yet deepfake detection tools flagged the feed as manipulated within seconds, showing how convincing synthetic voice can be in live settings.

Synthetic voices are designed to sound human, but they still lack subtle imperfections, emotional variability, and natural conversational flow. Recruiters who understand these gaps can quickly identify when an AI voice is being used to mask a candidate’s true communication ability. Industry reports indicate that roughly 15 percent of recruiters have seen deepfake faces or voice cloning attempts during remote interviews.

This guide explains how synthetic voices work, the red flags recruiters should watch for, and how Sherlock AI helps detect voice-based fraud in live interviews.

What Are Synthetic Voices in Interviews

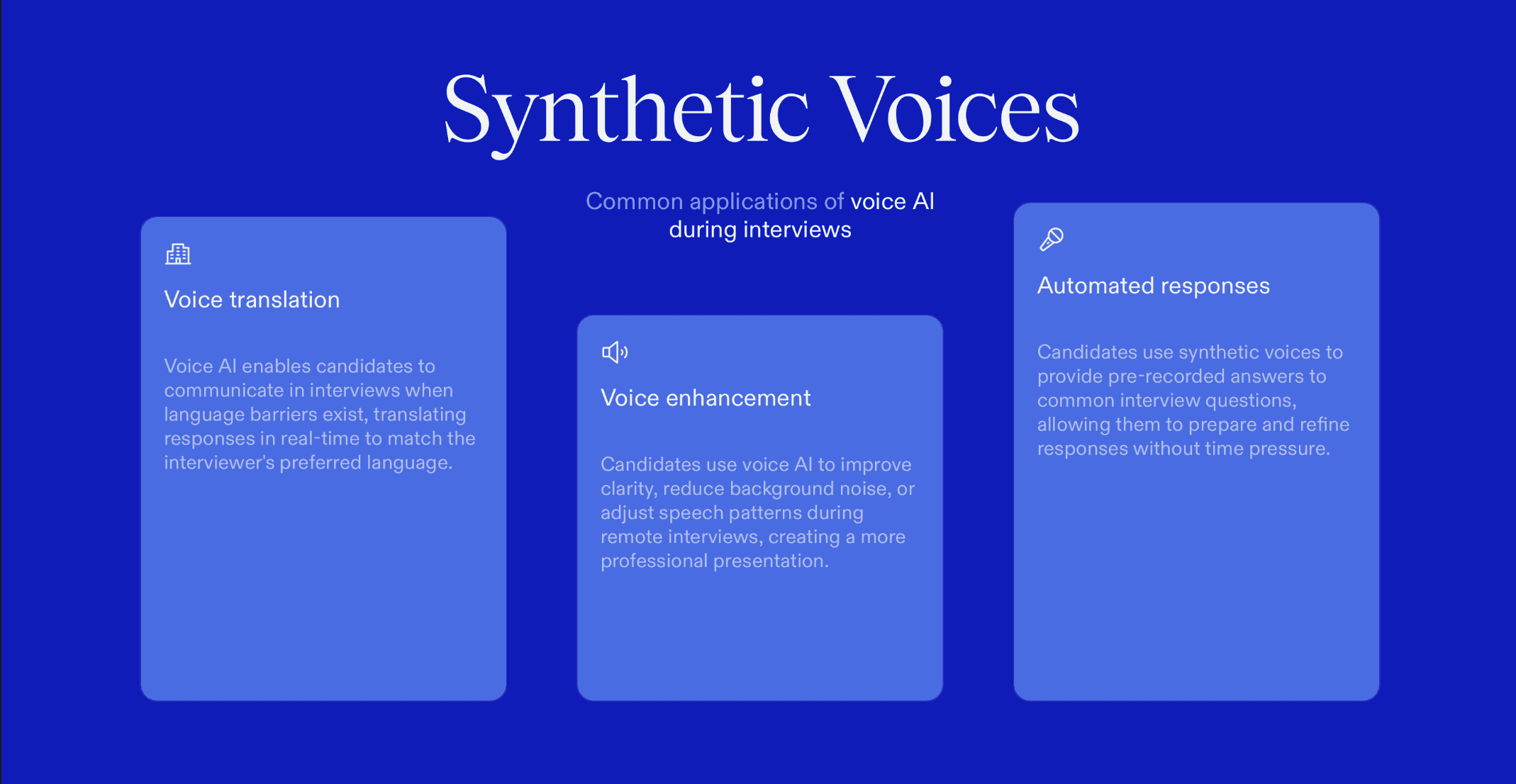

Synthetic voices are AI-generated speech created using voice cloning or text-to-speech systems. In an interview setting, candidates may:

Use AI to convert typed answers into spoken responses

Clone their own voice or another voice to sound more fluent

Run AI copilots that generate answers that are spoken aloud instantly

Unlike reading from notes, these methods let candidates appear natural while actually relying on AI-generated speech.

This is becoming more common in remote hiring because voice AI tools now work in real time with very low delay.

Why Synthetic Voice Fraud Is a Growing Hiring Threat

A candidate using synthetic voice can pass interviews without truly possessing communication skills, technical understanding, or spontaneous thinking ability.

1. Voice AI tools are widely accessible and easy to hide

Advanced voice cloning and text-to-speech tools are now inexpensive, often browser-based, and require little technical expertise. Candidates can run them quietly in the background without triggering traditional monitoring systems.

2. Recruiters focus heavily on verbal communication during interviews

Interviewers rely on speech to evaluate confidence, clarity, and subject knowledge. Synthetic voices exploit this trust by presenting polished communication that may not reflect the candidate’s true ability.

3. Synthetic speech can sound more confident than the real candidate

AI-generated voices are designed to eliminate hesitation, nervousness, and inconsistency. This creates an artificial impression of strong communication skills and professional maturity.

4. Traditional plagiarism or browser monitoring tools cannot detect audio manipulation

Most hiring safeguards focus on text copying or screen activity. Audio-based AI assistance operates outside these systems, making it largely invisible to existing controls.

5. Candidates can pass interviews without real communication or thinking skills

With AI generating responses in real time, candidates may answer complex questions without understanding them. This prevents recruiters from accurately assessing problem-solving and critical thinking.

6. Companies face costly hiring and post-onboarding failures

When the candidate’s real abilities surface after hiring, performance drops quickly. The result is costly hiring mistakes: wasted training costs, lost productivity, and repeated rehiring efforts.

How to Spot Synthetic Voices in Live Interviews

1. Listen for Overly Perfect Sentence Structure

Synthetic speech often sounds like written content read aloud rather than spontaneous conversation.

How it appears in interviews:

The candidate answers in numbered or essay-style formats such as “There are three key reasons…”

Responses feel complete and polished from the first word with no verbal corrections

Example:

When asked a behavioral question, the candidate responds with a structured mini-presentation instead of a natural story.

2. Assess Emotional Variation in the Voice

AI voices often struggle to convey authentic emotion or shift tone naturally.

How it appears in interviews:

The same pitch and pace are used for technical, personal, and opinion-based questions

No excitement when discussing achievements and no hesitation on difficult topics

Example:

The candidate describes a major challenge with the same calm tone used to explain a definition.
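For teams that record interviews and want to put a number on the flat-tone signal, one simple measure is the coefficient of variation of pitch: the standard deviation of pitch divided by its mean, across voiced frames. The sketch below assumes you already have per-frame pitch estimates in Hz from some pitch tracker, with 0 marking unvoiced frames. The function name `pitch_variation` and the 0.05 threshold are illustrative assumptions, not calibrated values and not a description of how Sherlock AI works.

```python
from statistics import mean, pstdev


def pitch_variation(pitch_hz, flat_threshold=0.05):
    """Summarise how much a speaker's pitch moves (hypothetical helper).

    pitch_hz: per-frame pitch estimates in Hz; 0 marks unvoiced frames
              (an assumed convention of the upstream pitch tracker).
    flat_threshold: illustrative cut-off for "monotone", not a calibrated value.
    """
    voiced = [p for p in pitch_hz if p > 0]
    if len(voiced) < 2:
        raise ValueError("need at least two voiced frames")

    # Coefficient of variation: pitch spread relative to its average,
    # so naturally high and low voices are compared on the same scale.
    cv = pstdev(voiced) / mean(voiced)
    return {
        "cv": cv,
        "range_hz": max(voiced) - min(voiced),
        "flat": cv < flat_threshold,
    }
```

A monotone answer such as `[120, 121, 119, 120, 0, 121, 119]` yields a coefficient of variation under 0.01, while an expressive one swinging between 100 and 180 Hz lands around 0.2. A single flat answer proves nothing; the signal is flatness that persists across technical, personal, and opinion questions.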

3. Watch for Consistent Response Delays

AI assisted speech often introduces a noticeable processing pause before answers.

How it appears in interviews:

A recurring pause of 3 to 5 seconds before complex responses

The delay happens even when the question is simple or conversational

Example:

Basic follow-up questions still trigger long pauses, as if the response is being generated.
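The delay pattern can be checked from call timestamps. This minimal sketch assumes you have logged, for each exchange, when the interviewer stopped speaking and when the candidate started. It flags interviews where most delays fall in the 3 to 5 second band described in this guide and barely vary from question to question. The function name `latency_profile` and the 80 percent and 0.5 second cut-offs are hypothetical choices for illustration.

```python
from statistics import mean, pstdev


def latency_profile(turns, band=(3.0, 5.0), min_share=0.8, max_spread=0.5):
    """Summarise response delays across an interview (hypothetical helper).

    turns: list of (question_end_s, answer_start_s) timestamps in seconds.
    band: delay range in seconds matching the 3 to 5 second pattern.
    min_share, max_spread: assumed cut-offs for "consistent" delays.
    """
    if not turns:
        raise ValueError("need at least one question/answer pair")

    delays = [answer_start - question_end for question_end, answer_start in turns]
    low, high = band
    share_in_band = sum(low <= d <= high for d in delays) / len(delays)
    spread = pstdev(delays)  # 0.0 when every delay is identical

    return {
        "mean_delay": mean(delays),
        "spread": spread,
        "share_in_band": share_in_band,
        # Flag only when delays are BOTH mostly 3-5 s AND nearly uniform.
        "consistent_delay": share_in_band >= min_share and spread <= max_spread,
    }
```

The design choice is to require both conditions: natural speakers usually answer simple questions quickly and hard ones slowly, so their delays spread out, while a fixed generation pause looks the same regardless of difficulty.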

4. Notice the Absence of Human Fillers

Natural speakers pause, think aloud, and use fillers during conversation.

How it appears in interviews:

No use of phrases like “let me think” or “that is a good question”

Answers begin immediately and flow perfectly without interruption

Example:

Every response starts cleanly without any verbal hesitation or self-correction.

5. Identify Mispronunciations of Specific Terms

Voice models often struggle with uncommon or contextual language.

How it appears in interviews:

Incorrect pronunciation of company names or internal tools

Awkward handling of industry specific jargon

Example:

The candidate explains a role confidently but mispronounces the employer’s product name.

6. Check for Audio and Video Mismatch

Synthetic audio may not align perfectly with visual cues.

How it appears in interviews:

Lips move slightly before or after the audio starts

Facial expressions remain static while speech continues

Example:

The candidate finishes speaking but mouth movement lags or cuts off early.
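Audio and video misalignment can be estimated by cross-correlating two per-frame signals: how open the mouth is and how loud the audio is. The lag with the highest correlation approximates the offset between them. This sketch assumes both signals have already been extracted at the same frame rate, for example from a face-landmark tool and an audio level meter; `best_lag` and `pearson` are hypothetical helpers, not part of any specific product.

```python
from statistics import mean


def pearson(a, b):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    ma, mb = mean(a), mean(b)
    num = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    den = (sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b)) ** 0.5
    return num / den if den else 0.0


def best_lag(mouth, audio, max_lag=10):
    """Find the frame offset that best aligns audio loudness with mouth opening.

    mouth, audio: per-frame signals sampled at the same frame rate (assumed).
    Returns (lag, correlation). A positive lag means the audio trails the lips;
    a negative lag means the audio leads them.
    """
    best_corr, best = float("-inf"), 0
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            # Compare mouth[t] with audio[t + lag].
            a, b = mouth[:len(mouth) - lag], audio[lag:]
        else:
            # Compare mouth[t - lag] with audio[t].
            a, b = mouth[-lag:], audio[:len(audio) + lag]
        n = min(len(a), len(b))
        if n < 3:
            continue  # too little overlap to say anything
        c = pearson(a[:n], b[:n])
        if c > best_corr:
            best_corr, best = c, lag
    return best, best_corr
```

At an assumed 25 frames per second, a best lag of 3 frames means the audio trails the lips by about 120 ms. Small offsets can be normal on video calls because of network and encoding delays, so an offset that persists or drifts over the interview matters more than a single measurement.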

7. Listen for Unnatural Audio Cleanliness

AI generated audio often lacks environmental realism.

How it appears in interviews:

No breathing or background noise at all

Audio sounds compressed or digitally smooth

Example:

The voice remains perfectly clear even when the candidate moves or turns away.
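One way to quantify “too clean” audio is to measure the noise floor: the average level of the quietest stretches of the recording, in decibels relative to full scale (dBFS). Real microphones in real rooms rarely produce true digital silence between words, although aggressive noise suppression in conferencing software can push the floor very low, so this is a weak signal on its own. The sketch assumes mono samples normalised to the range -1.0 to 1.0; the 400-sample frame (25 ms at 16 kHz), the 10 percent quiet share, and the -80 dBFS threshold are illustrative assumptions.

```python
import math


def noise_floor_dbfs(samples, frame_size=400, quiet_fraction=0.1):
    """Average RMS level of the quietest frames, in dBFS (hypothetical helper).

    samples: mono audio as floats in [-1.0, 1.0] (assumed input format).
    frame_size: samples per frame; 400 is 25 ms at 16 kHz (an assumption).
    quiet_fraction: share of frames treated as "between words".
    """
    # Split into non-overlapping full frames; a trailing partial frame is dropped.
    frames = [
        samples[i:i + frame_size]
        for i in range(0, len(samples) - frame_size + 1, frame_size)
    ]
    if not frames:
        raise ValueError("need at least one full frame of audio")

    # Root-mean-square level of each frame, quietest first.
    levels = sorted(math.sqrt(sum(s * s for s in f) / frame_size) for f in frames)

    # Average the quietest frames to estimate the background noise floor.
    k = max(1, int(len(levels) * quiet_fraction))
    floor = sum(levels[:k]) / k
    if floor == 0:
        return float("-inf")  # true digital silence
    return 20 * math.log10(floor)


def too_clean(samples, threshold_dbfs=-80.0, **kwargs):
    """Assumed rule: flag audio whose noise floor sits below the threshold."""
    return noise_floor_dbfs(samples, **kwargs) < threshold_dbfs
```

A clip whose gaps are exact zeros returns negative infinity and is flagged, while even faint room hiss at about 1 percent of full scale sits near -43 dBFS and is not.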

8. Observe How the Candidate Handles Interruptions

AI speech struggles to adapt when the conversation flow changes.

How it appears in interviews:

The candidate freezes or restarts after being interrupted

They cannot resume mid-thought naturally

Example:

When interrupted, the candidate repeats the entire answer from the beginning.

9. Test for Scripted Delivery

AI generated speech often follows a predetermined structure.

How it appears in interviews:

Answers sound memorized rather than reasoned

No deviation when follow up questions are asked

Example:

The candidate repeats the same phrasing even when the question is reframed.

10. Ask for Personal and Spontaneous Examples

AI cannot draw on authentic lived experience.

How it appears in interviews:

Vague or generic stories with no emotional detail

Avoidance of questions about specific failures or conflicts

Example:

The candidate explains leadership in theory but cannot describe a real team situation.

11. Introduce Real Time Thinking Tasks

Live reasoning exposes synthetic assistance quickly.

How it appears in interviews:

The candidate delivers prepared-sounding logic instead of thinking aloud

No pauses or corrections during problem-solving

Example:

Asked to explain their thought process step by step, the answer sounds rehearsed.

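12. Confirm Using Movement-Based Checks

Synthetic voice setups often break down during physical motion, because generated audio does not respond naturally to changes in head position or distance from the microphone.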

How it appears in interviews:

Audio lags or distorts when the candidate turns their head

Lip sync issues appear during movement

Example:

While speaking and turning, the voice stays steady but facial timing slips.

How Sherlock AI Helps Detect Synthetic Voice Fraud

Sherlock AI is purpose built to protect interview authenticity in an era where voice based AI assistance is increasingly difficult to detect. Unlike generic proctoring or screen monitoring tools, Sherlock AI focuses on live behavioral intelligence and voice analysis, the two areas where synthetic speech leaves detectable traces.

Sherlock AI operates passively during interviews, allowing recruiters to evaluate candidates naturally while the system continuously assesses authenticity signals in the background.

Sherlock AI can:

1. Detect Real Time AI Voice Modulation

Sherlock AI identifies vocal patterns commonly produced by voice cloning and text-to-speech systems, including digital smoothing and unnatural frequency consistency.

2. Identify Unnatural Speech Cadence Patterns

The platform analyzes pacing, rhythm, and response timing to detect over-structured or machine-generated speech delivery.

3. Flag Inconsistencies Between Voice and Facial Movement

Sherlock AI monitors synchronization between spoken audio and visible mouth movement to surface subtle lip sync anomalies.

4. Monitor Hesitation and Latency Patterns

Repeated response delays and predictable processing pauses are tracked as indicators that responses may be AI-generated.

5. Analyze Conversational Adaptability

Sherlock AI observes how candidates respond to interruptions, reframed questions, and sudden topic shifts, areas where AI-assisted speech often degrades.

6. Assess Emotional and Tonal Variation

The system evaluates pitch range and tonal dynamics to detect emotional flatness commonly found in synthetic voices.

7. Provide Recruiter-Friendly Risk Indicators

Instead of raw technical data, Sherlock AI presents clear risk signals that help recruiters decide when deeper probing is needed.

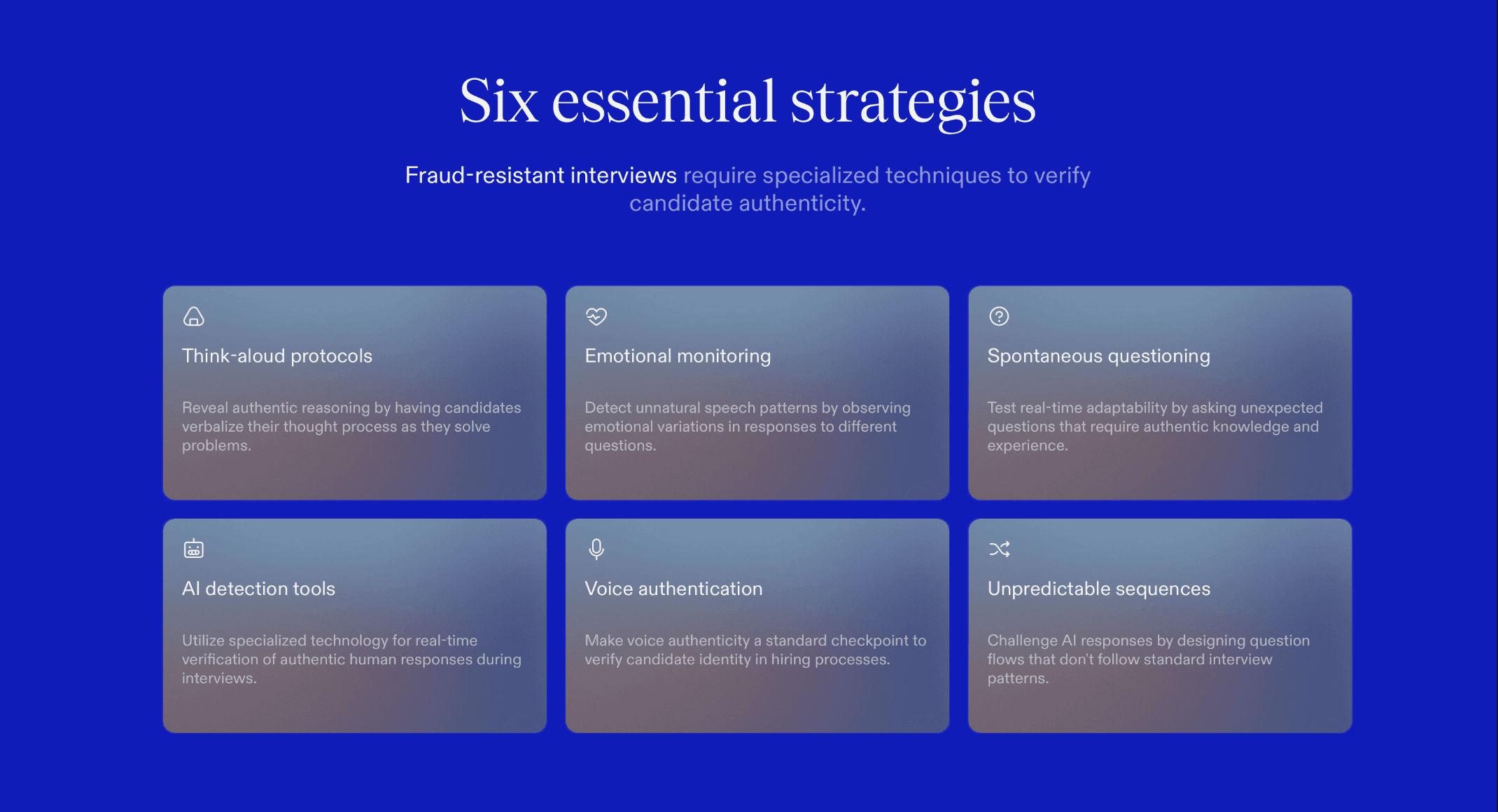

Best Practices for Future-Proof Interviews

To ensure interview integrity and reduce the risk of synthetic voice fraud, recruiters should adopt the following preventive measures:

Include spontaneous, follow-up-heavy rounds to test adaptability.

Ask candidates to think aloud to expose real-time reasoning.

Focus on emotional variation in speech to detect unnatural flatness.

Avoid predictable question sequences to challenge AI-generated responses.

Use AI fraud detection tools like Sherlock AI for real-time monitoring.

Treat voice authenticity as a hiring checkpoint in every interview.


Final Takeaway

Synthetic voices are becoming the newest invisible tool candidates can use to bypass traditional hiring filters. They can make a candidate appear more confident, articulate, and knowledgeable than they truly are, making it increasingly difficult for recruiters to rely on intuition alone.

By carefully observing linguistic, audio, visual, and behavioral red flags, and combining these insights with Sherlock AI’s real-time detection capabilities, hiring teams can maintain trust in the interview process. Sherlock AI provides actionable risk indicators, flags inconsistencies, and helps recruiters ask the right follow-up questions to verify authenticity.

In today’s remote interview environment, the biggest question is no longer “Is the candidate present?”

It is “Is the voice truly theirs?”

With the right strategies and tools, including Sherlock AI, recruiters can confidently ensure that the candidate on screen is the candidate speaking, protecting their organization from hidden AI-assisted deception.