
Learn how candidates are cheating in technical interviews and discover how structured interview design combined with Sherlock AI helps recruiters detect fraud and hire with confidence.

Abhishek Kaushik

Feb 3, 2026

Technical interviews today are becoming increasingly vulnerable to cheating.

According to a survey of recent job seekers, about 7 in 10 candidates admitted to lying or cheating at some point during the hiring process, with 22% specifically acknowledging cheating on technical assessments.

The rise of generative AI has added another layer of complexity. Major employers have publicly acknowledged concerns about candidates using AI tools to generate answers during interviews, prompting some companies to rethink how technical interviews are conducted in remote settings. What was once an occasional issue has become a systemic risk that can directly affect hiring quality, team performance, and long-term retention.

Common Ways Candidates Cheat in Technical Interviews

Before we talk about solutions, it’s critical to understand how candidates are actually gaming technical interviews today, from AI-powered tools to identity fraud. These issues are not hypothetical; employers and the media are documenting them as real problems reshaping hiring.

1. AI Coding Assistants and Real-Time Answer Generation

Sophisticated AI tools can now listen to questions in real time and generate code or interview responses instantly, often without obvious signs to the interviewer.

“Invisible help”: Tools like Interview Coder and similar extensions are designed to work “undetected” during virtual interviews, producing ready-to-present answers on the fly. Recruiters have noticed that candidates pause just long enough for AI to generate perfect responses, then deliver them almost verbatim.

High prevalence: About 20% of professionals in a Blind survey admitted they’ve secretly used AI tools during interviews.

Real-world impact: Google’s CEO has publicly acknowledged that AI misuse in remote coding interviews has risen so much that the company is considering requiring in-person rounds to accurately assess candidate fundamentals.

AI doesn’t just help with syntax; it can outline full solutions, explain reasoning, and coach responses live. This makes it harder for interviewers to gauge genuine understanding.

2. Proxy Interviews & Remote Collaboration

Some candidates are no longer interviewing alone: they’re getting off-camera help.

Remote coaching: Candidates may have someone else listening and feeding answers via an earpiece, secondary device, or live chat while the candidate repeats them. Recruiters have reported odd patterns, such as out-of-sync answers and unnatural phrasing that feels “second-hand”, suggesting that another person or a machine is assisting.

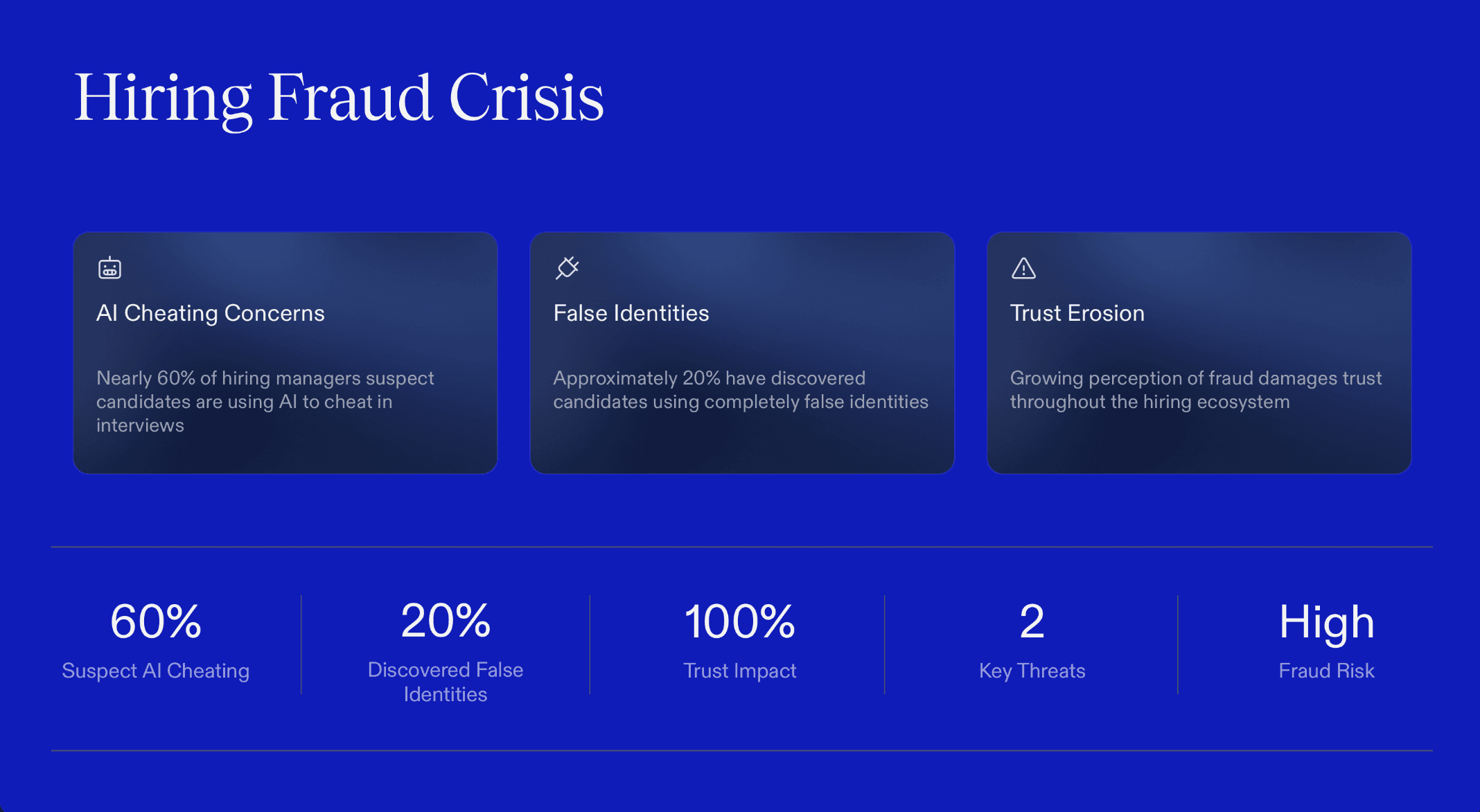

Unreliable identity: A survey of U.S. hiring managers found 31% had interviewed someone who later turned out to be using a false identity, and nearly two-thirds suspected candidates using AI to misrepresent themselves.

Some hiring managers have reported candidates hiding auxiliary devices just outside camera view, such as secondary screens that feed live assistance while the candidate appears to interview normally.

3. Deepfake or Identity Substitution Tactics

AI isn’t just helping with answers; it’s helping people disguise who they are.

Deepfake interviews: A growing number of recruiters report seeing candidates attempt to mask their real identities using AI-generated avatars or filters. In one documented case, an interviewer terminated a call after suspecting an AI overlay was disguising the interviewee.

Organized fraud rings: Major cybercrime investigations (e.g., those involving North Korean IT operatives) show that fake identities paired with remote interviews are being used to infiltrate corporate networks, often with sophisticated cover stories and stolen credentials designed to pass pre-employment checks.

4. Hidden Devices, Second Screens & Off-Camera Help

Not all cheating is about outsourcing the interview; many candidates use technology tricks to get ahead.

Second screens and hidden tabs: Candidates may keep private browser windows, code notebooks, or AI answers open on a hidden monitor. Recruiters have noticed eye movements, reflections in glasses, and repeated pauses that suggest the candidate is consulting unseen sources mid-interview.

Smartphone assistance: Viral social media clips show interviewees holding phones near their laptops during calls, using real-time tools to draft responses and read them back as their own.

A widely shared social post showed a candidate using an AI assistant on their phone during a live interview and reading its real-time answers aloud to the recruiter.

Why This Matters

These cheating tactics erode trust, inflate hiring metrics, and lead to bad business outcomes:

Bad hires & lost productivity: Candidates selected via AI tricks often struggle on day one because they don’t actually have the skills they purported to demonstrate live.

Recruiter burnout: Interviewers report being forced to spend time playing detective, watching for unnatural pauses, shifting eyes, and repeated “perfect but hollow” answers instead of focusing on evaluating genuine talent.

Widespread fraud perception: Nearly 60% of hiring managers surveyed suspect AI-assisted deception, and a fifth have encountered candidates later revealed to be using false identities.

Structuring Interviews to Reduce Cheating

To reduce cheating and ensure your technical interviews actually measure skill, the design of your interview process matters as much as any tool you use. Thoughtful structuring not only makes cheating harder but helps you spot it quickly when it does happen.

1. Structured, Role-Specific Technical Questions

Asking every candidate the same core questions with consistent evaluation criteria removes ambiguity and makes it harder for candidates to succeed by rote memorization or recycled online solutions. Structured interviews have been shown to increase fairness and predictive validity compared to informal chats.

How to implement:

Develop question banks tied to real responsibilities of the role rather than generic algorithm drills.

Rotate questions and avoid widely shared public problem sets, which AI or past candidates can easily answer.

Companies like Google and Stripe avoid recycled interview puzzles and instead craft targeted, domain-relevant prompts that candidates can’t simply copy from public sources.
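To make “rotate questions” concrete, here is a minimal Python sketch of one possible rotation policy. It assumes the role-specific bank is kept as a plain list and that you want to avoid reusing any question within the last few interviews. The `QuestionRotator` class and its `cooldown` parameter are hypothetical names for illustration, not part of any hiring platform.

```python
from __future__ import annotations

import random
from collections import deque


class QuestionRotator:
    """Hypothetical helper: draws questions from a role-specific bank while
    skipping any question used in the last `cooldown` interviews."""

    def __init__(self, bank: list[str], cooldown: int, seed: int | None = None):
        # At least one question must always remain eligible.
        if cooldown >= len(bank):
            raise ValueError("cooldown must be smaller than the bank size")
        self.bank = list(bank)
        # A bounded deque automatically forgets the oldest used question.
        self.recent: deque[str] = deque(maxlen=cooldown)
        self.rng = random.Random(seed)

    def next_question(self) -> str:
        # Only questions outside the recent window are eligible.
        eligible = [q for q in self.bank if q not in self.recent]
        choice = self.rng.choice(eligible)
        self.recent.append(choice)
        return choice
```

With a bank of 20 questions and `cooldown=8`, no question repeats within any run of 9 consecutive interviews. The larger and more role-specific the bank, the less any single leaked question is worth to a candidate.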

2. Live Problem-Solving with Follow-Up Reasoning

A candidate can paste or generate code, but cannot easily produce sound reasoning on demand. Follow-up questions force them to articulate why and how they reached a solution, which is much harder to outsource to AI or an external helper.

How to implement:

Ask “Why did you choose this approach?” and “What would you change if …?” after they solve or code something.

Probe inconsistencies between solution and explanation. Genuine deep understanding stands out.

This moves interviews from code-output evaluation to cognitive evaluation, where patterns of cheating (e.g., pasted code without context) become much easier to detect.

3. Pair Programming or Collaborative Coding

When candidates code with an interviewer in real time, such as in a live pair-programming session, there’s much less room for hidden tools or collaboration with external helpers.

How to implement:

Use live coding platforms and share screens throughout.

Ask the candidate to think aloud and adjust tasks dynamically during the session.

You immediately see typing rhythm, logic evolution, and interactive problem-solving instead of just a final answer. This gives you richer insights into how they think and makes it difficult for outside assistance to hide.
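Typing rhythm can also be checked after the session. The Python sketch below assumes your coding platform can export an edit log with a timestamp and an inserted-character count per change; the `EditEvent` shape, the function names, and the 80-character threshold are all hypothetical. It flags single edits that insert a large block of text at once, the “pasted code without context” pattern described earlier, and reports what share of the final code arrived that way.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class EditEvent:
    """One editor change event (hypothetical shape; real platforms differ)."""
    t: float        # seconds since the session started
    inserted: int   # characters inserted by this single edit


def flag_bulk_inserts(events: list[EditEvent], max_chars: int = 80) -> list[EditEvent]:
    """Return edits that insert more than `max_chars` characters in one step,
    which is what a paste looks like compared with ordinary typing."""
    return [e for e in events if e.inserted > max_chars]


def bulk_insert_share(events: list[EditEvent], max_chars: int = 80) -> float:
    """Fraction of all inserted characters that arrived via bulk inserts."""
    total = sum(e.inserted for e in events)
    if total == 0:
        return 0.0
    bulk = sum(e.inserted for e in flag_bulk_inserts(events, max_chars))
    return bulk / total
```

A flagged edit is a cue for a follow-up such as “Walk me through this block,” not proof of cheating: editor autocompletion or a candidate pasting their own earlier snippet produces the same signal.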

4. Randomized Question Sets & Real-Time Modifications

Static question banks get shared, cached, and exploited. Randomizing questions, and even making small real-time tweaks, significantly reduces the chance that a candidate has seen the problem or prepared an exact answer.

How to implement:

Shuffle task elements (e.g., variable names, constraints) right before or during the interview.

Inject on-the-fly twists in live problem-solving sessions to see if candidates can reason, not recite.

AI answer tools thrive on predictability; mix it up, and you put honest thinking back at the center of the interview.
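Shuffling task elements can be as simple as filling a template for each interview. The sketch below shows one hypothetical way to do it in Python with the standard library’s `string.Template`; the task wording, the name list, and the value ranges are illustrative assumptions, not a recommended question. Seeding the generator with something like an interview ID keeps each variant reproducible for the people scoring it.

```python
from __future__ import annotations

import random
from string import Template

# Hypothetical task template; placeholders are filled per interview.
TASK = Template(
    "Write a function that takes a list `$name` of non-negative integers and "
    "returns the length of the longest contiguous run whose sum is at most "
    "$limit. Assume len($name) <= $max_len."
)

# Illustrative pool of variable names to rotate through.
NAMES = ["readings", "latencies", "bids", "scores"]


def variant(seed: int) -> dict[str, str]:
    """Pick the surface details (name, limit, input size) for one interview."""
    rng = random.Random(seed)
    return {
        "name": rng.choice(NAMES),
        "limit": str(rng.randrange(50, 501, 50)),
        "max_len": str(rng.choice([10**4, 10**5, 10**6])),
    }


def render(seed: int) -> str:
    """Produce the task text a candidate sees for the given seed."""
    return TASK.substitute(variant(seed))
```

Changing the input-size bound is a useful live twist on its own: moving from 10^4 to 10^6 elements rules out a quadratic solution, so a memorized or generated answer for the original constraints may no longer fit.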

5. Layered, Multi-Stage Evaluation

Relying on just one type of screening (e.g., a coding quiz) makes your process vulnerable to cheating. Instead, stack multiple methods so that progress is based on consistency across different evaluation styles.

Layered approach:

Take-Home Projects: Realistic tasks tied to your codebase that require follow-up discussions.

Live Coding Session: Pair programming or coding with narrated reasoning.

Behavioral and Design Rounds: Explain architectural choices and past decisions.

Reference Checks: Validate claimed experience and project outcomes.

If a candidate aces a take-home but cannot explain it live, that is a strong signal of outsourcing or cheating and should prompt deeper follow-up questions.
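Once each stage is scored on a common rubric, the take-home versus live mismatch can be checked mechanically. This Python sketch assumes a hypothetical 1 to 5 scale and a 1.5-point gap threshold; the `StageScores` fields and both numbers are placeholders to tune, not recommended values.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class StageScores:
    """Rubric scores for one candidate (hypothetical 1-5 scale)."""
    take_home: float         # quality of the submitted take-home project
    live_explanation: float  # how well they explained that same project live
    live_coding: float       # performance in the live coding session


def consistency_flags(s: StageScores, max_gap: float = 1.5) -> List[str]:
    """List stage pairs where the take-home outscores live work by >= max_gap."""
    flags = []
    if s.take_home - s.live_explanation >= max_gap:
        flags.append("take-home much stronger than live explanation of it")
    if s.take_home - s.live_coding >= max_gap:
        flags.append("take-home much stronger than live coding")
    return flags
```

An empty list means the stages agree within the threshold. A non-empty list tells the next interviewer which part of the take-home to probe, rather than deciding the outcome on its own.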

Sherlock AI: The Solution for Trustworthy Technical Interviews

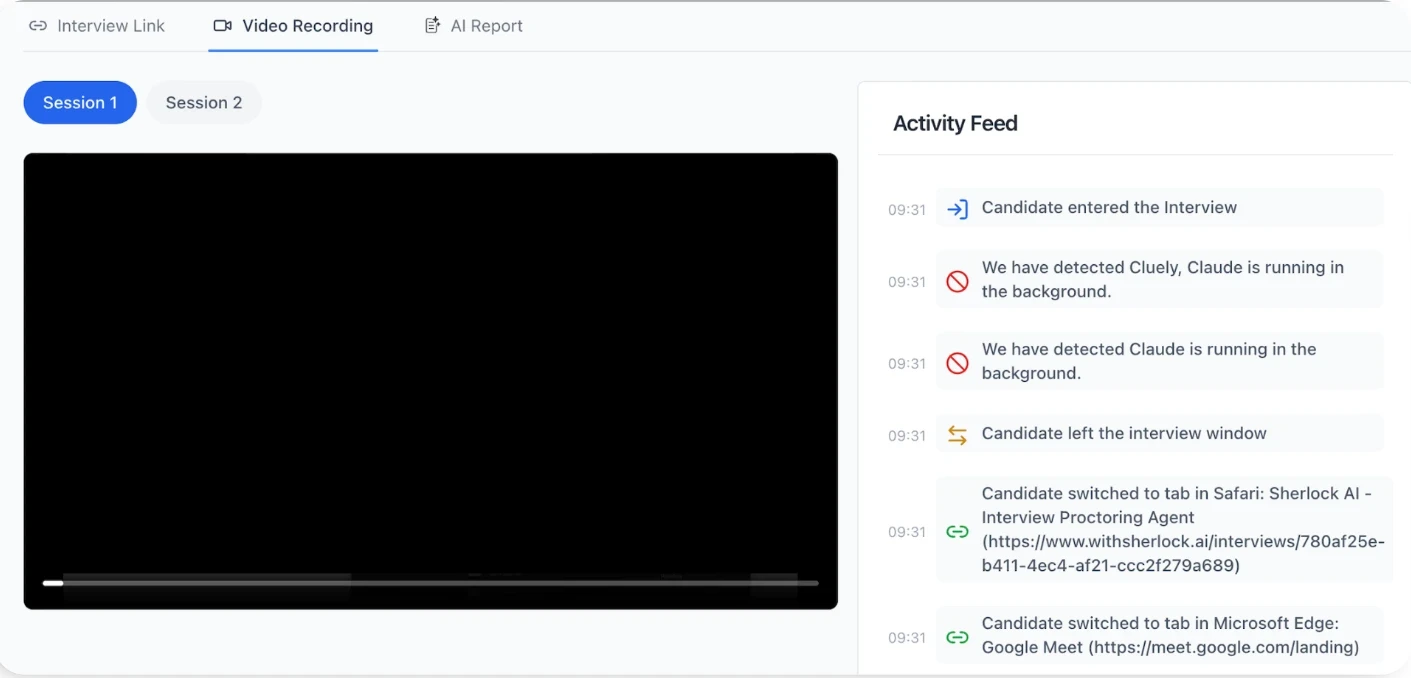

Sherlock AI strengthens existing interview processes by adding real-time intelligence and behavioral visibility, making dishonest behavior harder to hide and easier to investigate.

Key Features of Sherlock AI

AI-assisted answer detection: Identifies response patterns commonly associated with real-time AI usage, such as unnatural pauses followed by highly structured or overly polished answers that don’t align with the candidate’s prior reasoning depth.

Proxy interview detection: Flags behavioral inconsistencies that often appear when another person is feeding answers off-camera, including mismatches between response timing, speech cadence, and on-screen problem-solving activity.

Identity substitution safeguards: Surfaces sudden changes in communication style, confidence, or technical depth across interview stages that may indicate impersonation or candidate switching.

Early deepfake and synthetic persona signals: Detects subtle audio-visual inconsistencies, facial behavior anomalies, and timing irregularities that are difficult for real-time deepfake overlays to replicate reliably.

Behavioral consistency analysis: Monitors how candidates think, respond, and adapt throughout the interview to identify abrupt shifts that suggest external assistance rather than genuine problem-solving.

Response timing and cognitive latency tracking: Analyzes hesitation patterns to distinguish natural thinking time from delays caused by waiting for external input or AI-generated responses.

Real-time risk signals for interviewers: Surfaces contextual alerts during the interview so interviewers can ask follow-up questions when it still matters, rather than discovering issues after the fact.

Well-designed interviews reduce cheating.

Sherlock AI makes it visible.
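Sherlock AI’s internal models are not described in this post, so the following is not its implementation. As a generic illustration of the idea behind response timing and cognitive latency tracking, this Python sketch compares each response’s start latency with the candidate’s own baseline, using the median and the median absolute deviation (MAD), which a few long pauses distort less than the mean and standard deviation would. The function name, the `k` threshold, and the `min_scale` floor are hypothetical choices.

```python
from statistics import median
from typing import List


def latency_outliers(latencies: List[float], k: float = 3.0,
                     min_scale: float = 0.5) -> List[int]:
    """Return indices of responses whose start latency (seconds) is unusually
    long relative to this candidate's own baseline.

    A response is flagged when (latency - median) / scale > k, where scale is
    the median absolute deviation, floored at `min_scale` seconds so that a
    very steady candidate does not make every small delay look extreme.
    """
    if len(latencies) < 3:
        return []  # too few answers to establish a baseline
    med = median(latencies)
    mad = median(abs(x - med) for x in latencies)
    scale = max(mad, min_scale)
    return [i for i, x in enumerate(latencies) if (x - med) / scale > k]
```

For example, with start latencies of `[2.0, 3.0, 2.5, 2.2, 14.0, 2.8]` seconds, only the fifth answer (index 4) is flagged. A flagged answer is a cue to ask a follow-up about that answer, not evidence of cheating by itself; people also pause on genuinely hard questions.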

Conclusion

Technical interview cheating is a systemic challenge driven by remote hiring, accessible AI tools, and increasingly sophisticated fraud tactics. Relying on instinct or manual observation alone is no longer enough to protect hiring quality.

An effective defense starts with thoughtful interview design: structured, role-specific questions, live problem-solving, and dynamic follow-ups that reward genuine thinking. But design alone can’t surface every signal in a virtual environment.

That’s where Sherlock AI adds critical visibility. By analyzing behavior, response patterns, and interaction integrity in real time, it helps hiring teams distinguish authentic skill from assisted performance, without disrupting the candidate experience or replacing human judgment.