
How to detect and prevent AI interview cheating in real time. Learn the signals, risks, and controls, including how Sherlock AI protects interview integrity before bad hires happen.

Abhishek Kaushik

Apr 14, 2026

Artificial intelligence is reshaping how interviews are conducted, and not always for the better. What began as a tool to streamline resume review and candidate screening has quietly become a vehicle for interview cheating.

According to a recent manager survey, 59% of employers suspect AI tools have been used to misrepresent candidates in interviews, and 31% have encountered a candidate with a fake identity or an impostor in a live hiring session.

Even more telling: 20% of professionals admitted to secretly using AI during interviews, with more than half believing such assistance has “become the norm.”

This shift is a credibility crisis for talent acquisition. In this guide, we’ll explore how AI interview cheating works, the key signals that reveal it, and proven strategies to stop it before it undermines your hiring decisions.

AI Interview Cheating and Why It’s Hard to Spot

Artificial intelligence has moved beyond resume tweaks and mock-question prep; it’s now being used to actively cheat in live job interviews, and many hiring teams aren’t prepared for it. Traditional interview cues such as eye contact, fluency, and confidence no longer guarantee authenticity, because AI can mimic all of them with alarming sophistication.

Here’s how the landscape has shifted:

AI-assisted cheating isn’t rare; it’s happening now

17% of hiring managers have already encountered deepfake technology used to alter candidate identities in video interviews.

Research predicts that by 2028, 1 in 4 job candidates globally could be fake, with AI-generated identities often standing in for real applicants.

AI cheating is hard to spot

AI interview fraud isn’t a single tactic. It has diversified, making detection increasingly difficult:

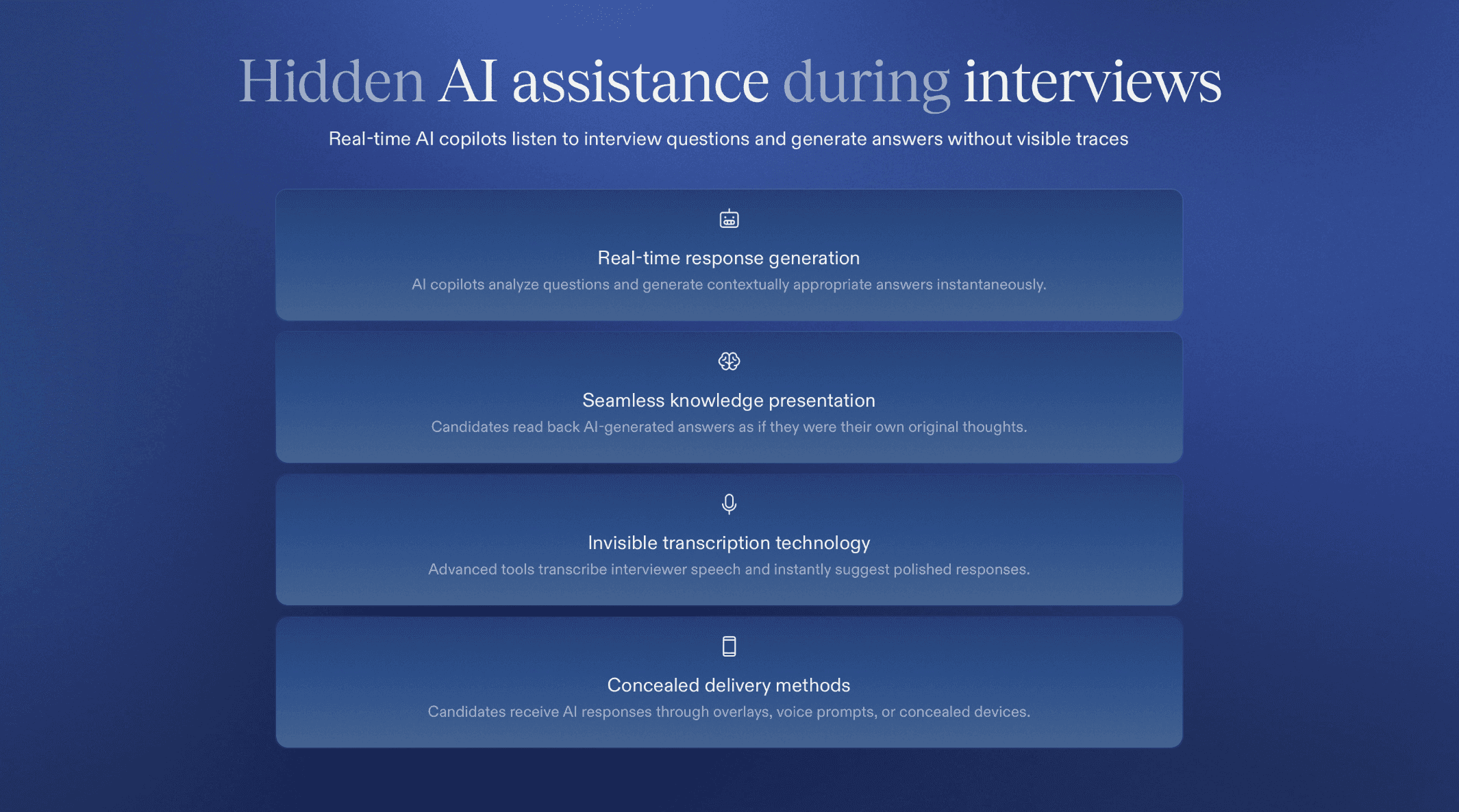

Invisible AI copilots: Tools that listen to questions and generate answers in real time feed candidates responses through overlays, voice prompts, or hidden devices, without obvious signs on screen.

Live answer assistance: Some tools transcribe the interviewer’s speech and instantly suggest highly polished replies that candidates read back as if they were their own.

Deepfake identities: AI can now alter a person’s face or voice to impersonate someone else entirely during a video call, and recruiters have already seen this in action.

Proxy interviewing: In extreme cases, one person attends on behalf of the actual candidate, sometimes using AI to help them respond convincingly.

Traditional signs of credibility have eroded

Recruiters once relied on cues like eye contact, hesitation, and problem-solving style, but AI complicates all of them:

Eye contact and confidence no longer guarantee authenticity. AI assistance can make responses smooth and very well structured, sometimes too perfect, which ironically becomes a new red flag.

Surface fluency masks lack of real expertise. Candidates may ace the interview but falter immediately when asked rapid follow-ups or technical probing questions.

How to Detect AI Interview Cheating in Real Time

Catching AI-assisted cheating during a live interview can be tricky, but it isn’t impossible. As cheating tools become more sophisticated and subtle, recruiters and interviewers need to rely on a mix of behavioral cues, technical signals, and adaptive questioning to distinguish genuine candidates from those getting real-time AI help.

Here’s how you can detect AI interview cheating as it’s happening:

1. Behavioral & Vocal Signals

These are often the first red flags that something feels “off”:

Unnatural pauses & consistent response timing: AI tools often need a moment to generate answers, especially for complex questions. Recruiters report noticing recurring delays of 3–5 seconds before highly polished responses, regardless of question difficulty.

Odd eye movement patterns: Candidates using hidden tools (second screens, overlays, or prompts) may glance away repeatedly. Rather than the scattered way people look away while thinking, these glances follow consistent left-to-right or corner-directed patterns that suggest the candidate is reading.

Sudden shifts in speech style: AI-generated replies can sound noticeably more articulate, formal, or buzzword-rich than the candidate’s opening responses or their answers when put on the spot with follow-ups.

Contradictions under pressure: When interviewers dig deeper (for example, with rapid follow-ups or unexpected scenarios), AI-assisted candidates may struggle to maintain consistency or logic, unlike genuine candidates.
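To make the timing signal concrete, here is a minimal Python sketch of how a recurring 3–5 second delay might be flagged from answer timestamps. It assumes you already have the delay before each answer in seconds (for example, from transcript timestamps). The function name `flag_uniform_latency` and its thresholds are illustrative assumptions. The thresholds are: 80% of answers inside the window, a coefficient of variation of 0.15 or less, and at least five answers. None of these values come from any study or product.

```python
from statistics import mean, pstdev


def flag_uniform_latency(delays_s, low=3.0, high=5.0, min_share=0.8,
                         max_cv=0.15, min_samples=5):
    """Flag answer delays that cluster tightly inside a fixed window.

    delays_s: seconds between the end of each question and the start of
    the candidate's answer (hypothetical input, e.g. derived from
    transcript timestamps). All thresholds are illustrative assumptions.
    """
    if len(delays_s) < min_samples:
        return False  # not enough answers to judge a pattern

    # Most delays must fall inside the suspicious window (3-5 s by default).
    in_window = [d for d in delays_s if low <= d <= high]
    if len(in_window) / len(delays_s) < min_share:
        return False

    # Natural delays vary with question difficulty; a small coefficient of
    # variation (stdev / mean) means the timing is unusually uniform.
    avg = mean(delays_s)
    cv = pstdev(delays_s) / avg if avg > 0 else 0.0
    return cv <= max_cv
```

The idea is that genuine delays tend to grow and shrink with question difficulty. The suspicious part is tightly clustered timing, not the pause itself. A flag like this is a cue to probe with follow-ups, not proof of cheating.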

2. Technical & System-Level Indicators

Real-time monitoring tools can expose hidden assistance through signals such as:

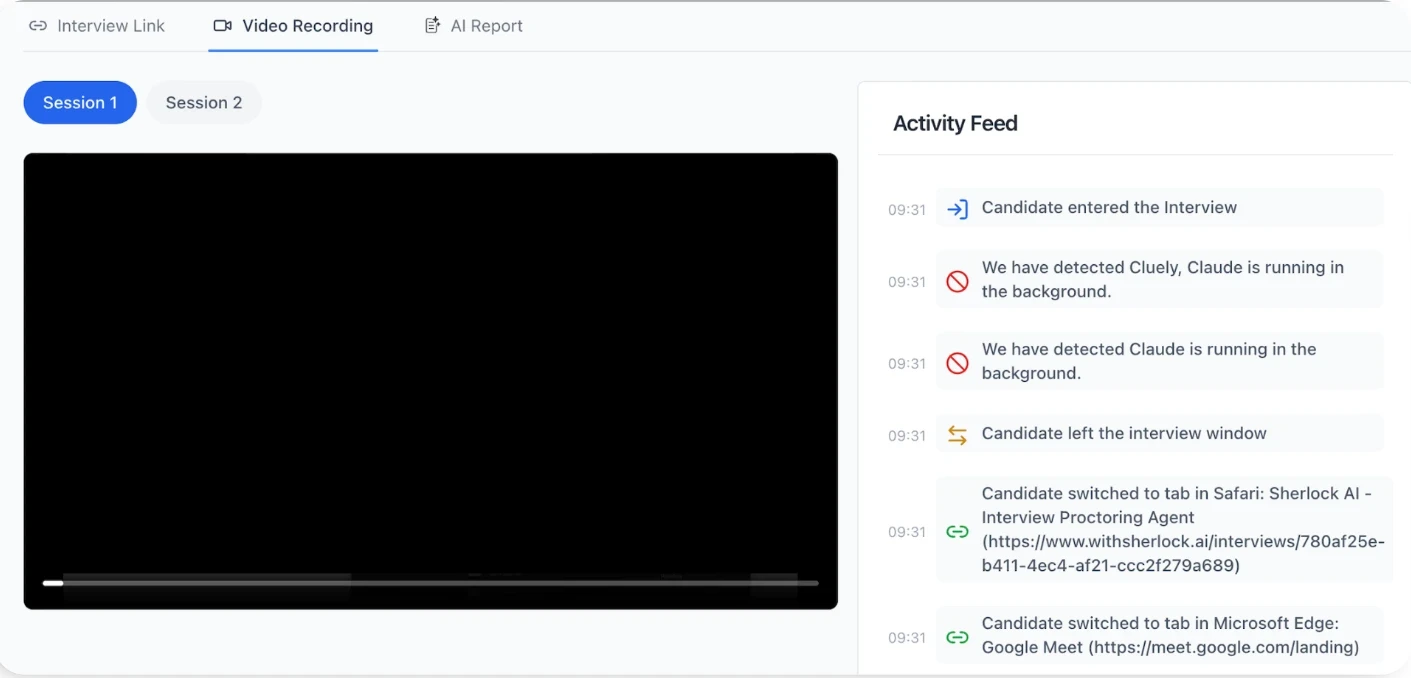

Tool/process detection: Advanced systems can detect unapproved software running in the background (AI copilots like InterviewCoder, Cluely, or other helper tools), even when hidden or minimized.

External input detection: Systems can alert when a second set of inputs (a remote keyboard, an unseen screen) is active, a strong sign that someone else is typing answers or feeding responses.

Voice and facial consistency checks: Some detection systems analyze voice or video streams to make sure the same person is responding throughout (helping catch deepfakes or impersonation).

Speech pattern & response analysis: Real-time transcription and linguistic analysis can flag responses that are too structured, scripted, or patterned compared to normal human variance.
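Process detection can be as simple as matching running process names against a denylist, although that is only a starting point. The sketch below assumes a list of process names is already available. The patterns for InterviewCoder and Cluely are guesses at how those tools might appear, not verified executable names. `find_flagged_processes` is a hypothetical helper.

```python
import re

# Hypothetical denylist. The exact process names these tools use are an
# assumption here, not verified signatures.
DENYLIST_PATTERNS = [r"interview\s*coder", r"cluely"]


def normalize(name):
    """Lowercase a process name and drop a trailing .exe/.app extension."""
    return re.sub(r"\.(exe|app)$", "", name.strip().lower())


def find_flagged_processes(process_names, patterns=DENYLIST_PATTERNS):
    """Return the original names that match any denylist pattern."""
    compiled = [re.compile(p) for p in patterns]
    return [raw for raw in process_names
            if any(rx.search(normalize(raw)) for rx in compiled)]
```

On a real machine, you could collect the names with the third-party psutil package, for example from `psutil.process_iter(["name"])`. Renaming a binary trivially defeats name matching, so in practice it would need to be combined with other signals.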

3. Tactical Interview Techniques to Reveal Cheating

Even without specialized tools, your questioning strategy matters:

Ask situational, specific follow-ups: Generic AI answers crack under domain-specific or highly situational probing (e.g., “Tell me about a failure only you can explain”). This exposes candidates who rely on general templates instead of lived experience.

Rapid-fire questions: Increasing the pace of questioning or injecting curveballs makes it harder for AI assistants to keep up and can reveal inconsistency or delay.

Behavior over polish: Look for natural thought patterns (pauses, self-correction, real emotional cues) rather than perfectly structured responses; AI tends to produce smoother, less human patterns.
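If a real-time transcript is available, the “natural thought patterns” cue can be roughly quantified. This hypothetical sketch counts filler words and self-correction phrases per 100 words. The cue lists are illustrative assumptions. Compare the score across a single candidate’s answers rather than against a fixed cutoff.

```python
import re

# Illustrative cue lists; which markers to count is an assumption.
FILLERS = {"um", "uh", "erm", "hmm"}
SELF_CORRECTIONS = ["i mean", "let me rephrase", "scratch that", "wait no"]


def disfluency_per_100_words(transcript):
    """Count filler words and self-correction phrases per 100 words."""
    text = transcript.lower()
    words = re.findall(r"[a-z']+", text)
    if not words:
        return 0.0
    fillers = sum(1 for w in words if w in FILLERS)
    # Word boundaries stop "i mean" from matching inside "i meant".
    corrections = sum(
        len(re.findall(r"\b" + re.escape(p) + r"\b", text))
        for p in SELF_CORRECTIONS
    )
    return 100.0 * (fillers + corrections) / len(words)
```

Treat a low score as weak evidence at best. Many people speak cleanly, and some transcription services strip filler words before you ever see them. A sharp drop between a candidate’s small talk and their technical answers is likely more informative than the absolute number.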

Effective real-time detection blends behavioral intuition with technical signals and adaptive questioning. While no single method is foolproof on its own, recognizing these patterns together gives interviewers a much stronger chance of spotting AI-assisted cheating as it happens.


How to Prevent AI Interview Cheating Before It Becomes a Bad Hire

Detection helps you catch problems in the moment, but prevention is what protects your hiring outcomes at scale. As AI-assisted cheating becomes easier and more normalized, organizations are realizing that interviews must be designed differently, with controls that start before Day 0, not after onboarding fails.

Here’s what effective prevention looks like in practice:

1. Redesign Interviews for Verification, Not Performance

Most interviews still reward polish over proof. AI thrives in that gap.

To reduce AI advantage:

Move away from generic, open-ended questions that AI can answer flawlessly.

Use experience-anchored questions (“Walk me through a real failure in your last role”) that require personal context.

Introduce live problem-solving, trade-off discussions, or role-specific scenarios where candidates must think aloud.

Vary question order and follow-ups dynamically; static scripts are easy for AI to anticipate.
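One way to keep interview scripts from being predictable is to randomize the plan for each session. The sketch below uses a hypothetical `build_session_plan` helper and question bank. It shuffles the core questions and samples follow-ups for each one. Seeding the generator keeps a given session’s plan reproducible for later review.

```python
import random


def build_session_plan(core_questions, follow_up_bank, n_follow_ups=2, seed=None):
    """Shuffle core questions and attach randomly sampled follow-ups.

    core_questions: list of question strings (hypothetical bank).
    follow_up_bank: dict mapping a core question to candidate follow-ups.
    Returns a list of (question, [follow_ups]) tuples.
    """
    rng = random.Random(seed)  # per-session generator; a seed makes it replayable
    order = core_questions[:]  # copy so the caller's list is not reordered
    rng.shuffle(order)
    plan = []
    for q in order:
        pool = follow_up_bank.get(q, [])
        k = min(n_follow_ups, len(pool))  # never ask for more than exist
        plan.append((q, rng.sample(pool, k)))
    return plan
```

This only randomizes from a prepared bank. Truly dynamic follow-ups still depend on the interviewer reacting to what the candidate actually says.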

2. Add Pre-Onboarding Controls (Before Access Is Granted)

Many companies wait until onboarding to verify identity or skills; by then it’s already too late.

Effective pre-onboarding controls include:

Identity verification before final interviews, not after offer acceptance.

Cross-checking live interview presence against official identity signals.

Verifying continuity: ensuring the same person who interviewed is the one being hired.

Hiring fraud increasingly occurs before background checks are triggered, creating blind spots in early-stage hiring workflows.

3. Strengthen Identity Assurance in Live Interviews

AI has made impersonation easier through proxy interviewers, voice cloning, and deepfake video overlays.

Preventive measures include:

Continuous identity validation during interviews, not just one-time checks.

Monitoring face-voice consistency across the session.

Flagging anomalies such as sudden changes in voice tone, cadence, or facial behavior.

Forbes has reported a sharp rise in deepfake-assisted job fraud, warning that video alone is no longer sufficient proof of identity.
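A common approach to continuous face-voice consistency checks compares embeddings over time. Embeddings are fixed-length vectors produced by a face-recognition or speaker-verification model. The sketch below assumes such embeddings exist for each time slice of the call. It compares every slice against a reference captured at the start. The `identity_alerts` helper and the 0.8 cosine-similarity threshold are illustrative assumptions. The threshold would need calibration for whatever model produces the vectors. This is not a description of any particular product’s method.

```python
import math


def cosine(a, b):
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def identity_alerts(ref_face, ref_voice, windows, threshold=0.8):
    """Return (index, face_sim, voice_sim) for windows that drift from the reference.

    ref_face / ref_voice: embeddings captured at the start of the session
    (or at identity verification). windows: list of (face_emb, voice_emb)
    pairs, one per time slice. An all-zero embedding (e.g. a placeholder
    when no face is detected) scores 0.0 and therefore raises an alert.
    """
    alerts = []
    for i, (face, voice) in enumerate(windows):
        face_sim = cosine(ref_face, face)
        voice_sim = cosine(ref_voice, voice)
        # Either modality drifting is worth a look: a face swap may keep the
        # voice, and a voice clone may keep the face.
        if face_sim < threshold or voice_sim < threshold:
            alerts.append((i, round(face_sim, 3), round(voice_sim, 3)))
    return alerts
```

Checking face and voice separately matters because a swap in one modality can leave the other untouched.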

4. Verify Skills Beyond Q&A

Q&A tests knowledge recall (AI’s strongest skill), not real capability.

To reduce AI dependency:

Use work-sample simulations or short, live tasks.

Ask candidates to explain why they made certain decisions, not just what they did.

Revisit answers later in the interview to test consistency and depth.

Research shows that work-sample based evaluations are significantly more predictive of on-the-job performance than traditional interviews alone.

5. Why Purpose-Built Interview Integrity Systems Are Becoming Necessary

Human intuition and interview tweaks help, but they don’t scale.

As AI cheating becomes more covert:

Manual detection becomes inconsistent across interviewers.

Signals like latency, eye-line drift, and impersonation cues are hard to track reliably without tooling.

Security and hiring teams need continuous, real-time integrity monitoring, not post-hire audits.

This is why many organizations are beginning to treat interview integrity as a security problem, not just a recruiting one, adopting systems purpose-built to detect and prevent AI-enabled fraud during live interviews, without disrupting the candidate experience.

Preventing AI interview cheating is about restoring trust in hiring signals. Companies that redesign interviews, add early controls, and invest in integrity-first systems stop bad hires before they enter the organization, saving time, cost, and risk downstream.

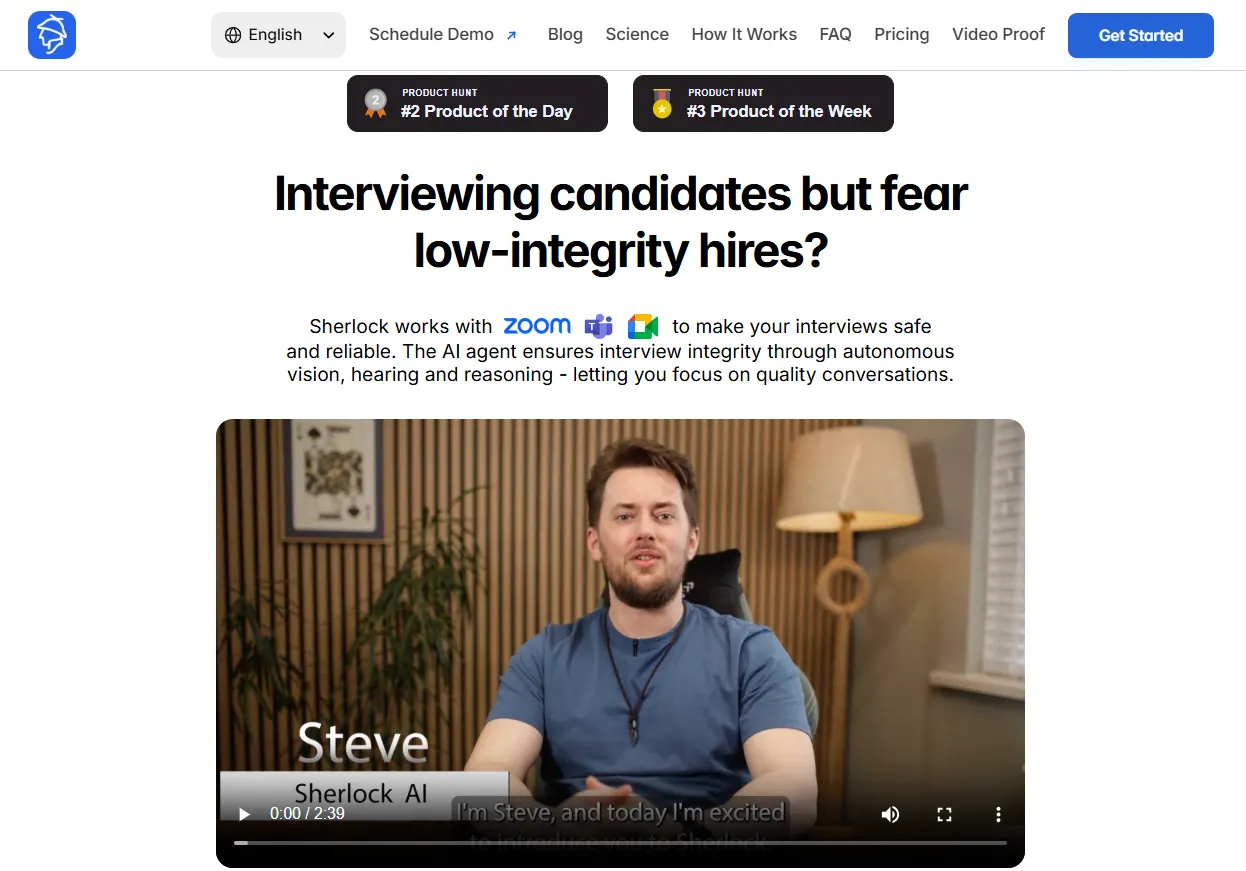

Sherlock AI: Prevent AI Interview Cheating

As AI-assisted cheating becomes more covert, most prevention strategies still rely on human judgment or interview redesign alone. That helps, but it doesn’t scale, and it doesn’t catch what’s intentionally hidden. This is where Sherlock AI fits in.

Sherlock AI is built specifically to protect interview integrity in real time, without changing how interviews are run or disrupting candidates.

Key Features:

Real-time interview monitoring: Operates silently during live Zoom, Microsoft Teams, and Google Meet interviews without interrupting candidates or interviewers.

AI copilot and external assistance detection: Identifies patterns associated with hidden LLMs, real-time answer generation, scripting tools, and off-screen prompting, even when tools are minimized or obscured.

Response latency and fluency analysis: Tracks unnatural timing, overly structured answers, and sudden shifts in articulation that often indicate AI-generated responses.

Eye-line and attention drift detection: Flags repeated gaze patterns and off-screen focus inconsistent with natural thinking or conversation flow.

Impersonation and proxy interview detection: Detects face, voice, and behavioral inconsistencies that suggest stand-ins, identity swaps, or deepfake-assisted interviews.

Continuous identity consistency checks: Ensures the same individual is present and responding throughout the interview session, not just at login.

Behavioral inconsistency tracking across follow-ups: Surfaces contradictions, shallow reasoning, and loss of depth when candidates are probed beyond scripted answers.

Non-intrusive, interview-agnostic design: Works with existing interview formats and questions, with no process changes or candidate friction required.

Early risk visibility before offers are made: Provides signals during the interview stage, enabling action before hiring, onboarding, or access provisioning.

Interview design helps. Training helps. But in an era of invisible AI assistance, interview integrity needs its own system. Sherlock AI provides that missing layer, ensuring the person you hire is the person you interviewed, with the skills they actually demonstrated.

Conclusion

AI has changed what interviews measure. Polished answers no longer guarantee real skill; they often reflect access to real-time assistance. As cheating becomes harder to see, relying on interviewer judgment alone isn’t enough.

Preventing AI interview cheating requires early, interview-stage controls that verify identity and behavior while the interview is happening. This is where tools like Sherlock AI fit in, providing real-time integrity signals before offers are made or access is granted.

As remote hiring scales, interview integrity becomes a security problem, not just a hiring one. Teams that address it early make better hires and avoid costly mistakes later.