
Detect AI cheating without video recording. Explore real-time behavioral cues, cross-round consistency, and automated tools to maintain hiring integrity.

Abhishek Kaushik

Feb 13, 2026

AI cheating in job interviews has moved from a fringe concern to a mainstream hiring challenge. Recruiters are now confronting AI‑generated responses, deepfake impersonations, and candidates using generative systems to misrepresent their skills, leading to rising mistrust and detection difficulties across industries.

72% of recruiters report encountering fake resumes or credentials created with AI, and around 15% have directly observed deepfake video or voice manipulation in interviews. With predictions that by 2028 as many as 1 in 4 candidate profiles could be fake, traditional video recordings or manual review simply can’t keep up with this scale of fraud.

Yet many organizations are hesitant to record interviews due to privacy concerns, legal restrictions, and candidate trust issues. This makes it essential to find detection methods that don’t rely on storing video or audio while still catching sophisticated cheating.

In this blog, we’ll explore why video recording isn’t enough, what non‑video signals you can monitor, and the tools and techniques that help identify AI‑assisted cheating without infringing on privacy.

Why Traditional Video Recording Isn’t Enough

Relying solely on video recordings to detect interview fraud is increasingly ineffective in the AI era. Video review only captures what’s on screen, but modern cheating often happens out of view.

Limitations of post-interview video review: Reviewing recorded interviews after the fact is time-consuming, inconsistent, and rarely scalable for high-volume hiring. Subtle AI-assisted cues, like timing anomalies or off-screen prompts, are often missed.

How AI copilots, scripted answers, and off-screen prompts bypass video detection: Candidates can use AI tools to generate responses in real time or receive guidance from someone off-screen, making their answers appear human while completely avoiding detection on video.

Privacy concerns and regulatory restrictions: Recording interviews raises legal and ethical challenges. Many regions restrict video/audio capture without explicit consent, and excessive recording can harm candidate trust or violate data protection regulations.

In short, video alone is not enough. Detecting AI-assisted cheating requires methods that go beyond what is visible on screen.

Behavioral and Interaction Signals to Monitor

Even without recording video, interviews can reveal critical patterns and cues that indicate AI-assisted cheating. Modern AI tools can mimic human speech convincingly, but subtle inconsistencies in behavior, attention, reasoning, and engagement often reveal assistance.

Key signals to monitor include:

1. Response Timing and Latency Anomalies

The speed and rhythm of answers can reveal AI assistance. AI tools often introduce unnatural pauses, delayed responses, or overly consistent timing that stand out in live interviews.

Track responses that are unusually fast or slow relative to question complexity. An AI-assisted candidate might deliver near-perfect answers too quickly, or pause noticeably while a tool generates the response.

Look for repetitive timing patterns across multiple questions, which can indicate a scripted or AI-assisted workflow.

Use time-boxed questions or rapid-fire follow-ups to increase pressure and expose reliance on external help.

Tip: Combine timing analysis with other signals to reduce false positives; some pauses may be genuine thinking moments.
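The timing checks above can be sketched in a few lines of Python. This is a minimal illustration, not a calibrated detector: the function name `timing_flags`, the 1-to-3 complexity scale, and both thresholds are assumptions made for this example, and the input is simply the interviewer's own note of how long each answer took to start.

```python
from statistics import mean, pstdev


def timing_flags(latencies, complexities, cv_floor=0.15, fast_ratio=0.5):
    """Return a list of timing patterns worth a second look.

    latencies:    seconds between the end of each question and the start
                  of the answer, as noted by the interviewer.
    complexities: interviewer-assigned difficulty per question
                  (1 = easy, 3 = hard). Hypothetical scale for this sketch.
    cv_floor, fast_ratio: illustrative thresholds, not validated values.
    """
    if len(latencies) != len(complexities) or len(latencies) < 3:
        raise ValueError("need at least 3 paired observations")

    flags = []
    avg = mean(latencies)

    # Coefficient of variation: a very low value means the pauses are
    # nearly identical regardless of the question asked.
    cv = pstdev(latencies) / avg if avg > 0 else 0.0
    if cv < cv_floor:
        flags.append("uniform_timing")

    # Compare hard questions against the candidate's own easy-question pace.
    easy = [t for t, c in zip(latencies, complexities) if c == 1]
    hard = [t for t, c in zip(latencies, complexities) if c == 3]
    if easy and hard and mean(hard) < fast_ratio * mean(easy):
        flags.append("hard_answered_too_fast")

    return flags


print(timing_flags([4.0, 4.1, 3.9, 4.0], [1, 2, 3, 1]))
```

A flag here is a prompt for a follow-up question rather than a verdict. Comparing each candidate against their own baseline, instead of a fixed number of seconds, is one way to avoid penalizing people who simply think slowly or quickly.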

2. Speech Patterns, Phrasing, and Reasoning Depth

AI-generated answers often differ subtly from natural human speech. Careful analysis of language, structure, and logic can reveal inauthentic responses.

Listen for overly formal, polished, or repetitive phrasing that doesn’t match a candidate’s background or experience.

Probe reasoning depth with follow-up or trade-off questions; AI often provides surface-level logic without real-world nuance.

Watch for overconfident generalizations or lack of concrete examples, which may indicate answers generated without lived experience.

Example: A candidate describing “how they debugged a complex system” with perfect structure but no mention of trade-offs, failures, or real challenges may be relying on AI-generated content.

3. Eye Movement, Attention Shifts, and Engagement Cues

Even when the video is not recorded, live observation of attention and engagement patterns during the call can reveal off-screen prompting. Candidates using hidden aids often exhibit subtle behavioral anomalies.

Notice frequent glances off-screen, split attention, or scanning behaviors.

Observe micro-pauses, fidgeting, or unusual composure under difficult questions.

Track engagement changes when questions are unexpected or require improvisation.

Tip: Combining these cues with questioning pressure can quickly highlight AI-assisted or coached responses.

4. Cross-Round Consistency and Memory Tracking

AI tools and scripts often fail to maintain longitudinal consistency. Tracking candidate responses across rounds can uncover discrepancies.

Compare answers and reasoning across multiple interview stages.

Detect contradictions, memory drift, or repeated scripted patterns that lack natural variation.

Identify gaps between claimed experience and real problem-solving ability, such as technical steps skipped or inconsistently described.

Example: A candidate giving detailed technical steps in round one but failing to recall the same approach in round two may be relying on pre-generated answers.
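As a rough sketch of how cross-round comparison could work on interviewer notes alone (no audio or video), the Python below checks two things: claims that changed between rounds, and answers repeated almost word for word. The function name `compare_rounds`, the note structure, and the 0.9 similarity threshold are all assumptions for illustration. `difflib.SequenceMatcher` is from the Python standard library.

```python
from difflib import SequenceMatcher


def compare_rounds(facts_a, facts_b, text_a, text_b, verbatim_threshold=0.9):
    """Compare notes from two interview rounds.

    facts_a / facts_b: dict of claim name -> value recorded in each round,
                       e.g. {"team_size": 5}. Hypothetical note format.
    text_a / text_b:   dict of topic -> answer summary from each round.
    verbatim_threshold: illustrative cut-off for "suspiciously identical".
    """
    findings = []

    # Contradictions: the same claim was recorded in both rounds
    # but with different values.
    for key in sorted(facts_a.keys() & facts_b.keys()):
        if facts_a[key] != facts_b[key]:
            findings.append(("contradiction", key))

    # Near-verbatim repetition: answers on the same topic that match
    # almost character for character lack natural variation.
    for topic in sorted(text_a.keys() & text_b.keys()):
        ratio = SequenceMatcher(
            None, text_a[topic].lower(), text_b[topic].lower()
        ).ratio()
        if ratio >= verbatim_threshold:
            findings.append(("near_verbatim", topic))

    return findings


round_1_facts = {"team_size": 5, "database": "postgres"}
round_2_facts = {"team_size": 12, "database": "postgres"}
round_1_text = {"debugging": "I traced the memory leak with a heap profiler."}
round_2_text = {"debugging": "I traced the memory leak with a heap profiler."}

print(compare_rounds(round_1_facts, round_2_facts, round_1_text, round_2_text))
```

Character-level similarity is a crude measure and will miss paraphrased scripts, so findings like these are best treated as topics to probe in the next round.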

5. Interactive Problem Solving Under Pressure

Dynamic tasks expose reliance on AI, which struggles with real-time problem-solving in evolving contexts.

Assign coding exercises, whiteboarding, or scenario-based tasks that require on-the-spot thinking.

Observe how candidates adapt when assumptions change or information is incomplete.

Focus on the problem-solving process, not just the final answer. AI may get correct outcomes but often lacks reasoning detail.

Tip: Encourage candidates to think aloud during tasks to reveal authentic reasoning paths.

6. Real-Time Question Variation and Probing

Unexpected follow-ups force spontaneous reasoning, which AI or scripts cannot reliably produce.

Introduce edge-case, hypothetical, or “what-if” questions mid-interview.

Monitor how quickly and confidently candidates adjust and defend their answers.

Track depth, clarity, and coherence of explanations under pressure to identify gaps between real knowledge and AI-generated content.

Example: Asking “How would you solve this if X constraint didn’t exist?” or “Explain why you chose this approach over another” can immediately expose superficial or scripted reasoning.

These behavioral and interaction signals provide strong indicators of fraud and help assess true human capability.

However, manually tracking all these cues across multiple candidates and rounds is challenging and prone to error. To scale detection effectively and ensure no AI-assisted cheating slips through, organizations need purpose-built tools and systems that automate monitoring, analyze patterns in real time, and provide actionable insights for recruiters.
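To make the idea of combining signals concrete, here is a small Python sketch of a triage rule that only escalates an interview when at least two independent signals fire. It is a generic illustration under assumed names (`triage`, the signal labels, the equal default weights, the 0.5 threshold) and does not describe how any particular product scores interviews.

```python
def triage(signals, weights=None, min_signals=2, threshold=0.5):
    """Combine per-signal strengths into a review decision.

    signals: dict of signal name -> strength between 0 and 1, as scored
             by the interviewer or an upstream check. Hypothetical input.
    weights: optional dict of signal name -> relative weight
             (defaults to equal weighting).
    Returns (score, needs_review).
    """
    weights = weights or {}
    total_weight = sum(weights.get(name, 1.0) for name in signals)
    if total_weight == 0:
        return 0.0, False

    # Weighted average of all signal strengths.
    score = sum(
        weights.get(name, 1.0) * strength for name, strength in signals.items()
    ) / total_weight

    # Require corroboration: a single signal alone never triggers review,
    # which limits false positives from, say, one long thinking pause.
    active = [name for name, strength in signals.items() if strength > 0]
    needs_review = len(active) >= min_signals and score >= threshold

    return round(score, 2), needs_review


print(triage({"timing": 1.0, "phrasing": 0.0, "consistency": 0.0}))
print(triage({"timing": 1.0, "phrasing": 0.0, "consistency": 1.0}))
```

Requiring corroboration follows the earlier tip about pairing timing analysis with other signals: a lone anomaly stays a note, while two or more prompt a human review.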

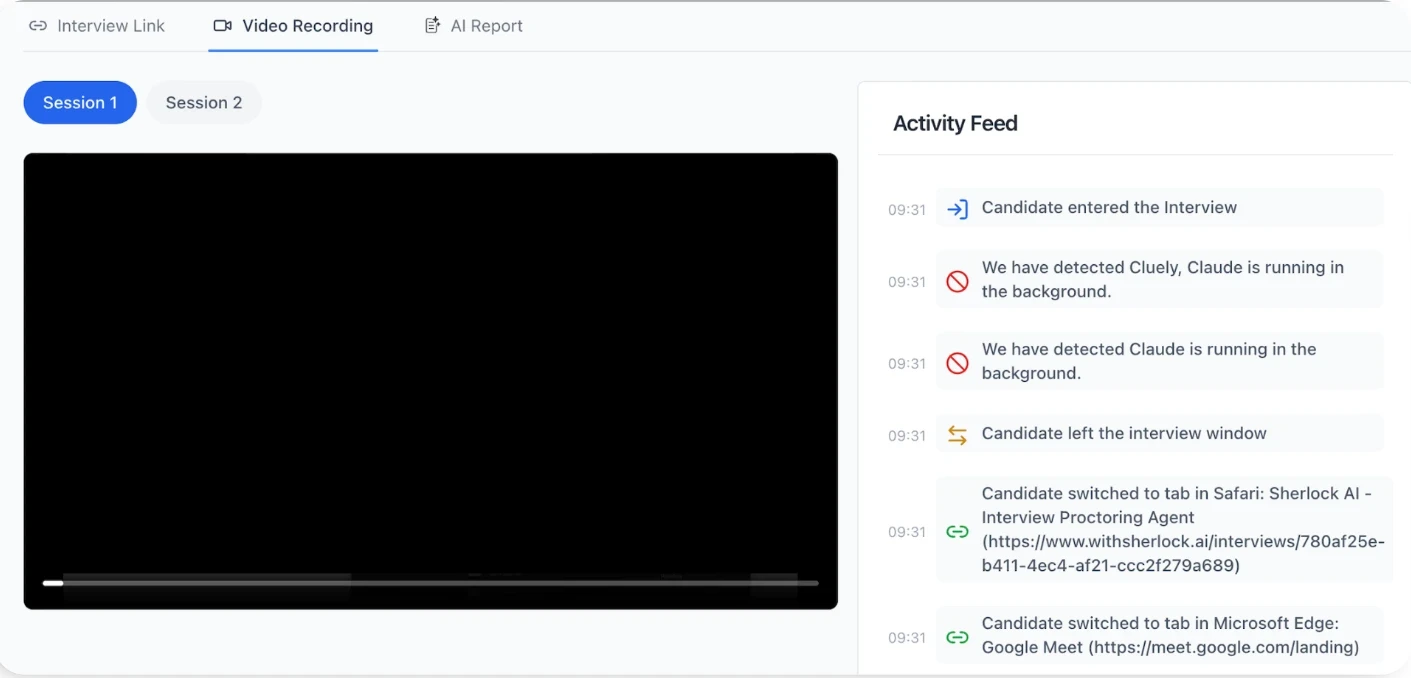

Sherlock AI: Detecting AI Cheating Without Video

Sherlock AI is a purpose-built platform designed to detect AI-assisted cheating without recording video, enabling recruiters to scale integrity checks while maintaining candidate privacy.

Below are the key features that make Sherlock AI effective at detecting modern interview fraud:

Key Features of Sherlock AI

AI Copilot Detection: Flags candidates using AI tools or generative language models to produce real-time responses.

Deepfake and Voice Cloning Identification: Detects synthetic faces, voices, or audio streams designed to impersonate the candidate.

Proxy Candidate Detection: Verifies identity across rounds to prevent impersonation or replacement by another person.

Scripted or Pre-Generated Answer Detection: Identifies over-polished, repetitive, or inauthentic responses.

Cross-Round Consistency Analysis: Tracks contradictions, memory drift, and capability inflation across multiple interviews.

Audit-Ready Reporting: Generates structured evidence for HR, compliance, and legal review without storing video.

Recruiter Efficiency Tools: Prioritizes high-risk interviews and delivers actionable insights in real time to focus on genuine skill assessment.

Sherlock AI transforms complex behavioral signals into scalable, automated detection, enabling organizations to protect hiring integrity without recording video.

By combining real-time analysis, identity verification, AI-response detection, and cross-round consistency checks, Sherlock AI ensures recruiters can focus on genuine candidate capability while maintaining privacy, fairness, and trust across the hiring process.

Conclusion

Detecting AI-assisted cheating without recording video is essential for maintaining hiring integrity in the remote, AI-driven era. Traditional video-based methods and manual checks cannot reliably catch AI copilots, deepfakes, voice cloning, scripted answers, or proxy candidates.

By leveraging behavioral signals, interactive problem-solving, cross-round consistency, and tools like Sherlock AI, organizations can detect AI cheating in real time without violating privacy. This approach ensures that every interview reflects true candidate capability, safeguards fairness, and allows recruiters to make informed hiring decisions.

In short, building video-free, AI-resilient interview systems is the key to preventing fraud and ensuring trustworthy, scalable hiring.