
Learn how to detect AI-assisted cheating in interviews without invading candidate privacy, using behavioral signals, integrity audits, and privacy-first tools like Sherlock.

Abhishek Kaushik

Feb 17, 2026

In 2026, AI-assisted cheating has become a real and growing threat in hiring. Recent surveys suggest that about 1 in 5 professionals admit to using AI tools during job interviews, and over half of workers believe it’s becoming the norm in recruitment settings.

Traditional surveillance-style monitoring like webcams, screen recording, and invasive proctoring might seem like the obvious way to stop this trend. But such approaches can infringe on privacy and trust, raise legal and ethical issues, and still miss sophisticated misuse of AI. Recent legal actions have even challenged AI hiring systems for potentially violating privacy laws when personal data is processed without transparent consent.

In this post, we’ll explore how organizations can detect AI-assisted cheating effectively without resorting to invasive monitoring, and in ways that preserve both candidate experience and legal compliance.

Why Privacy-Invasive Controls Backfire in Hiring

Adding webcams, screen capture, or always-on proctoring might seem like the natural way to prevent AI cheating, but it often doesn’t work and can create new problems.

Hurts candidate trust: Constant monitoring makes applicants feel watched, stressed, or unwelcome, leading to drop-offs.

Legal and compliance risks: GDPR, CCPA, and other privacy laws restrict unnecessary data collection. Recording video or keystrokes without clear consent can create legal liability.

False sense of security: Heavy monitoring may feel like it prevents cheating, but candidates can still receive AI-generated answers through channels these controls never see, such as a second device.

Bias and fairness issues: Surveillance can penalize candidates who are nervous or slow typists, making hiring less fair.

Invasive monitoring adds risk without fully solving the problem. Smarter approaches rely on behavioral and signal-based detection, not constant surveillance.

Behavioral Signals That Reveal AI Assistance

Detecting AI-assisted responses doesn’t require tracking a candidate’s screen, keystrokes, or personal devices. Instead, it’s about analyzing how they answer and spotting patterns that feel unnatural for humans. Here are the key behavioral signals to watch for:

1. Unnatural Timing and Response Flow

Candidates using AI often reply with unrealistically fast answers or maintain perfectly consistent pacing, unlike natural human speech or thought.

Responses may lack the typical pauses, self-corrections, or hesitations that occur when someone thinks through a problem.
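As a rough illustration of the timing signal, the sketch below flags an interview when response latencies are implausibly short or unusually uniform. The function name `pacing_flags` and both thresholds are assumptions made for this example, not values from any real product, and a flag here is a prompt for a follow-up question rather than a verdict.

```python
from statistics import mean, stdev

def pacing_flags(latencies_s, min_plausible_s=1.0, min_cv=0.25):
    """Return timing flags for one interview.

    latencies_s: seconds between the end of each question and the start
    of the candidate's answer. Both thresholds are illustrative guesses.
    """
    if len(latencies_s) < 3:
        # Too few data points to say anything about pacing.
        return {"too_fast": False, "too_uniform": False}
    avg = mean(latencies_s)
    # Coefficient of variation: spread relative to the average latency.
    cv = stdev(latencies_s) / avg if avg > 0 else 0.0
    return {
        "too_fast": avg < min_plausible_s,
        "too_uniform": cv < min_cv,
    }

if __name__ == "__main__":
    print(pacing_flags([2.0, 2.1, 1.9, 2.0]))   # very even pacing
    print(pacing_flags([1.5, 6.0, 3.2, 9.5]))   # varied, more human-like pacing
```

Only the gaps between question and answer are used, so nothing about the candidate’s screen, keystrokes, or device needs to be captured.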

2. Over-Structured or Scripted Answers

AI-generated responses tend to be highly organized, often following a template-like format.

Across multiple questions, the answer style may remain uniform, with polished grammar, precise examples, and stepwise explanations that a human might vary.

3. Sudden Jumps in Vocabulary or Skill Level

Watch for candidates who start with simple explanations and suddenly produce advanced language, concepts, or technical detail.

These sudden jumps can indicate external assistance, since a person’s fluency and depth rarely change that abruptly within a single conversation.
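One simple way to approximate a vocabulary jump is to compare each answer against the candidate’s own earlier answers. The sketch below uses average word length as a crude stand-in for vocabulary level. The helper names and the `ratio` threshold are hypothetical, and a production system would likely use richer linguistic features.

```python
import re

def avg_word_length(text):
    """Average length of alphabetic words in a transcript snippet."""
    words = re.findall(r"[A-Za-z']+", text)
    return sum(len(w) for w in words) / len(words) if words else 0.0

def vocabulary_jumps(answers, ratio=1.4):
    """Return indexes of answers whose average word length exceeds the
    mean of all earlier answers by `ratio` (an assumed threshold)."""
    flagged = []
    history = []
    for i, answer in enumerate(answers):
        score = avg_word_length(answer)
        if score == 0:
            continue  # skip empty answers so they do not skew the baseline
        if history and score > ratio * (sum(history) / len(history)):
            flagged.append(i)
        history.append(score)
    return flagged

if __name__ == "__main__":
    transcript = [
        "I use a list and loop over it",
        "We sort it then pick the top one",
        "Leveraging amortized logarithmic complexity guarantees deterministic throughput",
    ]
    print(vocabulary_jumps(transcript))
```

Because the baseline is the candidate’s own earlier answers, a consistently articulate candidate is not flagged; only an abrupt shift is.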

4. Lack of Natural Interaction

AI-assisted candidates may skip clarifying questions, fail to ask for examples, or show minimal engagement in discussion.

Natural conversation often includes back-and-forth, thoughtful interruptions, or attempts to confirm understanding, patterns that AI may not mimic perfectly.

5. Inconsistent Alignment With Experience

Answers may overperform relative to a candidate’s resume or background, or show knowledge in unrelated areas.

Cross-question consistency checks, such as asking about the same concept in different ways, often reveal mismatches between claimed skill and actual understanding.

You don’t need invasive monitoring to detect AI usage. By focusing on timing, structure, engagement, and skill alignment, you can identify suspicious patterns while fully respecting privacy. These behavioral signals are actionable, measurable, and effective without recording or storing personal data.

Privacy-First AI Auditing: Detecting Cheating Without Surveillance

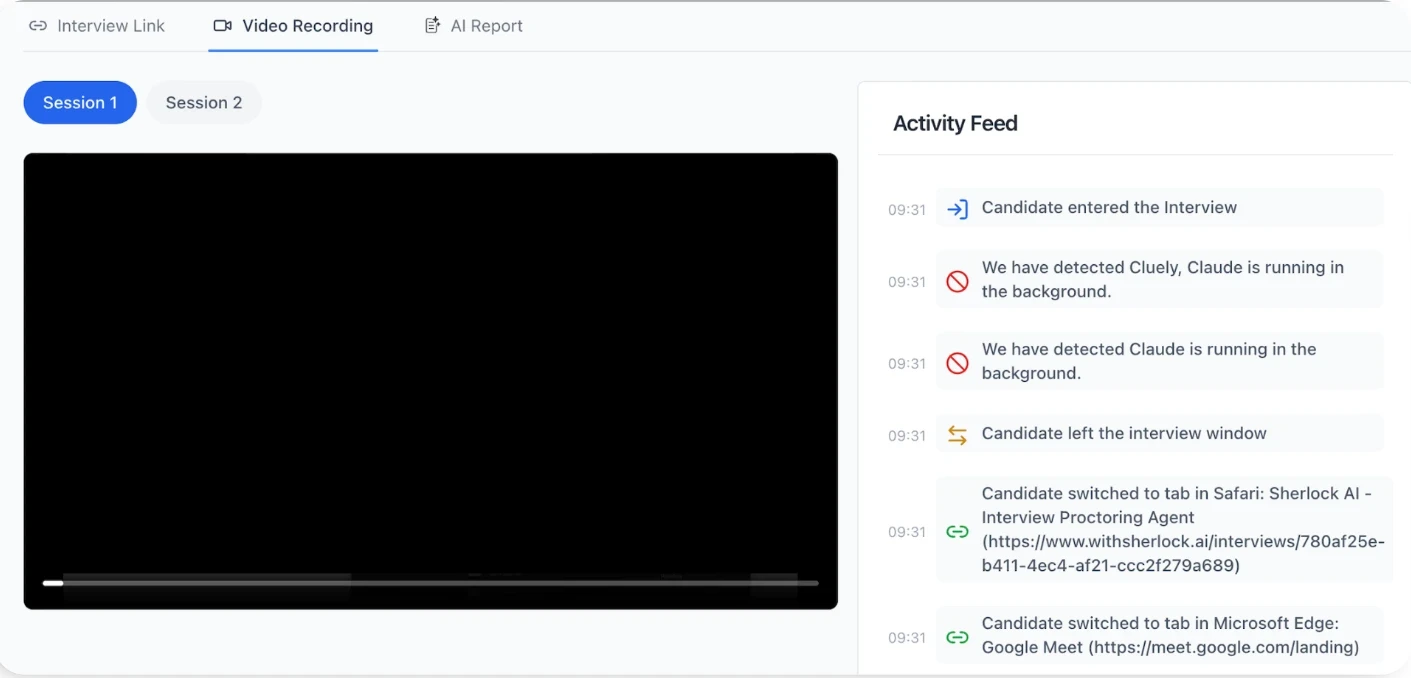

The key to combating AI-assisted cheating is analyzing the integrity of the interview itself. Privacy-first AI auditing focuses on patterns and signals, rather than invading personal data.

1. Interview-Level Integrity Analysis

Instead of monitoring a candidate’s screen, webcam, or keystrokes, modern systems evaluate how the interview progresses as a whole.

This approach detects anomalies in responses, timing, and engagement across the interview, allowing organizations to flag potential AI usage without tracking individuals.

2. Signal-Based Detection

Privacy-preserving auditing relies on behavioral and linguistic signals, not personal data.

Examples include:

Linguistic patterns: unnatural phrasing, over-structured sentences, or vocabulary jumps

Consistency checks: comparing answers to prior questions or across multiple sessions

Skill verification: validating that responses align with claimed experience and expertise
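The three signal types above could be combined into a single risk score for an interview. The following is a minimal sketch under stated assumptions: each signal has already been normalized to a 0 to 1 range by some upstream step, and the `Signals` class, the weights, and the review threshold are all hypothetical choices for illustration, not a description of how any specific tool scores interviews.

```python
from dataclasses import dataclass

@dataclass
class Signals:
    # Each value runs from 0.0 (looks natural) to 1.0 (highly anomalous).
    linguistic: float
    consistency: float
    skill_alignment: float

# Assumed weights for illustration; they sum to 1.0.
WEIGHTS = {"linguistic": 0.4, "consistency": 0.3, "skill_alignment": 0.3}

def integrity_risk(signals):
    """Weighted average of the per-signal anomaly scores."""
    total = sum(WEIGHTS[name] * getattr(signals, name) for name in WEIGHTS)
    return round(total, 3)

def needs_review(signals, threshold=0.6):
    """True when the combined risk is high enough for a human to look."""
    return integrity_risk(signals) >= threshold

if __name__ == "__main__":
    interview = Signals(linguistic=0.8, consistency=0.7, skill_alignment=0.5)
    print(integrity_risk(interview), needs_review(interview))
```

Routing high scores to a human reviewer, rather than rejecting automatically, keeps a single noisy signal from deciding a candidate’s outcome.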

3. Real-Time vs Post-Interview Scoring

Integrity scoring can happen during the interview to give recruiters instant alerts, or after the session for deeper analysis.

Crucially, this is done without storing private candidate data, relying on anonymized or aggregate patterns to maintain compliance and trust.
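To make the storage point concrete, here is one way an audit record might keep scores and flags while leaving out the transcript and the raw session identifier. The `audit_record` function and its field names are assumptions for this sketch. Note that a keyed hash is pseudonymization rather than full anonymization, so the secret key would still need to be protected.

```python
import hashlib
import hmac

def audit_record(session_id, risk_score, flags, secret_key):
    """Build a storable record with no transcript and no raw session ID.

    secret_key: bytes known only to the auditing service (assumed setup).
    """
    # HMAC-SHA256 yields a stable token that cannot be reversed without the key.
    token = hmac.new(secret_key, session_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return {
        "session_token": token,
        "risk_score": risk_score,
        "flags": sorted(flags),
    }

if __name__ == "__main__":
    record = audit_record("interview-001", 0.68, {"too_uniform", "vocab_jump"}, b"demo-key")
    print(record)
```

The same session always maps to the same token under a given key, so post-interview audits can still be linked to real-time alerts without storing who the candidate was.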

The future of AI fraud detection is privacy-preserving, scalable, and audit-driven. It’s about measuring interview integrity, not monitoring people, ensuring both fairness and security.

Sherlock AI: Purpose-Built for Privacy-First AI Cheating Detection

When it comes to detecting AI-assisted cheating at scale, generic monitoring tools fall short.

Sherlock AI is designed specifically to address the challenges of modern interviews, combining privacy, accuracy, and scalability.

1. Signal-Driven, Not Surveillance-Driven

Sherlock AI analyzes interview-level behavioral signals such as timing, consistency, and answer structure.

It does not track webcams, screens, or keystrokes, ensuring candidates’ privacy is fully respected.

Recruiters get actionable insights, not invasive data streams.

2. Detects Deepfake and Proxy Attempts

Beyond AI-generated answers, Sherlock AI can flag identity fraud, including deepfake videos or proxy candidates.

By checking voice, behavioral patterns, and response alignment, it ensures the person in the interview matches the claimed identity.

3. Real-Time and Post-Interview Insights

Sherlock AI can score interviews as they happen to alert recruiters immediately, or run post-interview audits for deeper integrity checks.

This flexible approach allows organizations to catch subtle AI assistance that traditional methods often miss.

4. Scalable for Mass Hiring

Large hiring drives create ideal conditions for AI cheating, proxy candidates, and identity fraud.

Sherlock AI is built to monitor hundreds or thousands of interviews efficiently, providing a consistent, reliable integrity assessment at scale.

5. Trust-Centric Design

By focusing on trust in the interview process, Sherlock AI ensures fair and unbiased candidate evaluation.

Candidates experience privacy-respecting interviews, while organizations gain confidence in the authenticity of their hiring decisions.

Sherlock AI provides a purpose-built, privacy-first solution for modern hiring challenges. It goes beyond invasive monitoring to detect AI-assisted cheating, proxies, and deepfakes, helping organizations hire with confidence while protecting candidate trust.

Conclusion

AI-assisted cheating has become a reality that can distort hiring decisions, waste resources, and introduce risk. Traditional monitoring tools and invasive controls are often ineffective and can be harmful, undermining candidate trust and creating legal challenges.

The solution lies in privacy-first, signal-based approaches that focus on interview integrity, not personal surveillance. By analyzing behavioral patterns, response consistency, and skill alignment, organizations can detect AI assistance without collecting sensitive data.

Purpose-built systems like Sherlock AI make this practical at scale, detecting AI-generated answers, proxies, and deepfakes while preserving fairness and candidate experience. With privacy-preserving auditing, recruiters can hire with confidence, ensuring that their decisions reflect real human talent, not artificial assistance.