Learn how to design interviews AI can’t game using smarter questions, reasoning-based evaluation, and tools like Sherlock AI to protect hiring integrity.

Abhishek Kaushik

Feb 11, 2026

Artificial intelligence is transforming how companies hire, but not always in ways recruiters intended. Applicants can now use generative AI to game interview processes and inflate their perceived skills.

Industry data shows that 1 in 5 professionals admit to secretly using AI during job interviews, and more than half say it has become the norm.

A whopping 72% of recruiters have encountered AI job fraud, and deepfake tools are now being used in approximately 15% of video interviews.

Major tech companies are reintroducing in-person interview stages to reduce reliance on remote AI-vulnerable formats, while others are experimenting with policies that either prohibit or explicitly integrate AI into interview workflows to reflect real-world job contexts.

In this blog, we explore how to design interview processes that focus on authentic skill signals, adaptive reasoning, and human insight, so organizations can identify real talent in an age where AI can game nearly any predictable interview format.

Why AI Can Game Most Interviews Today

Most interviews weren’t designed to be secure systems. They were designed to evaluate humans in a world where only humans were answering.

Interviews Reward Fluency More Than Truth: Modern interviews often prioritize clear articulation, confidence, and structure. Unfortunately, these are exactly the traits generative AI excels at.

Predictable Formats Train AI Faster Than Candidates: Behavioral frameworks like STAR, common case questions, and recycled technical prompts are now deeply embedded in AI training data. The more standardized your interview, the easier it becomes for AI to produce “perfect” answers at scale.

Scoring Systems Favor Correctness Over Capability: Most rubrics reward completeness and keyword coverage, not cognitive effort or real reasoning. AI thrives under such scoring models, even when underlying understanding is shallow.

Interviews were built to evaluate humans, not to defend against machines. Designing interviews AI can’t game requires closing that gap deliberately.

How to Design Interviews AI Can’t Game

Designing interviews that AI can’t game doesn’t mean banning technology or turning interviews into interrogations. It means redesigning how signal is generated, evaluated, and protected so that human capability remains visible even in an AI-saturated environment.

This requires changes across three layers: questions, evaluation, and the interview system itself.

1. Redesign Interview Questions for Signal, Not Style

Generic behavioral questions like “Tell me about a time you showed leadership” or “Describe a challenge you overcame” are now AI-friendly.

AI can pre-generate high-quality responses with near-perfect framing. It doesn’t need real experience, only familiarity with how experience should sound.

To make interviews AI-resistant, questions must demand experience, judgment, and tradeoffs, not narration.

Experience reconstruction

Instead of:

“Tell me about a bug you fixed”

Ask:

“Walk me through exactly how you diagnosed and fixed the last critical bug you worked on, from first symptom to resolution.”

This forces:

Process recall

Tool familiarity

Decision sequencing

Real cognitive effort

Tradeoff-based questions

Instead of:

“What’s the best way to design X?”

Ask:

“If you had to optimize for speed over reliability here, what would you sacrifice and why?”

This surfaces:

Value systems

Practical judgment

Engineering or business maturity, not just theoretical knowledge.

Constraint-based problem solving

Give problems with:

Incomplete information

Artificial limitations

Conflicting goals

These simulate real work and reveal how candidates reason under ambiguity.

The thinking process matters more than the final answer

Reward candidates for:

Asking clarifying questions

Identifying missing data

Acknowledging uncertainty

Not just for reaching “correct” conclusions.
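
One way to make this concrete is a rubric in which process signals carry more combined weight than the final answer. The sketch below is illustrative only: the criterion names, the weights, and the 0 to 4 rating scale are assumptions chosen for the example, not an established standard, and any real rubric should be calibrated per role.

```python
# Hypothetical weights (assumed for illustration): the three process
# signals together count for 70% of the score, the conclusion for 30%.
WEIGHTS = {
    "asked_clarifying_questions": 0.25,
    "identified_missing_data": 0.25,
    "acknowledged_uncertainty": 0.20,
    "reached_sound_conclusion": 0.30,
}

MAX_RATING = 4  # interviewer rates each criterion from 0 to 4


def score_response(ratings: dict[str, int]) -> float:
    """Combine per-criterion interviewer ratings into a 0-1 weighted score."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    for name in WEIGHTS:
        if not 0 <= ratings[name] <= MAX_RATING:
            raise ValueError(f"{name} must be between 0 and {MAX_RATING}")
    return sum(WEIGHTS[name] * ratings[name] / MAX_RATING for name in WEIGHTS)


# A polished answer with a correct conclusion but no visible reasoning.
polished = {
    "asked_clarifying_questions": 0,
    "identified_missing_data": 0,
    "acknowledged_uncertainty": 0,
    "reached_sound_conclusion": 4,
}

# A candidate who reasons well but lands on a weaker conclusion.
thoughtful = {
    "asked_clarifying_questions": 4,
    "identified_missing_data": 4,
    "acknowledged_uncertainty": 4,
    "reached_sound_conclusion": 1,
}

polished_score = score_response(polished)
thoughtful_score = score_response(thoughtful)
```

Under these assumed weights, the candidate who shows their reasoning outscores the one who only delivers a correct conclusion, which is the behavior the rubric is meant to encourage.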

2. Evaluate How Candidates Think, Not Just What They Say

AI-assisted answers tend to break down when reasoning has to adapt under pressure.

To design interviews AI can’t game, evaluation must shift toward cognitive effort, adaptability, and reasoning integrity.

Ask candidates to revise answers live

After an initial response, ask:

“Now assume the budget is cut in half. What changes?”

or

“How would your answer change if this assumption is wrong?”

This tests:

Mental flexibility

Depth of understanding

Ability to adapt reasoning

Introduce contradictions or edge cases

Ask:

“What would break your solution?”

“Where would this fail in the real world?”

This exposes:

Overconfidence

Superficial logic

Lack of practical grounding

Follow-up chains that force memory consistency

Ask multi-step questions that reference earlier parts of their answer:

“Earlier you said X. How does that align with Y?”

AI-generated responses often lack internal consistency across long reasoning chains.

Ask for reasoning paths, not summaries

Instead of:

“Summarize your approach”

Ask:

“What were the first three things you thought about and why?”

This reveals how the mind actually navigates problems.

“Explain your own answer” loops

Ask:

“Why is this the right decision?”

Then:

“Why is that important?”

Then:

“What would change your mind?”

This drills into depth and conviction.

3. Engineer the Interview Experience, Not Just the Questions

Even the best questions fail if the interview environment allows invisible AI assistance.

Live problem-solving over prepared responses

Avoid asking:

“Tell me how you would…”

Prefer:

“Let’s solve this together now.”

This removes pre-generation and forces real-time cognition.

Screen sharing + navigation tasks

Ask candidates to:

Walk through code

Navigate unfamiliar systems

Debug live

Interrupt-driven questioning

Break their flow intentionally:

“Pause. Why did you choose that?”

“What would you do differently if…?”

This disrupts scripted or AI-assisted delivery.

Designing interviews AI can’t game restores signal integrity in hiring.

Smart interviews are built around real cognitive work, adaptive evaluation, and system-level protections.

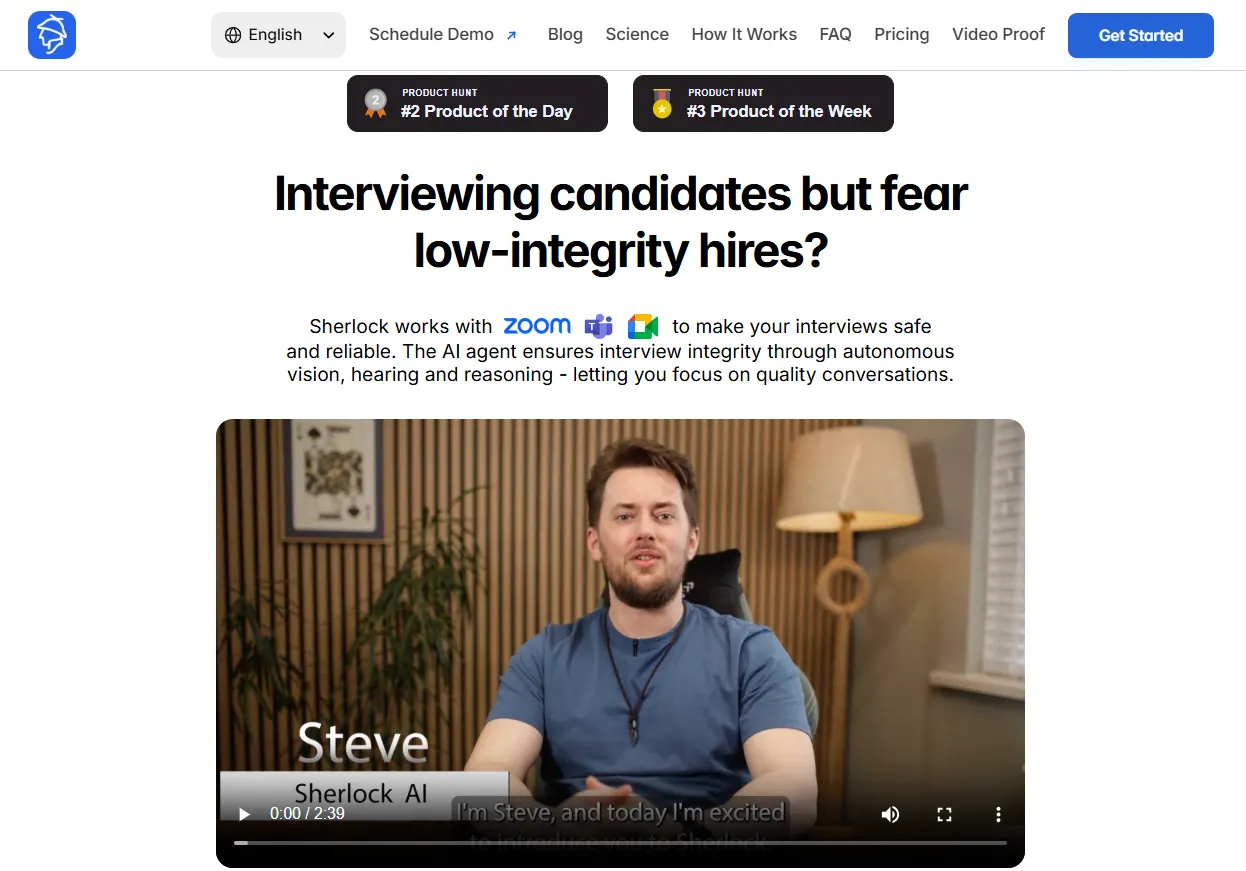

Sherlock AI: Restoring Trust in Remote Interviews

Sherlock AI is built to preserve the integrity of interview signals by operating silently within live interview environments like Zoom, Teams, and Google Meet. It doesn’t interrupt candidates or degrade their experience. Instead, it observes patterns humans can’t reliably detect.

Sherlock AI focuses on preserving fairness, protecting hiring accuracy, and preventing distorted decision-making.

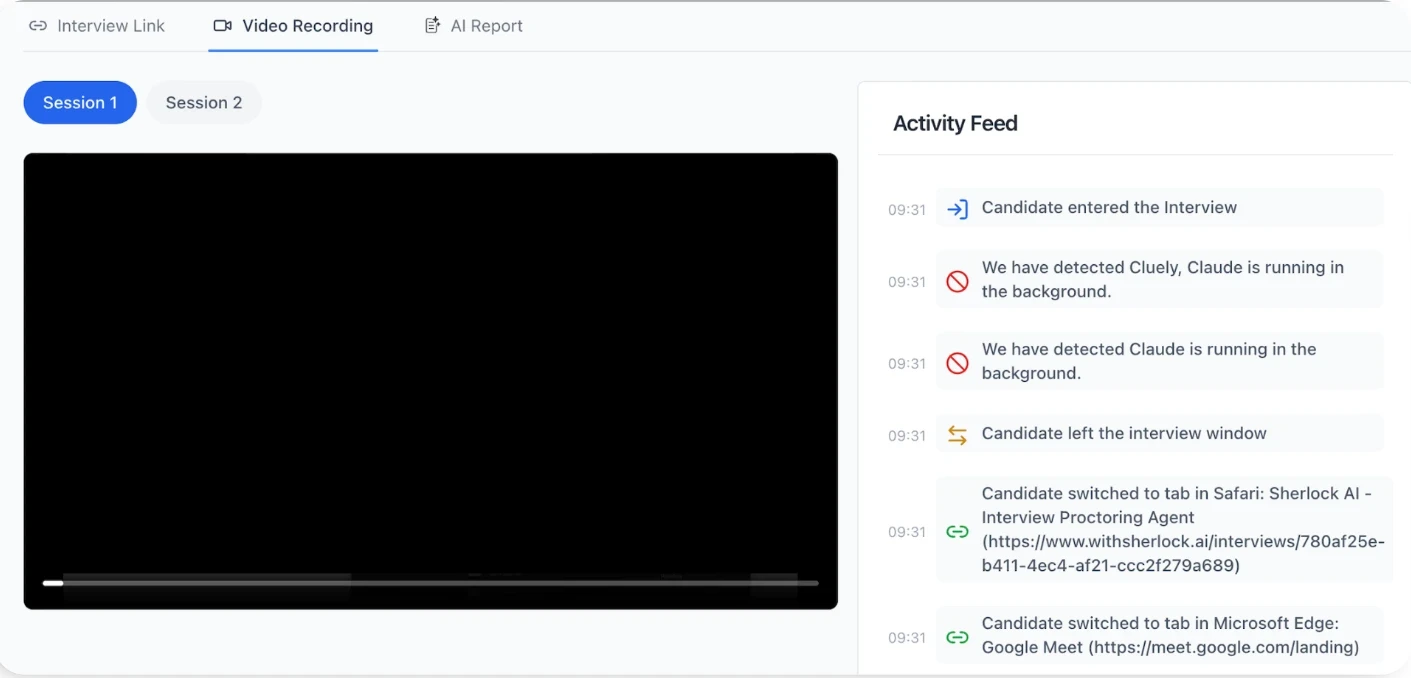

What Sherlock AI Detects That Humans Miss

Human interviewers are trained to evaluate answers, not to detect invisible AI systems operating alongside candidates. Sherlock AI fills this gap by monitoring behavioral, temporal, and identity-level signals that are impossible to track reliably in real time without automation.

Detects real-time AI copilots and LLM-assisted answering during live interviews

Flags unnatural response timing patterns linked to AI-generated replies

Identifies deepfake faces, voice cloning, and synthetic video or audio streams

Detects impersonation by matching speaker identity across interview rounds

Flags proxy candidates replacing the real applicant mid-process

Identifies scripted or pre-generated answers masquerading as spontaneous responses

Detects behavioral signals of off-screen prompting and external assistance

Surfaces mismatches between fluency and actual reasoning depth

Scores response authenticity based on spontaneity and human-like variance

Tracks cross-round consistency in identity, reasoning, and claimed experience

Flags contradictions and memory drift across multiple interviews

Generates audit-ready evidence for compliance and dispute resolution

Together, these capabilities allow Sherlock AI to turn interviews from trust-based conversations into verifiable, AI-resilient systems, enabling organizations to hire with confidence even as AI becomes invisible.
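
To give a feel for what a timing-based signal could look like, here is a generic sketch. It is not Sherlock AI's actual method, which this post does not describe. It assumes you have recorded how many seconds a candidate took to begin answering each question, and it flags sessions where those latencies are unusually uniform. The function names and the 0.15 threshold are hypothetical, and a flag is only a prompt for human review, never proof of AI use.

```python
from statistics import mean, stdev


def latency_variation(latencies_s: list[float]) -> float:
    """Return the coefficient of variation (stdev / mean) of response latencies."""
    if len(latencies_s) < 2:
        raise ValueError("need at least two latencies")
    avg = mean(latencies_s)
    if avg <= 0:
        raise ValueError("latencies must have a positive mean")
    return stdev(latencies_s) / avg


def needs_human_review(latencies_s: list[float], min_cv: float = 0.15) -> bool:
    """Flag a session whose response timing is unusually uniform.

    min_cv is a hypothetical threshold chosen for illustration; it would
    need calibration against real interview data before any use.
    """
    return latency_variation(latencies_s) < min_cv


# Nearly identical delays before every answer, regardless of difficulty.
uniform_session = [4.0, 4.1, 3.9, 4.0, 4.1]

# Quick answers to easy questions, longer pauses on hard ones.
varied_session = [1.2, 6.5, 2.8, 9.1, 3.4]

uniform_flag = needs_human_review(uniform_session)
varied_flag = needs_human_review(varied_session)
```

The design choice here is to measure relative spread rather than absolute speed, since people differ widely in how fast they answer, while near-constant delays across easy and hard questions are less typical of spontaneous speech.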

Conclusion

AI has changed what interviews reveal, not just how candidates prepare. When polished answers can be generated instantly, interviews must shift from evaluating presentation to verifying real capability.

Designing interviews AI can’t game means prioritizing signal over style, reasoning over responses, and system integrity over blind trust. With tools like Sherlock AI protecting interview authenticity at scale, organizations can move beyond suspicion and back to confident hiring.

In an AI-first hiring world, interviews must become AI-resilient systems, not just better conversations.