
Audit interview integrity using AI to detect fraud, AI-assisted cheating, and skill misrepresentation before bad hires happen. Learn how AI secures hiring decisions.

Abhishek Kaushik

Feb 17, 2026

Interview integrity is under unprecedented pressure as AI tools become widely used in hiring. In a Checkr report, 59% of hiring managers said they’ve suspected candidates used AI tools to misrepresent themselves, and 62% agreed that job seekers are now better at faking identities with AI than hiring teams are at detecting them.

The problem isn’t limited to AI-assisted deception. A separate survey revealed that 71% of HR professionals have encountered fabricated or misleading candidate information, yet only about one in five feel confident in spotting this type of fraud.

These findings make it clear that traditional interview evaluations are no longer sufficient to distinguish genuine skills from AI-assisted responses. To protect hiring quality and build trust in talent decisions, organizations must adopt AI-powered interview integrity audits that detect fraud early and reliably.

Why Interview Integrity Matters Today

Interview integrity means verifying three things: that the candidate is who they claim to be, that their skills are authentic, and that their responses reflect their own ability without outside help.

Today’s remote hiring landscape and AI tools make this harder than ever.

Remote interviews remove many natural checks that used to help confirm identity and capability. Without face-to-face interaction, it’s easier for candidates to use off-screen tools, scripted answers, or even third-party impostors to get through screens undetected.

A large manager survey found that 31% of hiring leaders have interviewed someone who later turned out to be using a fake identity, and 35% confirmed someone other than the listed applicant participated in a virtual interview.

The risks of compromised interview integrity are substantial and very real for business performance. According to research, a bad hire can cost an organization up to 30% of the employee’s first-year salary in lost productivity, retraining, and turnover costs.

When a mis-hire happens, the effects ripple outward:

Teams lose confidence in assessments and may overcompensate with extra interview rounds.

Projects get delayed and workloads shift to other employees.

Managers spend time correcting direction instead of driving outcomes.

All of this shows that interview integrity is a measurable business risk that impacts hiring quality, productivity, and organizational trust.

Why Traditional Methods Fail

Traditional interview methods were built for a world where candidates responded with their own knowledge. Today, these approaches often miss sophisticated AI-assisted fraud, especially in remote and high-volume hiring processes.

Key Limitations of Traditional Methods

Structured interviews miss hidden assistance: Standard question sequences and predictable formats make it easy for candidates to use AI copilots or hidden tools that generate real-time answers, without triggering suspicion. These tools can remain invisible during screen sharing or video calls, bypassing conventional checks.

Human review can’t spot tech-enabled deception: Traditional cues like eye contact, pauses, or confidence are no longer reliable indicators of authenticity. AI-generated responses can sound polished and natural, fooling even experienced interviewers without deeper verification technology.

Post-hire checks are too late: Waiting for performance reviews or probation periods to catch misrepresentation means bad hires slip into teams, costing companies time, money, and morale before issues surface.

Why Manual Audits Fall Short

Remote interviews remove natural safeguards: Body language, spontaneous interaction, and in-person dynamics that once helped confirm authenticity are absent in virtual settings, creating an easy vector for deception.

High volume demands overwhelm reviewers: Recruiters managing dozens of interviews per week can’t realistically scrutinize every response for subtle signs of AI assistance or identity fraud using manual processes alone.

Traditional interview methods were never designed to detect real-time AI assistance, proxy participation, or hidden cheating tools. Without AI-powered auditing integrated into the hiring flow, these gaps leave organizations vulnerable to fraud that standard processes simply don’t catch.

How AI Can Audit Interview Integrity

AI makes it possible to audit interviews not by intuition, but by evidence. Instead of relying on what looks authentic, AI evaluates what is behaviorally and technically consistent with genuine human performance at scale and in real time.

What Signals AI Can Detect

Modern AI systems can surface patterns that humans consistently miss, including:

Impersonation and identity inconsistencies: AI can compare facial movement, voice signatures, and behavioral markers across interview stages to flag when the person speaking may not be the same individual who applied or completed earlier rounds.

External assistance and AI copilots: Subtle signals such as unnatural response latency, eye-movement patterns, speech rhythm changes, and answer complexity spikes can indicate real-time help from off-screen tools or AI copilots.

Scripted or AI-generated responses: AI can identify language patterns common in generative models, such as overly generic phrasing, uniform sentence structure, and low variance in syntax, that differ from natural human speech.

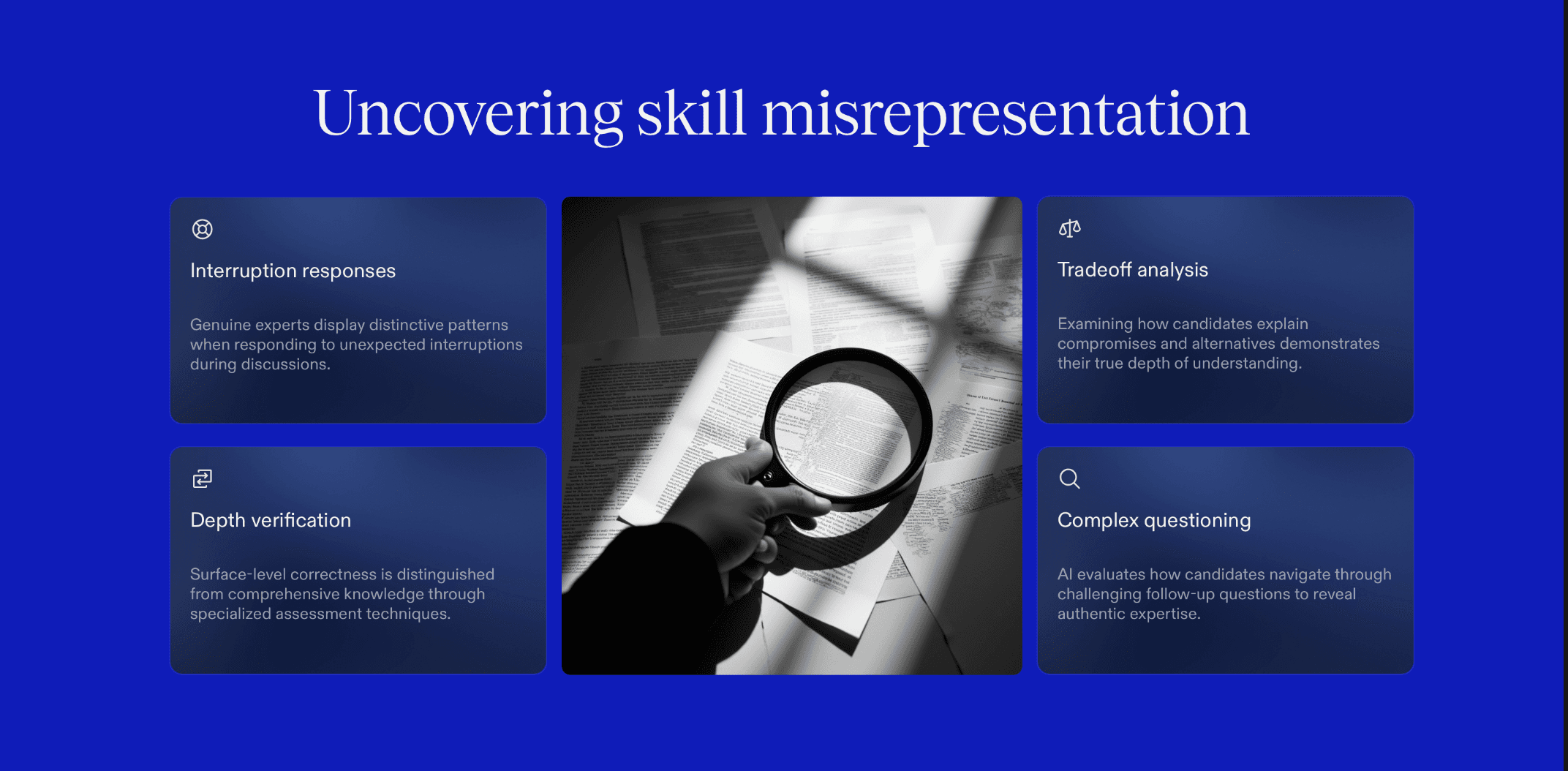

Skill misrepresentation: By analyzing how candidates reason through follow-ups, explain tradeoffs, or respond to interruptions, AI can distinguish surface-level correctness from true depth of understanding.

These are not signals a recruiter can reliably spot while running a live interview, especially under time pressure.
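To make the response-latency signal concrete, here is a minimal Python sketch that flags answers whose delay is unusual compared with the same candidate's other answers. It is an illustration only, not a description of how any particular product works. The function name `flag_latency_outliers`, the sample timings, and the 3.5 cutoff are all assumptions chosen for the example, and a flagged answer would only be a prompt for human follow-up, not proof of assistance.

```python
from statistics import median


def flag_latency_outliers(latencies_s, threshold=3.5):
    """Return indices of answers whose response delay is unusual for this candidate.

    Hypothetical helper for illustration. It uses the modified z-score,
    built on the median absolute deviation (MAD), so a single very slow
    answer does not distort the baseline it is compared against.
    """
    if len(latencies_s) < 3:
        return []  # too few answers to establish a baseline
    med = median(latencies_s)
    mad = median(abs(x - med) for x in latencies_s)
    if mad == 0:
        return []  # delays are nearly identical; nothing stands out
    return [
        i
        for i, x in enumerate(latencies_s)
        if 0.6745 * abs(x - med) / mad > threshold
    ]


# Seconds between the end of each question and the start of the answer
# (made-up values for the example).
delays = [1.2, 1.5, 1.1, 1.4, 6.0, 1.3]
print(flag_latency_outliers(delays))  # [4]
```

Comparing each candidate against their own baseline, rather than a fixed cutoff, avoids penalizing people who simply think before they speak. A real system would combine this with the other signals listed above before raising any flag.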

Why AI Auditing Is More Effective

AI brings three advantages that traditional methods cannot match:

Faster detection: AI processes behavioral, visual, and linguistic signals simultaneously, reducing the time between fraud occurring and being identified from weeks to minutes.

Scalable monitoring: Whether it’s 10 interviews a week or 10,000, AI applies the same scrutiny without fatigue, bias, or variance in judgment.

More reliable skill assessment: By filtering out assisted or scripted performance, AI helps ensure that what you’re evaluating is the candidate’s actual capability, not their toolset.

AI doesn’t replace interviews; it makes them trustworthy again. By auditing identity, independence, and skill authenticity in real time, AI restores confidence that hiring decisions are based on real people with real abilities.

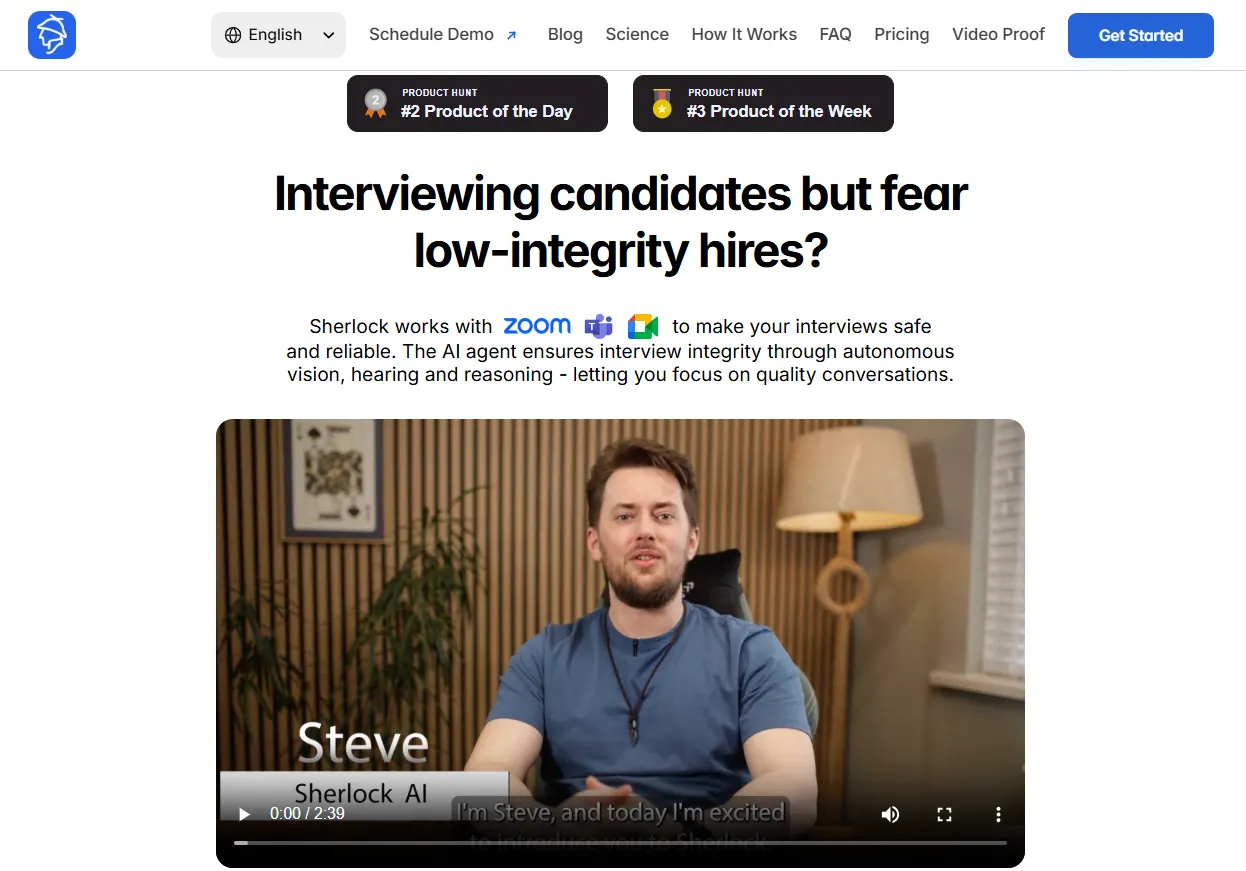

Sherlock AI: Built for Interview Integrity in the AI Era

Sherlock AI is a purpose-built interview integrity layer for remote hiring that operates silently inside live interviews.

Interview integrity can no longer be treated as a side effect of good interviewing. It needs its own system.

Sherlock AI doesn’t replace interviews or recruiters. It strengthens them by verifying what humans cannot reliably observe:

1. Identity Integrity

Detects inconsistencies in facial and voice patterns across interview rounds

Flags potential impersonation, deepfakes, or candidate substitution

Identifies mismatches between the applicant and the interview participant

Prevents proxy interviews from slipping through remote hiring

2. Independence of Thought

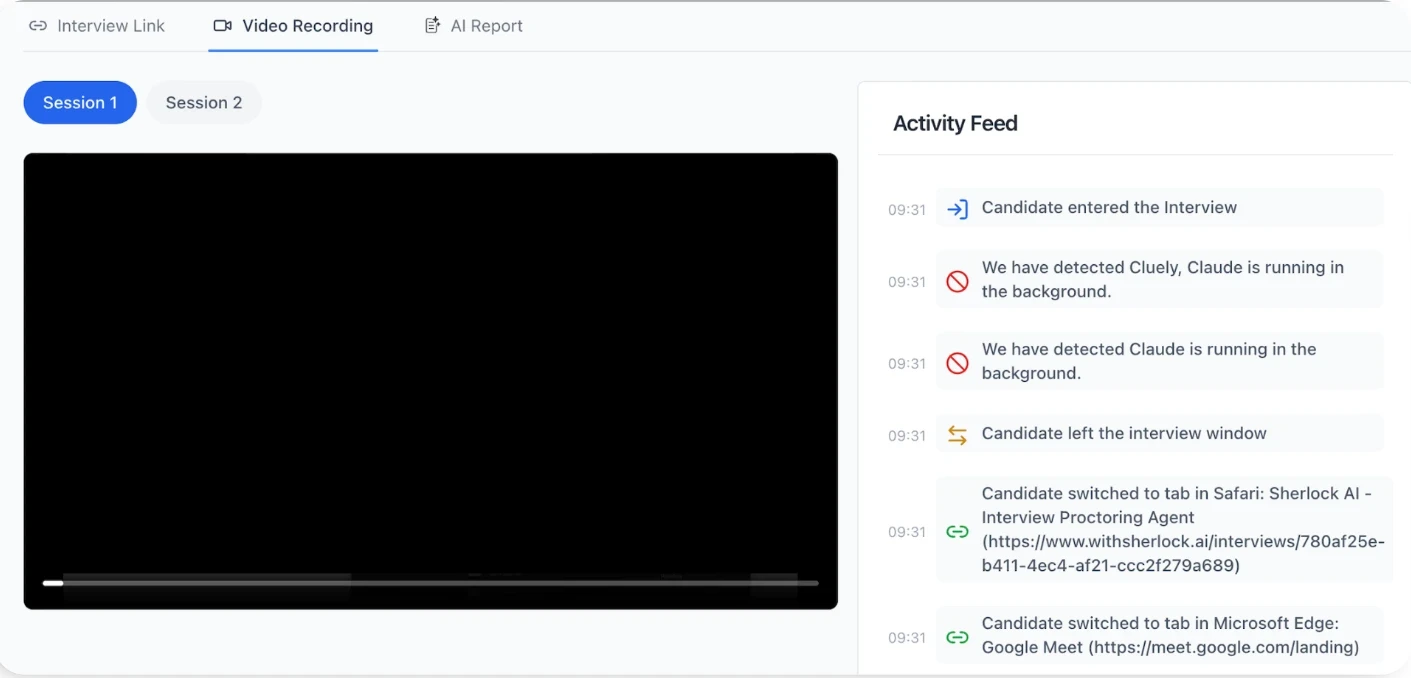

Detects signs of off-screen assistance from AI copilots or human helpers

Flags unnatural response delays linked to prompting

Identifies gaze and attention patterns associated with second-device usage

Surfaces sudden performance spikes inconsistent with earlier responses

3. Skill Authenticity

Evaluates depth of reasoning, not just surface correctness

Detects scripted or memorized responses

Identifies AI-generated language patterns

Flags candidates who repeat correct answers but fail to explain or adapt

4. AI-Generated and Scripted Response Detection

Identifies linguistic structures common in LLM-generated answers

Flags overly generic or formulaic phrasing

Detects low variability in syntax and expression

Differentiates natural speech from generated or pre-written responses
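As a rough illustration of what "low variability in syntax" can mean in measurable terms, the Python sketch below computes how much sentence length varies across a transcribed answer. This is a deliberately crude proxy and an assumption of this example, not Sherlock AI's method. The function name `sentence_length_cv` and the sample answers are hypothetical, and a low score alone says nothing definitive about whether an answer was generated.

```python
import re
from statistics import mean, pstdev


def sentence_length_cv(text):
    """Coefficient of variation of sentence lengths, measured in words.

    Hypothetical helper for illustration. Values near 0 mean every
    sentence is about the same length; spontaneous speech usually mixes
    short and long sentences. Returns None when there are fewer than
    two sentences to compare.
    """
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]
    if len(lengths) < 2:
        return None
    return pstdev(lengths) / mean(lengths)


# Made-up transcripts for the example.
uniform = "I led the team well. I built the app fast. I fixed the bug soon."
varied = (
    "Yes. We tried caching first, but it made the stale data problem "
    "much worse. So we dropped it."
)

print(sentence_length_cv(uniform))  # 0.0
print(sentence_length_cv(varied) > sentence_length_cv(uniform))  # True
```

A score like this is only useful as one feature among many, and it would need to be compared with how the same candidate speaks elsewhere in the interview rather than against a universal threshold.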

5. Real-Time Interview Auditing

Audits interviews as they happen, not after decisions are made

Surfaces integrity risks before candidates move forward

Prevents bad hires instead of explaining them later

Reduces reliance on post-hire performance discovery

6. Seamless Integration into Hiring Workflows

Works natively inside Zoom, Microsoft Teams, and Google Meet

Requires no changes to how recruiters interview

Adds no friction for candidates

Operates silently in the background

7. Compliance and Audit Readiness

Creates verifiable records of interview integrity

Supports internal audits and regulatory reviews

Strengthens defensibility of hiring decisions

Reduces legal and reputational exposure

Sherlock AI turns interview integrity from a trust-based assumption into a verifiable system, ensuring that when you hire someone, you’re hiring their real skills, not their AI setup.

Conclusion

AI has changed how candidates present themselves and, in many cases, how they misrepresent themselves. Interviews can no longer rely on trust, intuition, or traditional controls alone.

To protect hiring quality, organizations must move from assuming integrity to verifying it. AI-powered interview auditing makes this possible by validating identity, independence, and real skill in real time.

With systems like Sherlock AI, interview integrity becomes enforceable, ensuring hiring decisions are based on real people with real capabilities, even in a fully remote, AI-assisted world.