Learn how candidates use ChatGPT to cheat in interviews, the behavioral and technical warning signs to watch for, and how hiring teams can detect AI-assisted interview fraud.

Abhishek Kaushik

Jan 21, 2026

As generative AI tools like ChatGPT become more accessible, they are being used outside their intended purposes, including during job interviews. Employers are increasingly concerned about this trend: 65 percent of hiring managers reported being worried about candidates using generative AI to cheat on recruitment assessments.

Research shows that many candidates are already leveraging AI during the hiring process in subtle ways. In a recent survey, 39 percent of job seekers said they used AI during applications to generate text for resumes, cover letters, and even answers to assessment questions. Additionally, 6 percent admitted to participating in interview fraud, including impersonation or having someone else take the interview on their behalf.

This shift poses a real challenge for organizations trying to accurately assess candidate skills and fit. Traditional interviews were designed to test individual knowledge and judgment, but when candidates use tools like ChatGPT to generate responses in real time or prepare polished scripts ahead of time, those assessments can lose their effectiveness.

1. Real‑Time Use During Remote Interviews

Candidates increasingly use ChatGPT and similar tools during live interviews to generate answers on the fly rather than rely on their own knowledge or experience.

In remote technical interviews, job seekers might paste questions into ChatGPT or another AI tool and read back the response as if it were their own. Interviewers have caught candidates doing exactly this even on basic questions like “tell me about your background,” even though the candidates had been told not to use external resources.

Twenty percent of U.S. professionals report they have secretly used AI tools during job interviews, suggesting this behavior is more than anecdotal. In the same survey, 55 percent agreed AI use in interviews has become “the new norm.”

This trend has become significant enough that major tech recruiters are actively warning candidates not to use AI tools during interviews because it creates an unfair advantage and undermines the hiring process.

The result is that interviewers often hear perfect or near‑perfect answers that a candidate cannot explain when probed further, revealing the disconnect between AI output and genuine understanding.

2. Prepared Scripted Responses

Applicants often input common interview questions or role descriptions into ChatGPT ahead of time to generate ready‑made, rehearsed answers that they memorize and recite verbatim during the interview. These responses tend to include engaging but generic narratives that lack personal specificity.

Because interviewers today expect candidates to be well prepared, highly polished scripts from AI often blend in until deeper follow‑up questions expose inconsistency or surface‑level understanding.

When interviewers ask for deeper explanation or personal context, these overly prepared answers can fall apart, revealing the absence of real experience.

3. Technical Problem Solving and Coding Assistance

One of the most consequential ways candidates exploit ChatGPT is in technical interviews, coding challenges, and take‑home assignments.

Major employers and interviewers have publicly acknowledged that candidates now use ChatGPT on second screens, hidden browser tabs, or separate devices to generate code, debug errors, or interpret problem statements in real time.

AI-assisted cheating can go undetected in live coding rounds. In an experiment conducted by a tech interview platform, interviewers were unable to tell when candidates used ChatGPT mid-interview to generate code solutions. Candidates given questions drawn from common interview question sources solved them correctly 73 percent of the time, and interviewers did not suspect misuse in any of the sessions.

When candidates read back AI-generated solutions in a live coding round, they may sound fluent but often cannot explain the logical choices and trade-offs or adapt the solution to new constraints, a clear sign that the reasoning did not originate with them.

Signs of ChatGPT Cheating

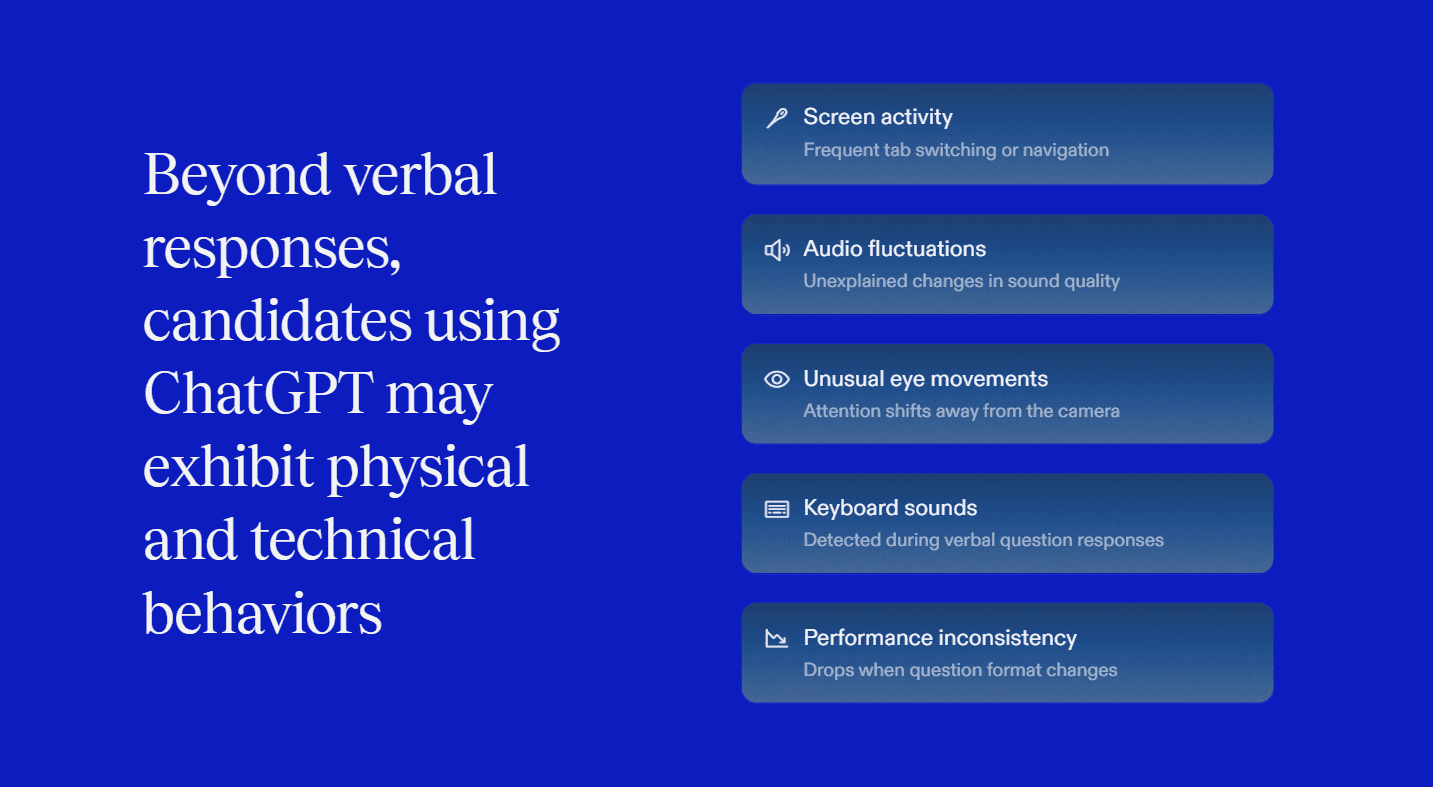

Candidates using AI during interviews often display subtle but repeatable behavioral and technical cues.

Delayed responses to straightforward questions

Sudden shifts from hesitation to highly polished answers

Difficulty explaining reasoning when asked follow-ups

Answers that sound fluent yet lack personal detail, with performance dropping sharply when interviewers probe deeper or change the question format

Frequent tab switching, typing sounds during verbal rounds, unexplained audio changes, or attention drifting away from the primary screen

How Structured Interviews and Detection Tools Help

Structured interviews create a consistent baseline that makes irregular behavior easier to spot. When candidates are evaluated using the same questions, scenarios, and follow-ups, gaps between polished answers and real understanding become more visible. Sudden shifts in response quality, vague explanations, or difficulty adapting to follow-up questions stand out clearly when the interview format is standardized.

Detection tools add critical context by surfacing patterns that interviewers may miss in real time, especially in remote settings. By tracking response timing, attention shifts, and interaction flow, these tools support interviewer judgment rather than replace it. The result is more focused follow-ups, clearer evaluations, and greater confidence that interview performance reflects genuine ability.
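To make the idea of tracking response timing concrete, here is a minimal Python sketch of one way such a signal could be computed. It is an illustration under stated assumptions, not how any particular detection product works: the function name `flag_slow_responses`, the sample latencies, and the 3.5 cutoff are all hypothetical, and the modified z-score is simply one common outlier heuristic chosen for the example.

```python
from statistics import median


def flag_slow_responses(latencies, threshold=3.5):
    """Return indices of answers that took unusually long to begin.

    latencies: seconds between the end of each question and the start
    of the candidate's answer (hypothetical input for illustration).
    Uses a modified z-score based on the median absolute deviation,
    so a single long pause does not distort the baseline.
    """
    if len(latencies) < 3:
        return []  # too little data to establish a baseline

    baseline = median(latencies)
    mad = median(abs(x - baseline) for x in latencies)
    if mad == 0:
        return []  # every answer took about the same time

    flagged = []
    for index, latency in enumerate(latencies):
        score = 0.6745 * (latency - baseline) / mad
        if score > threshold:  # only unusually slow answers are flagged
            flagged.append(index)
    return flagged


# Example: the fifth answer follows a much longer pause than the rest.
print(flag_slow_responses([2.1, 1.8, 2.4, 2.0, 9.5, 2.2]))  # prints [4]
```

A flag like this is only a prompt for a follow-up question. Long pauses have many innocent causes, such as a hard question, a poor connection, or a candidate who thinks before speaking, so the judgment stays with the interviewer.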

Sherlock AI - The Practical Detection Solution

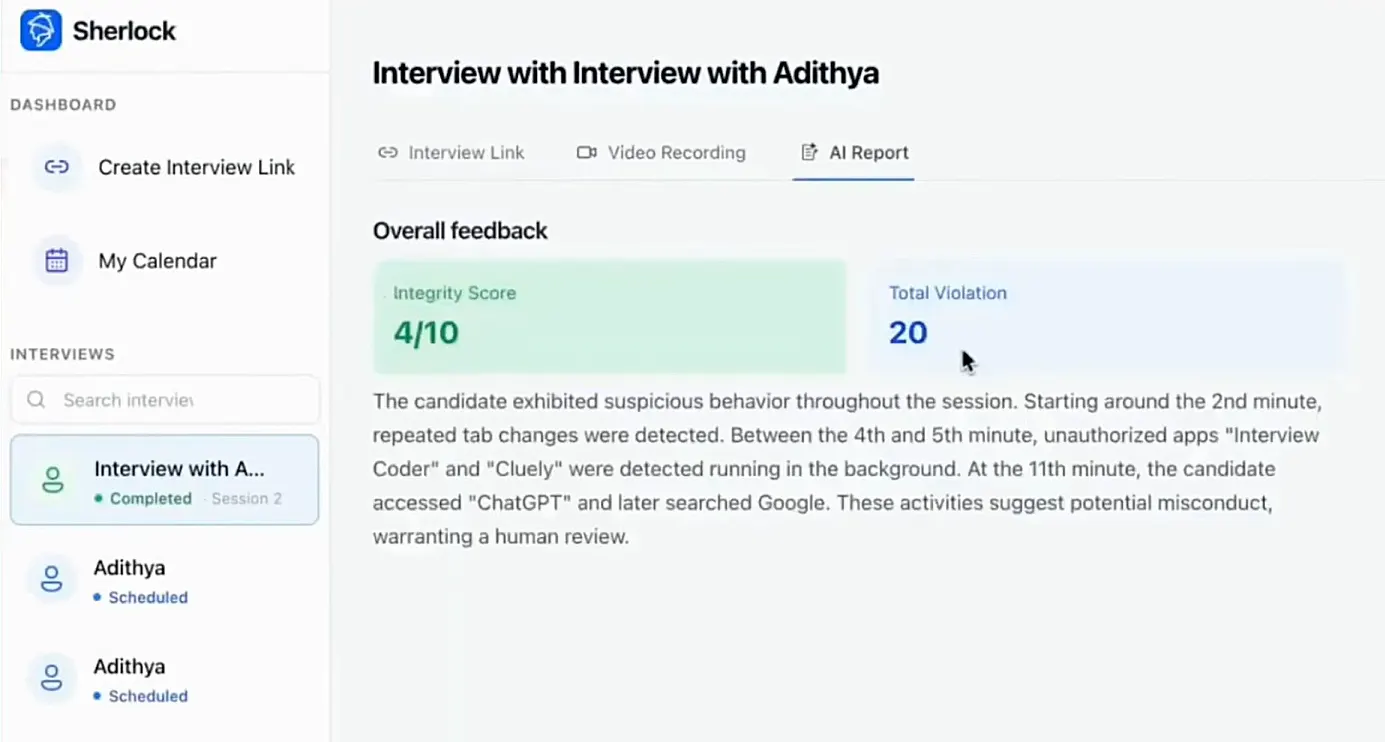

Sherlock AI is designed to support interviewers by adding visibility into risk signals without disrupting the interview experience. It strengthens hiring integrity while keeping humans in control.

Key benefits of Sherlock AI

Real-time AI assistance detection: Surfaces signals associated with live AI usage so interviewers can probe further when necessary.

Behavioral pattern tracking: Analyzes response timing, attention shifts, and consistency to highlight anomalies across the interview.

Environment and activity monitoring: Identifies suspicious off-screen behavior and context switching that often accompany hidden assistance.

Interviewer-first design: Provides insights and flags without making automated pass or fail decisions.

Post-interview risk insights: Delivers clear summaries that help hiring teams assess interview integrity alongside performance.

Together, structured interviews and tools like Sherlock AI enable fair, confident hiring decisions in an era where AI-assisted cheating is increasingly difficult to detect manually.

Conclusion

AI has changed how interviews look, but it has also changed how they can be manipulated. Behavioral cues and technical signals still matter, but they are easier to miss in remote settings. By combining structured interviews with tools that surface hidden risk signals, hiring teams can protect interview integrity without sacrificing fairness or candidate experience. The goal is not to restrict technology, but to ensure hiring decisions reflect real skills and genuine capability.