
Protect your hiring process from fake candidates. Learn how to spot AI-generated personas, deepfakes, proxy interviews, and identity fraud in interviews.

Abhishek Kaushik

Feb 3, 2026

Hiring teams now face a rapidly evolving threat: job candidates who are not just exaggerating skills, but actively misrepresenting their identities and capabilities. Gartner predicts that by 2028, as many as 1 in 4 candidate profiles worldwide could be entirely fake, driven by AI-generated personas, deepfakes, and synthetic identities.

A recent industry survey found that 59% of managers suspect candidates used AI tools to misrepresent themselves, and 31% have already interviewed someone later revealed to be using a false identity. Meanwhile, 17% of hiring managers report encountering deepfake technology in video interviews, a trend amplified by remote hiring practices that make visual and voice manipulation easier.

For recruiters and hiring managers, the implications are significant. Beyond wasted time and poor hiring outcomes, fake candidates can pose security, financial, and operational risks if brought into sensitive roles. Recognizing and filtering these candidates demands a new level of vigilance and technical strategy.

Types of Fake Candidates and Modern Interview Fraud Techniques

Recruiters and hiring managers are no longer just dealing with embellished resumes. Fraudulent candidates are using advanced technology and deceptive tactics to appear real while hiding their true identity or intentions. These tactics range from AI-generated personas to proxy interviewing and identity theft. Below are the major types you need to know, with stats and real examples to illustrate how these frauds show up in real hiring situations.

1. AI-Generated Personas and Fake Profiles

AI-generated resumes and identities look polished and often tailor themselves to job descriptions to pass automatic screening systems. Many contain detailed career histories that sound convincing but cannot be verified when probed.

Recruiters report 72% of fake hiring cases involve AI-generated resumes, portfolios, or credentials.

Remote roles increase risk since applicants don’t need to show up in person, making it easier for non-existent candidates to apply and interview.

2. Deepfake Interviews and Video Impersonation

Around 17% of hiring managers have encountered deepfake candidates in video interviews, where the person on screen isn’t who they claim to be.

One high-profile example involved a candidate dubbed “Ivan X” whose facial movements were slightly out of sync with speech, revealing a deepfake attempt used to secure an interview.

These deepfakes are sophisticated enough to pass initial visual scrutiny but often show tell-tale glitches such as unnatural eye movements or audio lag.

3. Proxy Interviewing

Instead of the person named on the resume, an accomplice attends video calls, relying on coaching or hidden feeds to answer technical questions.

This technique is a form of credential deception where the interviewee’s performance does not reflect the real worker who would do the job.

In some cases, this also includes tag-teaming, where different people handle different interview stages.

4. Stolen Identities and Fake Credentials

In a widely reported case, the U.S. Department of Justice indicted 14 North Korean nationals who used stolen identities, fabricated credentials, and false online work histories to secure remote IT roles at U.S. companies. They hid their true nationality and location by using proxy networks and fake company websites to appear legitimate.

Some fraudsters use stolen Social Security numbers, government ID photos, or professional profiles to create accounts, submit applications, and pass initial background checks.

In other cases, applicants claim to live in one country, but their digital footprint, IP activity, or contact information reveals they are operating from a different location.

This risk is especially serious because if such a candidate is hired, they may gain access to sensitive systems and data under a false identity, which can lead to data theft, intellectual property loss, compliance issues, or deliberate sabotage.

Key Takeaway

These fraud types are not theoretical; they are occurring now and impacting real hiring pipelines. Fake candidate tactics now combine technological sophistication with social engineering, blurring the line between real and artificial profiles.

Detection requires both technical tools and manual vigilance, since some fake candidates pass initial automated screening but fail deeper checks.

Technical Indicators and Behavioral Signals of Fake Candidates

Fake candidates often say the right things. What gives them away is how they behave under real interview conditions. The signals below are patterns hiring teams consistently report after repeated exposure to interview fraud.

1. Response Timing That Feels Off

Pauses before answering basic questions like role responsibilities or recent projects, followed by very clean, structured responses

Similar delay lengths across different questions, regardless of difficulty

Faster answers to complex or technical prompts than to personal or clarifying questions

These timing patterns usually appear when candidates are reading, generating, or receiving help rather than thinking naturally.
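The timing patterns above can be turned into a rough check. The sketch below is illustrative only: it assumes someone has already recorded, for each question, how many seconds passed before the candidate started answering and whether the question was "basic" or "complex". The function name, labels, and threshold are hypothetical and would need tuning on real interviews.

```python
from statistics import mean, pstdev

def timing_flags(latencies, difficulties, cv_threshold=0.15):
    """Flag suspicious response-timing patterns.

    latencies: seconds of delay before each answer starts.
    difficulties: matching list of "basic" or "complex" labels.
    cv_threshold is an illustrative assumption, not a validated cutoff.
    """
    if not latencies:
        return []
    flags = []
    avg = mean(latencies)
    # Coefficient of variation: a low value means the delays are nearly identical.
    cv = pstdev(latencies) / avg if avg > 0 else 0.0
    if len(latencies) >= 4 and cv < cv_threshold:
        flags.append("uniform_delay")
    basic = [t for t, d in zip(latencies, difficulties) if d == "basic"]
    hard = [t for t, d in zip(latencies, difficulties) if d == "complex"]
    # Complex questions answered faster than basic ones is the reverse of normal.
    if basic and hard and mean(hard) < mean(basic):
        flags.append("complex_faster_than_basic")
    return flags
```

A flag here is a prompt for a follow-up question, not a verdict. Nervous or non-native speakers can produce similar delays.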

2. Eye Movement and Visual Focus Issues

Repeated glances to the same off-screen location before or during answers

Minimal blinking or frozen facial expressions during long explanations

Eye focus that does not align with head movement or camera position

Recruiters often notice this more clearly when follow-up questions are asked quickly, leaving little time for external guidance.

3. Voice and Audio Behavior That Lacks Natural Variation

Steady, neutral tone even when discussing challenges, mistakes, or conflict

Slight changes in accent, pitch, or clarity between answers

Audio that sounds flattened or compressed during longer responses

These patterns suggest voice manipulation, AI assistance, or audio routing tools, especially when paired with other signals.

4. Signs of Multi-Device or Assisted Setup

Frequent downward or sideways glances during responses

Audible typing, clicking, or notification sounds while the candidate is speaking

Sudden camera or microphone adjustments when questions become more specific

Repeated technical interruptions at moments that require live thinking

These behaviors often increase once the interview moves beyond prepared talking points.

5. Resume Strength That Does Not Hold Up Live

Impressive role titles or tool stacks that the candidate cannot explain in detail

Answers that stay high-level when asked about decision making or tradeoffs

Difficulty describing mistakes, constraints, or lessons learned

Real experience produces uneven stories. Fake experience stays polished and vague.

6. Proxy Interviewing Patterns Across Rounds

Strong performance in early interviews, followed by noticeable drop-off later

Different communication style, confidence level, or problem-solving approach between rounds

Trouble recalling or expanding on answers given in previous interviews

These inconsistencies become obvious when interviewers compare notes across stages.

7. Identity and Personal Continuity Gaps

Hesitation on basic details like work timeline, location, or availability

Minor inconsistencies between resume, LinkedIn profile, and spoken answers

Awkward pauses when asked about personal contributions versus team outcomes

These signals point to assembled identities rather than lived careers.

8. Over-Polished Communication With No Depth

Perfectly structured answers that lack concrete examples

Reuse of the same phrases across different questions

No clarifying questions, pushback, or curiosity about the problem

Strong candidates think aloud. Fake candidates deliver finished responses.
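Phrase reuse is one of the easier signals to check from a transcript. As a minimal sketch, assuming each answer is available as plain text, the hypothetical helper below lists word sequences that recur across different answers. The three-word window is an arbitrary starting point.

```python
import re
from collections import defaultdict

def repeated_phrases(answers, n=3, min_answers=2):
    """Return n-word phrases that appear in at least `min_answers` distinct answers."""
    seen = defaultdict(set)  # phrase -> indexes of the answers containing it
    for idx, text in enumerate(answers):
        words = re.findall(r"[a-z']+", text.lower())
        for i in range(len(words) - n + 1):
            seen[" ".join(words[i:i + n])].add(idx)
    return sorted(p for p, ids in seen.items() if len(ids) >= min_answers)
```

Common filler such as "one of the" will show up too, so in practice the output needs a human read or a stop-phrase list before it means anything.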

No single signal proves fraud. But clusters of timing issues, behavioral inconsistencies, and technical anomalies strongly indicate a fake or assisted candidate. Detection improves when interviews are designed to test thinking, context, and presence, not just answers.

Methods and Tools to Detect and Filter Fake Candidates

Detecting fake candidates is about verifying identity, ensuring presence, and validating behavior across the entire interview flow. No single tool is enough. Effective detection comes from layered controls that reinforce each other.

1. Identity Verification Beyond Resume and LinkedIn

Basic profile checks are no longer sufficient.

Verifying government-issued ID against a live candidate helps confirm that the person interviewing matches the identity being presented

Cross-checking name, photo, and date of birth consistency across application data, ID, and interview presence surfaces early mismatches

Comparing identity documents against known fraud patterns helps flag reused or synthetic identities

Avoid one-time checks and instead revalidate identity at key stages, especially before final interviews or offers.
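The cross-checking step can be sketched in a few lines. This example assumes the name and date of birth from each source have already been extracted into dictionaries. The source names and field names are made up for illustration, and photo matching is out of scope because it needs a dedicated face-comparison service.

```python
def identity_mismatches(records, fields=("name", "date_of_birth")):
    """Compare identity fields across sources.

    records: mapping of source name -> dict of field values.
    Returns {field: {source: value}} for every field whose values disagree
    after case and whitespace normalization.
    """
    def norm(value):
        return " ".join(str(value).lower().split())

    mismatches = {}
    for field in fields:
        values = {src: rec[field] for src, rec in records.items() if field in rec}
        if len({norm(v) for v in values.values()}) > 1:
            mismatches[field] = values
    return mismatches
```

Running the same comparison again before the final interview or offer is one way to implement the revalidation described above.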

2. Liveness Detection to Confirm a Real Human Is Present

Liveness detection ensures the interviewee is physically present and not a recorded, manipulated, or AI-generated feed.

Passive liveness checks look for natural micro-movements like blinking, facial muscle variation, and head motion

Active liveness prompts may ask candidates to perform simple actions that are hard to fake in real time

Inconsistencies between motion, lighting, and facial depth often indicate video manipulation or replay attacks

These checks are especially effective in remote interviews, where deepfakes and video overlays are harder to spot manually.

3. Deepfake Detection for Video and Audio Streams

Deepfakes rarely fail visually in obvious ways. They fail in patterns.

Facial mapping tools detect misalignment between facial landmarks and speech

Audio analysis flags synthetic voice traits such as unnatural frequency consistency or compression artifacts

Timing mismatches between lip movement and sound are often subtle but measurable

Deepfake detection works best when combined with live questioning, where the candidate must react rather than recite.

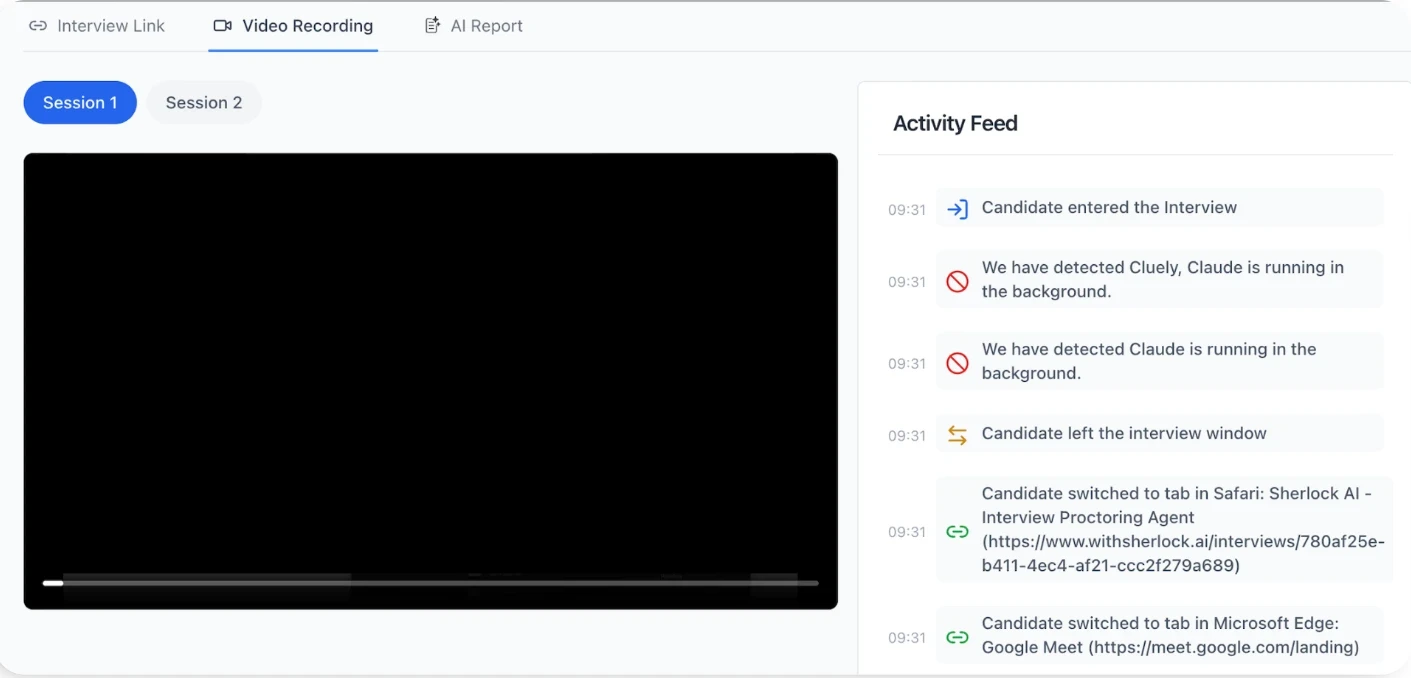

4. Browser and Device Monitoring During Interviews

Many fake candidates rely on a second screen, AI copilots, or hidden assistance.

Browser monitoring can detect tab switching, copy-paste behavior, and external prompt usage

Device fingerprinting helps identify multiple logins or unusual device changes mid-interview

Monitoring keyboard and mouse activity patterns can reveal real-time assistance

These signals are not about surveillance. They provide context when behavior does not match claimed ability.

5. Behavioral AI and Pattern Recognition

This is where manual interviews struggle most and where behavioral analysis adds value.

Behavioral models analyze response timing, hesitation patterns, and answer structure

Repeated anomalies across answers carry more weight than single events

Comparison against baseline human interview behavior helps identify outliers

Analyze how candidates respond, not just what they say. This gives interviewers objective signals to guide follow-up questions rather than replace judgment.
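To make the baseline comparison concrete, here is a simplified sketch. It assumes a single numeric measurement per answer (for example, seconds of hesitation) and a baseline list collected from past interviews. Both the z-score cutoff and the minimum anomaly count are illustrative assumptions, and production systems would use far richer models.

```python
from statistics import mean, stdev

def anomaly_count(candidate_values, baseline_values, z_threshold=2.0):
    """Count candidate measurements that sit far from the baseline distribution."""
    mu = mean(baseline_values)
    sigma = stdev(baseline_values)  # requires at least two baseline points
    if sigma == 0:
        return 0
    return sum(1 for v in candidate_values if abs(v - mu) / sigma > z_threshold)

def is_outlier(candidate_values, baseline_values, min_anomalies=3):
    """Repeated anomalies matter more than a single odd answer."""
    return anomaly_count(candidate_values, baseline_values) >= min_anomalies
```

Requiring several anomalies before raising a flag reflects the point that one unusual answer carries little weight on its own.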

6. Cross-Round Consistency Analysis

Fake candidates often pass single interviews but fail across a sequence.

Comparing communication style, reasoning depth, and confidence across rounds reveals inconsistencies

Behavioral drift between technical, behavioral, and managerial interviews is a strong indicator

Sudden changes in voice, pace, or clarity across sessions suggest proxy involvement

Structured interview data makes these patterns visible instead of anecdotal.
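As a rough illustration of cross-round comparison, the sketch below assumes each round has been summarized into a few numeric metrics such as speaking rate or average sentence length. The metric names and the 30% tolerance are hypothetical.

```python
def round_drift(rounds, tolerance=0.30):
    """Flag metrics that change sharply between consecutive interview rounds.

    rounds: list of (round_name, {metric: value}) in interview order.
    Returns sorted tuples of (previous_round, current_round, metric, relative_change).
    """
    flags = []
    for (prev_name, prev), (cur_name, cur) in zip(rounds, rounds[1:]):
        for metric in prev.keys() & cur.keys():
            if prev[metric] == 0:
                continue  # relative change is undefined against a zero baseline
            change = abs(cur[metric] - prev[metric]) / abs(prev[metric])
            if change > tolerance:
                flags.append((prev_name, cur_name, metric, round(change, 2)))
    return sorted(flags)
```

Some drift is expected, since people speak differently in a technical round than in a casual screen, so a flag is best used to decide which notes interviewers should compare first.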

7. Contextual Questioning Supported by Detection Tools

Tools are most effective when interviews are designed to use them.

Asking candidates to explain decisions made earlier in the interview

Revisiting answers from previous rounds with added constraints

Introducing small changes to problems to test adaptability

Detection tools surface signals. Interview design confirms them.

8. Risk-Based Filtering Rather Than Binary Decisions

Not every signal means fraud.

Hiring teams assign risk scores based on combined indicators

Low-risk anomalies may trigger additional verification

High-risk patterns prompt identity revalidation or live reassessment

This approach reduces false positives while still blocking real threats.
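A risk-based approach like this could look something like the following sketch. The signal names, weights, and tier cutoffs are invented for illustration. A real team would set them from its own review history rather than copy these numbers.

```python
# Hypothetical weights: higher means the signal is treated as stronger evidence.
SIGNAL_WEIGHTS = {
    "offscreen_glances": 1,
    "uniform_delay": 2,
    "voice_change_between_rounds": 4,
    "lip_sync_lag": 4,
    "id_mismatch": 5,
}

def risk_score(signals, weights=SIGNAL_WEIGHTS):
    """Sum the weights of the distinct signals observed; unknown signals count as 0."""
    return sum(weights.get(s, 0) for s in set(signals))

def triage(score, verify_at=2, revalidate_at=6):
    """Map a combined score to a next step instead of a pass/fail decision."""
    if score >= revalidate_at:
        return "revalidate_identity"
    if score >= verify_at:
        return "additional_verification"
    return "proceed"
```

For example, `triage(risk_score(["uniform_delay", "offscreen_glances"]))` returns `"additional_verification"`, while adding `"id_mismatch"` pushes the same candidate to `"revalidate_identity"`.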

How Sherlock AI Supports Secure and Trustworthy Hiring

Sherlock AI is built specifically for modern remote interview integrity. It combines real‑time monitoring with intelligent behavior analysis so your team can focus on evaluating true capability, not playing detective.

Key Features and Benefits

Real-Time Integrity Monitoring: Flags delayed responses, unnatural eye movement, or off-screen glances.

AI Usage Detection: Identifies if candidates are receiving real-time AI-generated prompts during interviews.

Proxy Interview Detection: Recognizes inconsistencies suggesting someone other than the actual candidate is answering.

Multimodal Fraud Detection: Analyzes video, audio, typing, gaze, and device behavior in one unified profile.

Behavioral Pattern Analysis: Detects unnatural pauses, over-polished answers, or repetitive phrasing across questions.

Context-Aware Evaluation: Measures how candidates solve problems, differentiating genuine skill from assisted output.

Automatic Notes & Insights: Generates flagged events and structured summaries for quick, objective review.

Workflow Integration: Works seamlessly with Google, Outlook, Apple calendars, and major video platforms.

Human-Centered Decision Support: Provides actionable signals for follow-up probing without replacing human judgment.

Privacy & Compliance First: Collects only essential data to maintain candidate trust and legal alignment.

Sherlock AI provides real-time insights, detects hidden assistance, and highlights suspicious patterns, enabling teams to make confident, fair, and secure hiring decisions without compromising candidate experience.

Conclusion

Fake candidates and sophisticated interview fraud are a growing challenge for recruiters and hiring managers. Traditional checks alone are no longer enough. Detecting AI-assisted answers, proxy interviewing, and identity manipulation requires a combination of technical monitoring, behavioral analysis, and structured interviewing.
