
Interview fraud is evolving with AI, proxy candidates, and deepfakes. Learn why traditional hiring controls fail and why interview integrity must be measured, not assumed.

Abhishek Kaushik

Mar 24, 2026

In today’s increasingly digital hiring landscape, interview fraud undermines talent acquisition, team performance, and organizational trust. Recent industry research projects that by 2028, as many as 1 in 4 candidate profiles worldwide could be fake, driven largely by generative AI tools that help applicants misrepresent their skills, their identities, or even their presence in live interviews.

Employers are already feeling the impact. Surveys reveal that 59% of hiring managers have suspected candidates of using AI to misrepresent themselves, while 35% reported that someone other than the actual applicant participated in a virtual interview.

Fraudulent hires can erode productivity, waste recruitment budgets, and diminish team morale. As AI-powered deception tools outpace traditional screening methods, hiring teams must rethink how they detect and deter interview fraud, or risk making costly mistakes in every hiring cycle.

Interview Fraud Has Evolved Faster Than Hiring Processes

Interview fraud today looks nothing like it did a few years ago. It’s no longer limited to obvious impersonation or candidates reading answers off another screen.

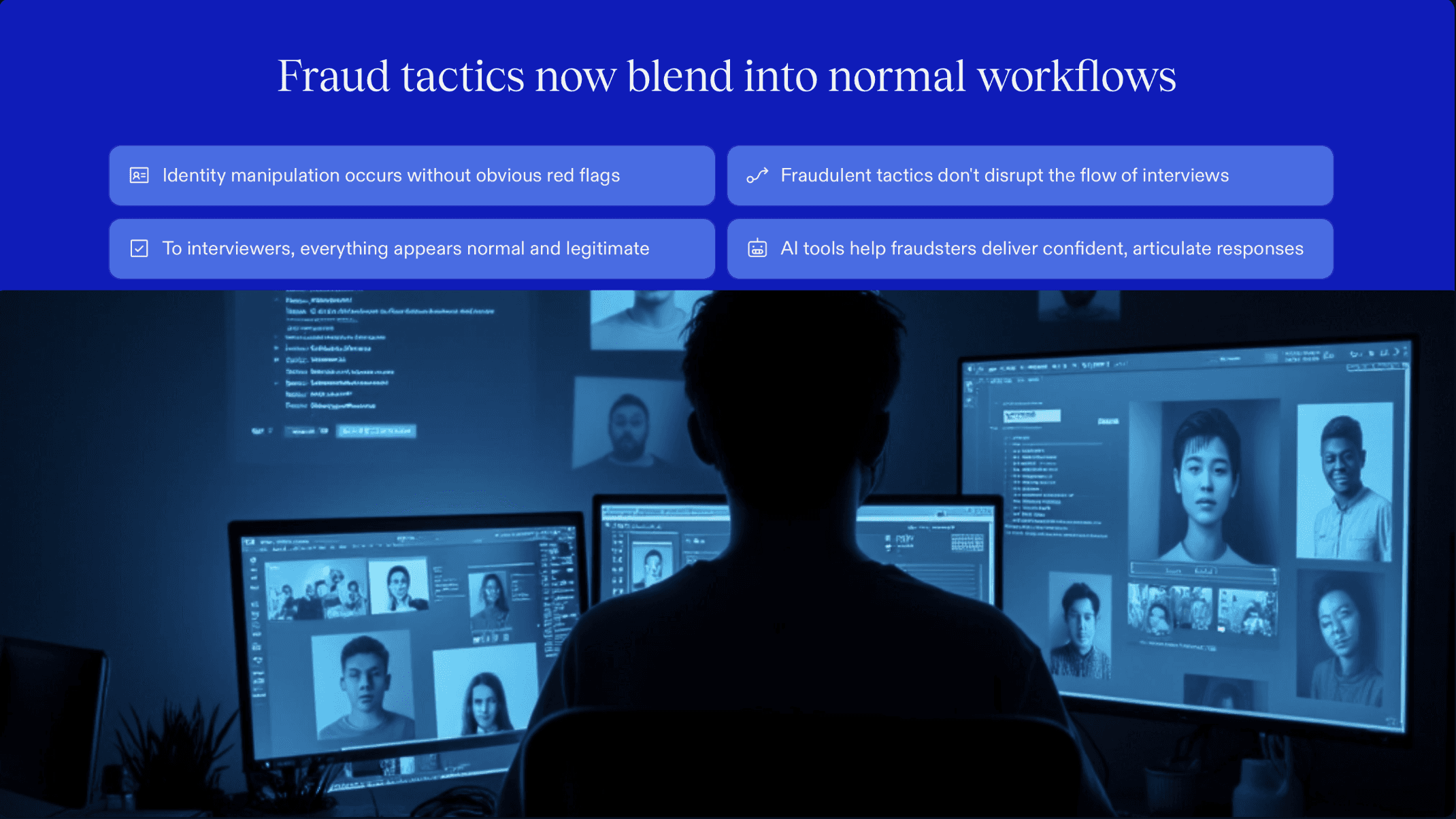

What makes modern interview fraud especially dangerous is how seamlessly it blends into normal hiring workflows:

AI-assisted responses that sound articulate and confident but lack real depth or ownership

Scripted answers delivered fluently, masking gaps in actual skill or experience

Proxy candidates appearing for early or technical rounds, then disappearing later

Deepfake video or voice tools that obscure who is really present in the interview

None of these tactics disrupt the interview flow. To an interviewer, everything appears “business as usual.”

Most teams still depend on intuition, interviewer experience, and basic identity checks. Unfortunately, “gut feel” breaks down when confidence can be manufactured, answers can be generated in real time, and identities can change without obvious signals.

The result is a widening gap between how interview fraud works today and how hiring processes are built to detect it. Many teams are still defending against yesterday’s fraud, and because their processes haven’t evolved fast enough to keep up, modern AI-enabled tactics slip through unnoticed.

Explore: 15 Interview Fraud Examples Hiring Teams Must Know in 2026

Traditional Interview Controls Can’t See What Actually Matters

Most hiring teams already rely on multiple safeguards to reduce risk, such as resumes, ID verification, proctoring tools, and post-hire audits. The issue isn’t effort; it’s misalignment. These controls were built to validate access and credentials, not to evaluate what’s actually happening inside an interview.

At the interview stage, where skills, identity, and authenticity should come together, traditional controls fall short:

Resumes validate historical claims, not real-time problem-solving or ownership of answers

ID checks confirm who logged in, not who is actively responding or receiving help

Proctoring tools look for obvious violations, missing subtle forms of AI or human assistance

Post-hire audits detect fraud only after the cost has already been incurred

This creates critical blind spots that hiring teams rarely have visibility into:

No continuity across rounds

There’s little assurance that the candidate demonstrating skills in later stages is the same person who appeared earlier, especially in remote or high-volume hiring.

No way to detect external assistance

AI prompts, off-screen helpers, or parallel devices can influence responses without leaving visible traces.

No verification that demonstrated skills are real

Polished explanations and confident delivery can mask shallow understanding, memorized scripts, or AI-generated answers.

The core problem is timing and focus. These controls either activate too late or monitor the wrong signals. While they secure access and documentation, they miss the behavioral and response-level indicators that actually determine interview integrity, leaving modern fraud hidden in plain sight.

Read more: 8 Clear Signs a Candidate Is Using AI to Answer Questions During a Live Interview

Interview Integrity Needs to Be Measured, Not Assumed

As interview fraud becomes more sophisticated, hiring teams can no longer rely on trust, intuition, or isolated checks. To keep pace, fraud detection must shift from being subjective and assumed to systematic, continuous, and evidence-based.

Interview integrity becomes visible through a set of measurable signals:

1. Consistency Across Interviews and Rounds

Genuine candidates typically show stable reasoning patterns and skill expression over time.

Alignment in explanations, terminology, and problem-solving approach across rounds

Natural progression in depth rather than sudden jumps in quality or confidence

Detection of inconsistencies that may indicate coaching, substitution, or proxy participation
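To make “alignment in terminology” a little more concrete, here is a minimal Python sketch. It assumes you have text transcripts of a candidate’s answers to the same recurring question (for example, “walk me through your last project”) in each round, and it compares vocabulary overlap between rounds using Jaccard similarity. The function names, the four-letter token rule, and the 0.2 threshold are hypothetical choices for illustration only; this is not how Sherlock AI or any other product actually measures consistency.

```python
import re
from itertools import combinations

# Hypothetical threshold; a real system would need to calibrate this on labeled data.
MIN_OVERLAP = 0.2


def terms(transcript: str) -> set[str]:
    """Lowercased words of 4+ letters, a crude stand-in for domain terminology."""
    return set(re.findall(r"[a-z]{4,}", transcript.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of terms two answers have in common (0.0 = none, 1.0 = identical)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def flag_inconsistent_rounds(
    rounds: dict[str, str], threshold: float = MIN_OVERLAP
) -> list[tuple[str, str, float]]:
    """Return every pair of rounds whose terminology overlap falls below the threshold."""
    vocab = {name: terms(text) for name, text in rounds.items()}
    flagged = []
    for r1, r2 in combinations(rounds, 2):
        score = jaccard(vocab[r1], vocab[r2])
        if score < threshold:
            flagged.append((r1, r2, round(score, 2)))
    return flagged


# Illustrative answers to the same project question in three rounds (made-up text).
rounds = {
    "screen": "I built the payment service in Go using Kafka for events and Postgres for storage",
    "technical": "The payment service used Kafka events and Postgres storage, written in Go",
    "final": "We leveraged a synergistic microservices paradigm with blockchain ledgers",
}
print(flag_inconsistent_rounds(rounds))
# → [('screen', 'final', 0.0), ('technical', 'final', 0.0)]
```

A low score is only a prompt for a human follow-up, not proof of a proxy: a candidate who describes a different project or simply rephrases will also score low, and a real system would need far richer signals than word overlap.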

2. Response Behavior Under Real Interview Conditions

How answers are produced often reveals more than the answers themselves.

Response latency patterns that suggest real-time thinking versus assisted generation

Over-polished or templated phrasing that doesn’t match spontaneous follow-ups

Sudden mid-interview improvement in clarity or structure that may indicate external help
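Two of these signals, unusual response latency and a sudden jump in answer quality, can be illustrated with simple statistics. The Python sketch below is a hypothetical example under stated assumptions, not Sherlock AI’s method: it assumes you already have per-answer start latencies in seconds and per-answer quality scores (for instance, hypothetical interviewer ratings from 1 to 5). Latency outliers are found with the modified z-score (median and median absolute deviation, with the commonly cited 3.5 cutoff), measured against the candidate’s own baseline rather than a population norm.

```python
from statistics import median

# Commonly cited cutoff for the modified z-score; an illustrative default, not a tuned value.
Z_CUTOFF = 3.5


def modified_z_scores(values: list[float]) -> list[float]:
    """Robust z-scores based on the median and the median absolute deviation (MAD)."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        # MAD is zero when at least half the values are identical; the score is
        # undefined, so this sketch simply flags nothing in that case.
        return [0.0 for _ in values]
    return [0.6745 * (v - med) / mad for v in values]


def flag_latency_outliers(latencies: list[float], cutoff: float = Z_CUTOFF) -> list[int]:
    """Indices of answers whose start latency is unusual for this candidate."""
    return [i for i, z in enumerate(modified_z_scores(latencies)) if abs(z) > cutoff]


def largest_quality_jump(scores: list[float]) -> tuple[int, float]:
    """Split point and size of the biggest rise in average score (after minus before)."""
    best_idx, best_jump = 0, 0.0
    for i in range(1, len(scores)):
        before = sum(scores[:i]) / i
        after = sum(scores[i:]) / (len(scores) - i)
        if after - before > best_jump:
            best_idx, best_jump = i, after - before
    return best_idx, best_jump


# Made-up data for one interview: seconds before each answer began, and rubric scores.
latencies = [2.1, 1.8, 2.4, 2.0, 9.5, 2.2, 1.9, 8.7]
scores = [2, 2, 3, 2, 5, 5, 5]
print(flag_latency_outliers(latencies))  # → [4, 7]
print(largest_quality_jump(scores))      # → (4, 2.75)
```

Either result only tells an interviewer where to probe with a spontaneous follow-up; a long pause can just as easily mean a hard question, and a late improvement can mean a candidate who warmed up.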

3. Depth and Authenticity of Demonstrated Skills

Surface-level correctness is easy to fake; real capability is harder to sustain.

Ability to handle follow-up questions, edge cases, and alternative scenarios

Clear reasoning behind decisions, trade-offs, and problem-solving steps

Signals of genuine understanding versus memorized or AI-generated responses

4. Identity Continuity Throughout the Hiring Journey

Modern hiring often treats each interview as a standalone event.

Verification that the same individual appears and performs across all rounds

Detection of silent handoffs between early and later interviews

Continuity checks that connect behavior and identity end to end

When these signals are measured together, interview integrity stops being an assumption and becomes defensible evidence.

In an environment shaped by remote hiring and AI-assisted deception, hiring teams need visibility into how interviews actually unfold.

Sherlock AI: Purpose-Built for Interview Integrity

Modern interview fraud succeeds because traditional tools were never designed to evaluate interviews themselves. Sherlock AI fills that gap by focusing on interview-level integrity rather than resumes, recordings, or post-hire signals.

Instead of trying to “catch candidates,” Sherlock AI evaluates whether an interview is trustworthy based on how it unfolds.

How Sherlock AI Addresses Modern Interview Fraud

1. Interview-Level Integrity Analysis (Not Candidate Surveillance)

Sherlock AI confines its analysis to the interviews themselves, without monitoring candidates outside the interview context.

Evaluates authenticity within the interview interaction itself

Avoids invasive tracking, background monitoring, or continuous surveillance

Keeps the focus on interview quality, not personal data

2. Continuity Tracking Across Interview Rounds

Sherlock AI connects interviews across stages to ensure the same individual is being assessed throughout the process.

Detects identity or behavior shifts between early and later rounds

Flags inconsistencies that suggest proxy interviews or silent handoffs

Maintains end-to-end interview integrity, especially in high-volume hiring

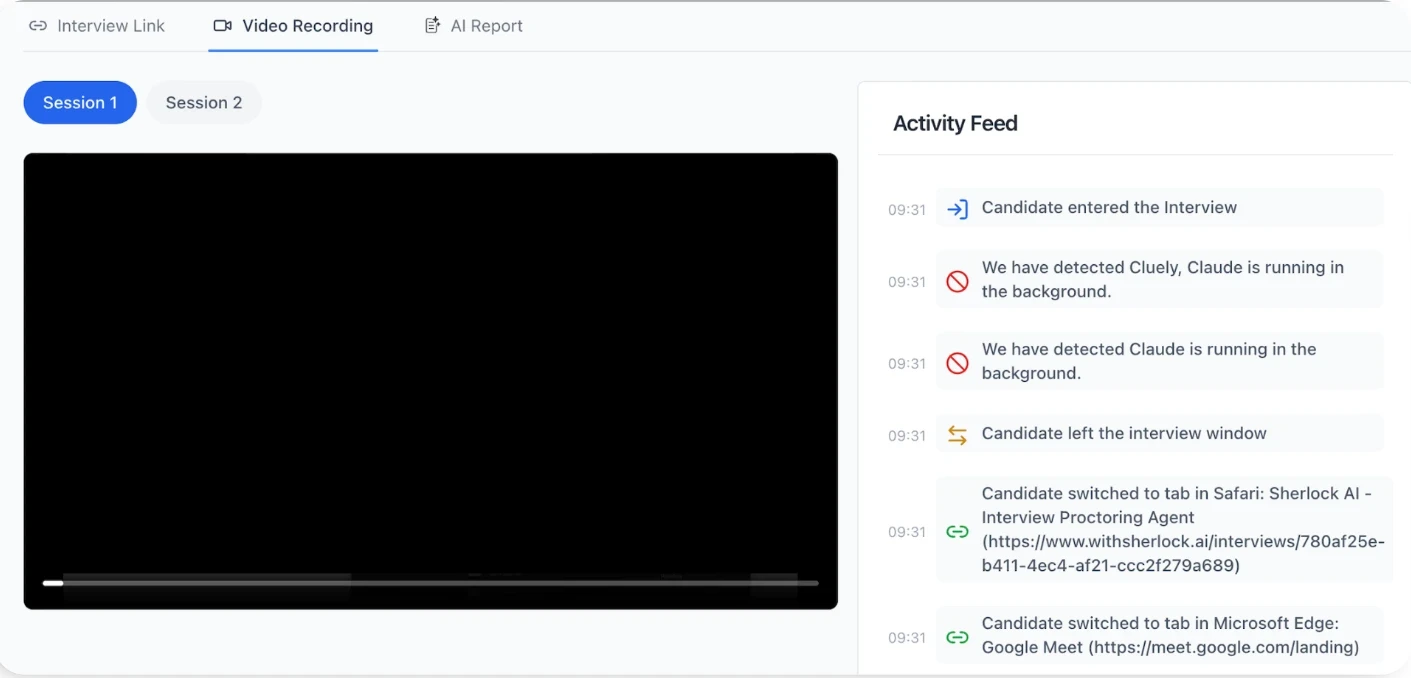

3. Detection of External Assistance and AI-Aided Responses

Rather than looking for obvious cheating, Sherlock AI identifies subtle assistance patterns.

Response latency and timing irregularities

Over-structured or templated answer patterns

Sudden jumps in answer quality that don’t align with prior performance

These signals help surface AI prompts, off-screen helpers, or real-time coaching even when interviews appear smooth.

4. Skill Authenticity and Depth Validation

Sherlock AI focuses on how candidates demonstrate skills, not just what they answer.

Evaluates reasoning depth, follow-up handling, and conceptual consistency

Distinguishes genuine understanding from scripted or generated responses

Reduces the risk of confident delivery passing for real competence

5. Privacy-First, Scalable by Design

Built for modern hiring volumes without compromising candidate trust.

No storage of unnecessary personal or sensitive data

Works across remote, high-volume, and campus hiring environments

Designed to scale without adding friction to interviewers or candidates

Why Sherlock AI Works Where Traditional Controls Fail

Traditional controls secure access and credentials. Sherlock AI secures interview trust.

By measuring consistency, behavior, skill depth, and identity continuity at the interview level, Sherlock AI turns hiring integrity into evidence, not intuition. In a hiring environment shaped by AI and remote workflows, that shift isn’t optional anymore. It’s how trustworthy hiring scales.

Conclusion

Interview fraud has quietly become part of modern hiring, especially in remote and high-volume interviews. As AI makes it easier to fake confidence, skills, and even identity, relying on gut feel or basic checks is no longer enough. When fraud slips through, the cost shows up later as bad hires, wasted time, and lost trust in the process.

The shift hiring teams need to make is simple: stop assuming interviews are trustworthy and start measuring them. Real integrity comes from observing consistency, behavior, skill depth, and identity continuity across interviews.

This is where purpose-built systems like Sherlock AI matter. By focusing on interview-level integrity instead of candidate surveillance, Sherlock AI helps teams detect modern interview fraud without adding friction or compromising trust. In an AI-driven hiring world, measuring interview trust isn’t optional anymore; it’s how reliable hiring scales.