
Learn how to detect and stop AI interview fraud using real-time monitoring, identity verification, and actionable detection strategies for secure hiring.

Abhishek Kaushik

Apr 14, 2026

AI is changing hiring, but it is also changing how candidates cheat. Today, interview fraud is no longer limited to someone reading notes off screen. Candidates can use AI copilots that generate answers in real time, receive live help from hidden devices, or even use proxy test takers.

What once required elaborate coordination can now be done with a browser tab and a second device. AI tools can listen, analyze questions, and deliver polished, technically correct responses in seconds. To an untrained eye, the candidate appears confident and capable. In reality, the interview performance may not reflect the candidate’s true skills at all. In a 2026 analysis of over 19,000 interviews, more than one in three candidates displayed behavior flagged for potential cheating, suggesting this issue is widespread rather than isolated.

For recruiters and hiring managers, the challenge is clear. How do you detect AI interview fraud accurately, and how do you stop it before a bad hire enters your organization?

The stakes are higher than just a mismatched hire. When organizations unknowingly hire candidates who misrepresented their identity or skills, the impact can include poor performance, security risks, data exposure, and loss of trust in the hiring process. As remote and global hiring continue to grow, interview integrity has become a business risk, not just a recruiting concern.

This guide breaks down the common fraud methods, the signals that reveal them, and the systems companies now use to protect interview integrity, including platforms like Sherlock AI.

What Is AI Interview Fraud

AI interview fraud happens when a candidate uses artificial intelligence or unauthorized assistance to misrepresent their skills, identity, or performance during an interview.

This includes:

AI tools generating answers in real time

Proxy candidates attending interviews

Hidden human helpers off camera

Deepfake or other identity manipulation tools

The goal is simple. Make the candidate appear more skilled, experienced, or even like a completely different person.

How AI Interview Fraud Actually Happens

AI interview fraud is no longer random or obvious. It often involves deliberate use of AI tools, hidden assistance, or identity manipulation to create a false impression of a candidate’s skills. Understanding the common methods used helps recruiters recognize risk signals early and apply the right detection strategies.

1. AI Copilots and Real-Time Answer Generators

AI interview assistants can listen to questions and instantly produce structured, high-quality responses. Some tools also provide:

Live coding solutions

Behavioral interview answer frameworks

Technical explanations tailored to the question

These answers often sound impressive but lack deep, contextual understanding when challenged.

2. Proxy Interviews

A proxy interview is when someone else, usually more skilled, takes the interview on behalf of the candidate.

This may happen through:

Different people appearing in different interview rounds

Off-camera experts feeding answers through earbuds

Full impersonation using shared credentials

Proxy fraud often goes unnoticed when identity checks happen only once at the start.

3. Hidden Devices and Second Screens

Candidates may use:

Phones placed just outside camera view

Smartwatches receiving text prompts

Tablets or second monitors with AI tools open

Because these devices are small and off screen, human interviewers rarely notice them.

4. Deepfake and Identity Manipulation

Though still emerging, deepfake tools can alter:

Facial appearance

Voice tone

Lip synchronization

This makes visual verification unreliable if not supported by biometric and behavioral analysis.

How to Detect AI Interview Fraud

Detection requires analyzing patterns, not just isolated behaviors. Below are the methods used to detect AI interview fraud:

1. Response Timing Analysis

AI-generated answers often introduce subtle but consistent delays. The candidate appears to think, but the pause reflects AI processing time rather than natural recall.

Red flag patterns:

Similar pause length before most answers

Faster responses to complex questions than simple ones

Delays followed by overly structured responses

Example: A candidate pauses for nearly the same amount of time before every technical answer, then delivers a perfectly structured response that sounds rehearsed rather than conversational.
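One rough way to quantify "similar pause length before most answers" is the coefficient of variation of the pauses: the standard deviation divided by the mean. The sketch below is only an illustration, not a description of how any particular product works. The function names, the sample timings, and the 0.15 threshold are all assumptions, and a real system would need to calibrate the threshold against honest interviews.

```python
from statistics import mean, pstdev


def pause_consistency(pauses_s):
    """Return the coefficient of variation (std / mean) of pre-answer pauses.

    pauses_s: pause lengths in seconds, one per answer.
    A low value means the pauses are nearly the same length.
    """
    if len(pauses_s) < 2:
        raise ValueError("need at least two pauses")
    avg = mean(pauses_s)
    if avg <= 0:
        raise ValueError("pauses must be positive")
    return pstdev(pauses_s) / avg


def flag_uniform_pauses(pauses_s, cv_threshold=0.15):
    """Flag a session whose pauses vary less than the (assumed) threshold."""
    return pause_consistency(pauses_s) < cv_threshold


# Hypothetical timings: one suspiciously uniform, one that varies naturally.
uniform = [3.1, 3.0, 3.2, 2.9, 3.1]
natural = [0.8, 4.5, 1.2, 6.0, 2.3]

uniform_flag = flag_uniform_pauses(uniform)
natural_flag = flag_uniform_pauses(natural)
```

A flag like this is a prompt for a closer look, not proof. Some candidates simply pause in a steady rhythm.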

2. Speech and Language Pattern Shifts

When candidates rely on AI, their speaking style may suddenly change.

Look for:

Sudden improvement in vocabulary or structure

Inconsistent tone across different questions

Robotic or overly formal phrasing in spoken answers

AI generated language often sounds written rather than spoken.

Example: Early answers are simple and conversational, but later responses suddenly become highly polished, use advanced terminology, and sound like written content being read aloud.
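A shift like this can be approximated with simple style features computed from interview transcripts. The sketch below compares average sentence length and average word length between early and late answers. It assumes transcripts are already available as plain text, and the feature choice and function names are illustrative assumptions rather than an established detection method.

```python
import re


def style_features(text):
    """Compute two crude style features from a transcript snippet."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one word")
    return {
        "words_per_sentence": len(words) / len(sentences),
        "chars_per_word": sum(len(w) for w in words) / len(words),
    }


def style_shift(early, late):
    """Return late/early ratios per feature; values well above 1 suggest a shift."""
    a, b = style_features(early), style_features(late)
    return {name: b[name] / a[name] for name in a}


# Hypothetical transcript snippets from the same interview.
early = "I used Python a lot. It was fun. We built a small app."
late = (
    "Leveraging a microservices architecture, we systematically decomposed "
    "the monolithic application into independently deployable components, "
    "thereby substantially improving scalability and maintainability."
)

shift = style_shift(early, late)
```

Ratios like these only make sense when compared within one candidate's interview. Topic changes alone can raise them, so they work best alongside other signals.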

3. Gaze and Eye Movement Tracking

Eye behavior can reveal off screen assistance.

Suspicious indicators include:

Repeated glances to the same side of the screen

Reading style eye movement patterns

Eyes moving while the candidate is supposedly thinking

AI powered gaze tracking can detect these patterns more reliably than human observation.

Example: The candidate repeatedly looks to the lower right corner of their screen before answering, with eye movements that resemble reading from a hidden prompt window.
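Assuming a gaze estimator already produces normalized screen coordinates (that component is not shown here, and it is the hard part), the "repeated glances to the same area" signal reduces to measuring how much time the gaze spends in one region. The region bounds, sample data, and function name below are hypothetical.

```python
def region_dwell_fraction(samples, region):
    """Fraction of gaze samples that fall inside a rectangular screen region.

    samples: list of (x, y) points, normalized so (0, 0) is the top-left
             corner of the screen and (1, 1) is the bottom-right.
    region:  (x_min, y_min, x_max, y_max) in the same coordinates.
    """
    if not samples:
        raise ValueError("no gaze samples")
    x0, y0, x1, y1 = region
    inside = sum(1 for x, y in samples if x0 <= x <= x1 and y0 <= y <= y1)
    return inside / len(samples)


# Assumed region: the lower right corner of the screen.
LOWER_RIGHT = (0.75, 0.75, 1.0, 1.0)

# Hypothetical samples captured in the seconds before an answer.
gaze = [(0.5, 0.5), (0.9, 0.9), (0.85, 0.8), (0.5, 0.4), (0.95, 0.9), (0.8, 0.85)]

dwell = region_dwell_fraction(gaze, LOWER_RIGHT)
```

A high dwell fraction in the same region across many questions is the pattern of interest. A single glance means little, since people look away while thinking.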

4. Inability to Explain Reasoning

AI tools can provide answers, but candidates who rely on them often struggle with unscripted follow-ups.

Try:

Asking the candidate to explain why they chose a specific approach

Changing one variable in the problem and asking them to adapt

Requesting the same solution using a different method

If performance drops sharply, AI assistance may be involved.

Example: After giving a strong solution to a coding problem, the candidate struggles when asked why they chose that approach or how they would modify it for a slightly different scenario.

5. Identity and Presence Inconsistencies

Fraud often involves more than one person.

Warning signs include:

Subtle facial differences across interview rounds

Lighting and environment changes that suggest different locations

Voice tone inconsistencies

Continuous identity verification is far more reliable than a one time ID check.

Example: In a follow up interview, the candidate’s facial features, lighting setup, or voice tone appear noticeably different from the previous round, raising concerns about whether the same person is present.
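One common building block for continuous verification is to compare face embeddings sampled during the interview against a reference embedding captured at enrollment. The sketch below shows only that comparison step using cosine similarity. It assumes some face recognition model (not shown) has already produced the embedding vectors, and the 0.8 threshold and tiny 3-dimensional vectors are purely illustrative.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("zero-length vector")
    return dot / (norm_a * norm_b)


def matches_reference(reference, samples, threshold=0.8):
    """For each sampled embedding, report whether it matches the reference.

    The threshold is an assumption; real models document their own values.
    """
    return [cosine_similarity(reference, s) >= threshold for s in samples]


# Hypothetical embeddings: the second sample points in a very different direction.
reference = [1.0, 0.0, 0.0]
samples = [[0.9, 0.1, 0.0], [0.0, 1.0, 0.0]]

checks = matches_reference(reference, samples)
```

Running the check at intervals and across rounds is what makes the verification continuous. A single failed sample may just be a bad frame, so repeated mismatches matter more than one.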

6. Environment and Device Monitoring

AI interview fraud often depends on secondary devices.

Detection systems can identify:

Phone usage during the interview

Smartwatch interactions

Additional screens reflected in glasses or eyes

Other people entering or leaving the room

These signals are difficult for human interviewers to catch in real time.

Example: The candidate frequently looks down at their lap and their smartwatch screen lights up multiple times during complex questions, suggesting they may be receiving external assistance.

This kind of pattern based observation helps recruiters move from vague suspicion to structured, evidence based interview integrity.

Why Manual Detection Is No Longer Enough

Recruiters are trained to assess skills, not run digital forensics. AI fraud signals are subtle, fast, and often invisible to the human eye.

Manual detection leads to:

Missed fraud cases

Inconsistent decisions across candidates

Bias caused by relying on “gut feeling”

AI interview fraud requires AI powered detection.

How to Stop AI Interview Fraud

Detection must be paired with prevention. Organizations that build structured safeguards into the interview process reduce the chances of fraud before it affects hiring decisions.

Use continuous identity verification

Identity checks should run throughout the interview, not just at the start. Ongoing verification helps ensure the same person remains present and prevents proxy switching between questions or interview rounds.

Restrict unauthorized tools and applications

A secure interview environment can limit access to screen sharing software, remote control tools, AI answer generators, and unauthorized browser activity. Reducing tool access lowers the opportunity for real time assistance.

Monitor behavior and the interview environment in real time

AI based monitoring can detect unusual gaze patterns, the presence of additional devices, or other people entering the room. This provides early warning of hidden assistance without interrupting the interview flow.

Use dynamic and interactive questioning

Structured scripts are easier for AI tools to handle. Live follow ups, problem variations, and scenario based questions force candidates to think in real time and demonstrate genuine understanding.

Standardize integrity checks across all candidates

Applying the same monitoring and verification process to every candidate ensures fairness, reduces bias, and prevents fraudsters from targeting weaker interview stages.

Train interviewers to probe beyond surface answers

Recruiters and hiring managers should be prepared to ask why, how, and what would change questions. Deeper probing exposes whether the candidate truly understands the topic or is relying on generated responses.

Combine multiple detection signals

No single behavior proves fraud. Reliable prevention combines identity verification, behavioral analysis, environmental monitoring, and language pattern evaluation to form a complete risk picture.
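Combining signals can be as simple as a weighted sum that also reports which signal contributed most, so a reviewer can see why a session scored the way it did. The sketch below assumes each signal has already been normalized to a 0 to 1 risk value. The weights, signal names, and function name are illustrative assumptions, not values used by any specific platform.

```python
# Assumed weights; they sum to 1 so the combined score also stays in [0, 1].
WEIGHTS = {
    "identity": 0.40,
    "behavior": 0.25,
    "environment": 0.20,
    "language": 0.15,
}


def risk_score(signals, weights=WEIGHTS):
    """Combine per-signal risk values (0 to 1) into one score plus a breakdown.

    Returns (score, contributions), where contributions is a list of
    (signal_name, weighted_value) pairs sorted from largest to smallest.
    """
    missing = set(weights) - set(signals)
    if missing:
        raise ValueError(f"missing signals: {sorted(missing)}")
    for name in weights:
        if not 0.0 <= signals[name] <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    contributions = {name: weights[name] * signals[name] for name in weights}
    ranked = sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)
    return sum(contributions.values()), ranked


# Hypothetical session: identity looks fine, behavior looks unusual.
session = {"identity": 0.1, "behavior": 0.8, "environment": 0.5, "language": 0.6}

score, breakdown = risk_score(session)
top_signal = breakdown[0][0]
```

Keeping the per-signal breakdown next to the total is what lets a human reviewer challenge or confirm the result instead of trusting a single number.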

These preventive measures help organizations move from reactive fraud detection to proactive interview integrity, especially when supported by dedicated platforms like Sherlock AI.

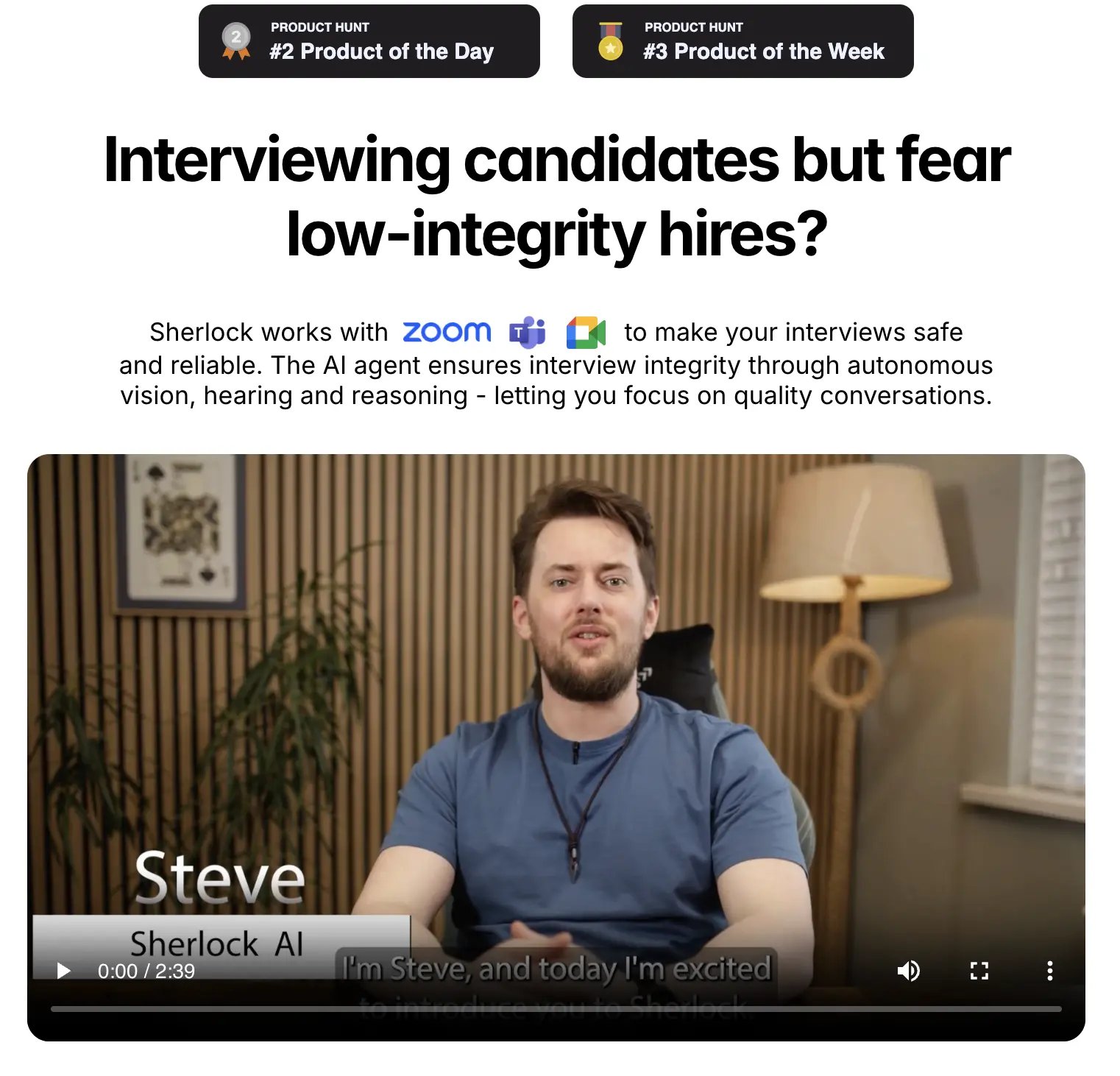

How Sherlock AI Detects and Stops AI Interview Fraud

Sherlock AI is built specifically to address AI driven interview fraud in remote and global hiring. It combines identity verification, behavioral analysis, and environmental monitoring to protect interview integrity in real time.

Sherlock AI helps organizations in the following areas:

1. Real-Time Behavioral Monitoring

Sherlock AI observes candidates during interviews to detect subtle fraud signals that humans cannot reliably see.

Key features include:

Eye movement and gaze tracking

Tracks where candidates are looking and flags unusual patterns that suggest hidden assistance.

Response timing analysis

Detects delays or pacing that are consistent with AI generated answers.

Detection of overly polished or scripted answers

Flags answers that appear unnaturally structured or inconsistent with earlier responses.

Real-time monitoring ensures potential fraud is identified as it happens, not after the interview concludes.

2. Continuous Identity Verification

Sherlock AI verifies candidate identity throughout the interview process, not just at login.

Confirms the same person is present across multiple rounds

Detects proxy candidates attempting to switch in the middle of an interview

Combines facial recognition, biometrics, and behavioral patterns

Continuous identity verification prevents impersonation and strengthens interview integrity.

3. Environment and Device Monitoring

Sherlock AI monitors the candidate’s physical environment to reduce hidden assistance risk.

Detects additional screens, phones, or smartwatches

Flags unexpected movements or other people entering the room

Monitors lighting changes and background anomalies

This ensures that candidates are not receiving hidden guidance during the interview.

4. Language and Response Pattern Analysis

Sherlock AI identifies signs of AI generated answers or external assistance.

Analyzes speech patterns and vocabulary shifts

Detects repeated phrasing, unnatural structure, or overly formal language

Flags inconsistencies between technical and behavioral answers

This helps recruiters understand whether answers are genuinely the candidate’s own.

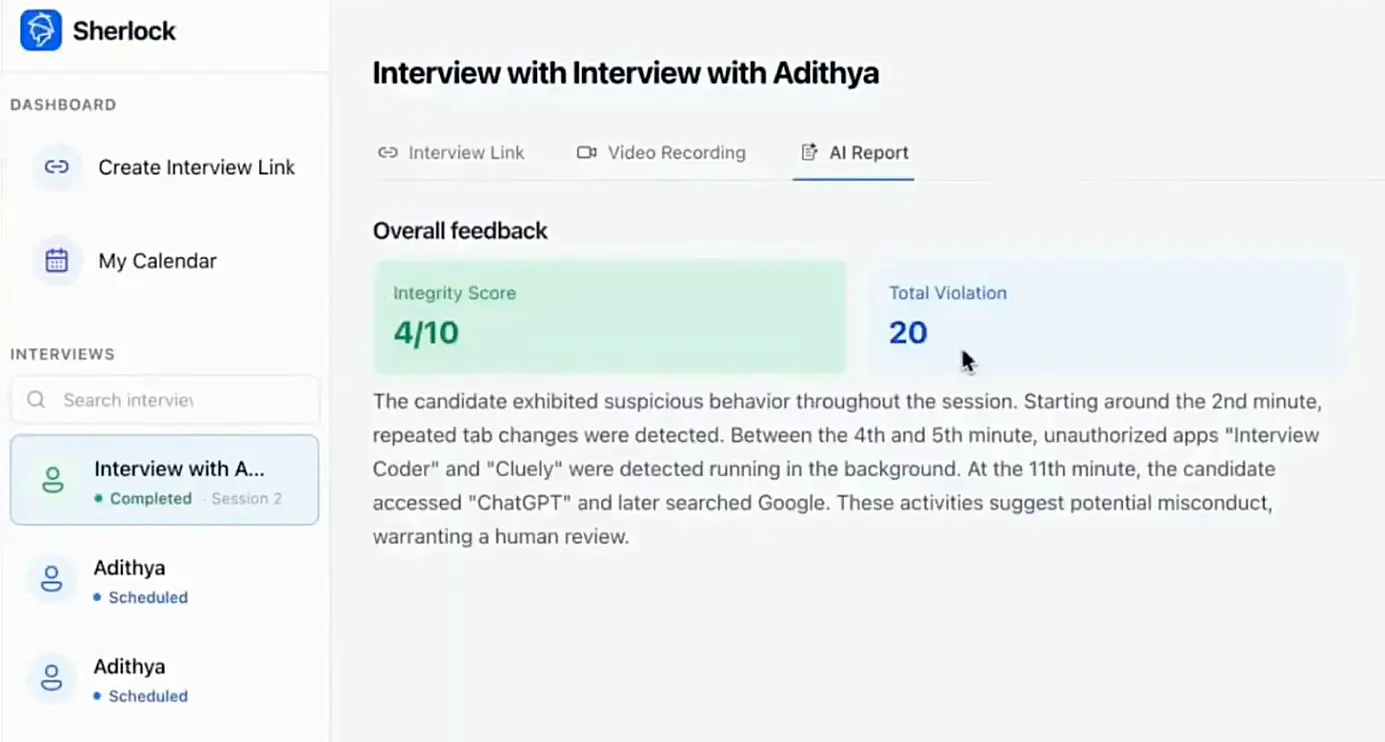

5. Explainable Fraud Risk Scoring

Sherlock AI provides a clear, structured risk score that recruiters can act on confidently.

Combines identity verification, behavior, environment, and language analysis

Generates actionable insights for hiring teams

Helps decision makers identify high-risk situations without bias

With explainable risk scoring, recruiters can evaluate talent while maintaining high confidence in interview integrity.

Final Thoughts

AI interview fraud is growing because the tools to cheat are becoming easier to access. Traditional video interviews and human observation alone cannot keep up.

Organizations that want accurate hiring decisions must move from passive observation to active, AI powered interview integrity. Detecting and stopping AI interview fraud is no longer optional. It is a core part of secure, trustworthy hiring.

Sherlock AI helps ensure that the person you hire is the person you interviewed, with the skills they truly claim to have.