Learn how to balance AI and human judgment in remote interviews with a hybrid workflow that improves fairness, detects integrity risks, and keeps final hiring decisions human-led.

Abhishek Kaushik

Feb 25, 2026

Remote interviews have become the default hiring model for global teams, but they’ve also exposed a new challenge: scaling evaluation without losing human judgment. Today, 45% of organizations already use AI in recruitment, where it reduces time-to-hire by up to 50% and cuts recruitment costs by 30%, making AI tools indispensable for high-volume remote hiring.

But efficiency comes with trade-offs. 40% of candidates say they feel uneasy about AI in hiring, and 45% worry about algorithmic bias, highlighting growing concerns around fairness and transparency. At the same time, the rise of AI-assisted applications has created new integrity risks, with recruiters reporting difficulty identifying authentic candidates due to AI-generated content, especially in remote processes.

Organizations that rely entirely on automation risk bias, poor candidate experience, and missed soft-skill insights. Those that ignore AI struggle with scale, consistency, and interview integrity.

In this guide, we’ll break down how to design a hybrid interview workflow where AI handles verification, pattern detection, and structured scoring, while humans focus on judgment, culture fit, and final hiring decisions.

What AI Should Handle vs. What Humans Should Decide

A balanced remote interview process starts with a clear division of responsibilities. AI should generate signals and structured data, while human interviewers interpret context and make the final hiring decision.

Where AI Adds the Most Value

AI excels at tasks that require consistency, pattern detection, and real-time monitoring at scale:

Identity verification and face matching

Confirms the candidate’s identity against submitted documents

Detects multiple faces, screen substitutions, or mid-interview swaps

Proxy interview and environment risk detection

Flags tab switching, virtual machines, remote desktop usage, and device anomalies

Identifies background voices, hidden prompts, or unusual eye-gaze patterns

Speech and behavioral signal analysis

Tracks response latency, voice continuity, and answer similarity across questions

Highlights potential scripted or AI-assisted responses for human review

Structured scoring for skills assessments

Applies standardized rubrics to technical or role-based questions

Ensures every candidate is evaluated on the same measurable criteria

These capabilities improve consistency, auditability, and scalability, especially in high-volume remote hiring.
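To make "standardized rubrics" concrete, here is a minimal sketch of weighted rubric scoring. The criteria names, weights, and 1-5 rating scale are illustrative assumptions, not taken from any specific platform; the point is only that every candidate passes through the same scoring function.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float  # relative importance; normalized inside weighted_score


# Hypothetical rubric for illustration only.
RUBRIC = [
    Criterion("correctness", 0.5),
    Criterion("code_quality", 0.3),
    Criterion("communication", 0.2),
]


def weighted_score(ratings: dict[str, int], rubric: list[Criterion],
                   scale_max: int = 5) -> float:
    """Return a 0-100 score from per-criterion ratings on a 1..scale_max scale."""
    missing = [c.name for c in rubric if c.name not in ratings]
    if missing:
        # Refuse to score partially rated candidates so scores stay comparable.
        raise ValueError(f"missing ratings for: {missing}")
    for c in rubric:
        if not 1 <= ratings[c.name] <= scale_max:
            raise ValueError(f"rating for {c.name} must be in 1..{scale_max}")
    total_weight = sum(c.weight for c in rubric)
    weighted = sum(c.weight * ratings[c.name] for c in rubric)
    return round(100 * weighted / (total_weight * scale_max), 1)


score = weighted_score(
    {"correctness": 4, "code_quality": 3, "communication": 5}, RUBRIC
)
```

Rejecting incomplete ratings, rather than silently scoring them, is one way to keep candidates on "the same measurable criteria"; a real rubric would likely be richer than three weighted numbers.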

Where Human Judgment Is Irreplaceable

Humans bring contextual understanding, empathy, and role-specific nuance that AI cannot reliably infer:

Cultural fit and motivation

Alignment with team values, communication style, and growth mindset

Genuine interest in the role versus rehearsed responses

Problem-solving depth and creativity

How candidates approach ambiguous scenarios

Ability to adapt, ask clarifying questions, and think aloud

Contextual interpretation of behavior

Distinguishing nervousness from dishonesty

Accounting for connectivity issues, language differences, or neurodivergent communication styles

Final hiring decisions

Weighing AI signals alongside interview performance, references, and business needs

Applying role-specific judgment that goes beyond structured scoring

The Operating Principle: AI as Signal, Humans as Decision-Makers

AI should surface anomalies, standardize evaluation, and reduce administrative load.

Humans should validate those signals, interpret context, and own the hiring outcome.

This “AI as co-pilot” model prevents over-automation, reduces bias, and preserves the human insight required to make confident remote hiring decisions.

Reducing Bias While Maintaining Fairness

One of the biggest advantages of combining AI with human interviewers is the ability to increase consistency without removing critical judgment. When designed correctly, a hybrid model can reduce subjective decision-making while still accounting for real-world context.

Here is how AI + Humans improve objectivity:

AI standardizes evaluation criteria

AI applies the same scoring logic, question weighting, and behavioral benchmarks to every candidate. This minimizes variation caused by interviewer mood, fatigue, or unconscious preferences.

Structured interview rubrics reduce subjectivity

Using predefined scoring frameworks ensures candidates are evaluated on skills, competencies, and role-relevant behaviors rather than gut feeling. AI helps enforce rubric adherence and highlights gaps in interviewer scoring.

Humans review AI flags rather than blindly trusting them

AI should surface signals such as response similarity, unusual device activity, or inconsistent timelines. Recruiters then validate whether these are genuine risks or explainable situations (e.g., lag, background noise, accessibility tools).

Calibration sessions using AI insights

Hiring teams can compare AI-generated score distributions across interviewers to:

Identify lenient vs. strict evaluators

Align on what “strong,” “average,” and “weak” actually look like

Improve inter-rater reliability over time

This creates a data-backed feedback loop that continuously improves fairness.
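As a rough illustration of what a calibration session might look at, the sketch below compares each interviewer's average score with the overall average and labels large gaps. The function names, the sample data, and the 0.5-point threshold are all assumptions made for this example.

```python
from statistics import mean


def interviewer_offsets(scores: dict[str, list[float]]) -> dict[str, float]:
    """Each interviewer's mean score minus the mean of all scores given."""
    all_scores = [s for given in scores.values() for s in given]
    overall = mean(all_scores)
    return {name: round(mean(given) - overall, 2) for name, given in scores.items()}


def label_evaluators(offsets: dict[str, float],
                     threshold: float = 0.5) -> dict[str, str]:
    """Label interviewers whose average sits far from the group average."""
    labels = {}
    for name, offset in offsets.items():
        if offset >= threshold:
            labels[name] = "lenient"
        elif offset <= -threshold:
            labels[name] = "strict"
        else:
            labels[name] = "aligned"
    return labels


# Hypothetical scores on a 1-5 scale, keyed by interviewer.
scores = {
    "alice": [4, 5, 4],
    "bob": [2, 3, 2],
    "chen": [3, 4, 3],
}
offsets = interviewer_offsets(scores)
labels = label_evaluators(offsets)
```

A gap like this is a prompt for discussion, not proof of bias: an interviewer may simply have seen a stronger or weaker set of candidates, which is exactly the context a calibration session is meant to surface.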

Risks to Watch For

Over-reliance on AI scores

Treating AI outputs as final decisions can:

Reinforce hidden model biases

Penalize candidates with non-linear communication styles

Reduce holistic evaluation

AI scores should be inputs, not verdicts.

Ignoring edge cases

Certain candidates may trigger false positives due to:

Neurodivergent communication patterns

Speech differences or accents

Low bandwidth, camera lag, or shared workspaces

Use of accessibility technologies

Without human review, these factors can be misclassified as integrity risks.

Best Practice: Evidence-Based, Human-Validated Decisions

A fair remote interview process follows three principles:

Standardize what can be standardized (questions, rubrics, scoring)

Review anomalies with context rather than auto-rejecting

Make final decisions through trained human panels

This approach improves equity, defensibility, and candidate trust while still benefiting from AI’s scale and consistency.
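The "review anomalies with context" principle can be sketched as a small triage step. Everything here is hypothetical: the flag kinds, the context labels, the confidence threshold, and the mapping of benign explanations are placeholders. The one deliberate design choice is that there is no "reject" route at all; the most the code can do is hand a flag to a human.

```python
from dataclasses import dataclass, field
from enum import Enum


class Route(Enum):
    NO_ACTION = "no_action"
    HUMAN_REVIEW = "human_review"  # intentionally no auto-reject route


@dataclass
class Flag:
    kind: str          # e.g. "gaze_anomaly" (hypothetical label)
    confidence: float  # 0..1, as reported by a hypothetical detector


@dataclass
class Triage:
    route: Route
    notes: list[str] = field(default_factory=list)


# Hypothetical mapping: flag kind -> candidate context that may explain it.
BENIGN_CONTEXT = {
    "gaze_anomaly": {"screen_reader", "low_bandwidth"},
    "audio_gap": {"low_bandwidth"},
    "background_voice": {"shared_workspace"},
}


def triage(flags: list[Flag], context: set[str],
           review_threshold: float = 0.3) -> Triage:
    """Route flags to human review, annotated with possible benign causes."""
    notes: list[str] = []
    for flag in flags:
        if flag.confidence < review_threshold:
            continue  # too weak to be worth a reviewer's time
        explained_by = sorted(BENIGN_CONTEXT.get(flag.kind, set()) & context)
        if explained_by:
            notes.append(
                f"{flag.kind}: possible benign explanation ({', '.join(explained_by)})"
            )
        else:
            notes.append(f"{flag.kind}: no known benign explanation on file")
    route = Route.HUMAN_REVIEW if notes else Route.NO_ACTION
    return Triage(route, notes)


result = triage([Flag("background_voice", 0.8)], {"shared_workspace"})
```

Note that a known benign explanation does not dismiss the flag; it only gives the reviewer context, so the judgment call stays with a person.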

Designing a Hybrid Interview Workflow

A well-designed remote hiring process integrates AI at key checkpoints while preserving human control over evaluation and decision-making. The goal is to automate verification and structure, so interviewers can focus on depth, context, and candidate potential.

Before the Interview: Establish Trust and Baseline Signals

AI-powered authentication and environment checks

Identity verification through face matching and document validation

Detection of multiple faces, virtual devices, or remote access tools

Baseline audio, video, and network quality assessment

Pre-interview skill assessments

Role-specific technical or functional tests with standardized scoring

Consistent benchmarking across all candidates

Early detection of mismatches between claimed and demonstrated skills

This stage ensures interviewers enter the conversation with verified candidates and objective performance data.

During the Interview: AI in the Background, Humans in the Lead

AI monitors integrity signals silently

Flags tab switching, background prompts, unusual eye movement, or voice inconsistencies

Tracks response timing and potential scripted patterns

Captures structured notes aligned to evaluation rubrics

Interviewers focus on deep evaluation

Probing follow-up questions

Real-world scenario discussions

Assessing communication, ownership, and adaptability

By offloading monitoring and note-taking to AI, interviewers can stay fully present in the conversation and evaluate higher-order competencies.

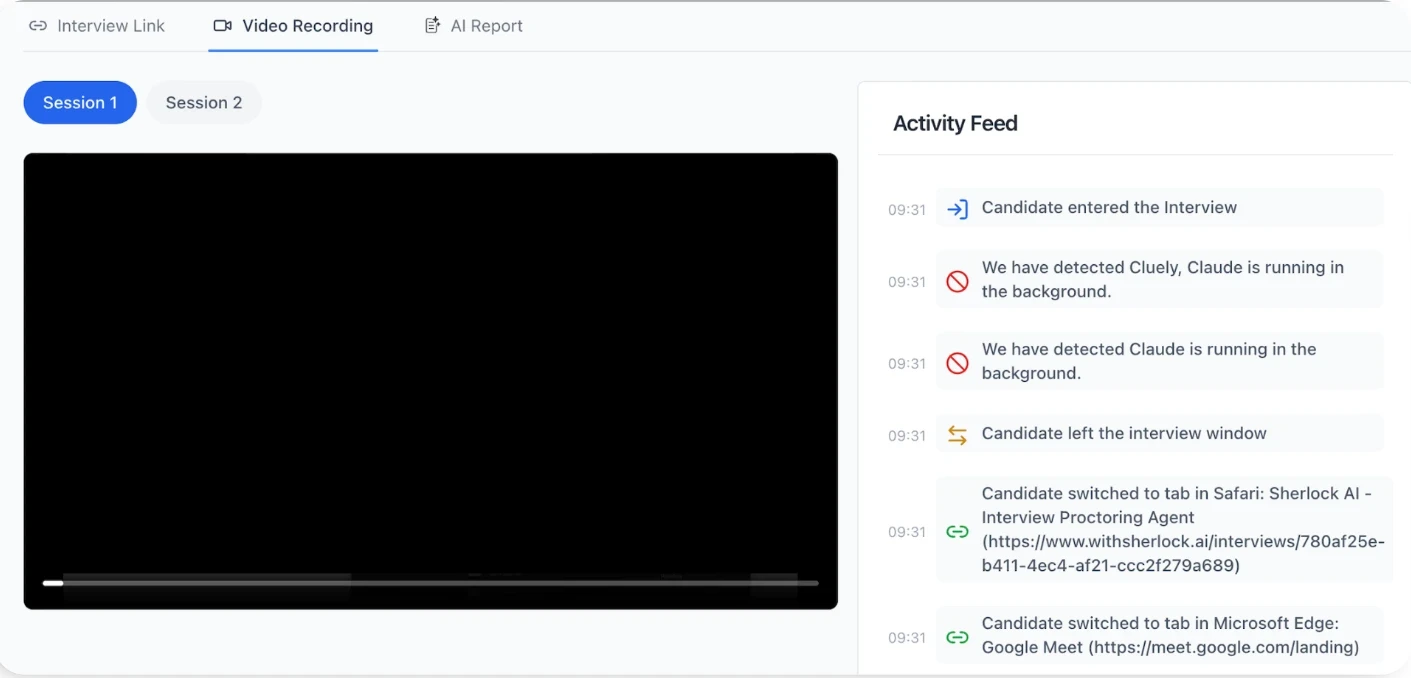

After the Interview: Structured Evidence, Human Judgment

AI generates post-interview reports

Consolidated skill scores and rubric-based summaries

Timeline of flagged integrity events (if any)

Response pattern analysis for reviewer context

Human panel reviews and decides

Validates whether AI flags are meaningful or explainable

Weighs technical performance, soft skills, and role fit

Applies business context and team needs before making the final call

This creates a defensible, audit-ready hiring trail while preserving human accountability.
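One way to picture such a hiring trail is a report record that holds the AI-generated evidence but accepts a decision only from a named reviewer with a written rationale. This is a minimal sketch under assumed field names and formats, not the schema of any particular product.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class FlagEvent:
    at_seconds: int  # offset from the start of the interview
    kind: str        # hypothetical label, e.g. "tab_switch"


@dataclass
class InterviewReport:
    candidate_id: str
    rubric_scores: dict[str, float]
    flag_events: list[FlagEvent] = field(default_factory=list)
    decision: Optional[str] = None
    decided_by: Optional[str] = None
    rationale: Optional[str] = None

    def timeline(self) -> list[str]:
        """Flag events in chronological order, formatted as MM:SS."""
        ordered = sorted(self.flag_events, key=lambda e: e.at_seconds)
        return [
            f"{e.at_seconds // 60:02d}:{e.at_seconds % 60:02d} {e.kind}"
            for e in ordered
        ]

    def record_decision(self, decision: str, reviewer: str, rationale: str) -> None:
        """Store a decision only when a human reviewer and a reason are given."""
        if not reviewer.strip() or not rationale.strip():
            raise ValueError("a named reviewer and a rationale are required")
        self.decision = decision
        self.decided_by = reviewer
        self.rationale = rationale


report = InterviewReport(
    candidate_id="cand-001",  # illustrative identifier
    rubric_scores={"correctness": 4.0, "communication": 5.0},
    flag_events=[FlagEvent(754, "background_voice"), FlagEvent(95, "tab_switch")],
)
events = report.timeline()
report.record_decision("advance", "panel-lead", "Flags explained by shared workspace.")
```

Requiring the reviewer and rationale at the point of decision is what keeps the record both auditable and human-owned.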

Operating Principle: AI as Co-Pilot, Not Autopilot

In an effective hybrid workflow, AI verifies identity, structures evaluation, and surfaces patterns. Humans interpret nuance, apply context, and make the final call.

This balance leads to faster interviews, more consistent scoring, and stronger candidate trust because decisions are data-informed but human-owned.

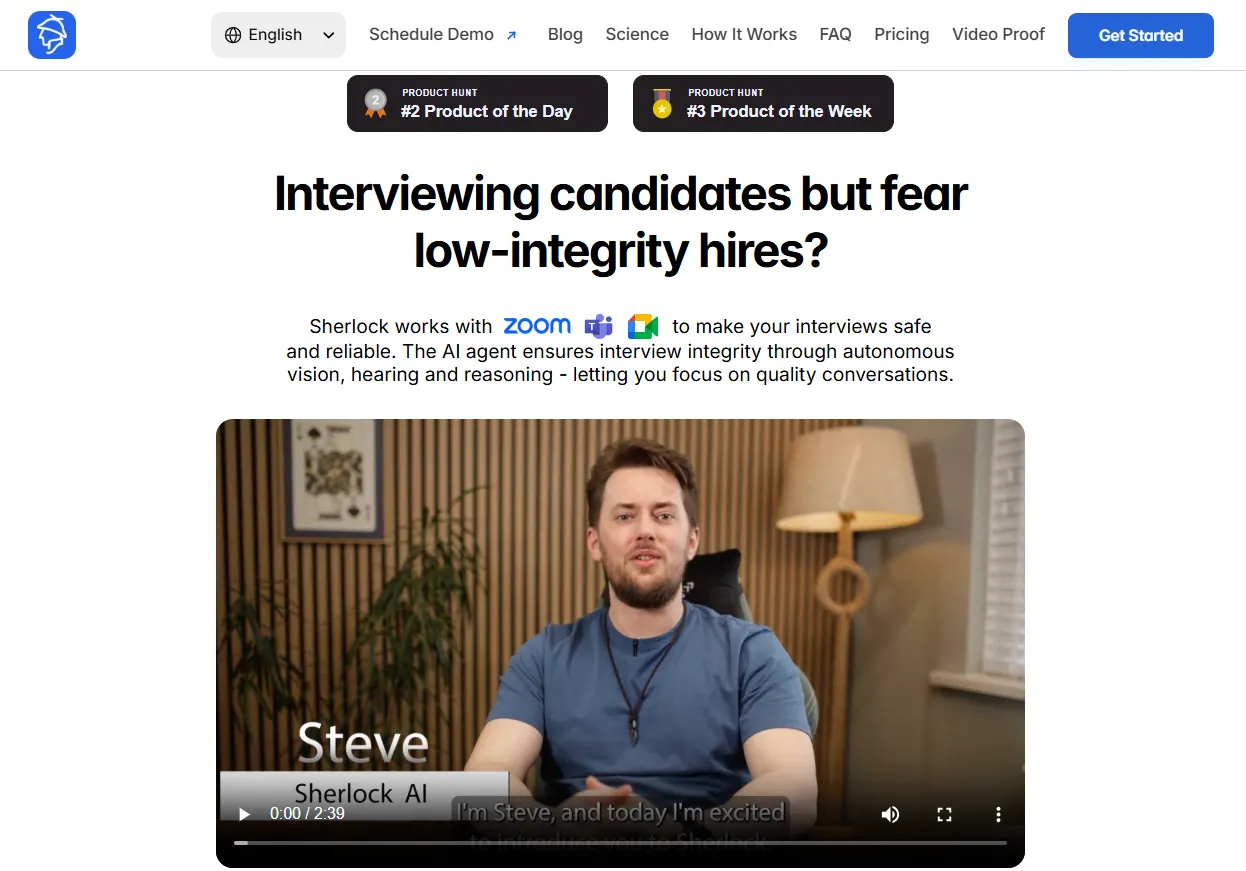

Sherlock AI: Enabling a Human-First, AI-Supported Workflow

A hybrid interview model only works if the technology is designed to support human judgment rather than replace it. This is where platforms like Sherlock AI align closely with the “AI as Interview Integrity Layer” philosophy.

Sherlock AI operates in the background as an interview intelligence layer, handling identity verification, device and environment monitoring, and real-time integrity signals. This allows interviewers to stay focused on evaluating reasoning, communication, and problem-solving instead of policing behavior during the conversation.

Supporting, Not Interrupting, the Live Interview

During the live conversation, Sherlock AI runs quietly in the background, monitoring multimodal signals such as device activity, audio consistency, and behavioral anomalies.

Instead of forcing interviewers to react in real time, it captures context for later review, allowing the discussion to remain natural and uninterrupted.

Key advantages during the interview:

Silent monitoring without disrupting the flow

Contextual alerts that can be reviewed post-conversation

Structured note capture aligned to evaluation rubrics

Freedom for interviewers to focus on probing questions and reasoning depth

Post-Interview: Evidence-Based, Human Decisions

After the session, Sherlock AI compiles structured notes, a timeline of flagged events, and standardized scoring inputs. These outputs are review materials for a human panel, not automated hiring decisions.

This human review layer is essential for fairness and contextual judgment. Recruiters can:

Validate whether a flag represents genuine risk or a benign issue

Account for role requirements and candidate circumstances

Weigh integrity signals alongside problem-solving ability and cultural fit

Sherlock AI treats its outputs as signals, not verdicts, ensuring that decision authority remains with trained interviewers.

Improving Consistency Without Over-Automation

Because the same monitoring logic and note-taking framework are applied to every candidate, Sherlock AI helps standardize evaluation and reduce interviewer variability. At the same time, it avoids intrusive lockdown approaches and instead focuses on authorship verification and reasoning continuity, factors that better reflect real job performance.

This balance leads to:

Fairer, like-for-like candidate comparisons

Audit-ready hiring documentation

Reduced cognitive load for interviewers

Greater trust in remote interview outcomes

The Net Effect: Technology That Protects Human Judgment

In a well-structured hybrid workflow, Sherlock AI manages the integrity and evidence layer, while humans retain ownership of interpretation and final decisions. That division of responsibility is what makes remote hiring both scalable and fair.

Rather than automating hiring outcomes, Sherlock AI creates structured, reviewable evidence and frees interviewers to focus on what they do best - evaluating thinking, potential, and fit in a human-first process.

Conclusion

Balancing AI and human judgment in remote interviews isn’t about choosing one over the other; it’s about assigning each to the work it does best. AI brings structure, consistency, and real-time integrity signals that make remote hiring scalable and defensible. Humans bring context, empathy, and role-specific judgment that no model can reliably replicate.

When AI is used to verify identity, standardize scoring, and surface anomalies, and humans interpret those signals, probe deeper, and make the final decision, organizations get the best of both worlds - speed without shortcuts and fairness without friction.

This hybrid approach also strengthens candidate trust. Interviews feel more conversational and less like surveillance, while hiring teams gain audit-ready evidence and consistent evaluation frameworks. The result is a process that is not only more efficient, but also more equitable and human.

The future of remote hiring will belong to teams that use technology to enhance judgment, not replace it.