
AI interview fraud is rising fast, creating security, compliance, and workforce risks. Learn how enterprises can protect hiring integrity with Sherlock AI.

Abhishek Kaushik

Mar 24, 2026

Hiring has entered a new era. Artificial intelligence is now embedded in how candidates prepare, how interviews are conducted, and how employers evaluate talent. While AI has improved speed and efficiency in recruitment, it has also introduced a new category of risk that many organizations are still unprepared for.

According to Gartner, 6% of job candidates admitted to participating in interview fraud, either posing as someone else or having someone else pose as them. This is expected to grow, with predictions that 1 in 4 candidate profiles will be fake by 2028.

AI interview fraud is no longer a rare or isolated issue. It is becoming a scalable, sophisticated threat that directly impacts workforce quality, security, and business performance. For enterprises, this is not just a hiring problem. It is an enterprise risk.

The Rise of AI-Driven Interview Manipulation

Interview fraud has not suddenly appeared; it has adapted alongside the rapid shift to remote and virtual hiring. While most interview formats still rely on trust, conversation, and basic video interaction, the tools available to manipulate those interactions have become significantly more advanced, affordable, and difficult to detect.

What has changed is not just that candidates can cheat, but how seamlessly technology now blends into normal interview behavior. AI-enabled assistance can operate in the background without obvious signals, making traditional red flags far less reliable.

AI-enabled manipulation can now occur at multiple stages of the interview process, often in ways that appear completely natural to interviewers.

Real-time AI assistance during live interviews

Candidates use AI tools silently during technical, case-based, or behavioral interviews. These systems generate structured answers, refine phrasing, and suggest frameworks while the candidate appears to be thinking independently. Because the delivery still sounds human and conversational, this type of assistance is extremely difficult to recognize in the moment.

Identity masking through synthetic or altered video

Modern video manipulation tools can subtly enhance, blur, or alter a person’s on-screen appearance. In more serious cases, they can enable someone other than the original applicant to participate in the interview. Since the video feed appears stable and realistic, visual verification alone is no longer a reliable safeguard.

Hidden third-party support during interviews

Some candidates receive live help from another person off-camera. This can range from silent prompting to full answer coaching through hidden audio devices or chat tools. Because this support happens outside the interviewer’s view, the conversation can appear smooth and legitimate despite outside assistance.

AI-assisted speech and communication enhancement

Voice enhancement and speech-generation tools can help candidates adjust tone, clarity, or fluency in real time. While not always used for impersonation, these tools can mask communication gaps and create a level of polish that does not accurately reflect the candidate’s independent ability.

Concealed devices and on-screen support tools

Candidates may use secondary screens, hidden devices, or minimized browser windows to access AI tools, prepared answers, or coding solutions. With practice, they can reference this support while maintaining steady eye contact and natural pacing, making the assistance nearly invisible to interviewers.

These methods are no longer rare or technically complex. Many are inexpensive, easy to set up, and widely discussed in online communities. As remote hiring continues to grow, so does the need for organizations to recognize that interview fraud is no longer limited to obvious cheating. It now includes subtle, technology-assisted manipulation that can influence hiring decisions without being easily detected.

Why This Is an Enterprise-Level Risk

Many organizations still treat interview fraud as a recruiter inconvenience. In reality, the consequences extend far beyond talent acquisition and into core areas of enterprise risk.

1. Security and data exposure

Hiring someone with falsified credentials or a hidden identity can introduce serious security threats.

Fraudulent hires may gain access to internal systems, source code, customer records, or financial data

Stolen or misused access can lead to data breaches, insider threats, or intellectual property loss

In regulated industries, unauthorized access can trigger compliance violations and mandatory disclosures

A single compromised hire can create risk levels similar to a major cybersecurity incident.

2. Operational and performance impact

When a candidate exaggerates or fabricates their abilities, the cost shows up in day-to-day operations.

Projects slow down as teams discover skill gaps that should not have existed

High performers are forced to compensate, leading to burnout and morale issues

Missed deadlines, rework, and quality failures directly affect customer outcomes

The financial cost of a bad hire is high. When that hire entered through fraud, the damage spreads across teams and timelines.

3. Brand and trust damage

Workforce credibility is part of enterprise reputation.

If clients or partners discover that unqualified or fraudulent individuals were hired into critical roles, trust erodes quickly

Public exposure of hiring failures can harm employer brand and make it harder to attract strong candidates

For consulting, technology, healthcare, and finance firms, perceived expertise is central to market positioning

Reputation damage often lasts longer than the hiring mistake itself.

4. Legal and compliance consequences

Fraudulent hiring can lead to regulatory and legal exposure.

Identity fraud, fake certifications, or misrepresented work authorization can trigger investigations

Cross-border employment violations may result in penalties or restrictions

Failure to perform adequate verification can weaken an organization’s legal defense

Enterprises may face audits, fines, contract disputes, or lawsuits tied directly to hiring failures.

5. Breakdown of internal trust and culture

Interview fraud does not only affect systems. It affects people and culture.

When teams realize a colleague was hired under false pretenses, confidence in leadership and hiring processes declines

Employees may question fairness if others advanced through deception rather than merit

Managers become more risk-averse in hiring, which can slow growth and innovation

Over time, repeated incidents can weaken organizational cohesion and engagement.

6. Distorted workforce planning and strategic decisions

Hiring data drives major business decisions. Fraud corrupts that data.

Leaders rely on hiring outcomes to assess talent pipelines, skill availability, and workforce readiness

If interview results do not reflect real capability, workforce planning becomes inaccurate

Training budgets, project staffing, and expansion plans may be based on false assumptions

This creates a ripple effect where one fraudulent hire influences decisions far beyond a single role.

AI interview fraud is not just about one bad candidate slipping through. It reveals a systemic weakness in how organizations verify talent in a digital hiring environment. That makes it an enterprise risk, not just a recruiting challenge.


Why Traditional Interview Processes Fail

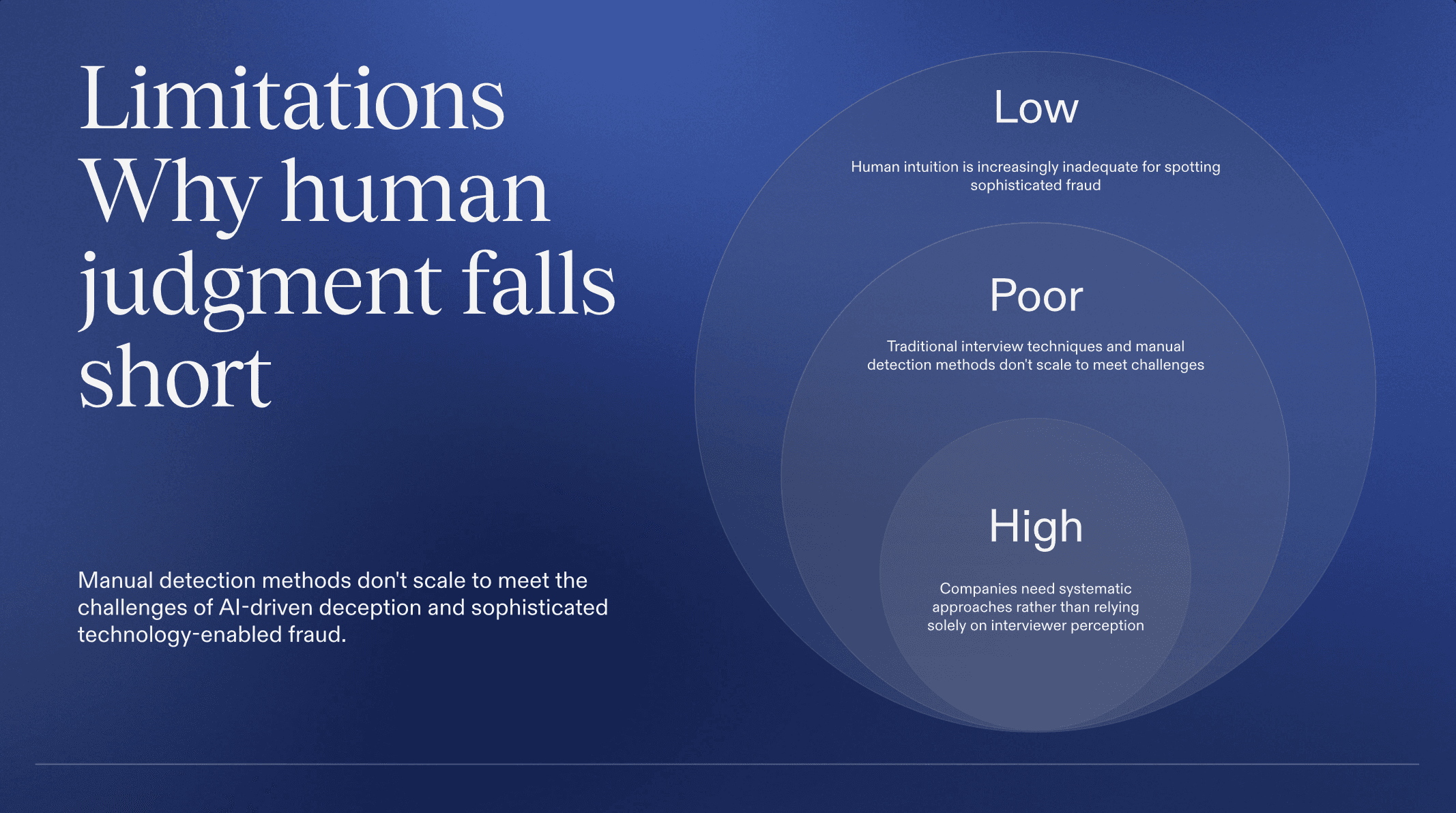

Most interview processes were not designed to handle AI-driven deception.

Video calls, panel interviews, and technical assessments rely heavily on trust and visual cues. These signals are now easy to manipulate. A confident voice, steady eye contact, and quick answers no longer guarantee authenticity.

Even experienced interviewers struggle to detect when:

Answers are being generated in real time by AI

The person on screen is not the person who applied

A hidden collaborator is assisting off-camera

Manual detection does not scale, and human intuition is no longer enough.

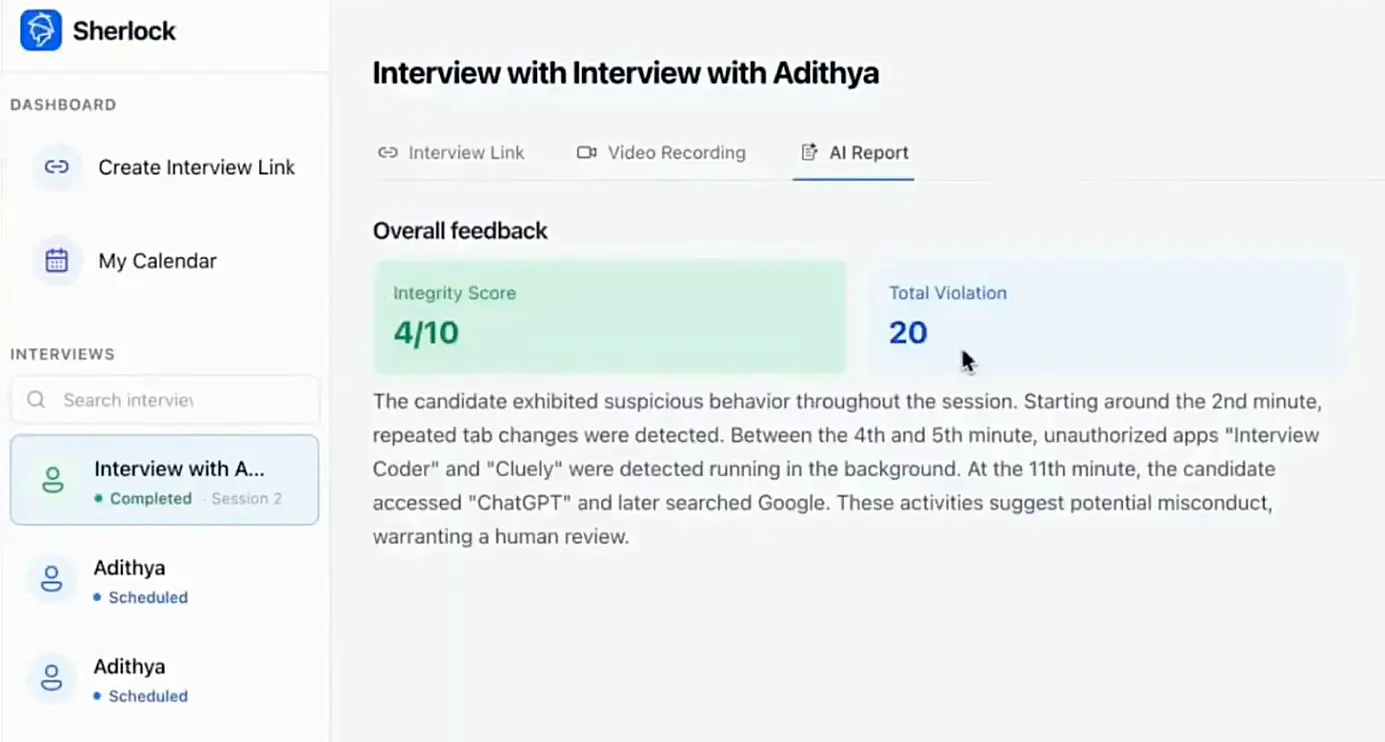

How Sherlock AI Helps Enterprises Stay Ahead

Sherlock AI is designed to address the growing challenge of AI interview fraud in remote and virtual hiring environments.

1. Works as an intelligent integrity layer

Sherlock AI supports interviewers rather than replacing them

It adds a verification layer that strengthens trust in digital hiring

Hiring teams remain in control while gaining deeper visibility into interview authenticity

2. Analyzes live interview signals

Sherlock AI evaluates behavioral and interaction patterns during interviews

It helps identify signs of AI assistance, impersonation, or suspicious activity

Risks that are invisible to the human eye can be surfaced in real time

3. Protects remote and campus hiring at scale

Enterprises can maintain confidence in virtual interviews without making the process intrusive

Both experienced hires and early talent pipelines can be protected from AI-driven fraud

Integrity controls scale with hiring volume across locations

4. Detects external support and proxy participation

Sherlock AI helps uncover indicators of hidden AI tools or off-screen assistance

It supports detection of cases where another person may be influencing or replacing the candidate

This reduces the likelihood of fraudulent candidates progressing through the funnel

5. Provides objective risk insights for hiring teams

Interviewers receive additional context to support their evaluations

Risk signals help teams make more informed and consistent hiring decisions

This reduces reliance on intuition alone in high-stakes hiring scenarios

6. Safeguards enterprise assets from the point of entry

By improving interview integrity, Sherlock AI helps protect workforce quality

It reduces downstream risks to data security, customer trust, and brand reputation

Prevention begins before system access or sensitive responsibilities are granted

By embedding integrity directly into the interview process, Sherlock AI helps organizations close the gap between how interviews are conducted and how fraud actually occurs today.

Conclusion

AI is reshaping hiring on both sides of the table. Candidates have access to tools that can enhance preparation, but those same tools can also be used to misrepresent identity and ability. As a result, interview fraud has evolved from an occasional concern into a scalable enterprise risk.

The impact reaches far beyond recruitment. Security exposure, operational disruption, reputational damage, legal consequences, and flawed workforce planning can all stem from a single fraudulent hire. Traditional interview methods, built on visual cues and trust, are no longer sufficient in an AI-augmented world.

Enterprises that recognize interview integrity as a core business risk will be better prepared for the future of work. By combining human judgment with purpose-built technology like Sherlock AI, organizations can help ensure that hiring decisions are based on genuine capability, not artificial assistance.

In the age of AI-driven deception, protecting interview integrity is no longer optional. It is a fundamental part of enterprise risk management.