Learn how to detect deepfake candidates in video interviews using visual, audio, and behavioral signals, and how Sherlock AI stops interview fraud.

Abhishek Kaushik

Feb 5, 2026

In an era where remote hiring has become the norm, a new threat is emerging: deepfake candidates. Industry analysts predict that by 2028, as many as one in four job candidates worldwide could be fake, driven by AI-generated identities that can respond and interact in real time.

In a recent survey of 1,000 hiring managers across the U.S., approximately 17 percent said they had encountered candidates using deepfake technology to alter their video interviews.

The consequences are no longer theoretical. In May 2024, the U.S. Department of Justice revealed that more than 300 American companies had unknowingly hired impostors tied to North Korea for remote IT roles, resulting in at least $6.8 million in overseas revenue being funneled out of the country.

Being able to spot the subtle signs of a deepfake is essential for protecting your organization’s integrity, security, and talent outcomes.

What a “Deepfake Candidate” Really Means in 2026

A deepfake candidate is not just someone lying on a resume. In 2026, it typically refers to applicants who use AI-driven tools to manipulate their visual or audio identity in real time during video interviews. This includes:

AI face swaps, where the candidate’s real face is replaced with another person’s face on camera.

Real-time video manipulation, where facial features, expressions, or age are subtly altered to match a stolen or fabricated identity.

Identity overlays, where a synthetic face is layered over a live video feed to impersonate a legitimate person.

In many cases, the person answering questions is not the same person whose face appears on screen, and sometimes not even the same person who later shows up for work.

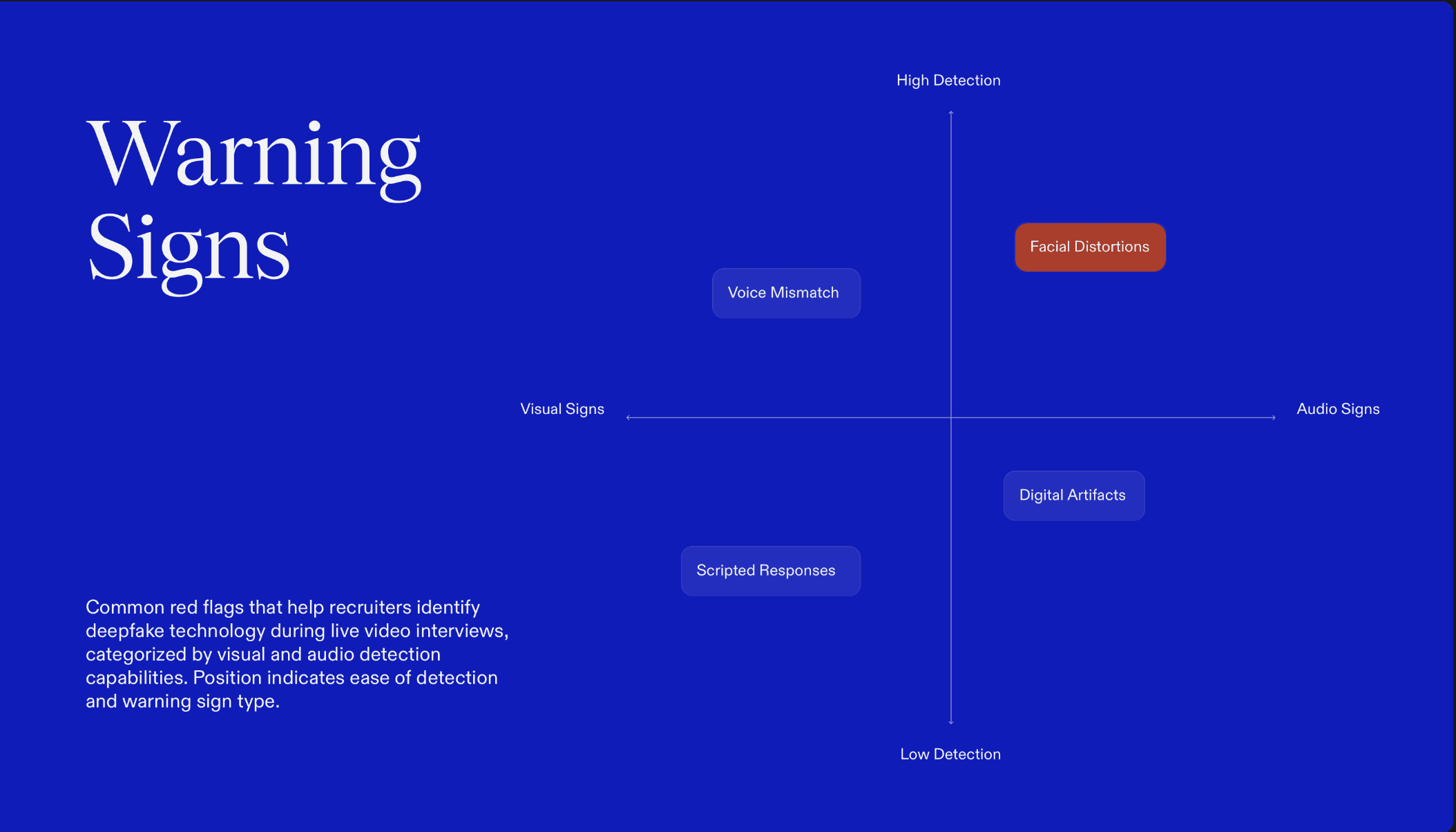

Common Red Flags in Live Interviews

Deepfake technology still leaves behind detectable artifacts, especially in real-time video calls. Recruiters and interviewers often report the following warning signs:

Unnatural blinking or eye movement, such as long pauses without blinking or blinking that feels out of rhythm with speech.

Lip-sync delays, where mouth movement slightly lags behind the audio.

Frozen or distorted facial regions, especially around the jawline, cheeks, or forehead during head movement.

Lighting mismatches, where shadows or brightness don’t shift naturally as the candidate moves.

Voice-face desynchronization, where tone, emotion, or mouth shape doesn’t align with spoken words.

Individually, these issues can look like bad internet or webcam quality. Together, they often point to synthetic video manipulation.

Technical & Behavioral Signals That Reveal Deepfakes

Deepfake candidates don’t just look different; they behave differently too. While modern AI can generate highly convincing faces and voices, real-time manipulation still produces technical artifacts and behavioral inconsistencies that trained interviewers can spot. This section outlines the most reliable red flags recruiters can use during live video interviews.

1. Visual Anomalies That Signal Synthetic Video

Real-time deepfake overlays often struggle with motion, lighting, and facial boundaries. Common visual warning signs include:

Inconsistent head movement: The face appears to lag behind head turns or tilts, or remains unnaturally stable while the head moves.

Pixel warping and distortion: Subtle ripples or blurring appear around the eyes, mouth, jawline, or ears, especially during speech or quick movement.

Edge flicker and facial boundary artifacts: The outline of the face flickers, softens, or shifts slightly against the background, particularly near the hairline and chin.

Unnatural blinking or eye behavior: Blinking may be too infrequent, too synchronized, or oddly timed relative to speech and emotion.

Individually, these issues can look like poor webcam quality. Together, they strongly suggest synthetic video manipulation.
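To make the blinking signal more concrete, here is a minimal sketch of how blink timing could be checked once blink timestamps are available. It assumes some upstream eye tracker (not shown) has already produced those timestamps. The function name `blink_flags` and every threshold are illustrative placeholders, not calibrated values.

```python
from statistics import mean, pstdev


def blink_flags(blink_times_s, duration_s,
                min_per_min=4.0, max_per_min=40.0, min_cv=0.2):
    """Return warning strings for a list of blink timestamps (in seconds).

    All thresholds are hypothetical defaults chosen for illustration.
    """
    if duration_s <= 0:
        raise ValueError("duration_s must be positive")

    flags = []

    # Blinks per minute over the observed window.
    rate = len(blink_times_s) / (duration_s / 60.0)
    if rate < min_per_min:
        flags.append("blink rate too low")
    elif rate > max_per_min:
        flags.append("blink rate too high")

    # Gaps between consecutive blinks. Natural blinking is irregular, so a
    # very low coefficient of variation suggests machine-like timing.
    intervals = [b - a for a, b in zip(blink_times_s, blink_times_s[1:])]
    if len(intervals) >= 3 and mean(intervals) > 0:
        cv = pstdev(intervals) / mean(intervals)
        if cv < min_cv:
            flags.append("blink timing too regular")

    return flags
```

A result like this is only one weak signal. Dry eyes, screen fatigue, or a dropped frame can all trip the same checks, so it is best read alongside the other visual cues above.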

2. Audio Inconsistencies That Don’t Match the Face

Deepfake and voice-cloning tools often leave subtle audio fingerprints that don’t align with natural human speech.

Robotic tone shifts: The voice suddenly sounds flat, metallic, or overly smooth, especially during emotional or spontaneous responses.

Latency between speech and mouth movement: The candidate’s lips move a fraction of a second after the audio, or continue moving after the sentence ends.

Voice-cloning artifacts: Repeated speech patterns, identical pacing across answers, or unnatural pronunciation of certain words.

These mismatches become more obvious when candidates are interrupted or asked unexpected questions.
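The lip-sync latency described above can be estimated with a simple cross-correlation. The sketch below assumes two per-frame series already exist: an audio loudness envelope and a mouth-opening measurement, both sampled at the video frame rate. How those series are extracted is outside this example, and the names `estimate_lag_frames`, `audio_env`, and `mouth_open` are hypothetical.

```python
def estimate_lag_frames(audio_env, mouth_open, max_lag=10):
    """Return the frame shift that best aligns mouth movement with audio.

    A positive result means the mouth moves after the sound.
    """
    n = min(len(audio_env), len(mouth_open))
    if n == 0:
        raise ValueError("both series must be non-empty")

    def centered(xs):
        m = sum(xs) / len(xs)
        return [x - m for x in xs]

    a = centered(audio_env[:n])
    v = centered(mouth_open[:n])

    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        # Pair audio frame i with mouth frame i + lag where both exist,
        # then sum the products (an unnormalized cross-correlation).
        score = sum(a[i] * v[i + lag] for i in range(n) if 0 <= i + lag < n)
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def lag_to_ms(lag_frames, fps):
    """Convert a frame shift to milliseconds for a given frame rate."""
    return lag_frames * 1000.0 / fps
```

For example, a shift of 3 frames at 30 frames per second works out to 100 ms. Ordinary network jitter can also produce small offsets, so a persistent or growing lag is more telling than a single reading.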

3. Behavioral Mismatches That Don’t Add Up

Even when the video looks convincing, deepfake candidates often fail behaviorally.

Perfect technical answers but weak follow-ups: Candidates deliver polished, textbook responses but struggle when asked to explain trade-offs, mistakes, or real project details.

Inability to improvise: They freeze, stall, or deflect when asked to solve a problem live or explain their thinking step by step.

Overly scripted responses: Answers sound rehearsed, generic, or identical in structure across different questions.

This pattern often indicates off-screen assistance, proxy interviewing, or AI-generated answer support.
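One rough way to quantify "identical in structure across different questions" is to measure how much phrasing the answers share. The sketch below compares transcribed answers using word trigrams and Jaccard overlap. It assumes transcripts are already available as plain strings. The function names are illustrative, and any cutoff for "too similar" would need to be tuned on real interviews.

```python
import re
from itertools import combinations


def trigrams(text):
    """Return the set of consecutive three-word sequences in a text."""
    words = re.findall(r"[a-z']+", text.lower())
    return {tuple(words[i:i + 3]) for i in range(len(words) - 2)}


def jaccard(a, b):
    """Share of items two sets have in common (0.0 when both are empty)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def mean_pairwise_overlap(answers):
    """Average trigram overlap across every pair of answers."""
    grams = [trigrams(answer) for answer in answers]
    pairs = list(combinations(grams, 2))
    if not pairs:
        return 0.0
    return sum(jaccard(x, y) for x, y in pairs) / len(pairs)
```

Answers to unrelated questions normally share very little exact phrasing, so a consistently high overlap is a prompt for deeper follow-up questions rather than proof of fraud.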

4. Live Verification Techniques Recruiters Can Use

Simple real-time prompts can disrupt deepfake overlays and expose inconsistencies.

Spontaneous gesture requests: Ask the candidate to wave, touch their nose, smile broadly, or blink rapidly.

Head-turn and angle prompts: Ask them to turn their head fully left and right or tilt it up and down.

Object-based challenges: Ask them to hold up a specific object (e.g., a pen or ID) next to their face and rotate it slowly.

Lighting change tests: Ask them to move closer to a window or turn on a desk lamp to change lighting conditions.

Deepfake systems often fail or glitch during these unscripted movements.
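Because these prompts work best when the candidate cannot anticipate them, an interviewer could randomize which ones are used and in what order. The sketch below is one hypothetical way to do that, drawing one prompt from each of the four categories listed above. The data structure and the name `build_challenge_plan` are assumptions made for illustration.

```python
import random

# Prompts taken from the four categories described above.
CHALLENGES = {
    "gesture": ["Wave at the camera", "Touch your nose",
                "Smile broadly", "Blink rapidly"],
    "head_turn": ["Turn your head fully left, then right",
                  "Tilt your head up, then down"],
    "object": ["Hold a pen next to your face and rotate it slowly"],
    "lighting": ["Move closer to a window", "Turn on a desk lamp"],
}


def build_challenge_plan(rng=None):
    """Pick one prompt per category and return them in random order."""
    if rng is None:
        rng = random.Random()
    plan = [(category, rng.choice(prompts))
            for category, prompts in CHALLENGES.items()]
    rng.shuffle(plan)
    return plan
```

Passing a seeded `random.Random` makes the plan reproducible for testing. In a live interview, the default unseeded generator keeps the order unpredictable.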

When technical artifacts align with unnatural interview behavior, recruiters are no longer dealing with a normal candidate; they’re likely facing synthetic identity fraud.
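The rule of thumb in that last sentence can be written down as a simple decision check: escalate only when at least one technical signal (visual or audio) coincides with a behavioral one. This is a minimal sketch of that logic. The category names and the function `should_escalate` are assumptions for illustration.

```python
TECHNICAL_CATEGORIES = {"visual", "audio"}


def should_escalate(flags):
    """Decide whether observed red flags warrant a closer identity check.

    `flags` maps a category name to the list of red flags seen in it.
    Returns True only when a technical category and the behavioral
    category both contain at least one flag.
    """
    active = {category for category, items in flags.items() if items}
    return bool(active & TECHNICAL_CATEGORIES) and "behavioral" in active
```

Requiring both kinds of evidence keeps a bad webcam or a nervous candidate from triggering escalation on its own.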

How Sherlock AI Solves the Deepfake Candidate Problem

Sherlock AI is built specifically to detect synthetic identities, deepfake video manipulation, and real-time interview fraud in situations where traditional interviews and resume checks fall short.

1. Real-Time Deepfake & Video Manipulation Detection

Facial boundary distortions and edge flicker

Pixel warping around eyes, mouth, and jawline

Lip-sync irregularities and voice-face desynchronization

Inconsistent head movement and unnatural blinking patterns

2. Identity Verification Beyond “Face on Camera”

Matching the live video face against verified ID documents

Running biometric consistency checks across interview sessions

Detecting face swaps and synthetic overlays

Identifying repeated or recycled synthetic identities across candidates

3. Behavioral & Interaction Anomaly Detection

Speech cadence and repetition patterns

Delays between questions and responses

Over-scripted answer structures

Inconsistencies between technical depth and behavioral signals

4. Built-In Live Verification Challenges

Spontaneous gesture prompts

Head-turn and motion challenges

Lighting and angle change requests

Object-based verification

5. Audit Trails & Compliance-Ready Evidence

Timestamped anomaly logs

Video and audio artifact snapshots

Identity mismatch records

Behavioral deviation reports
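For readers wondering what a timestamped anomaly log might contain, here is a minimal, hypothetical record format. It is not Sherlock AI's actual schema. The field names and the helpers `record_anomaly` and `to_json_line` are assumptions used only to illustrate the idea.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AnomalyEvent:
    session_id: str
    category: str      # e.g. "visual", "audio", "behavioral", "identity"
    description: str
    observed_at: str   # ISO 8601 timestamp in UTC


def record_anomaly(session_id, category, description, now=None):
    """Create an immutable anomaly record stamped with the current UTC time."""
    if now is None:
        now = datetime.now(timezone.utc)
    return AnomalyEvent(session_id, category, description, now.isoformat())


def to_json_line(event):
    """Serialize one event as a single JSON line for an append-only log."""
    return json.dumps(asdict(event), sort_keys=True)
```

Keeping records immutable and writing them one per line makes it harder to alter evidence after the fact and easier to review it later.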

Traditional background checks don’t address synthetic video. And standard video platforms were never designed for identity assurance.

Sherlock AI closes this gap by turning every live interview into a continuously monitored identity-verification and fraud-detection session.

Final Thoughts

Deepfake hiring fraud has outgrown manual detection. While trained recruiters can catch some visual and behavioral red flags, real-time video manipulation, voice cloning, and proxy interviewing are now sophisticated enough to bypass casual human scrutiny.

Deepfake technology is evolving faster than human pattern recognition, and what looks “slightly off” today may look perfectly normal tomorrow. Traditional interview checks, resume verification, and background screening were never designed to defend against real-time AI impersonation.

Sherlock AI adds real-time deepfake detection, biometric identity verification, behavioral anomaly analysis, and audit-ready evidence to live interviews, closing the gap that standard video platforms leave wide open.