As AI reshapes hiring risks, explore the Top 10 Deepfake Detection Tools for Interviews in 2026 and how recruiters prevent impersonation and fraud.

Abhishek Kaushik

Jan 29, 2026

Deepfake technology has advanced rapidly, making fake videos, cloned voices, and AI-generated identities harder to detect with the human eye. The volume of deepfake content circulating online was projected to grow from around 500,000 files in 2023 to an estimated 8 million by 2025, and the trend shows no sign of slowing in 2026. In 2025 alone, deepfake fraud attempts reportedly surged by thousands of percent as attackers embraced AI to scale deception across digital systems.

In 2026, deepfakes are no longer limited to social media manipulation. They are actively used in job interviews, remote hiring, executive impersonation, and recruitment fraud, making authentication and verification a top priority for security and talent teams. Deepfake fraud attempts spiked by over 3,000% in 2023, and deepfakes now account for about 6.5% of all reported fraud attacks globally.

Because of this shift, Deepfake Detection Tools have become a critical layer of protection for organizations conducting virtual interviews.

This guide explores the best Deepfake Detection Tools for interviews, explains why they matter in 2026, and shows how Sherlock AI is purpose-built for interview-specific deepfake detection.

What Are Deepfake Detection Tools?

Deepfake Detection Tools are AI powered systems designed to identify manipulated or synthetic media such as:

AI generated video faces

Voice cloning and synthetic speech

Identity swaps and impersonation

Real time AI assisted interview responses

These tools analyze facial micro-expressions, voice frequency patterns, behavioral consistency, and reasoning flow to assess whether the media and the person behind it are authentic.

In interview settings, deepfake detection must go beyond media analysis and verify whether the same real person is consistently present and responding genuinely.

Top 10 Deepfake Detection Tools for Interviews in 2026

Below are the most relevant Deepfake Detection Tools evaluated specifically for interview and hiring use cases.

1. Sherlock AI - Deepfake Detection for Interviews

Sherlock AI is purpose-built to protect interview integrity by detecting deepfake usage, impersonation, and AI-assisted responses during remote and hybrid hiring. Unlike general deepfake detectors that focus only on media authenticity, Sherlock AI evaluates the human authenticity of the candidate throughout the interview lifecycle.

Sherlock AI combines behavioral intelligence, identity verification, and reasoning analysis to surface risks that traditional tools and human interviewers often miss.

How Sherlock AI Detects Deepfakes in Interviews

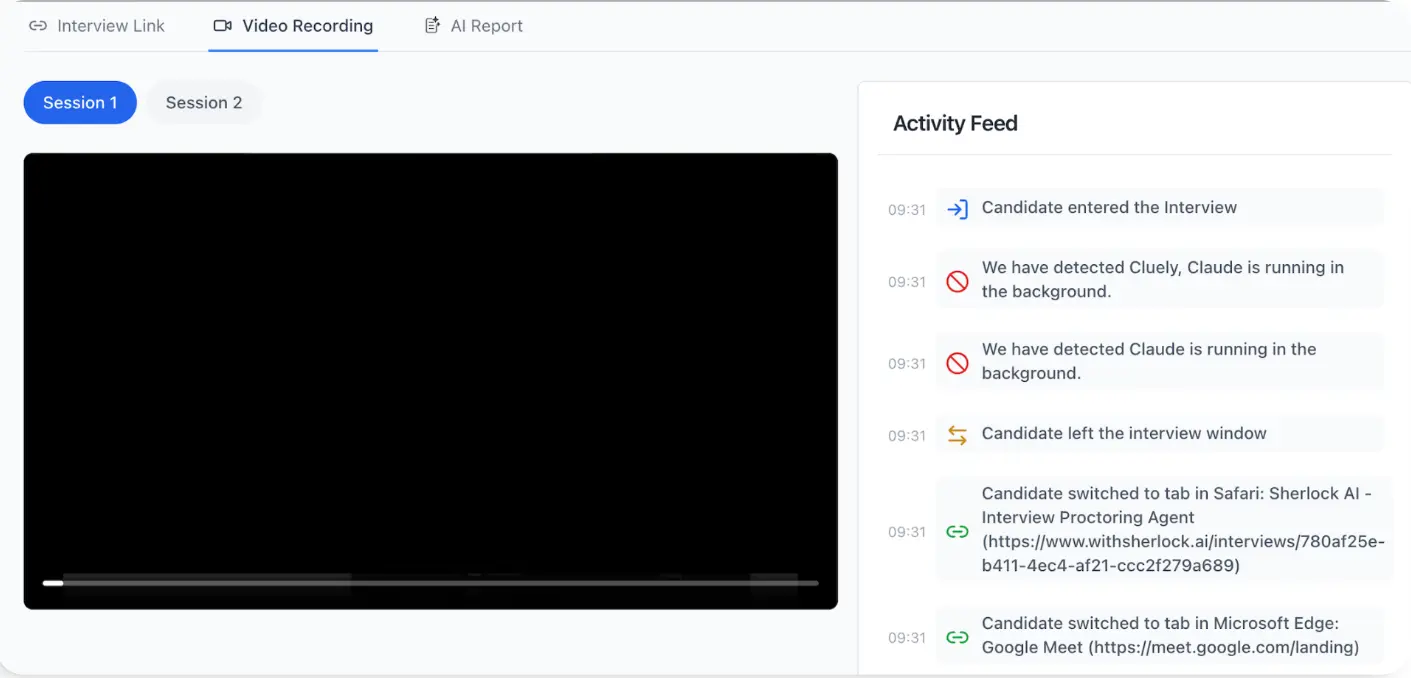

Sherlock AI analyzes multiple authenticity signals simultaneously, including:

Behavioral consistency across interview rounds and formats

Reasoning flow to identify AI generated or scripted answers

Identity and interaction patterns over time

Sudden changes in communication style, confidence level, or expertise depth

Indicators of proxy interviewing or real time external assistance

This multi layer approach allows Sherlock AI to detect not just fake media, but fake participation.
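To make the idea of behavioral consistency across rounds concrete, here is a minimal sketch in Python. It is an illustration only, not Sherlock AI's actual method: the feature vectors, the round names, the `flag_inconsistent_rounds` helper, and the 0.9 threshold are all hypothetical. It assumes each round has already been summarized as a few numeric behavioral features (for example speaking rate, pause ratio, and vocabulary richness) and flags consecutive rounds whose profiles diverge sharply.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def flag_inconsistent_rounds(rounds, threshold=0.9):
    """Return (earlier, later, similarity) for consecutive rounds below threshold."""
    flags = []
    names = list(rounds)  # dicts preserve insertion order
    for prev, curr in zip(names, names[1:]):
        sim = cosine_similarity(rounds[prev], rounds[curr])
        if sim < threshold:
            flags.append((prev, curr, round(sim, 3)))
    return flags


# Hypothetical per-round features: [speaking rate, pause ratio, vocabulary richness]
rounds = {
    "screening": [0.62, 0.40, 0.55],
    "technical": [0.60, 0.42, 0.57],
    "final": [0.10, 0.95, 0.20],
}

flags = flag_inconsistent_rounds(rounds)
for earlier, later, sim in flags:
    print(f"Review suggested: {earlier} -> {later} (similarity {sim})")
```

In this toy data only the jump from the technical round to the final round is flagged. A flag like this is a prompt for human review rather than a verdict, which matches the explainable, non pass-or-fail framing described in this section.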

Key Features of Sherlock AI

Designed specifically for live and asynchronous interviews

Detects deepfake faces, voice manipulation, and identity swapping

Identifies proxy candidates by comparing behavior and interaction patterns across rounds

Flags AI generated, rehearsed, or externally assisted responses

Provides contextual and explainable risk insights rather than pass or fail decisions

Bias aware evaluation that avoids accent, appearance, or background based assumptions

Seamless integration with existing interview workflows

Scales across high volume remote hiring without degrading candidate experience

Sherlock AI focuses on one critical question that other tools miss: is the same real human genuinely present and thinking independently throughout the hiring process?

Learn more at https://www.withsherlock.ai/

2. OpenAI Deepfake Detector

OpenAI’s deepfake detection capabilities are designed to identify AI generated content across text, image, and video formats. These tools are built on large scale foundation models that recognize patterns commonly associated with synthetic media.

OpenAI’s detector is often used in content moderation, research, and platform safety initiatives. It focuses on identifying whether content was generated or altered by AI systems.

Key Features

Detects AI generated text, images, and video

Uses foundation model based content analysis

Identifies synthetic media generation patterns

Supports large scale content authenticity checks

Continuously evolves with advancements in generative AI

3. Hive AI Deepfake Detection

Hive AI provides deepfake detection through scalable APIs that analyze images, videos, and audio for signs of manipulation. It is widely used in media moderation and digital safety workflows.

Hive AI enables organizations to automatically screen large volumes of recorded content for authenticity and potential manipulation.

Key Features

API based detection for image, video, and audio content

Fast analysis of recorded media

Scalable infrastructure for high volume processing

Supports automated content review workflows

Integrates with moderation and compliance systems

4. Sensity AI

Sensity AI focuses on deepfake threat intelligence and the detection of impersonation attacks. It is commonly used to monitor and analyze deepfake misuse targeting brands, executives, and public figures.

Sensity emphasizes understanding how deepfakes are created, distributed, and weaponized across digital channels.

Key Features

Detects facial manipulation and identity misuse

Monitors deepfake activity across platforms

Provides intelligence on impersonation campaigns

Supports brand and executive protection

Analyzes trends in synthetic media threats

5. Reality Defender

Reality Defender is designed to identify manipulated and AI generated audio and video in real time. It is used in scenarios where continuous media authenticity monitoring is required.

The platform focuses on detecting synthetic signals during live or recorded media playback.

Key Features

Real time detection of audio and video deepfakes

Supports streaming media analysis

Identifies synthetic visual and vocal patterns

Delivers fast authenticity assessments

Enables continuous media monitoring

6. Intel FakeCatcher

Intel FakeCatcher is a research-driven deepfake detection system that analyzes physiological signals in facial videos. It focuses on detecting whether a video represents a real human by examining subtle biological cues, such as the faint color changes in skin caused by blood flow.

FakeCatcher represents a hardware accelerated approach to deepfake detection.

Key Features

Analyzes subtle biological signals in facial video

Uses advanced processing techniques

Research backed detection methodology

Focuses on real human signal validation

Designed for high accuracy analysis
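One commonly discussed physiological cue is the pulse signal that shows up as tiny periodic brightness changes in facial skin. The sketch below illustrates that general idea in plain Python and is not Intel's implementation. It assumes a face region has already been tracked and reduced to one average green-channel value per frame; the function name, the 0.7 to 4.0 Hz search band, and the synthetic input are all assumptions made for illustration.

```python
import math


def dominant_frequency_hz(samples, fps, low_hz=0.7, high_hz=4.0, step_hz=0.05):
    """Return the frequency in [low_hz, high_hz] carrying the most spectral power."""
    mean = sum(samples) / len(samples)
    centered = [s - mean for s in samples]  # remove the constant brightness level
    best_freq, best_power = low_hz, -1.0
    steps = int(round((high_hz - low_hz) / step_hz))
    for k in range(steps + 1):
        freq = low_hz + k * step_hz
        # Direct (naive) Fourier projection at this candidate frequency
        re = sum(x * math.cos(2 * math.pi * freq * n / fps) for n, x in enumerate(centered))
        im = sum(x * math.sin(2 * math.pi * freq * n / fps) for n, x in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = freq, power
    return best_freq


# Synthetic stand-in for per-frame mean green values: a 1.2 Hz "pulse"
# plus a slow 0.2 Hz lighting drift, sampled at 30 fps for 10 seconds.
fps = 30
green_means = [
    100
    + 0.5 * math.sin(2 * math.pi * 1.2 * n / fps)
    + 0.3 * math.sin(2 * math.pi * 0.2 * n / fps)
    for n in range(fps * 10)
]

pulse_hz = dominant_frequency_hz(green_means, fps)
pulse_bpm = pulse_hz * 60
print(f"Estimated pulse: {pulse_bpm:.0f} beats per minute")
```

A plausible, stable pulse in the face region is one signal that a real person is on camera. Production systems combine many such signals with trained models, so a single frequency peak like this should not be treated as a detector on its own.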

7. Deep Media DeepID

Deep Media DeepID specializes in detecting facial manipulation and identity spoofing in digital content. It is designed to verify whether a face in an image or video has been altered or synthetically generated.

The tool is commonly used for media verification and identity protection.

Key Features

Detects face swaps and synthetic facial content

Analyzes image and video authenticity

Identifies identity spoofing attempts

Uses AI driven facial analysis

Supports digital media verification workflows

8. AI Voice Detection Tools

AI voice detection tools focus on identifying synthetic or cloned speech generated by AI models. These tools analyze vocal characteristics to determine whether a voice is human or machine generated.

They are commonly used in voice based verification and security contexts.

Key Features

Detects voice cloning and synthetic speech

Analyzes pitch, cadence, and waveform patterns

Identifies AI generated audio signals

Supports voice authenticity verification

Useful for audio based interactions
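As an illustration of what analyzing pitch means in practice, the sketch below estimates the fundamental frequency of a short audio frame using autocorrelation. It is a minimal example under stated assumptions, not any vendor's detection method: the function name, the 60 to 400 Hz search range, and the synthetic 200 Hz tone are hypothetical choices for demonstration. Real voice detectors typically feed features like this, tracked over time, into trained models.

```python
import math


def estimate_pitch_hz(samples, sample_rate, min_hz=60.0, max_hz=400.0):
    """Estimate fundamental frequency from the strongest autocorrelation lag."""
    min_lag = int(sample_rate / max_hz)  # shortest period considered
    max_lag = int(sample_rate / min_hz)  # longest period considered

    def autocorr(lag):
        # Similarity between the frame and a copy of itself shifted by `lag`
        return sum(samples[n] * samples[n + lag] for n in range(len(samples) - lag))

    best_lag = max(range(min_lag, max_lag + 1), key=autocorr)
    return sample_rate / best_lag


# Synthetic 50 ms frame: a pure 200 Hz tone sampled at 8 kHz
sample_rate = 8000
frame = [math.sin(2 * math.pi * 200 * n / sample_rate) for n in range(400)]

pitch_hz = estimate_pitch_hz(frame, sample_rate)
print(f"Estimated pitch: {pitch_hz:.1f} Hz")
```

Running the same estimate over consecutive frames gives a pitch contour. How that contour varies over time is the kind of cadence information the list above refers to.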

9. Pindrop Security

Pindrop Security specializes in voice authentication and fraud detection. It is widely used in environments where voice is the primary interaction channel.

The platform uses machine learning to analyze voice signals and assess risk.

Key Features

Voice biometrics and authentication technology

Detects voice based fraud attempts

Analyzes audio signals for risk indicators

Uses machine learning driven verification

Supports secure voice interactions

10. Facia

Facia provides facial recognition and liveness detection technology to verify whether a real person is present. It is commonly used for identity verification and access control.

Facia focuses on confirming human presence at the moment of verification.

Key Features

Facial recognition and identity verification

Liveness detection to prevent spoofing

Supports real time face authentication

Works across image and video inputs

Used for secure identity verification processes

Conclusion

Deepfake technology has fundamentally changed the risk landscape of remote hiring in 2026. Interviews are no longer just conversations. They are high-value targets for identity manipulation, AI-assisted responses, and proxy participation, all of which can slip past traditional interview methods and manual review.

While many Deepfake Detection Tools focus on identifying manipulated video or audio, interviews require a broader and more continuous approach. Organizations must verify that the same real person is present across interview stages and that responses reflect genuine human reasoning rather than scripted or AI generated output.

Sherlock AI addresses this challenge by combining behavioral analysis, identity continuity, and explainable risk insights into a single interview-focused platform. By strengthening recruiter decision-making without compromising candidate experience, Sherlock AI helps organizations build secure, fair, and trustworthy hiring processes at scale.