
Learn how to assess candidate integrity in interviews using behavioral signals, technical verification, and structured workflows that detect AI assistance, proxies, and authorship gaps while keeping hiring decisions human-led.

Abhishek Kaushik

May 8, 2026

Candidate integrity has become one of the most critical and hardest-to-evaluate factors in modern hiring. With remote interviews and AI-assisted applications on the rise, recruiters are no longer just assessing skills; they’re also verifying authenticity.

According to a Checkr survey, 59% of hiring managers suspect candidates have misrepresented themselves during the hiring process, with many pointing to remote interviews and AI-assisted responses as growing risk factors.

The cost of getting this wrong is significant. According to the U.S. Department of Labor, a single bad hire can cost up to 30% of the employee’s annual salary, not including team disruption and productivity loss. Despite these risks, most interview processes still rely heavily on intuition and unstructured conversations, which are prone to bias and inconsistency.

In this guide, we’ll break down how to assess candidate integrity using a structured, evidence-based approach so hiring teams can make confident decisions without compromising fairness or candidate experience.

Why Integrity Risks Are Rising in Interviews

Recruiters are no longer just evaluating people; they’re evaluating them through layers of technology in which real-time assistance, identity masking, and content generation are increasingly accessible.

Key drivers behind the growing focus on integrity include:

Real-time AI assistance that can generate polished but shallow answers

Proxy interview participation where the selected candidate is not the one evaluated

Deepfake and voice-cloning risks in remote video workflows

Résumé inflation and unverifiable project claims

Authorship gaps between take-home assessments and live problem solving

These challenges also impact fairness. Candidates who rely on external tools gain an artificial advantage over those who present their own work, skewing hiring outcomes and weakening skill benchmarks.

Hiring teams need to look beyond intuition and evaluate a combination of behavioral patterns and technical integrity signals that reveal whether a candidate’s responses are authentic and repeatable.


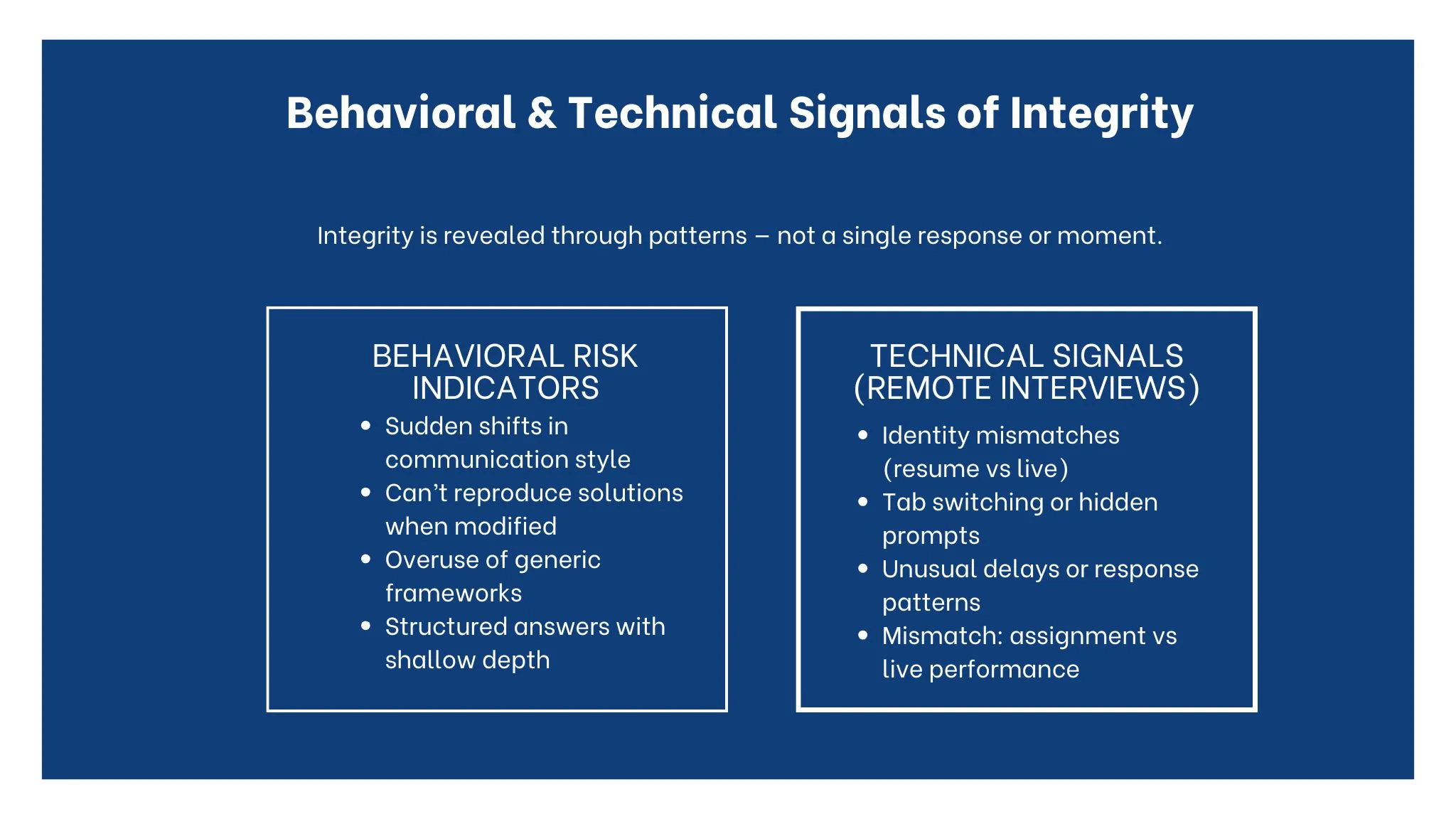

Behavioral and Technical Signals of Integrity

Assessing candidate integrity should never rely on a single moment, a trick question, or gut feeling. Authenticity emerges through patterns across behavior, reasoning, and technical context. When multiple signals align, recruiters can make confident, evidence-based judgments without penalizing normal nervousness or communication differences.

A strong integrity evaluation combines how a candidate thinks, how consistently they perform, and whether their environment supports independent work.

Behavioral Signals of Authenticity

These indicators suggest the candidate is working from their own understanding rather than external assistance.

Consistency between résumé claims and live responses

Candidates can explain projects in detail, including trade-offs, failures, and decision rationale.

Step-by-step reasoning

They break problems into components, verbalize assumptions, and adjust their approach when given new constraints.

Comfort with follow-ups and edge cases

Authentic candidates engage with clarifying questions instead of deflecting or restarting with memorized answers.

Natural thinking patterns

Pauses, self-corrections, and iterative problem-solving often indicate real cognition, not scripted delivery.

Behavioral Risk Indicators

No single behavior proves misconduct, but repeated inconsistencies can signal authorship gaps.

Sudden shifts in communication style or depth between questions

Inability to reproduce earlier solutions when the problem is slightly modified

Overuse of generic frameworks without role-specific detail or practical examples

Perfectly structured but shallow answers that lack implementation nuance

These patterns often appear when responses are externally generated or memorized rather than understood.

Technical Signals in Remote Interviews

In virtual settings, integrity also has an environmental component. Technical signals help validate that the same person is independently producing the work.

Identity mismatches between submitted documents and live video

Frequent tab switching or hidden prompt behavior during problem solving

Voice, latency, or response pattern anomalies that suggest relayed answers

Authorship discontinuity between take-home assessments and live performance

These signals should always be reviewed in context: connectivity issues or accessibility tools can create false positives if evaluated in isolation.

Integrity Is a Pattern, Not a Moment

The most reliable decisions come from signal aggregation over time, not one suspicious pause or one polished answer. When behavioral consistency, reproducible reasoning, and clean technical context align, recruiters can be confident they are evaluating the candidate’s genuine capability.

This pattern-based approach reduces bias, improves fairness, and shifts integrity assessment from intuition to structured evidence.
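To make signal aggregation concrete, here is a minimal Python sketch. It assumes hypothetical signal categories (behavioral, reasoning, technical), illustrative thresholds, and a set of minutes in which connectivity problems were logged; none of these come from a specific product. Following the "review with context" rule above, technical signals that coincide with a logged connectivity issue are set aside, and the function only decides whether a pattern merits human review, never whether to reject a candidate.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    # Hypothetical signal families mirroring the groups described in this guide.
    BEHAVIORAL = "behavioral"
    REASONING = "reasoning"
    TECHNICAL = "technical"


@dataclass(frozen=True)
class Signal:
    category: Category
    description: str
    minute: int  # minutes since the interview started


def needs_review(
    signals: list[Signal],
    excused_minutes: frozenset[int] = frozenset(),
    min_categories: int = 2,
    min_signals: int = 3,
) -> bool:
    """Return True only when risk signals recur AND span independent categories.

    Thresholds are illustrative assumptions, not calibrated values.
    """
    # Set aside technical signals that overlap a logged connectivity problem.
    considered = [
        s for s in signals
        if not (s.category is Category.TECHNICAL and s.minute in excused_minutes)
    ]
    categories = {s.category for s in considered}
    return len(considered) >= min_signals and len(categories) >= min_categories


# One suspicious moment is never enough on its own.
lone = [Signal(Category.TECHNICAL, "latency spike", 12)]

# Corroborating signals across several categories form a pattern worth reviewing.
mixed = [
    Signal(Category.TECHNICAL, "tab switch during coding", 14),
    Signal(Category.REASONING, "could not adapt solution to a new constraint", 21),
    Signal(Category.BEHAVIORAL, "abrupt change in answer depth", 27),
]

print(needs_review(lone))   # False
print(needs_review(mixed))  # True
# If a network drop was logged at minute 14, the tab-switch signal is set aside.
print(needs_review(mixed, excused_minutes=frozenset({14})))  # False
```

Requiring both a minimum count and a minimum number of distinct categories is one simple way to encode "a pattern, not a moment"; a real process would tune these thresholds against reviewed outcomes.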

Designing an Integrity-First Interview Process

Integrity is most effectively assessed when it is designed into the interview workflow, not evaluated through ad-hoc suspicion. By embedding verification, structured evaluation, and evidence review at each stage, hiring teams can reduce both misconduct and false positives while maintaining a fair candidate experience.

Before the Interview: Establish Authenticity and Baselines

Integrity starts before the live conversation. Early verification ensures that interviewers spend their time evaluating skills rather than confirming identity.

Identity verification to confirm the same individual progresses through every stage

Structured, role-based assessments that measure demonstrable skills using standardized rubrics

Clear candidate guidelines outlining permitted tools, collaboration rules, and interview expectations

This creates a shared understanding of the process and reduces ambiguity that can lead to unintentional policy violations.

During the Interview: Validate Authorship Through Reasoning

The live interview should be designed to test thinking, not memorization. Scenario-based and open-ended questions reveal how candidates approach unfamiliar problems and whether they can adapt their prior answers.

Reasoning-first problem solving where candidates explain trade-offs and assumptions

Real-time follow-ups that modify constraints to check reproducibility of solutions

Human-led evaluation supported by integrity signals rather than dominated by them

This approach shifts the focus from catching misconduct to confirming authentic capability.

After the Interview: Evidence Over Instinct

Post-interview review is where integrity decisions should be made, based on aggregated signals, not isolated moments.

Evidence review instead of instant rejection to avoid penalizing candidates for technical issues or communication differences

Cross-interviewer calibration to align scoring standards and reduce individual bias

Documentation for auditability and fairness, creating a defensible hiring trail

This ensures that integrity assessments are consistent, explainable, and repeatable across candidates.
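Cross-interviewer calibration can start as simply as surfacing where panelists disagree before a decision is made. The sketch below assumes a 1-5 rubric, hypothetical competency and interviewer names, and an illustrative `max_spread` tolerance of one point; it lists the competencies that need a calibration discussion rather than averaging disagreement away.

```python
from statistics import mean

# Hypothetical panel scores on a 1-5 rubric: competency -> {interviewer: score}
panel_scores = {
    "problem decomposition": {"interviewer_a": 4, "interviewer_b": 4, "interviewer_c": 3},
    "project depth": {"interviewer_a": 5, "interviewer_b": 2, "interviewer_c": 3},
}


def calibration_report(scores: dict[str, dict[str, int]], max_spread: int = 1) -> dict:
    """Return competencies whose scores differ by more than max_spread points."""
    report = {}
    for competency, by_interviewer in scores.items():
        values = list(by_interviewer.values())
        spread = max(values) - min(values)
        if spread > max_spread:
            # Keep the mean for context, but the spread is what triggers discussion.
            report[competency] = {"mean": round(mean(values), 2), "spread": spread}
    return report


print(calibration_report(panel_scores))
# {'project depth': {'mean': 3.33, 'spread': 3}}
```

Storing this report alongside the final decision also contributes to the documented, auditable hiring trail described above.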

Operating Principle

Integrity should be designed into the interview system, not judged subjectively.

When verification, structured questioning, and evidence review are built into the process, authenticity becomes measurable rather than intuitive.

While these principles can be applied manually, maintaining consistency across high-volume remote hiring requires technology that can capture signals, structure evidence, and support human reviewers without disrupting the interview flow.

This is where an interview intelligence layer becomes essential, automating verification, monitoring context, and generating structured review artifacts so hiring teams can apply an integrity-first framework reliably at scale.

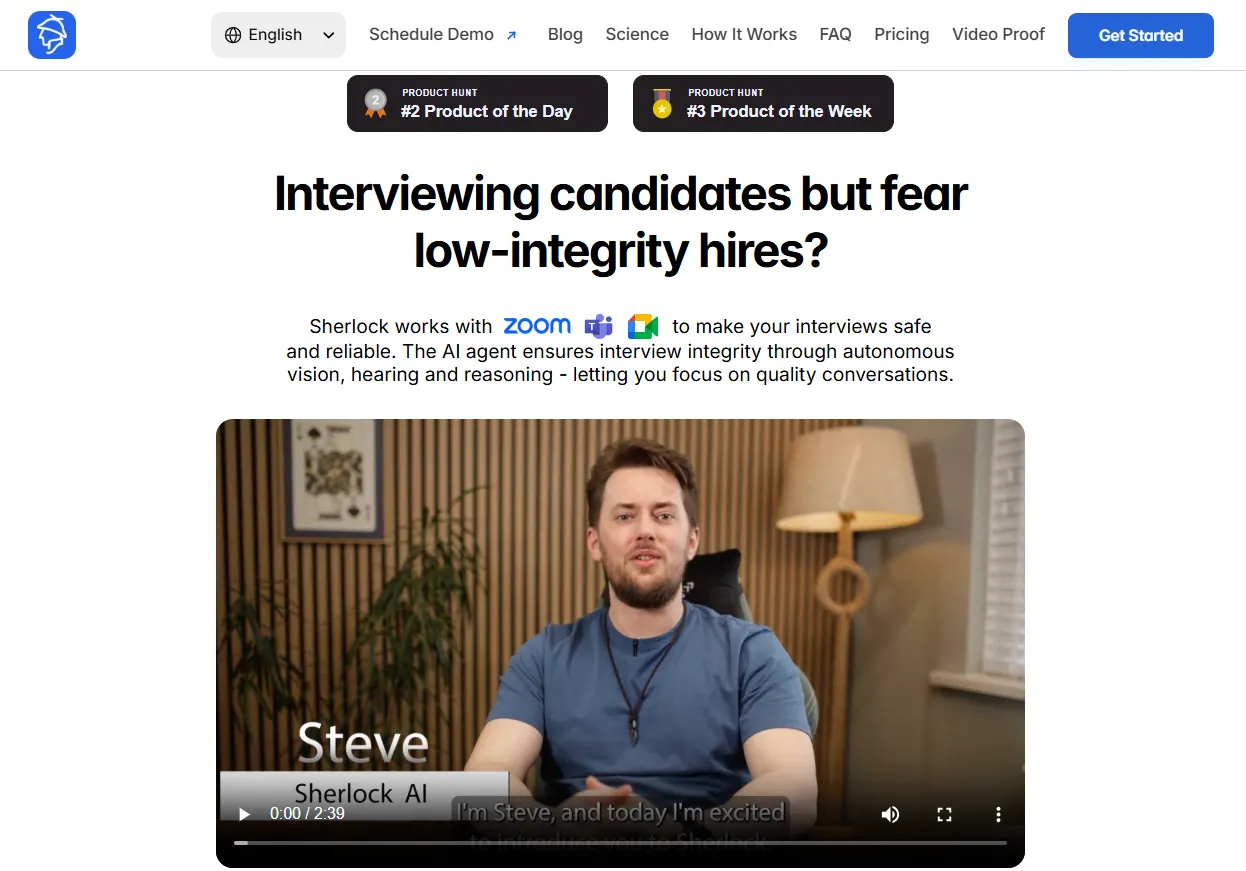

Sherlock AI: Enabling an Integrity-First Interview System

Sherlock AI acts as an interview intelligence layer, handling identity verification, environment monitoring, and authorship analysis in the background while interviewers focus on reasoning, communication, and role fit. Instead of replacing human judgment, it structures evidence so decisions remain contextual and defensible.

Before the Interview: Verified Candidates, Clear Baselines

Sherlock AI establishes trust before the conversation begins by validating that the right person is present and that the interview environment meets integrity standards.

Face matching against submitted identity

Detection of multiple faces or screen substitutions

Identification of virtual machines, remote desktops, or hidden devices

Baseline audio, video, and network quality checks

This ensures interviewers start with authenticated candidates and clean technical conditions, reducing the need for manual policing later.

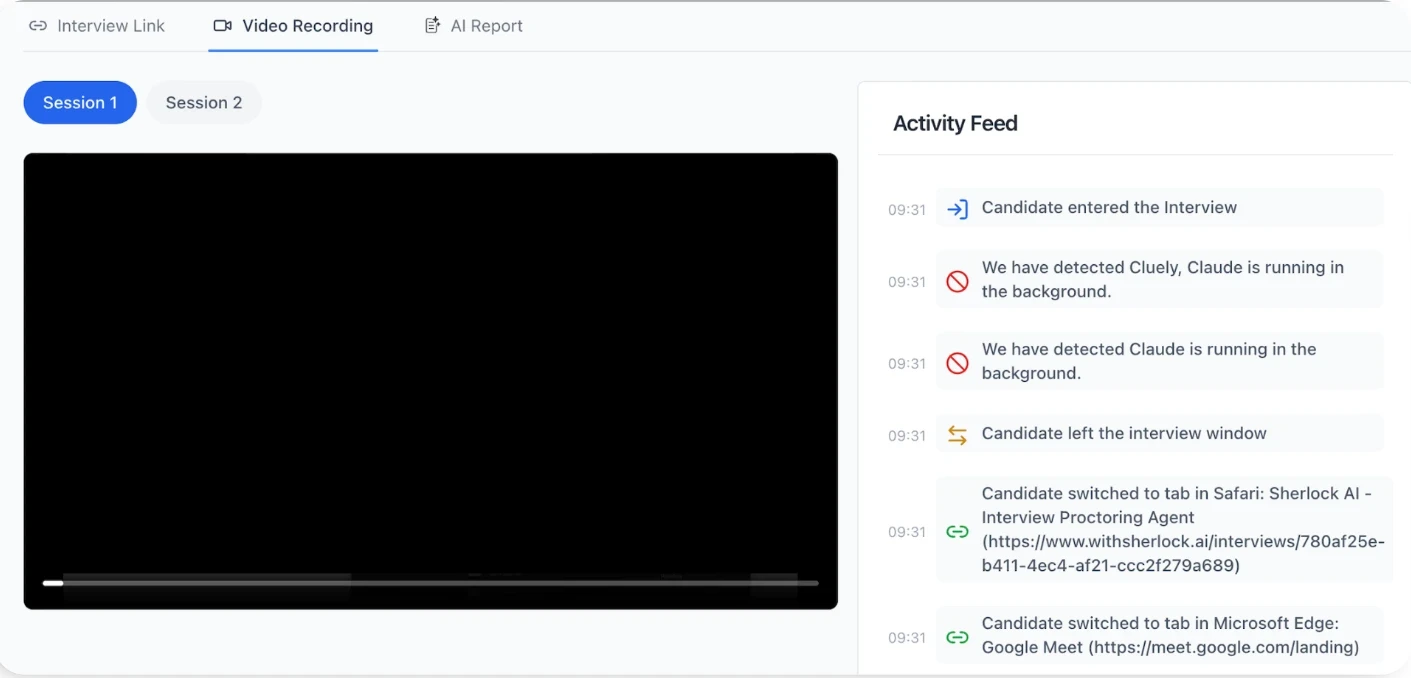

During the Interview: Silent Signal Capture

While the interviewer leads the discussion, Sherlock AI monitors multimodal signals like device activity, voice continuity, response timing, and behavioral patterns, without interrupting the flow.

Flags potential proxy behavior or covert assistance

Tracks authorship continuity between earlier assessments and live responses

Captures structured notes aligned to interview rubrics

Surfaces alerts for later review rather than forcing real-time decisions

This shifts the interviewer’s role from surveillance to evaluation, improving both candidate experience and signal quality.

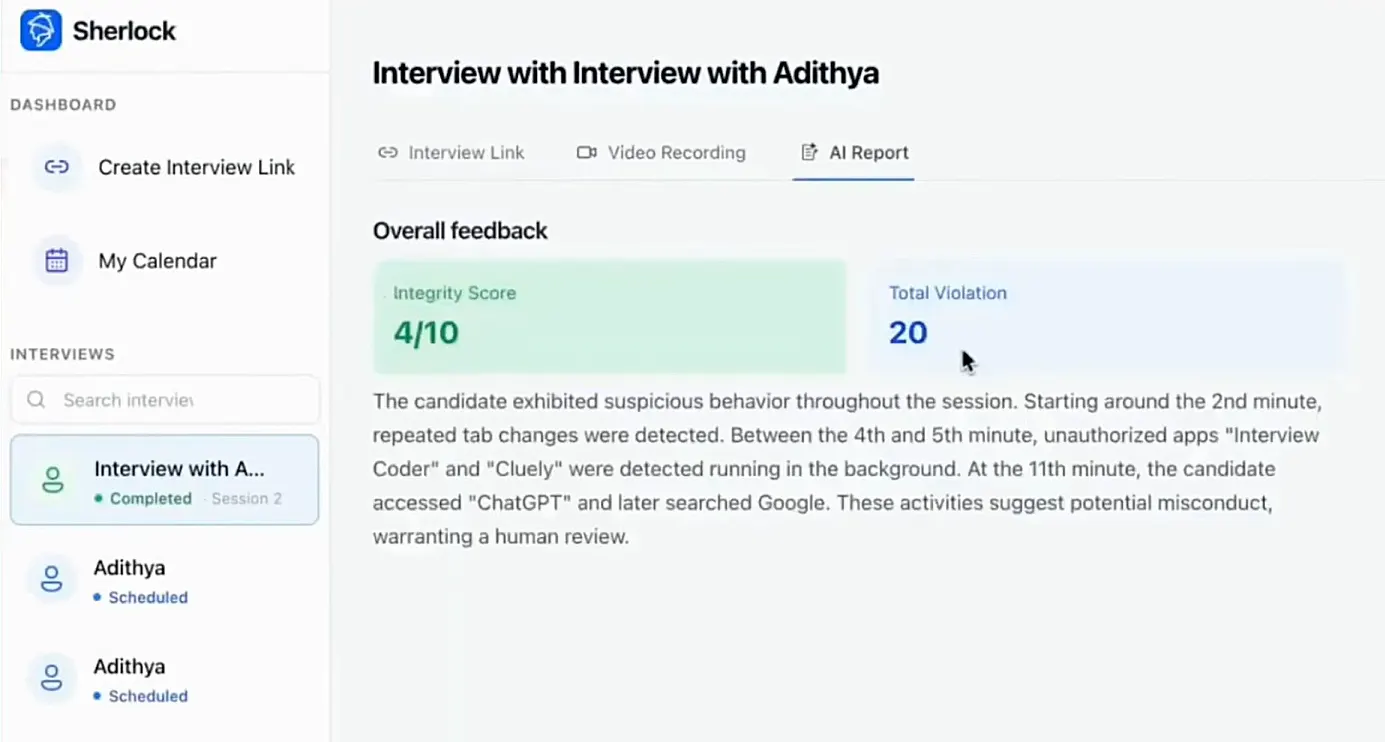

After the Interview: Evidence, Not Verdicts

Post-interview, Sherlock AI generates structured outputs that support human review:

Timeline of flagged integrity events

Standardized rubric-based score inputs

Response pattern summaries for context

Consolidated notes for panel evaluation

Crucially, these outputs are inputs to human decision-making, not automated pass/fail outcomes. Hiring panels can validate whether a flag reflects genuine risk, a technical issue, or a benign behavior.
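To illustrate "evidence, not verdicts," here is a minimal sketch of a reviewable event timeline. It is not Sherlock AI's actual output format; the `FlaggedEvent` and `Disposition` names are hypothetical. Every flag starts as unreviewed, and only a human reviewer assigns it a disposition (genuine risk, technical issue, or benign).

```python
from dataclasses import dataclass
from enum import Enum


class Disposition(Enum):
    UNREVIEWED = "unreviewed"
    GENUINE_RISK = "genuine risk"
    TECHNICAL_ISSUE = "technical issue"
    BENIGN = "benign"


@dataclass
class FlaggedEvent:
    timestamp: str  # zero-padded "HH:MM:SS" offset into the interview
    description: str
    disposition: Disposition = Disposition.UNREVIEWED  # set only by a human reviewer
    reviewer_note: str = ""


def build_timeline(events: list[FlaggedEvent]) -> list[FlaggedEvent]:
    # Zero-padded HH:MM:SS strings sort chronologically as plain text.
    return sorted(events, key=lambda e: e.timestamp)


def open_items(timeline: list[FlaggedEvent]) -> list[FlaggedEvent]:
    # Flags still awaiting a human judgment.
    return [e for e in timeline if e.disposition is Disposition.UNREVIEWED]


timeline = build_timeline([
    FlaggedEvent("00:21:40", "second voice detected"),
    FlaggedEvent("00:07:05", "video froze for 20 seconds"),
])

# A reviewer resolves the earliest flag after checking the candidate's report.
timeline[0].disposition = Disposition.TECHNICAL_ISSUE
timeline[0].reviewer_note = "Matches candidate-reported network drop."

for e in timeline:
    print(e.timestamp, e.description, "->", e.disposition.value)
print(len(open_items(timeline)))  # 1
```

Keeping the disposition as an explicit, human-set field makes it visible in the record which flags were examined and how each was resolved.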

Consistency Without Over-Automation

Because the same monitoring logic and documentation framework are applied across all candidates, Sherlock AI helps:

Standardize evaluation criteria

Reduce interviewer variability

Create audit-ready hiring trails

Lower cognitive load during interviews

At the same time, it avoids intrusive lockdown methods and focuses on authorship verification and reasoning continuity, which are more relevant to real job performance.

The Outcome: Technology That Protects Human Judgment

In an integrity-first model, Sherlock AI handles the signal and evidence layer, while humans retain ownership of interpretation and final hiring decisions.

This division of responsibility makes remote interviews scalable, fair, and defensible, ensuring integrity is built into the system rather than judged subjectively.

Conclusion

Assessing candidate integrity in modern interviews requires more than intuition; it demands a structured, evidence-based approach that combines behavioral evaluation, technical verification, and human judgment. As AI copilots, proxy participation, and deepfake risks reshape the hiring landscape, authenticity can no longer be assumed; it must be validated through reproducible reasoning and consistent performance.

An integrity-first process doesn’t mean treating candidates with suspicion. It means designing interviews that reward genuine thinking, provide clear guidelines, and evaluate patterns rather than isolated moments. When identity checks, structured assessments, and post-interview evidence reviews are built into the workflow, hiring teams can reduce mis-hire risk while maintaining fairness and a positive candidate experience.

With the support of an interview intelligence layer like Sherlock AI, organizations can scale this model without adding friction. Technology captures signals and structures documentation, while humans interpret context and make the final decision.

The result is a hiring process that is not only more secure, but also more equitable, consistent, and defensible, where integrity is measured systematically and judgment remains human.