
Discover effective strategies to prevent AI-based candidate impersonation in interviews, ensuring authentic responses and reliable hiring decisions every time.

Abhishek Kaushik

May 13, 2026

Remote hiring has unlocked global talent access, but it has also opened the door to a new class of hiring fraud. One of the most serious threats today is AI-based candidate impersonation during interviews. With the rise of deepfake videos, voice cloning, and real-time AI assistance, recruiters can no longer assume that the person interviewing is the person being hired. A major survey reported that 91% of hiring managers have either encountered or suspected AI-generated interview responses during video calls.

For hiring teams, this is not just a technical issue. It is a trust, compliance, and business risk problem. Industry research forecasts that by 2028, up to 25% of all job applicants could be using fake identities, which points to a rapidly intensifying problem.

This article explores what interview impersonation is, why it is increasing, the red flags recruiters must watch for, and how platforms like Sherlock AI help prevent it in real time.

What Is Interview Impersonation?

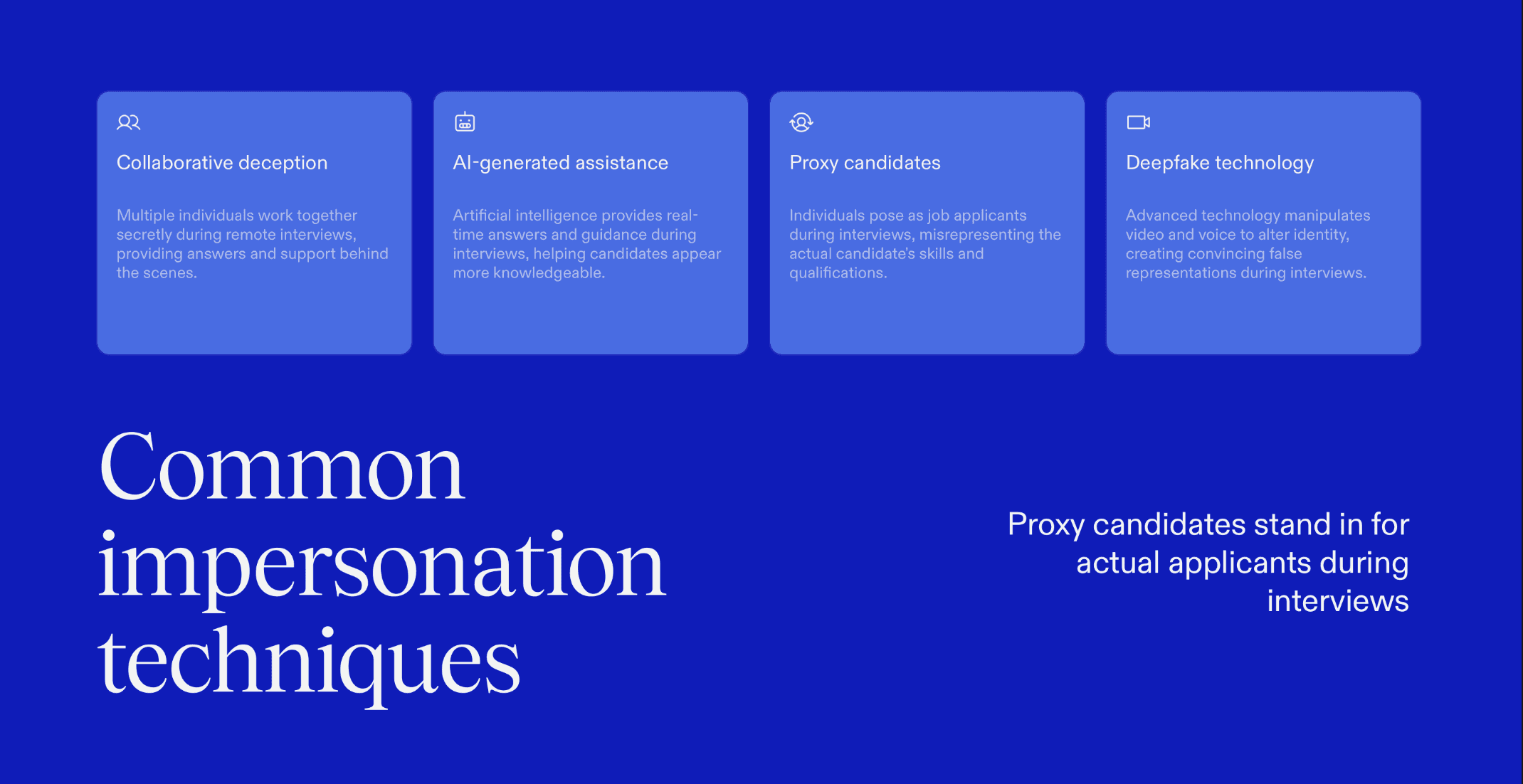

Interview impersonation happens when:

A proxy candidate attends interviews on behalf of the real applicant

Deepfake video or voice technology alters a candidate’s identity

A candidate receives real-time AI-generated answers during interviews

Multiple individuals collaborate secretly during remote interviews

In many cases, the impersonation is limited to early interview rounds, allowing unqualified candidates to advance and eventually get hired.

Why Is AI-Based Interview Impersonation Happening?

Several factors have accelerated this problem:

Widespread access to AI tools

Voice cloning, real-time transcription, and AI-generated answers are now affordable and easy to use, making live interview assistance difficult to detect.

Remote-first hiring models

Recruiters no longer control the interview environment, devices, or candidate surroundings, reducing visibility into who is actually participating.

Resume inflation using AI

Candidates can create highly polished resumes that appear role-ready but do not reflect real skills or experience.

Lack of real-time verification

Most interview platforms prioritize scheduling and video quality rather than validating identity, behavior, and response authenticity during interviews.

Interview Impersonation: A Growing Threat to Hiring Integrity

Interview impersonation occurs when someone other than the actual candidate appears in an interview or assists covertly during the evaluation process. In 2026, this problem has escalated due to accessible AI tools that can manipulate video, audio, resumes, and live responses.

Unlike traditional fraud, AI-powered impersonation is designed to appear natural, confident, and technically competent. This makes it extremely difficult to detect using conventional screening methods.

What’s at Stake for Hiring Teams?

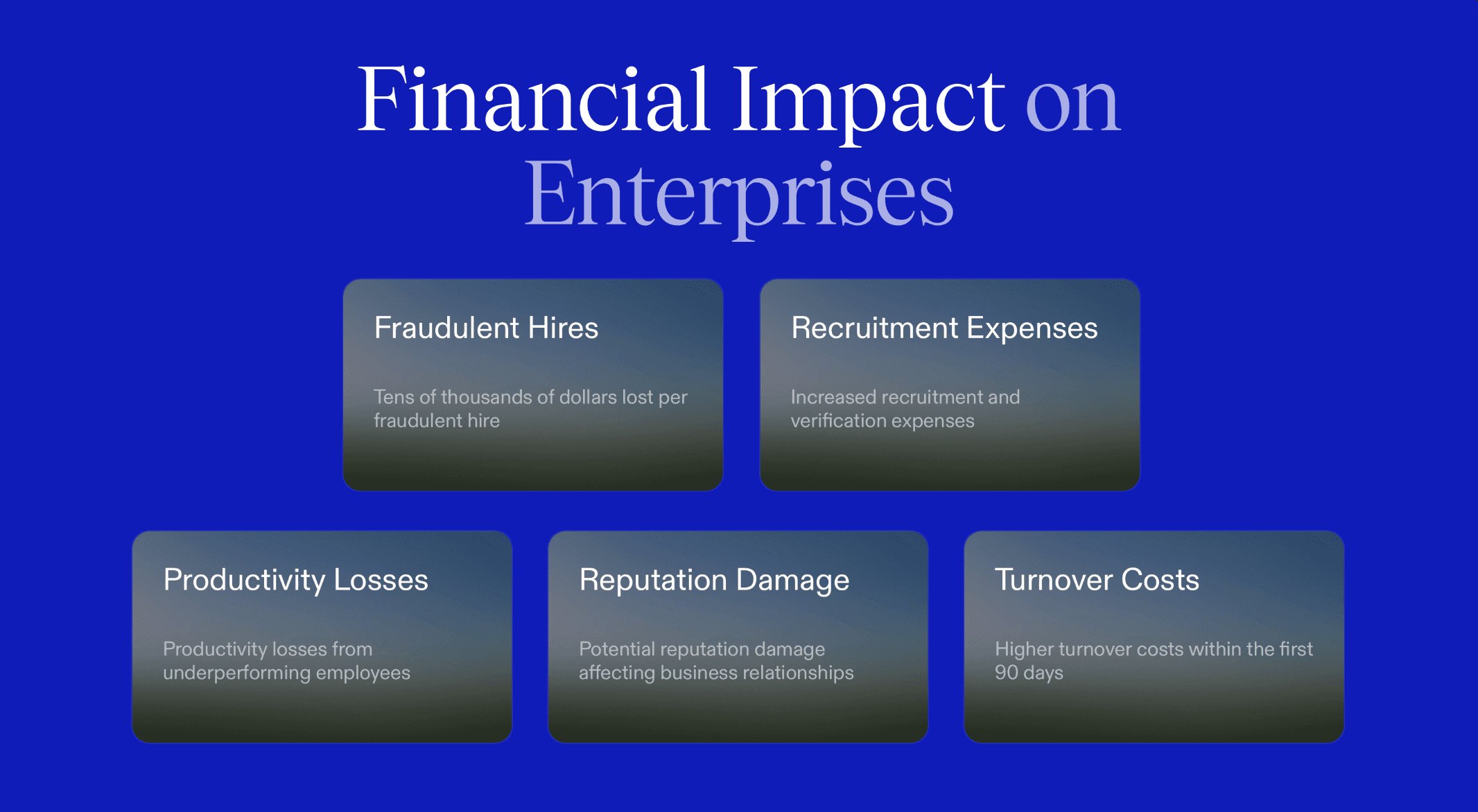

Interview impersonation is not a minor inconvenience. The impact is measurable and costly.

Bad hires who cannot perform once onboarded

Security risks when impersonators gain system access

Compliance failures in regulated industries

Loss of trust between recruiters and candidates

Increased attrition within the first 90 days

For enterprise organizations, a single impersonation-driven hire can cost tens of thousands of dollars.

How to Prevent AI-Based Interview Impersonation

Effective prevention relies on combining process design, technology, and recruiter awareness. Below are key prevention methods, with a brief explanation and an example of how each appears during interviews.

1. Continuous Identity Verification

Identity should be validated throughout the interview, not just at login. This ensures the same individual remains present and engaged from start to finish.

Example: A candidate’s facial presence, voice, and interaction style remain consistent from introductions through technical discussion.

2. Behavioral Consistency Analysis

Authentic candidates show stable communication patterns and reasoning styles across questions. Sudden changes in clarity or confidence often indicate impersonation or external help.

Example: A candidate explains high-level concepts fluently but struggles to answer basic follow-up questions or justify the answer in their own words.

3. Real-Time AI Assistance Detection

Interview systems must detect signals of live AI-generated input or third-party assistance. Uniform response timing and unusually polished language are common indicators.

Example: The candidate pauses for several seconds before every answer and then responds with textbook-level explanations.
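One way to make the timing signal concrete is to measure how regular the delay before each answer is. The sketch below is a minimal illustration in Python, not a description of how any particular platform works. The function name `timing_looks_uniform` and both thresholds are assumptions chosen for the example and would need calibration against real interviews.

```python
from statistics import mean, pstdev


def timing_looks_uniform(latencies_s, min_mean_s=3.0, max_cv=0.15):
    """Return True when answer delays are both long and unusually regular.

    latencies_s: seconds between the end of each question and the start of
    the candidate's answer. Both thresholds are illustrative assumptions.
    """
    if len(latencies_s) < 4:
        return False  # too few answers to judge a pattern
    avg = mean(latencies_s)
    if avg < min_mean_s:
        return False  # quick answers do not match the "pause, then recite" pattern
    cv = pstdev(latencies_s) / avg  # coefficient of variation: spread relative to the mean
    return cv <= max_cv


# Hypothetical delays (in seconds) measured before five consecutive answers.
print(timing_looks_uniform([4.0, 4.2, 3.9, 4.1, 4.0]))
print(timing_looks_uniform([1.0, 8.0, 2.0, 9.0, 3.0]))
```

A flag from a check like this is only a prompt for a follow-up question, since thoughtful candidates also pause before answering.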

4. Cross-Round Candidate Validation

Comparing behavior across interview stages helps uncover proxy switching or identity drift. Variations in voice tone, pacing, or problem-solving approach are key warning signs.

Example: A candidate who confidently solved problems in round one appears hesitant and inconsistent in later interviews.
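Cross-round comparison can be sketched as a per-feature drift check between two interview rounds. The Python below is a rough illustration under stated assumptions: the feature names (`words_per_minute`, `mean_pause_s`), the helper names, and the 30% threshold are all hypothetical, and a real system would need far richer signals than two numbers per round.

```python
def round_drift(round_a, round_b):
    """Relative change for each feature that both rounds measured."""
    drift = {}
    for name in round_a.keys() & round_b.keys():
        a, b = round_a[name], round_b[name]
        baseline = max(abs(a), abs(b))
        drift[name] = 0.0 if baseline == 0 else abs(a - b) / baseline
    return drift


def flag_drift(round_a, round_b, threshold=0.3):
    """Names of features whose relative change exceeds the threshold."""
    changes = round_drift(round_a, round_b)
    return sorted(name for name, change in changes.items() if change > threshold)


# Hypothetical measurements from two rounds with the same candidate.
round_one = {"words_per_minute": 150, "mean_pause_s": 0.8}
round_two = {"words_per_minute": 95, "mean_pause_s": 0.9}
print(flag_drift(round_one, round_two))
```

Drift on its own is weak evidence, because nerves and harder questions also change pacing. It is most useful for deciding which recordings a recruiter should review side by side.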

5. Controlled Interview Environment Standards

Clear interview environment expectations reduce opportunities for off-screen support. Visual and behavioral cues often reveal when rules are not followed.

Example: The candidate repeatedly looks away from the screen while answering complex questions.

6. Purpose-Built Interview Verification Platforms

Traditional video tools were designed for communication, not authenticity verification. Intelligent platforms analyze identity, behavior, and response integrity in real time.

Example: Recruiters receive alerts when candidate behavior deviates from previously verified patterns during the interview.

7. Recruiter Training and Awareness

Technology is most effective when recruiters know how to interpret verification signals. Strategic follow-up questions help validate genuine understanding.

Example: An interviewer asks the candidate to re-explain a solution using a different approach, exposing gaps in real comprehension.

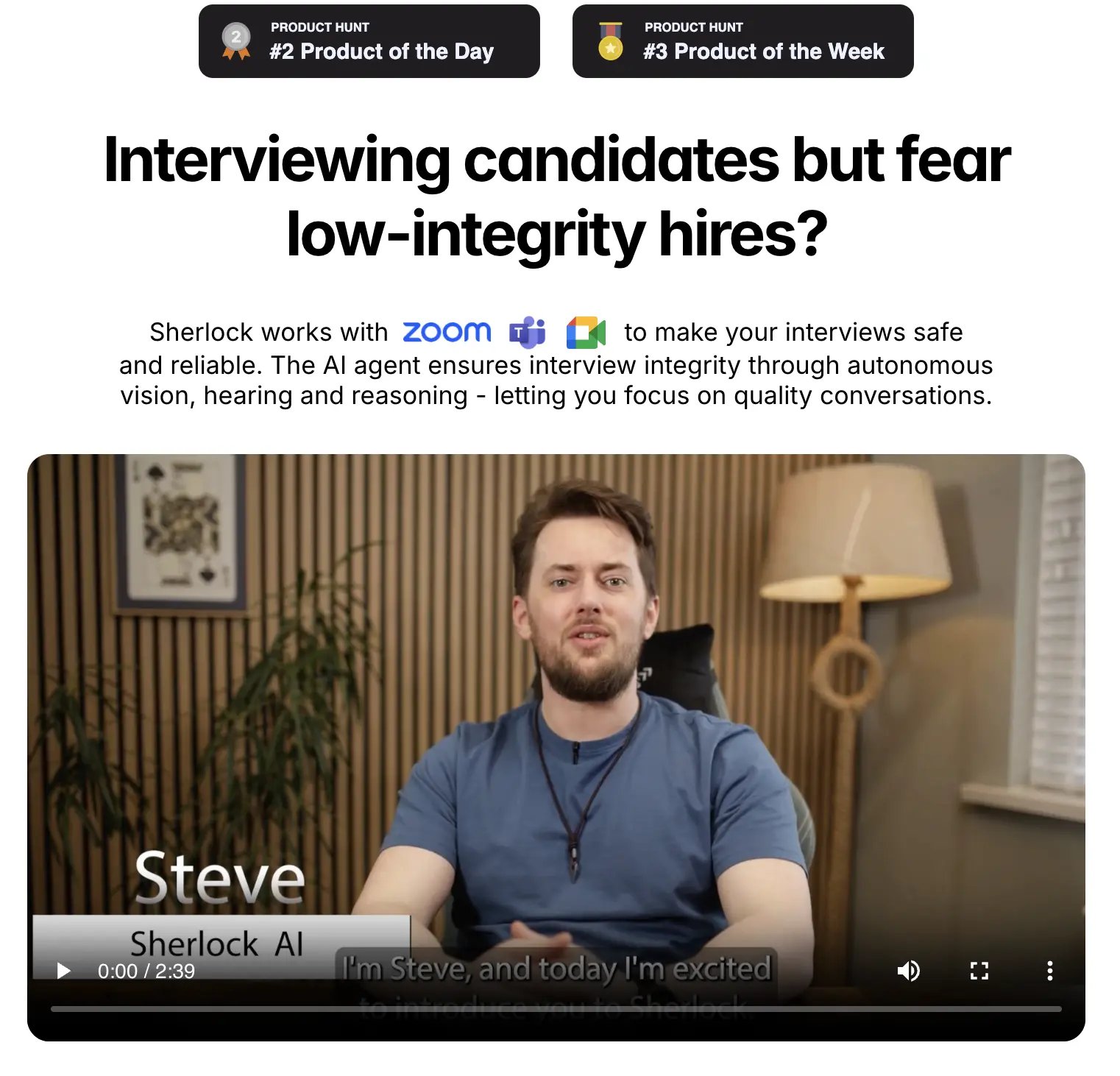

Preventing AI-Based Interview Impersonation with Sherlock AI

Sherlock AI is designed specifically to address the modern impersonation problem, not yesterday’s hiring challenges.

How Sherlock AI Helps Prevent Impersonation

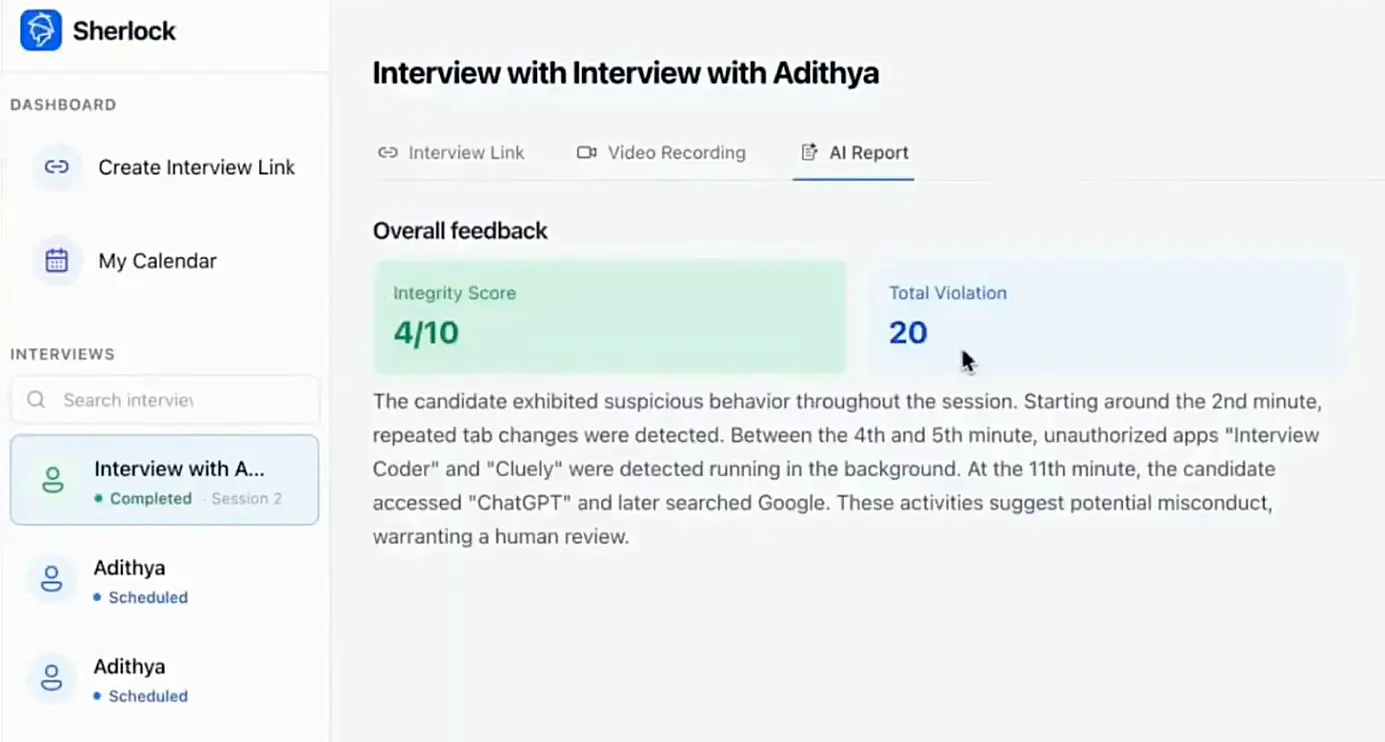

AI Assistance Signal Detection: Identifies response timing and language patterns that suggest live AI support during interviews.

Attention and Gaze Analysis: Monitors eye movement and focus to detect frequent off-screen attention or unnatural viewing behavior.

Deepfake and Proxy Identification: Detects facial anomalies, lip-sync mismatches, and signs of unauthorized participants.

Speech Pattern Consistency Checks: Analyzes voice tone, vocabulary usage, and response pacing to spot sudden inconsistencies.

Device and Screen Activity Monitoring: Flags suspicious application usage, rapid tab switching, or external audio inputs.

Live Alerts and Integrity Summaries: Notifies recruiters of high-risk behavior in real time and provides a post-interview integrity report.

Seamless Video Platform Compatibility: Operates alongside Zoom, Microsoft Teams, and other platforms without interrupting interviews.

Real-World Examples of Interview Impersonation

These are not isolated incidents. Recruiters across industries are encountering interview impersonation in real hiring workflows.

Case 1: The Proxy Developer

A fintech company hired a senior developer who performed exceptionally well during technical interviews. After joining, the new hire struggled with basic coding tasks, prompting an internal review that revealed a proxy candidate had attended the interviews.

Case 2: Multiple Identities, One Environment

A staffing firm noticed unusual similarities across interviews for different candidates. Further investigation revealed that multiple applicants were interviewing from the same environment under different identities.

Case 3: The Voice That Did Not Match

An enterprise recruiter conducted phone screens and later video interviews with the same candidate. The recruiter identified noticeable differences in voice and speaking style between rounds conducted only days apart.

These scenarios show how interview impersonation often remains undetected until after hiring, when the cost of correction is significantly higher.

Why This Isn’t Just an HR Problem

Interview impersonation impacts multiple business functions:

Engineering teams suffer productivity losses

Security teams face access control risks

Legal teams handle compliance exposure

Leadership loses confidence in hiring pipelines

Preventing impersonation requires collaboration between HR, IT, and security teams.

Common Signals of AI-Based Interview Impersonation

| Risk Area | What Appears During the Interview | Why Recruiters Should Pay Attention |
|---|---|---|
| AI-assisted answering | Delayed but highly polished responses that sound scripted | Indicates possible real-time AI-generated support |
| Attention and gaze behavior | Frequent off-screen glances or fixed, unnatural eye movement | Suggests use of secondary devices or external help |
| Identity manipulation | Facial inconsistencies or mismatched lip movement | Signals deepfake usage or proxy participation |
| Speech consistency | Sudden changes in tone, vocabulary, or speaking pace | Often occurs when different sources generate responses |
| Device and screen activity | Signs of tab switching or unexplained audio changes | Points to unauthorized tools running in the background |
| Live behavioral anomalies | Repeated pauses before complex answers | Helps identify when assistance is being consulted |
| Post-interview review | Discrepancies between interview performance and follow-up tasks | Reveals impersonation that may not be obvious in real time |
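The risk areas above can be combined into a single review-priority score so that no one signal decides the outcome. The following Python is a minimal sketch under stated assumptions: the keys mirror the table's risk areas, but the weights and the function name `review_priority` are invented for illustration and do not reflect any vendor's scoring.

```python
# Hypothetical weights: a higher number means the signal is treated as stronger evidence.
SIGNAL_WEIGHTS = {
    "ai_assisted_answering": 2,
    "attention_and_gaze": 1,
    "identity_manipulation": 3,
    "speech_consistency": 2,
    "device_and_screen_activity": 2,
    "live_behavioral_anomalies": 1,
    "post_interview_review": 3,
}


def review_priority(observed_signals):
    """Share of total weight carried by the observed signals, from 0.0 to 1.0."""
    observed = set(observed_signals)
    unknown = observed - SIGNAL_WEIGHTS.keys()
    if unknown:
        raise ValueError(f"unknown signals: {sorted(unknown)}")
    total = sum(SIGNAL_WEIGHTS.values())
    return sum(SIGNAL_WEIGHTS[name] for name in observed) / total


# Two signals noted by a recruiter during one interview (hypothetical).
score = review_priority({"identity_manipulation", "speech_consistency"})
print(f"review priority: {score:.2f}")
```

A score like this is best used to rank interviews for human review rather than to reject candidates automatically, since each signal in the table also has innocent explanations.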

Final Thoughts

AI-based interview impersonation is no longer an edge case. It is a growing risk that directly impacts hiring quality, security, and organizational trust. As remote interviews become the default, recruiters can no longer rely solely on resumes, video presence, or surface-level performance.

Preventing impersonation requires a shift from reactive checks to proactive verification. Hiring teams need visibility into identity, behavior, and response authenticity throughout the interview process. This is where intelligent interview verification becomes essential.

Sherlock AI helps organizations move beyond traditional screening by providing continuous candidate verification and real-time integrity insights during interviews. By combining behavioral analysis, AI assistance detection, and privacy-first verification, Sherlock AI enables recruiters to confidently hire the person behind the screen, not just the performance they present.

In a hiring landscape shaped by AI, trust must be engineered into the interview process. Platforms like Sherlock AI make that possible by ensuring interviews reflect real skills, real identity, and real potential.