
Modern interviews are easy to game with AI assistance. Learn how to design cheat-resistant interview processes that test real skill.

Abhishek Kaushik

Mar 31, 2026

In an age where remote hiring is standard, interview cheating has shifted from a fringe concern to a measurable business risk. An industry survey found that 59% of hiring managers have suspected candidates of using AI or other deceptive methods to misrepresent their skills during the hiring process, and 31% reported interviewing someone who later turned out to be using a false identity. Both figures show that traditional evaluation formats are struggling to keep up with new threats.

Beyond outright identity deception, workplace sentiment data reveals that 20% of job seekers admitted to having secretly used AI tools during interviews, while a majority believe using AI in interviews is becoming the norm.

These trends signal a clear message: the status quo in interview design is vulnerable, and building a process that is resistant to cheating is essential for preserving fairness, accuracy, and hire quality.

Why Modern Interviews Are Easy to Cheat

Interviews didn’t suddenly become broken. The environment around them changed faster than their design.

Traditional interview formats, such as video calls, shared coding editors, and structured behavioral questions, were built on a simple assumption: that the candidate’s responses originate from their own thinking. That assumption is no longer true.

AI Copilots and Real-Time Answer Generation

Modern AI tools don’t replace the candidate; they silently augment them. With copilots generating answers in real time, candidates can:

Produce well-structured responses without deep understanding

Solve problems they wouldn’t complete independently

Maintain confidence and fluency under pressure

To the interviewer, this looks like preparation and competence. In reality, the interview is measuring AI output filtered through a human, not actual skill.

Proxy Candidates and Deepfake Assistance

Remote interviews have also made identity substitution easier to attempt and harder to detect:

Proxy candidates stepping in for technical rounds

Deepfake video or voice masking during live calls

Different people appearing across interview stages

Because most verification happens after an offer is made, these forms of fraud often go unnoticed during evaluation.

Why Webcam Monitoring and “Good Questions” Fail

Webcams confirm presence, and strong questions test knowledge, but neither tests whether that knowledge is authentic and truly the candidate’s own.

They cannot reliably detect:

Off-screen assistance

Prompt-driven answers

Scripted reasoning delivered confidently

As long as answers sound right and arrive on time, interviews pass candidates through.

Modern interviews are easy to cheat not because interviewers are careless, but because the process was never designed to distinguish human capability from AI-assisted performance. Fixing this requires redesigning how interviews work rather than policing candidates harder.

Designing Interviews That Test Real Skill

The solution to cheating isn’t stricter monitoring; it’s better design. Cheat-resistant interviews don’t try to catch candidates in the act; they make external assistance less useful in the first place.

1. Move Beyond Recall to Live Reasoning

AI excels at recall: definitions, frameworks, ideal answers. Humans reveal skill through reasoning in motion.

Effective interviews:

Ask candidates to make decisions with incomplete information

Explore trade-offs instead of “right” answers

Require candidates to adapt as new constraints are introduced

When thinking has to happen live, AI output becomes harder to relay convincingly.

2. Introduce Friction AI Struggles With

Cheat resistance comes from moments where scripts break.

Follow-up pivots

Instead of progressing linearly, interviewers should:

Change assumptions mid-answer

Ask “what would you do differently if…”

Challenge earlier statements

AI-generated responses tend to collapse when the path shifts unexpectedly.

Context switching

Move candidates between:

Concept → application

Explanation → execution

Past experience → hypothetical scenarios

This tests understanding across modes, not just fluency in one.

“Explain your thinking” moments

Asking how a candidate arrived at an answer exposes:

Gaps in reasoning

Memorized vs internalized logic

Over-polished but shallow responses

AI can provide answers; owning the reasoning is harder.

3. Mix Synchronous and Asynchronous Stages Intentionally

Asynchronous tasks are not inherently flawed, but they must be paired thoughtfully with live rounds.

Design principles:

Use async rounds to observe preparation style

Use live rounds to probe decisions made earlier

Ask candidates to defend or revise their own submissions

Inconsistencies between stages often reveal over-assistance.

4. Enforce Consistency Across the Interview Journey

Cheating often shows up as performance spikes, not total failure.

A cheat-resistant process:

Reuses core problem themes across rounds

Checks whether depth matches earlier confidence

Looks for continuity in thinking, not perfection

When the same capability must appear repeatedly, external assistance becomes harder to hide.
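The “performance spike” pattern can be sketched in a few lines of Python. This is a minimal illustration, assuming every round is scored on a shared 1 to 5 rubric; the `flag_spikes` helper and the 1.5-point threshold are hypothetical choices, not part of any specific tool. It flags any round whose score beats the median of the candidate’s other rounds by more than the threshold.

```python
from statistics import median


def flag_spikes(scores: dict[str, float], threshold: float = 1.5) -> list[str]:
    """Return rounds whose score exceeds the median of the *other* rounds
    by more than `threshold` points (hypothetical 1-5 rubric)."""
    flagged = []
    for round_name, score in scores.items():
        others = [s for name, s in scores.items() if name != round_name]
        if not others:
            continue  # a single round has nothing to be compared against
        if score - median(others) > threshold:
            flagged.append(round_name)
    return flagged


if __name__ == "__main__":
    # Illustrative scores only: one strong take-home among middling live rounds.
    rounds = {"screen": 2.5, "take_home": 4.8, "live_coding": 2.0, "system_design": 2.5}
    print(flag_spikes(rounds))
```

Here the take-home round stands out against the live rounds, which is the cue to have the candidate defend that submission live. A flag is a reason to probe further, not a verdict.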

Cheat resistance doesn’t come from assuming bad intent. It comes from designing interviews that reward genuine understanding and adaptive thinking, qualities that AI can’t yet fake consistently.


Embedding Integrity Checks Into the Interview Workflow

Most interview processes don’t fail because they lack checks; they fail because those checks sit outside the workflow. Integrity is treated as an afterthought, not a design principle.

1. Maintaining Identity Continuity Across Stages

One of the most common blind spots in modern hiring is stage-to-stage identity drift.

In many processes:

The person who completes the screening isn’t verified against the person in later rounds

Different interviewers assess different stages in isolation

No one confirms that performance belongs to the same individual throughout

When continuity isn’t enforced, proxy participation and substitution slip through unnoticed.
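As a minimal sketch of what enforcing continuity could look like, the Python below assumes each stage logs a reference produced by whatever identity check the team already runs. The `verified_id` values and the `continuity_gaps` helper are hypothetical, not part of any particular product. The function lists every stage whose reference is missing or differs from the first verified stage.

```python
from typing import Optional


def continuity_gaps(stages: list[tuple[str, Optional[str]]]) -> list[str]:
    """Return stage names whose verified identity is missing or differs
    from the first verified stage.

    Each entry is (stage_name, verified_id), where verified_id is a
    hypothetical reference from the team's own identity check, or None
    if no check ran at that stage.
    """
    # The first stage that was actually verified becomes the baseline.
    baseline = next((vid for _, vid in stages if vid is not None), None)
    gaps = []
    for name, vid in stages:
        # An unverified stage counts as a gap, as does a mismatched identity.
        if vid is None or vid != baseline:
            gaps.append(name)
    return gaps


if __name__ == "__main__":
    pipeline = [("screen", "id-123"), ("technical", None), ("final", "id-456")]
    print(continuity_gaps(pipeline))
```

Treating a missing check as a gap is a deliberate choice: a stage nobody verified is exactly where substitution slips through.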

2. Watching the Right Integrity Signals

Cheating rarely looks chaotic. It looks too smooth.

Effective integrity checks focus on behavioral and response-level signals, not suspicion:

Response latency: Perfect answers that arrive with unnatural timing, especially on complex questions, can indicate external assistance.

Scripted confidence: Candidates sound rehearsed but struggle when interrupted, redirected, or asked to justify decisions.

Over-polished answers: Language is precise, structured, and generic, yet lacks personal nuance or experiential detail.

These signals are subtle, contextual, and easy to miss without structured observation.
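One way to give “unnatural timing” some structured observation is sketched below in Python. It assumes someone records, for each question, a difficulty rating and the pause before the candidate starts answering; the `Response` type, the `uniform_timing_flag` helper, and the 0.1 cutoff are hypothetical. The heuristic flags pauses that barely vary even though question difficulty does, on the assumption that unaided reasoning usually takes longer on harder questions.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Response:
    difficulty: int        # hypothetical scale: 1 = easy, 3 = hard
    pause_seconds: float   # silence between end of question and start of answer


def uniform_timing_flag(responses: list[Response], max_cv: float = 0.1) -> bool:
    """Flag when pauses barely vary even though question difficulty does.

    Uses the coefficient of variation (population std dev / mean) of the
    pauses; the 0.1 cutoff is illustrative, not a validated threshold.
    """
    if len({r.difficulty for r in responses}) < 2:
        return False  # no difficulty spread, so uniform timing proves nothing
    pauses = [r.pause_seconds for r in responses]
    avg = mean(pauses)
    if avg == 0:
        return False  # avoid dividing by zero
    return pstdev(pauses) / avg < max_cv


if __name__ == "__main__":
    steady = [Response(1, 6.1), Response(3, 6.0), Response(2, 5.9), Response(3, 6.2)]
    varied = [Response(1, 2.0), Response(3, 9.5), Response(2, 5.0)]
    print(uniform_timing_flag(steady), uniform_timing_flag(varied))
```

A flag like this only tells the interviewer where to interrupt, redirect, or ask for justification; it is one contextual signal, not proof of assistance.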

3. Real-Time vs Post-Interview Review

Not all integrity checks need to happen live.

Real-time checks help interviewers adjust questioning when signals appear

Post-interview analysis allows patterns to emerge across rounds, roles, and candidates

The combination is critical. Real-time insight without follow-up lacks context; post-review without live signals misses nuance.

Integrity in modern interviews depends on continuity, signal interpretation, and pattern analysis across stages. When these elements are built into the workflow, interviews remain reliable even as formats, tools, and scale evolve.

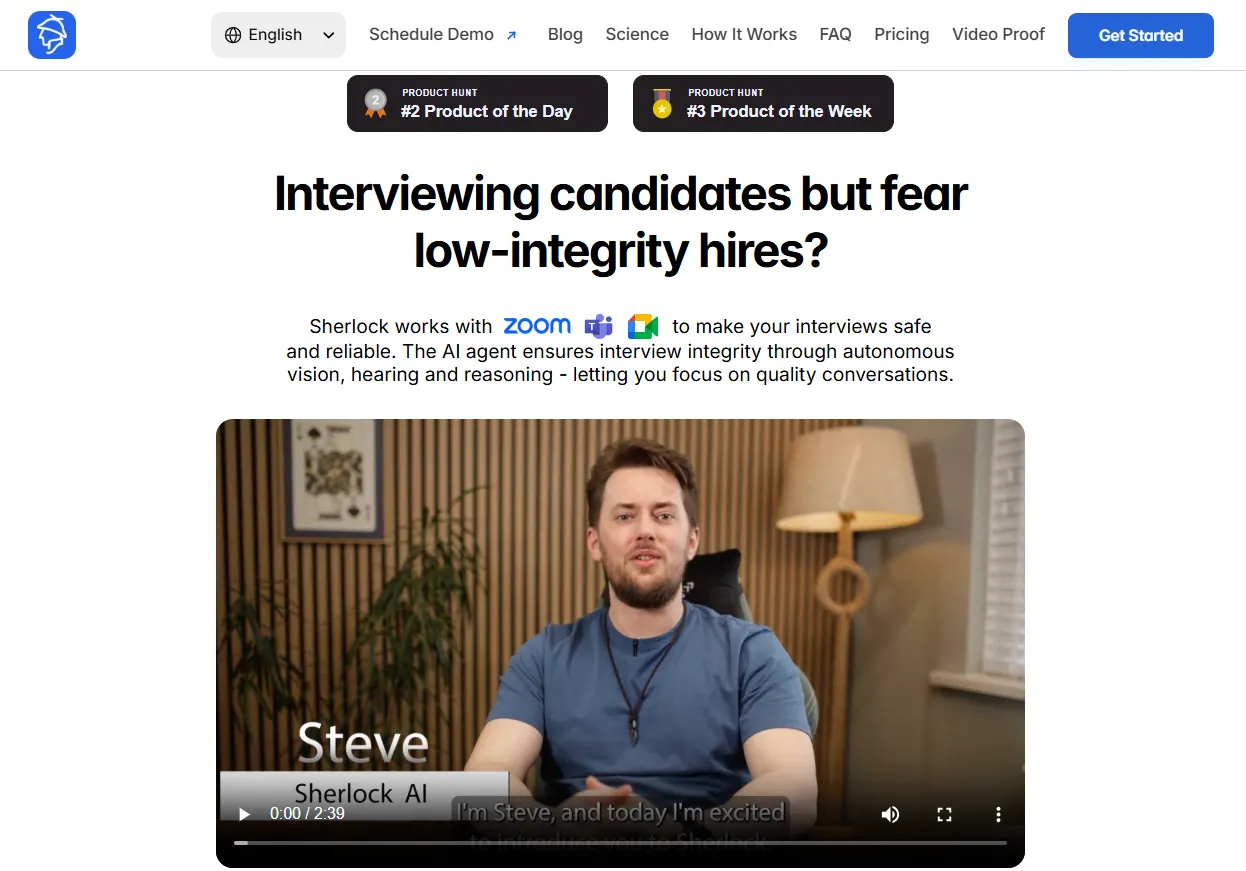

Sherlock AI: A Modern Solution for Interview Integrity

Sherlock AI is an Interview Proctoring & Integrity Platform designed to secure remote interviews by analyzing behavior, context, and interaction patterns.

Sherlock AI focuses on real-time detection and cross-session consistency so hiring teams can trust that what they see in an interview reflects authentic candidate performance, not external assistance.

Continuous Integrity Monitoring Across Stages

Sherlock AI integrates with interview workflows (e.g., calendar scheduling and meeting links) so it can monitor sessions consistently rather than as isolated events. This helps maintain identity continuity and behavior tracking from start to finish.

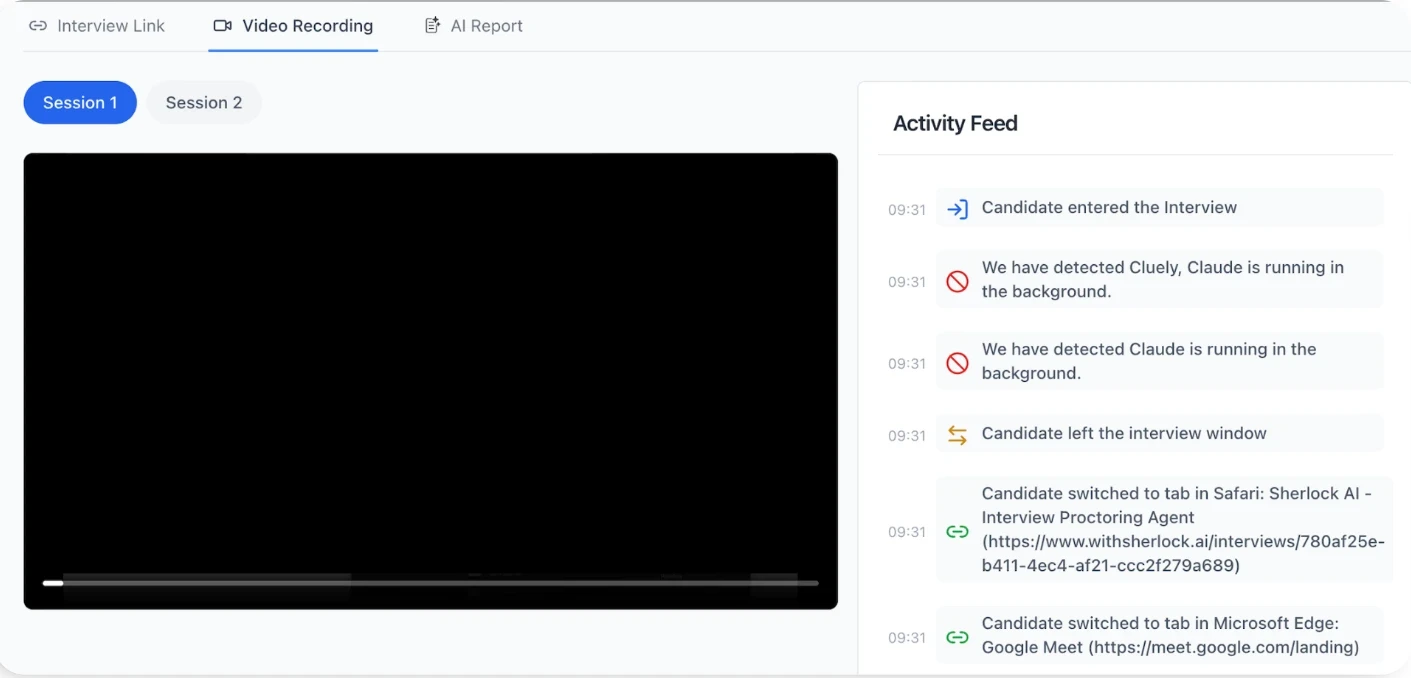

Behavioral and Contextual Signals

Instead of simple triggers, Sherlock AI uses pattern analysis to identify indicators linked to assistance or fraud:

Timing and response patterns that don’t align with natural reasoning

Consistency in language and delivery that suggests scripted support

Subtle behavior signals that surface when candidates rely on external aid

This approach reduces false positives and focuses on meaningful patterns over isolated behaviors.

Identity and Fraud Detection

Sherlock AI evaluates a range of signals relevant to interview integrity:

Live analysis of video and audio environments

Detection of identity masking, deepfake indicators, or proxy behavior

Consistency checks on presence and engagement throughout an interview

These systems help teams distinguish genuine performance from assisted participation without intrusive monitoring.

Dual-Mode Insight: Real-Time Alerts + Post-Interview Intelligence

Sherlock AI provides:

Real-time alerts to support interviewers as conversations unfold

Post-interview reports and insights that show patterns across interviews

This layered visibility helps hiring teams both probe suspicious signals during an interview and compare outcomes across stages and candidates.

Scales With Remote and High-Volume Hiring

Sherlock AI is built to work in large, distributed hiring environments where manual review can’t scale. It provides interview intelligence, from fraud detection to structured notes, while integrating into existing workflows with minimal disruption.

Sherlock AI helps teams find the signals that matter, such as response consistency, behavioral patterns, and identity continuity, so interviews measure capability rather than assisted performance or polished delivery that hides gaps. That turns interview integrity from a perceived risk into a measurable part of the hiring process.

Conclusion

Interview cheating didn’t emerge because candidates suddenly became dishonest. It emerged because interviews were built for a different era, one where answers reliably reflected individual effort and reasoning. That assumption no longer holds.

Modern interviews now operate in environments where AI assistance, proxy participation, and identity substitution are easy to introduce and hard to spot. In this context, stronger questions, stricter rules, or interviewer intuition alone can’t restore trust.

Cheat-resistant interviews come from:

Designing interactions that require live reasoning and consistency

Embedding integrity checks across the interview workflow

Evaluating patterns over time, not isolated responses

This is where purpose-built systems matter. Sherlock AI brings integrity into the interview itself so hiring teams can confidently assess real capability, even at scale.

As interviews continue to evolve, trust can’t be assumed. It has to be designed, measured, and maintained.